In this section you’ll find the latest RealLoad documentation.

If you need help or would like to provide feedback on product documentation, please email us at support@realload.com.

RealLoad is an Enterprise Tool to perform next generation Load Testing, Regression Testing, and Synthetic Monitoring.

Due to the universal product architecture, a Test of any Type supported by RealLoad can be executed as:

The following Types of Tests are supported:

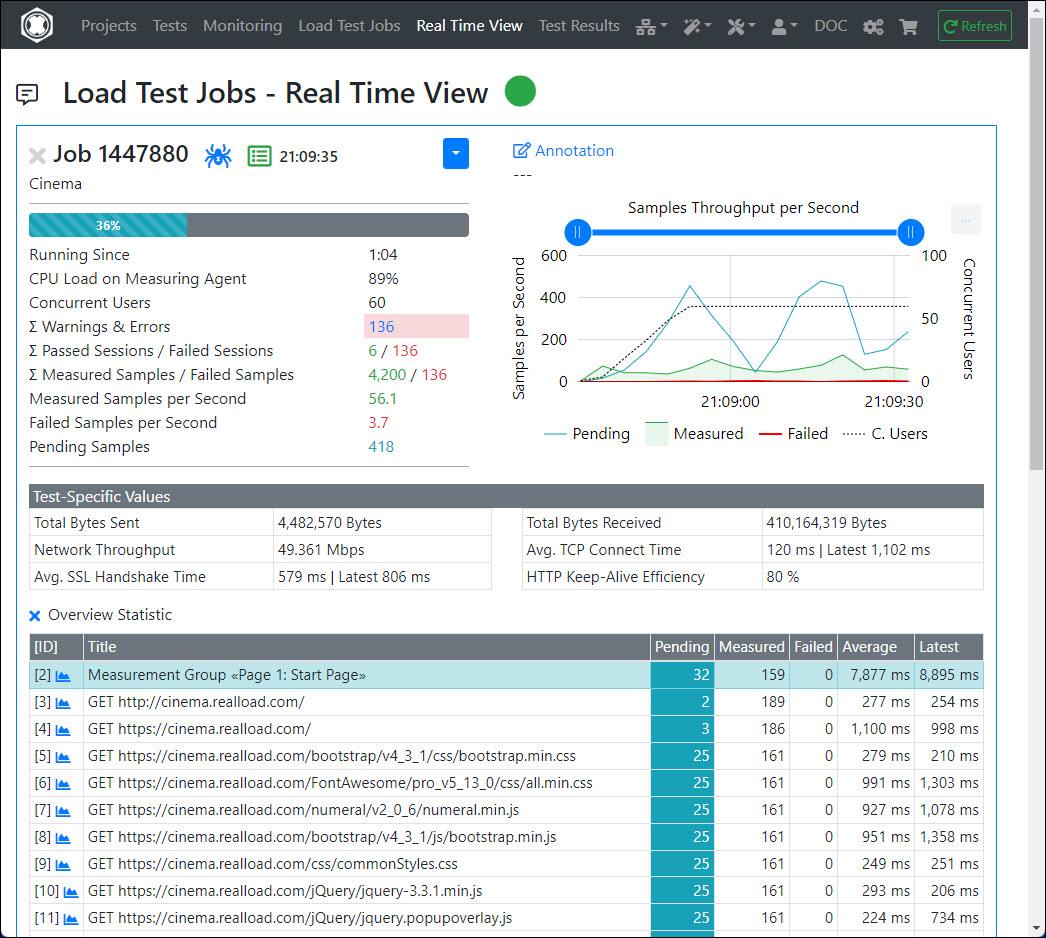

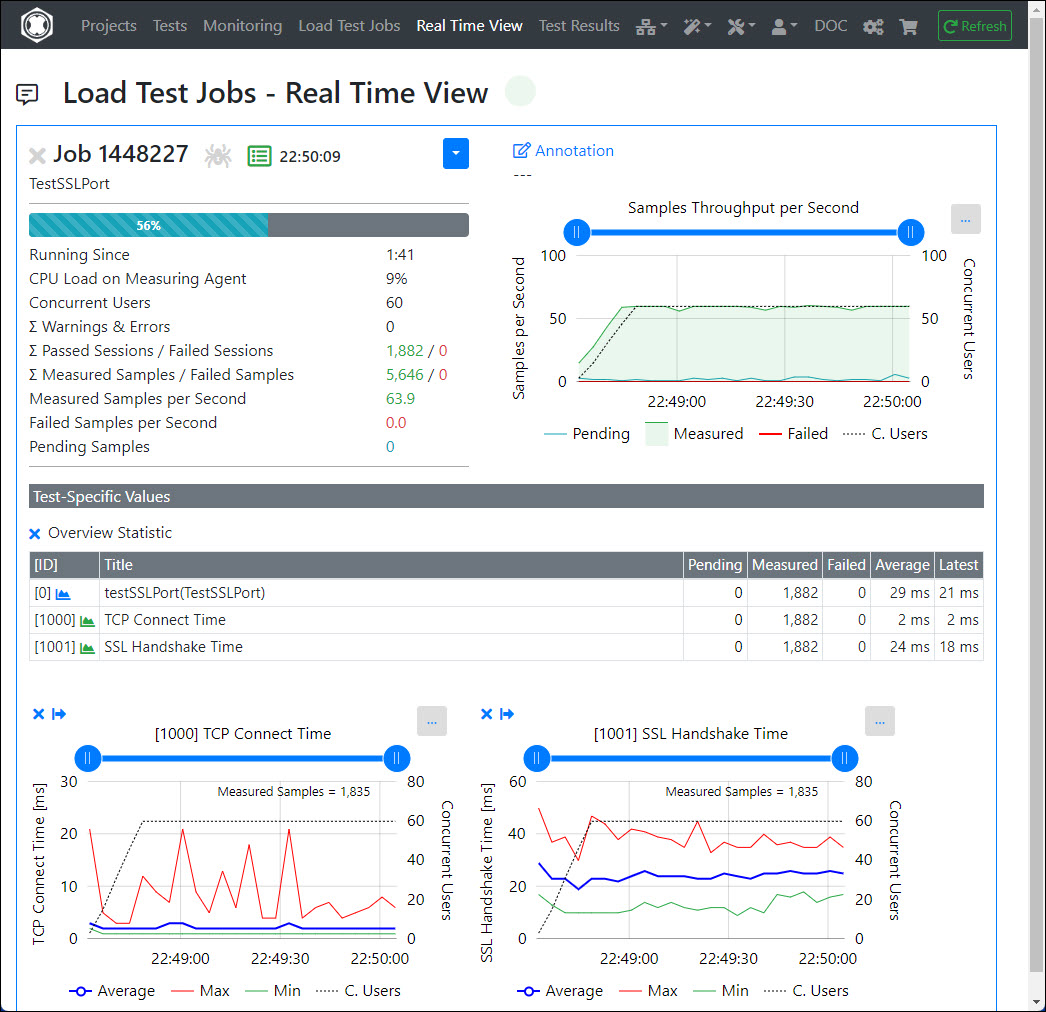

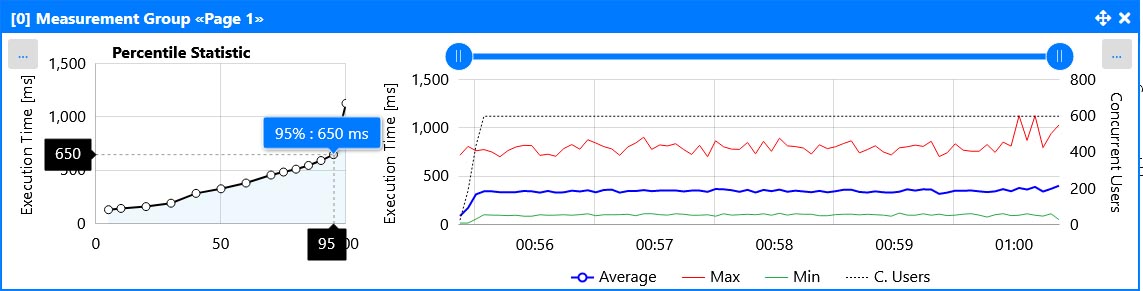

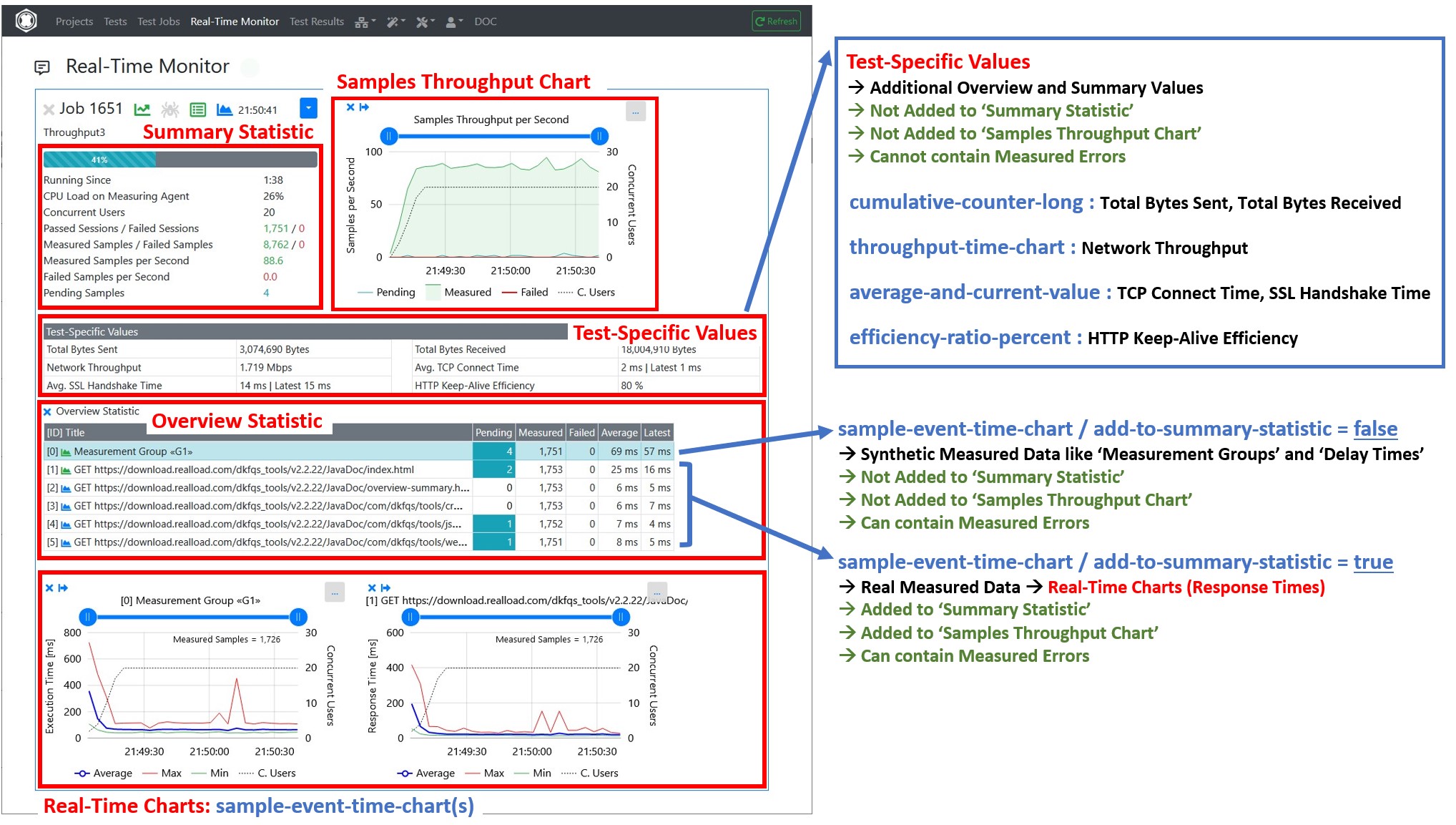

Regardless of what type of test you are running, all measured data are displayed directly in real time in the form of statistics and charts.

All work steps can be carried out within the web interface of the RealLoad Portal which:

In addition, using the RealLoad Portal offers many other advantages, for example:

Complete Overview of All Product Features

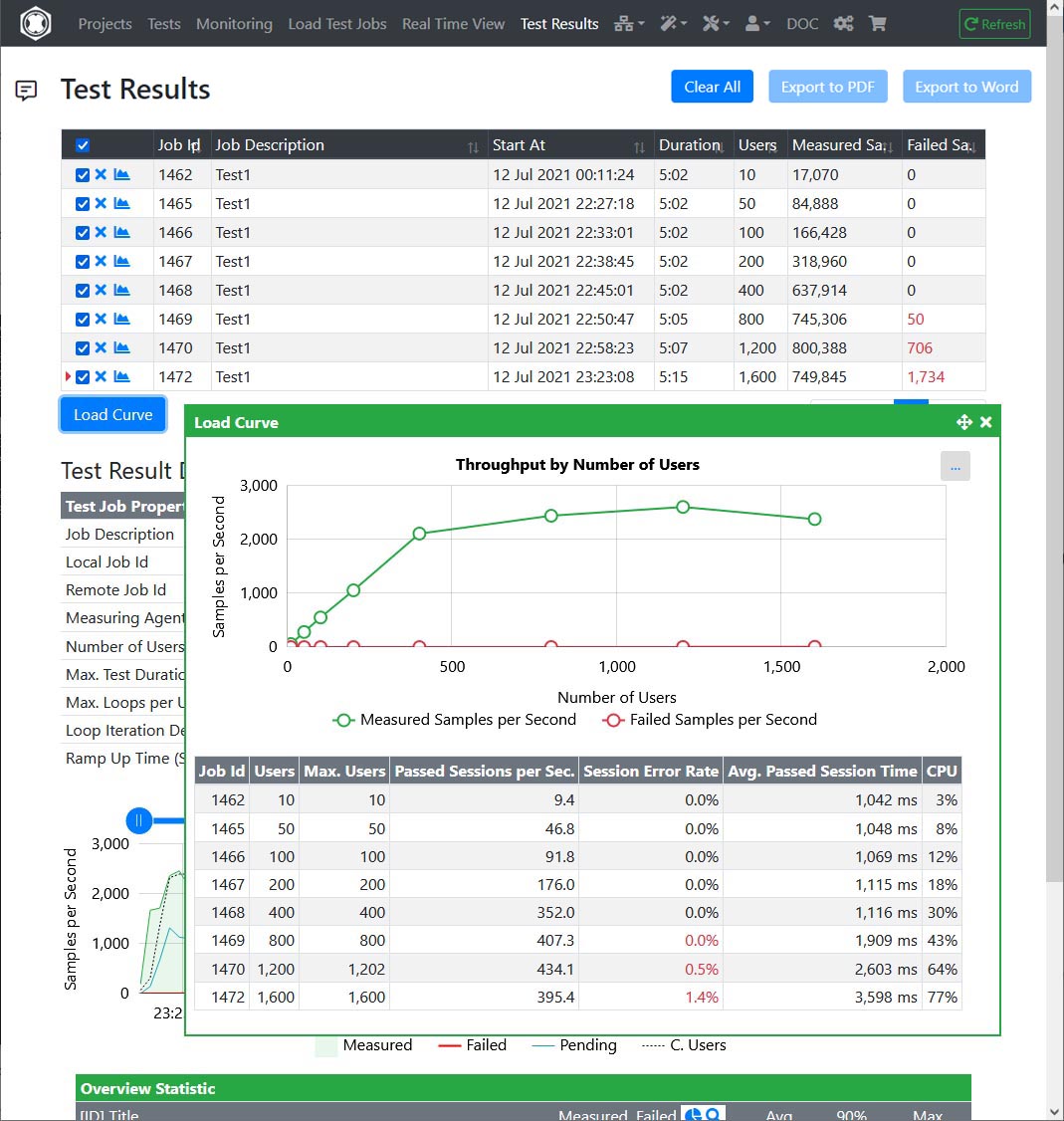

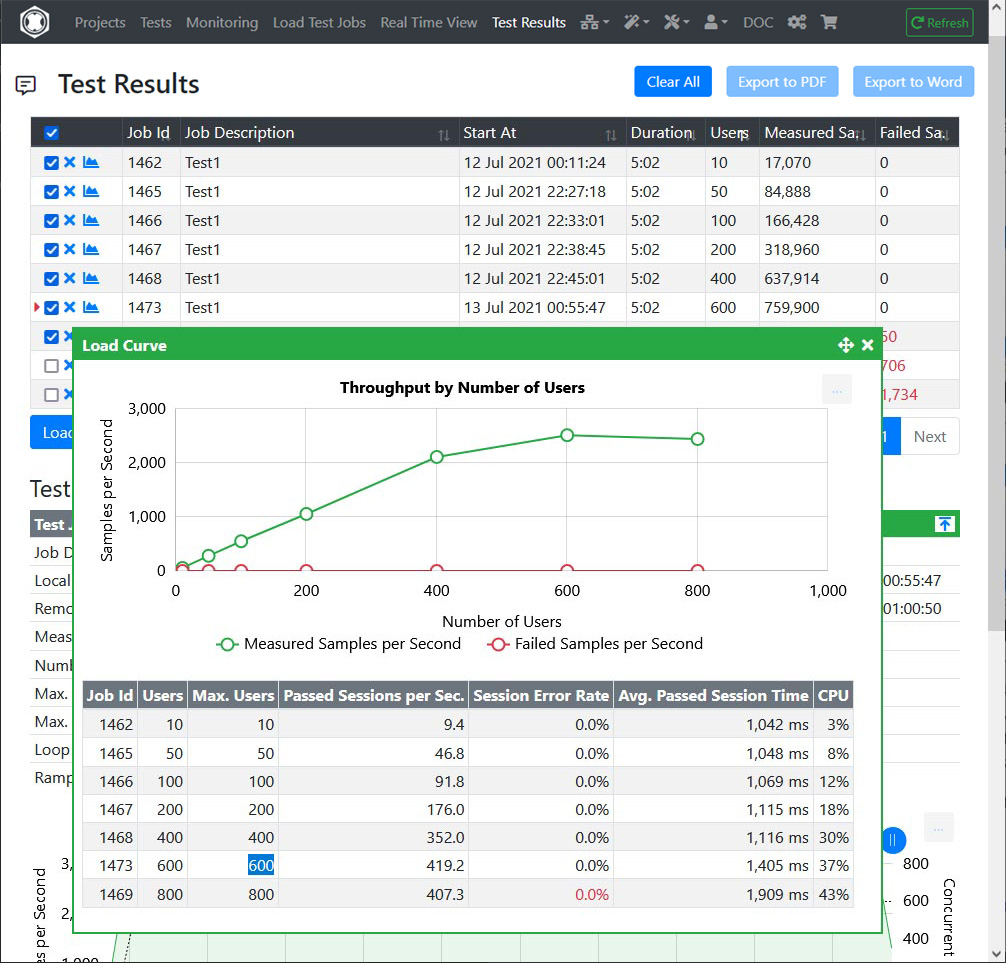

Many larger companies whose web services have hundreds or thousands of users logged in at the same time have started combining synthetic monitoring and regression testing with load testing.

For example, if an average of 2000 real users are logged in at the same time, it doesn’t matter if a monitoring job with 100 virtual users is running in parallel.

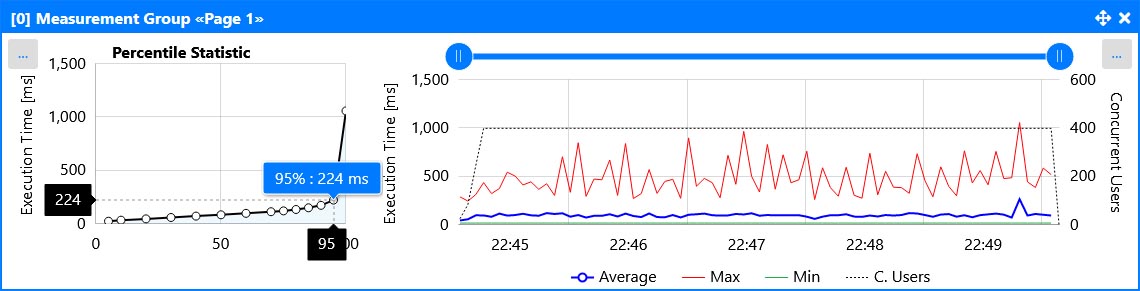

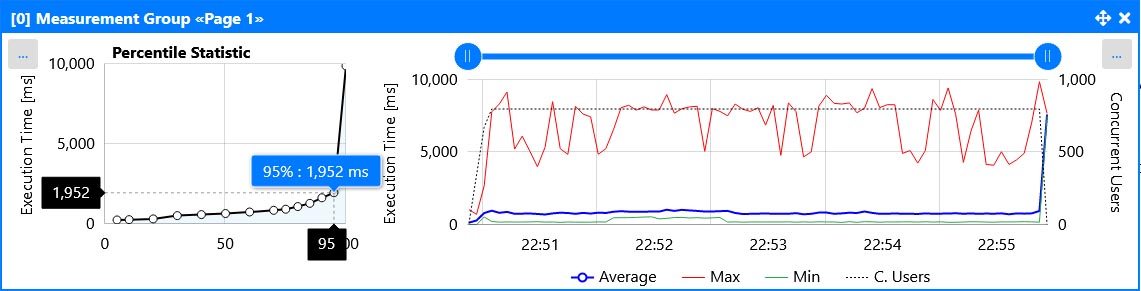

However, the data measured in this way are much more meaningful than a test that only runs with a single user, as rarely occurring errors can be identified better and faster. In addition, percentile statistics are also available for each individual test run. This allows performance outliers to be better identified and evaluated.
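To illustrate why percentile statistics expose outliers that an average tends to hide, here is a minimal Python sketch. The response times are invented sample values, and the `percentile` helper (nearest-rank method) is a hypothetical illustration, not part of the RealLoad product or its way of computing statistics.

```python
from statistics import mean


def percentile(samples: list[float], p: int) -> float:
    """Return the p-th percentile (1..100) using the nearest-rank method."""
    if not samples:
        raise ValueError("samples must not be empty")
    if not 1 <= p <= 100:
        raise ValueError("p must be between 1 and 100")
    ordered = sorted(samples)
    # Nearest rank = ceil(p * n / 100), computed with integer arithmetic.
    rank = -(-p * len(ordered) // 100)
    return ordered[rank - 1]


# Hypothetical response times in milliseconds: 98 fast calls and 2 slow outliers.
response_times_ms = [100.0] * 98 + [2000.0] * 2

if __name__ == "__main__":
    print("mean:", mean(response_times_ms))
    print("p50: ", percentile(response_times_ms, 50))
    print("p99: ", percentile(response_times_ms, 99))
```

In this made-up data set the mean and the median stay close to the typical value, while the 99th percentile lands on the slow outliers. A run with a single user would produce far fewer samples and could easily miss such rare events.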

Another reason to run monitoring jobs with many virtual users is when several servers are combined behind a load balancer. If one of these servers becomes unstable or slow, a monitoring job that only runs with one user will often not detect this - or detect it much too late.
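The load balancer argument can be made concrete with a little arithmetic. The sketch below assumes, purely for illustration, that the load balancer assigns each request independently and uniformly at random to one of the servers and that exactly one server is faulty; real load balancers (round robin, sticky sessions, weighted routing) behave differently. The function name `miss_probability` is hypothetical.

```python
def miss_probability(servers: int, requests: int) -> float:
    """Probability that none of the requests reaches the single faulty server.

    Assumes each request is routed independently and uniformly at random.
    """
    if servers < 1:
        raise ValueError("servers must be at least 1")
    if requests < 0:
        raise ValueError("requests must not be negative")
    return (1 - 1 / servers) ** requests


if __name__ == "__main__":
    for n in (1, 10, 100):
        print(f"{n:>3} request(s), 4 servers: miss probability = {miss_probability(4, n):.3g}")
```

Under these assumptions, a single request against four servers misses the faulty one with probability 0.75, whereas a monitoring run with 100 virtual users is practically certain to hit it at least once.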

‘RealLoad Tests’ are universal, abstract tests which can be created from any type of test. All types of tests can be executed as:

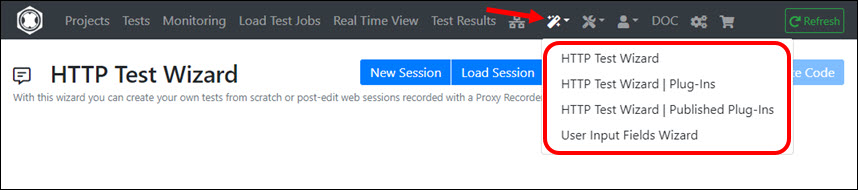

Creating a ‘RealLoad Test’ is usually easy and supported by wizards and powerful dialogs.

The following Types of Tests are supported:

All core components are independent OS processes that can be executed on Linux and Windows:

¹ = Available as public AWS/EC2 image

Using locally installed RealLoad applications is optional, but can be quite useful:

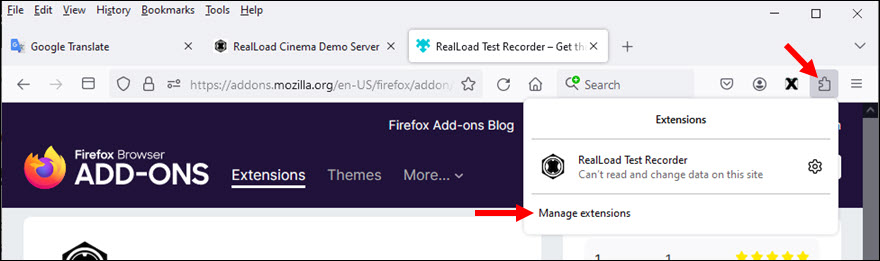

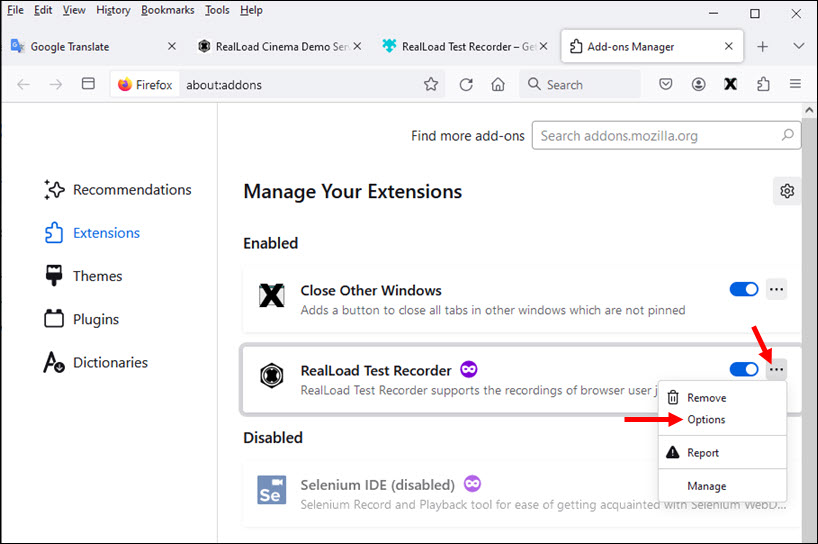

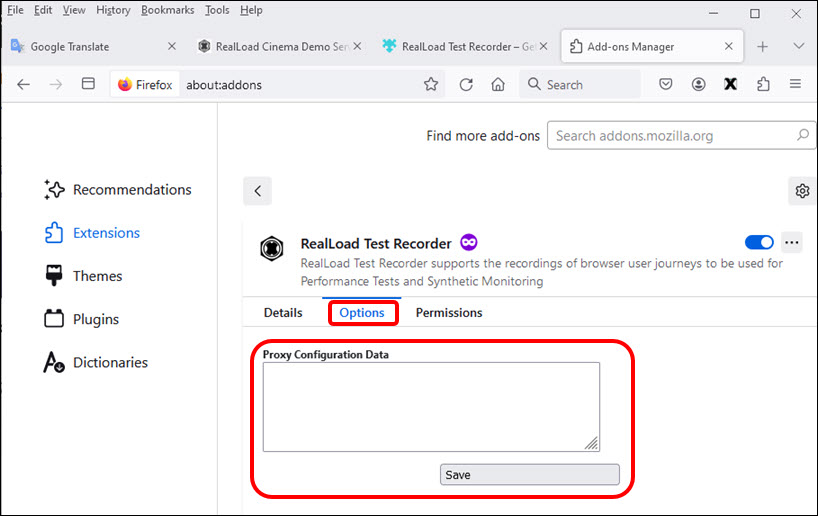

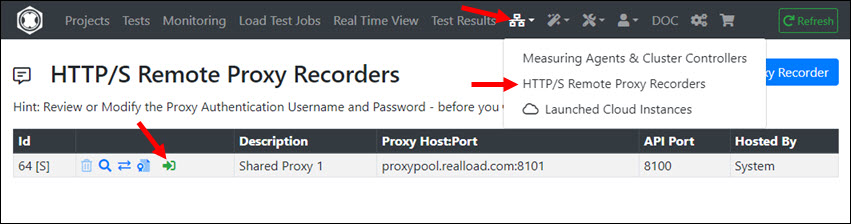

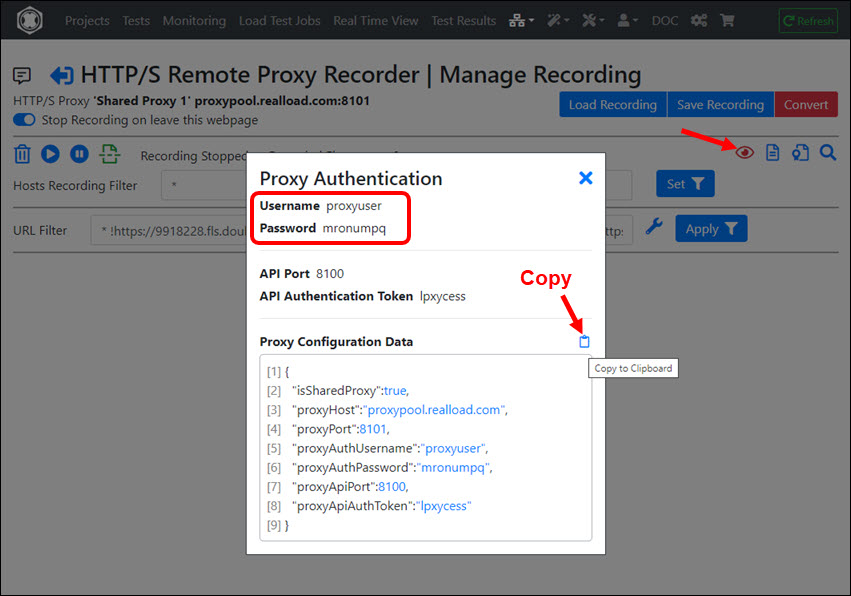

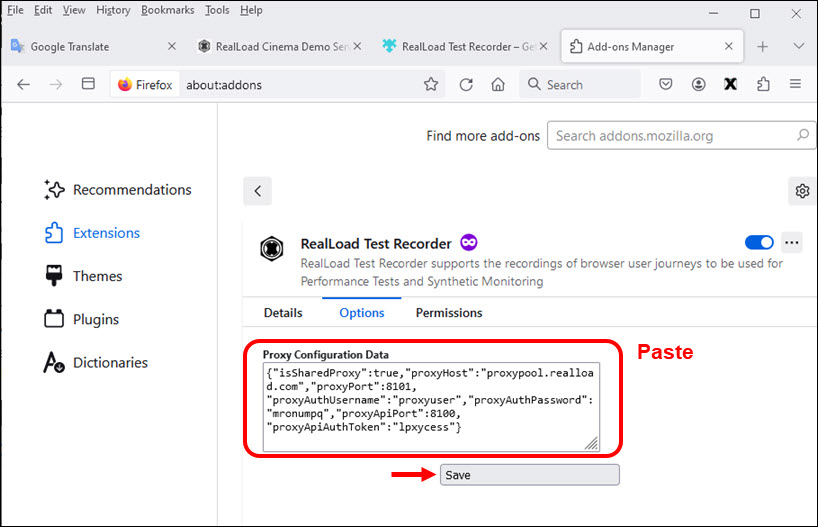

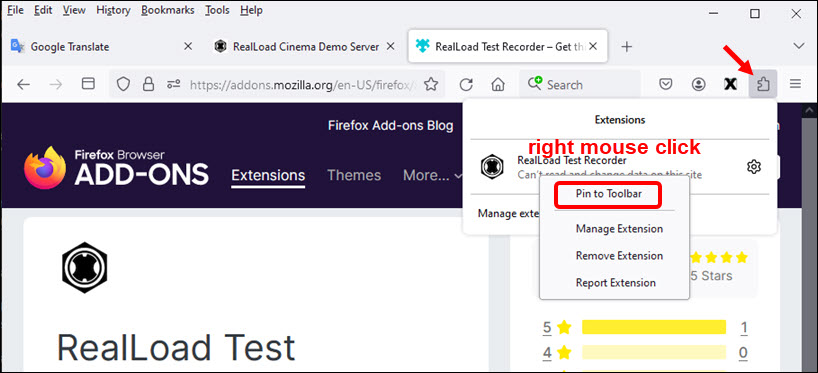

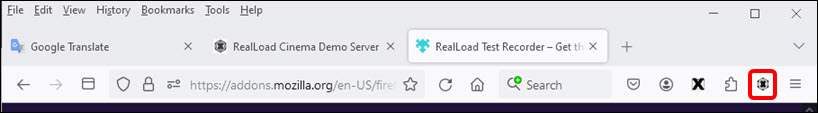

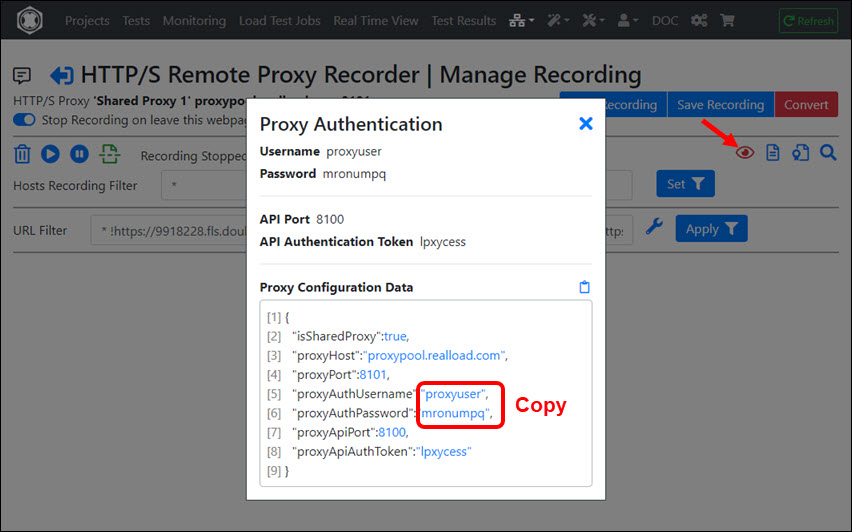

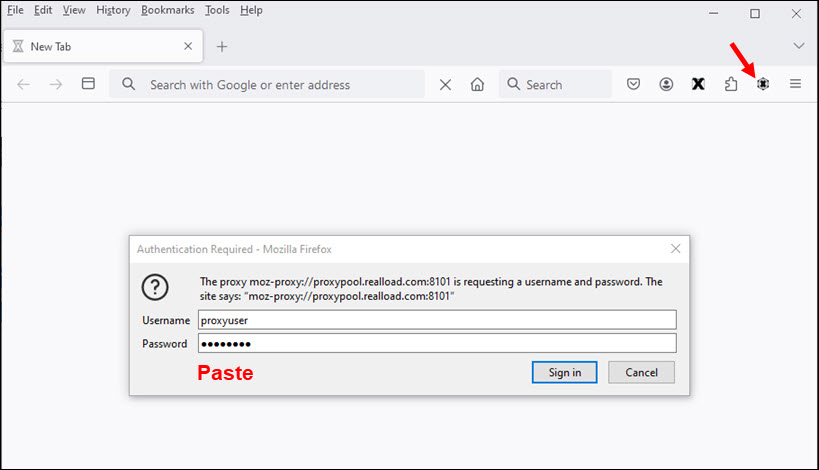

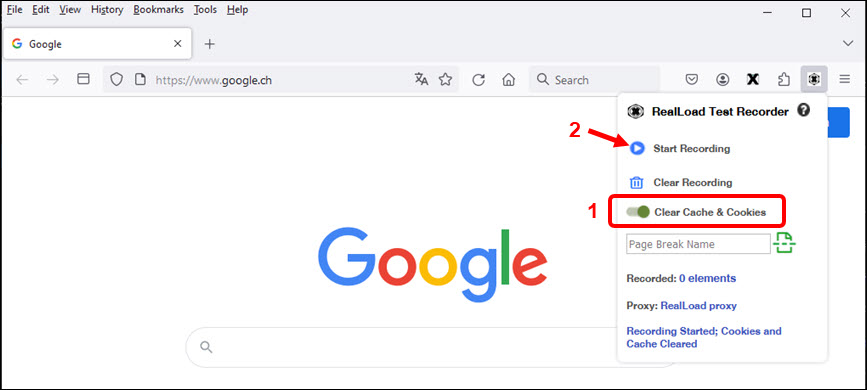

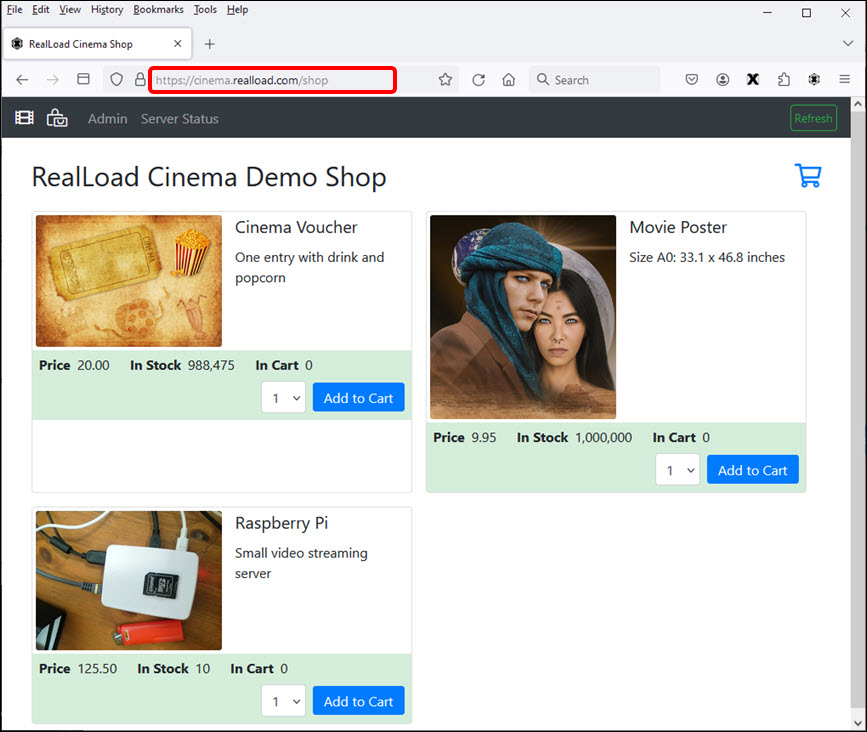

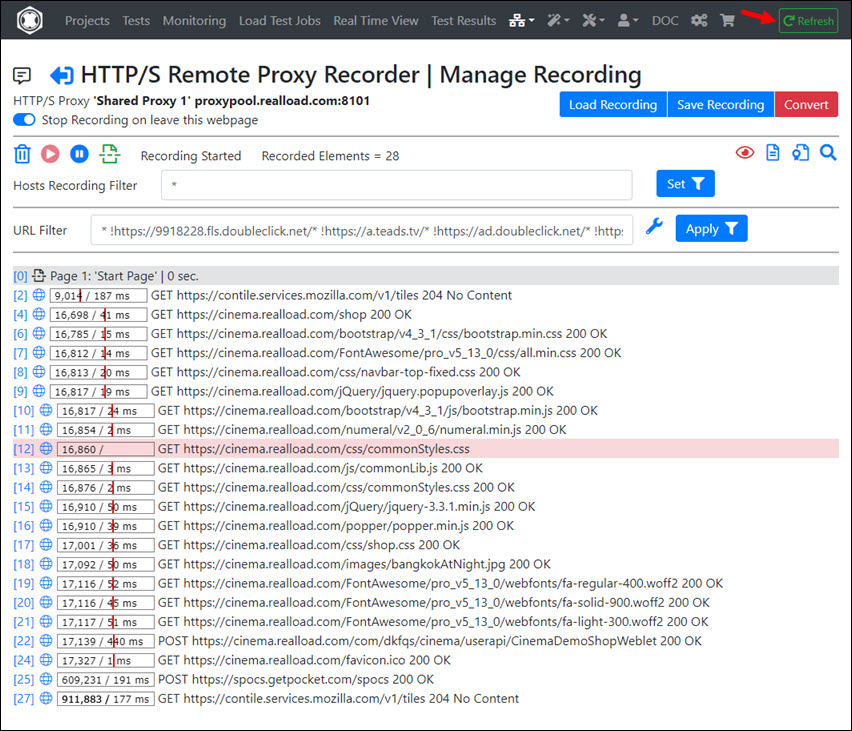

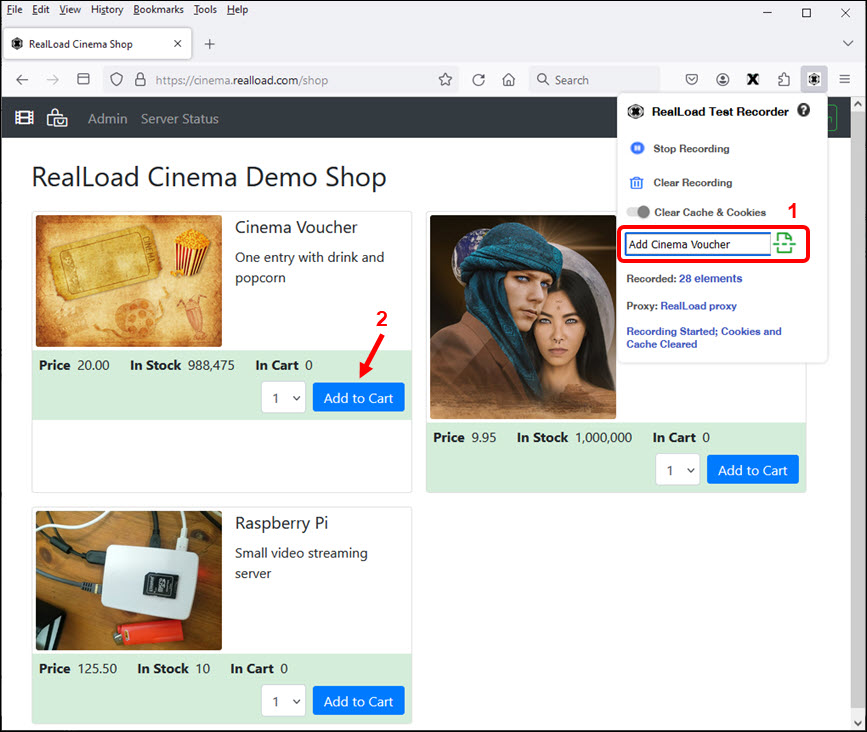

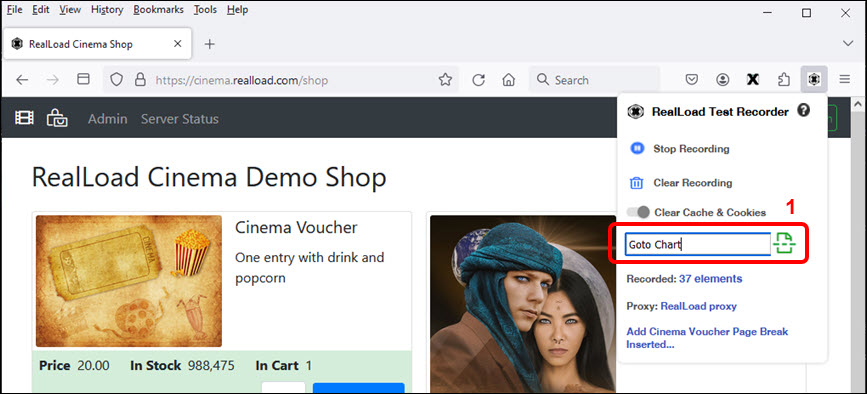

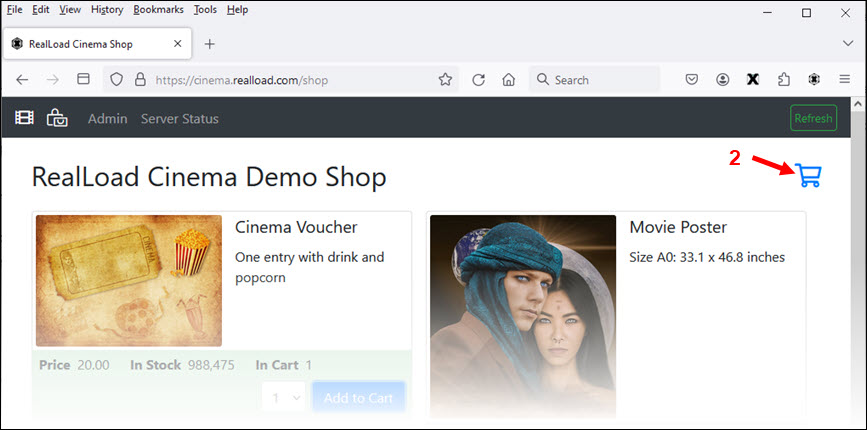

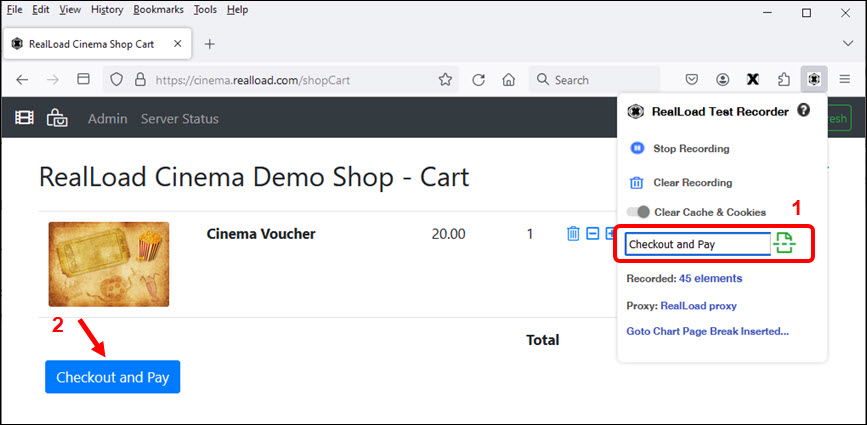

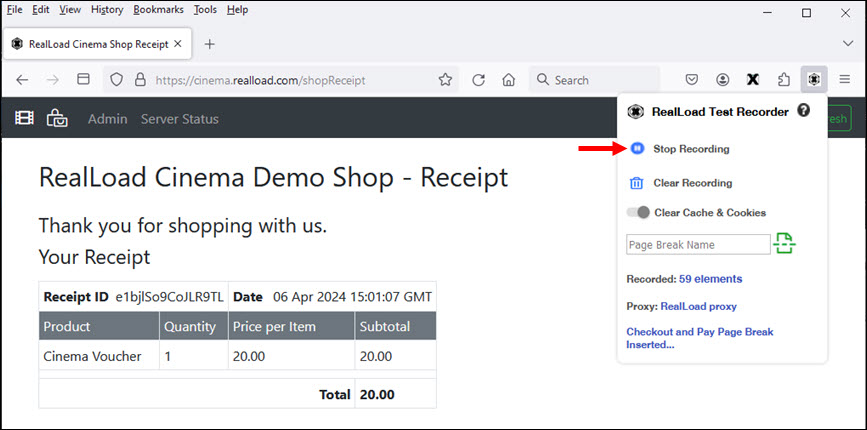

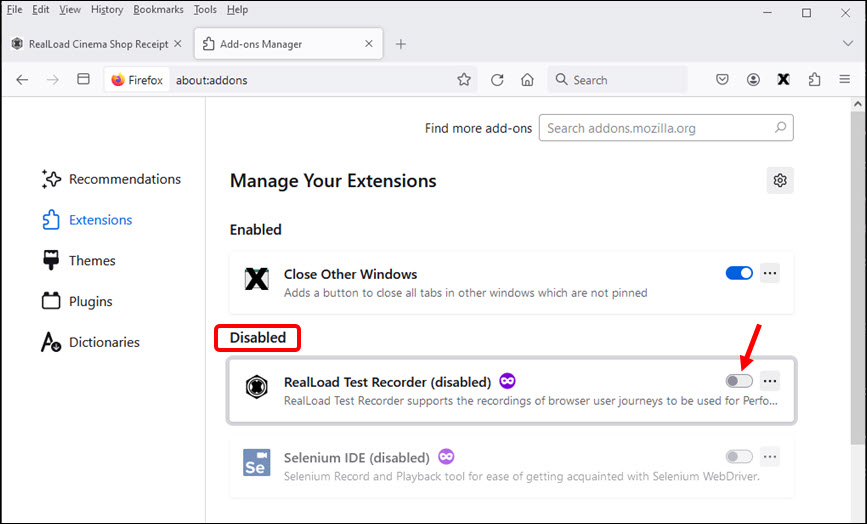

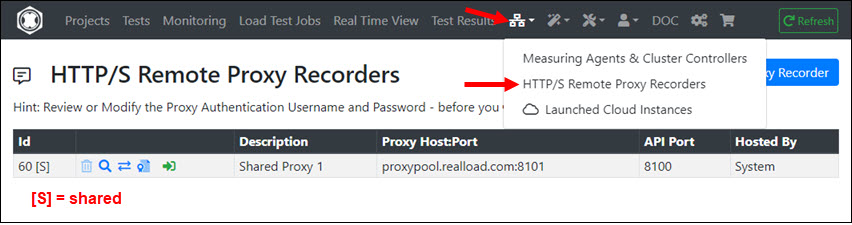

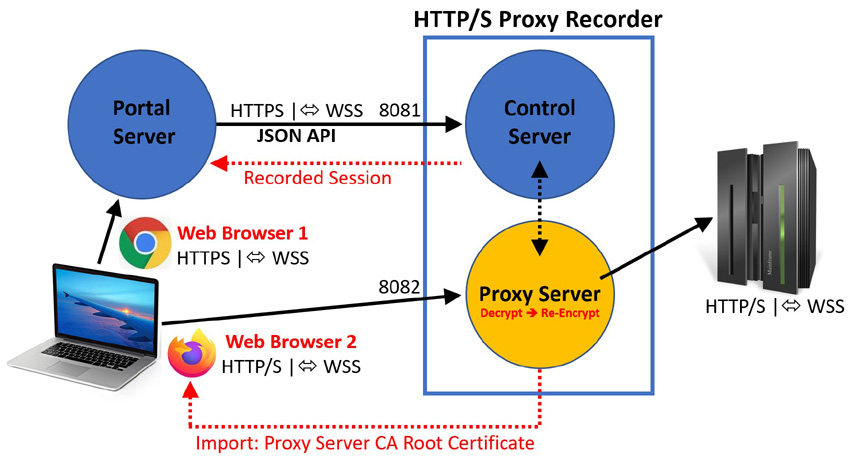

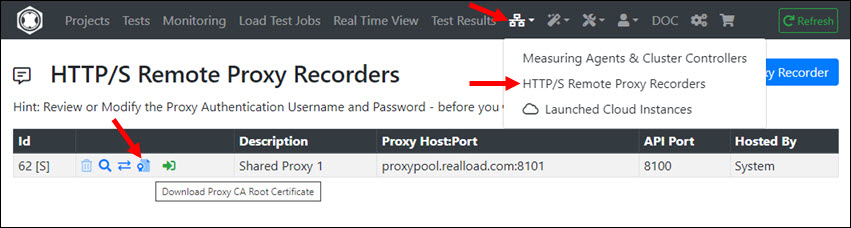

The use of RealLoad browser extensions is optional, but allows you to conveniently control the recording of a web surfing session in combination with a Remote HTTP/S Proxy Recorder.

This section of the documentation will walk you through the first steps to get started with the RealLoad product.

Follow the next sections of the document and you should be able to run your first basic test script using the SaaS Evaluation scenario within 20 minutes.

Let us know if you encounter any issues while getting started, as that will help us update this documentation to make it as clear and user friendly as possible. Please email us at support@realload.com.

First of all you’ll have to decide which RealLoad deployment type best suits your needs. These guidelines should assist you in making an informed decision.

There are a few components that make up the RealLoad solution. Most components are available under a SaaS model which doesn’t require any infrastructure commitment from your side.

If required by security or other constraints, some or all components can be deployed on your own infrastructure. Please reach out to us to discuss requirements if you’re planning to go down this road.

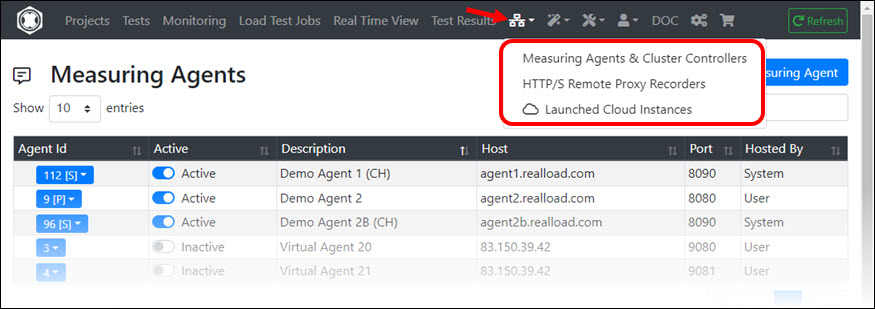

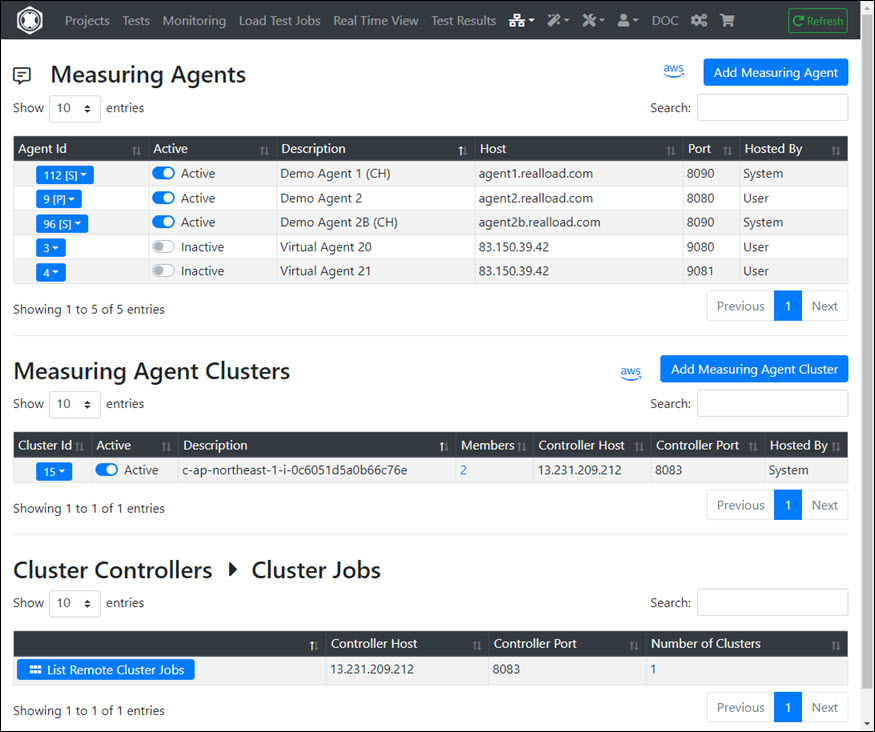

The key components that make up RealLoad’s solution are:

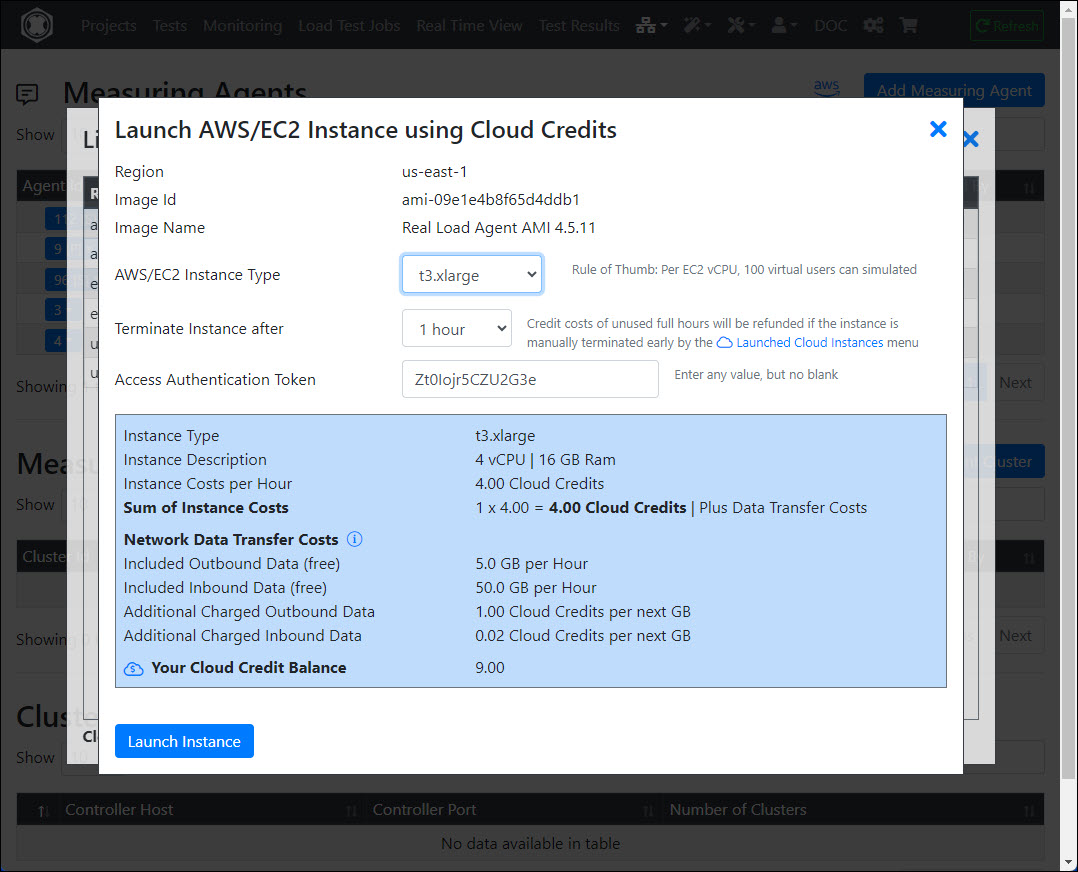

There are 2 options for you to run AWS cloud based Measuring Agents:

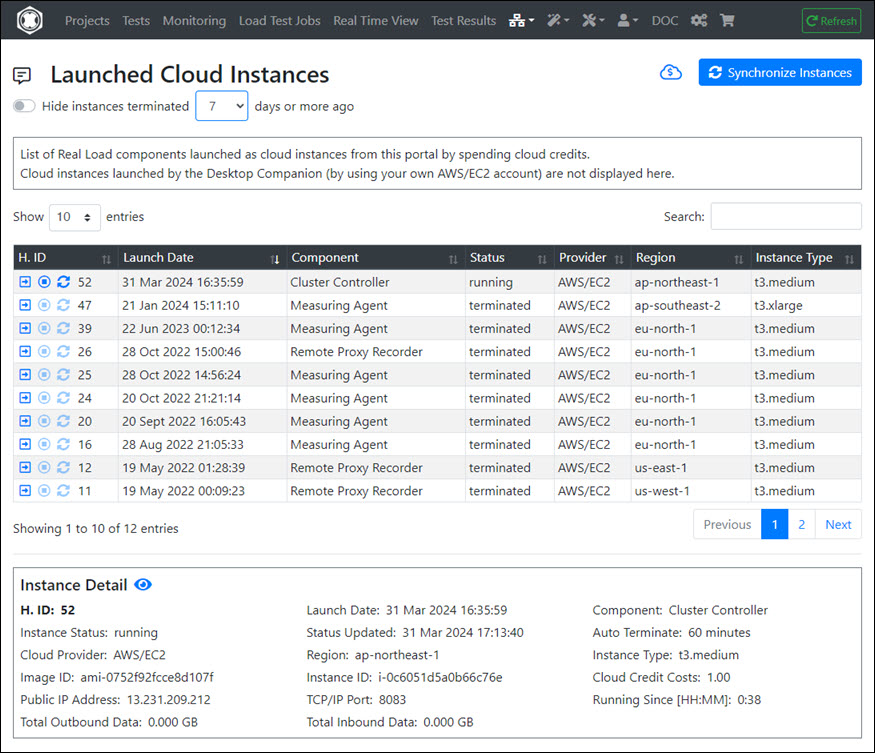

- Using our Cloud Credits: The relevant Virtual Machines are spun up from our portal under our own AWS account and you pay a usage fee using Cloud Credits you’ve previously purchased from us.

- By starting pre-configured AWS AMIs under your own AWS account. You’ll be charged directly for AWS related costs.

The RealLoad portal gives you the ability to launch load generators (Measuring Agents) in the AWS cloud with the click of a button and then execute a load test.

Key features and requirements are:

- You’re not required to have an AWS account, as the Measuring Agent is run under RealLoad’s AWS account.

- You pay for the AWS and RealLoad licensing costs using previously purchased Cloud Credits.

- The application to be load tested needs to be reachable from the Measuring Agent’s public IP address, assigned by AWS at launch.

Using Cloud Credits is not an option if the servers to be performance tested are not exposed to the internet. In this scenario, the Measuring Agent will need to be deployed behind perimeter firewalls as follows:

Key features and requirements are:

- The Measuring Agent needs to be reachable from the internet based Portal Server. You’ll need to configure firewall and NAT rules on your perimeter firewall to allow communication.

- You’ll need to update the Measuring Agent software from time to time, to stay compatible with updates on the Portal Server.

- You’ll need to purchase a Load Testing license to suit your load requirements.

Typically, Synthetic Monitoring is used to monitor services that are exposed to the internet. RealLoad uses Measuring Agents to implement the monitoring functionality.

We recommend executing Synthetic Monitoring tests from at least 2 Measuring Agents, to cater for transitory local disruptions (like network issues or agent software updates) which might trigger false positive alerts.

There are two types of agents you can use for monitoring purposes:

Key features and requirements are:

- The application or service to be monitored needs to be reachable from the internet.

- You’ll need to subscribe to a Synthetic Monitoring plan. Reach out to us for pricing.

Should you have the need to monitor services within your perimeter, this can be arranged by deploying Measuring Agents within your networks.

Key features and requirements are:

- You’ll need to deploy two Measuring Agents behind the firewall perimeter. For AWS deployments you can use our existing AMIs; for other infrastructure, install the Measuring Agent on a supported Linux distribution.

- You’ll need to update the Measuring Agent software from time to time, to stay compatible with updates on the Portal Server.

- The Measuring Agents need to be reachable from the internet based Portal Server. You’ll need to configure firewall and NAT rules on your perimeter firewall to allow communication.

- You’ll need to subscribe to a Synthetic Monitoring plan. Reach out to us for pricing.

If you already have an account, you can log in at https://portal.realload.com

To log in to the portal you’ll first have to set up an account. Go to the portal URL and click on the Sign Up button, or go to this URL: https://portal.realload.com/SignUp

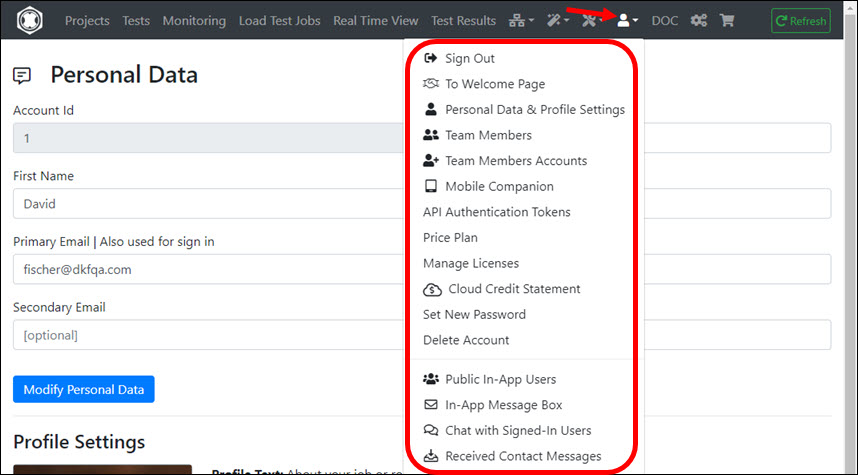

You’ll need to provide:

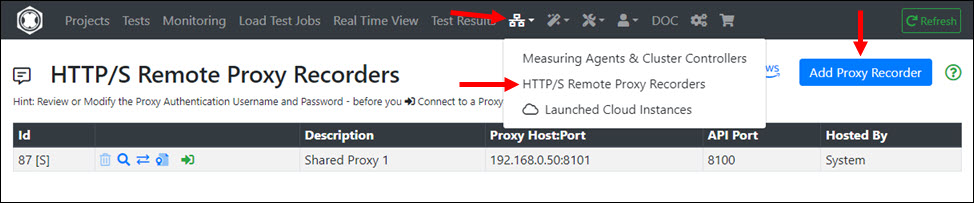

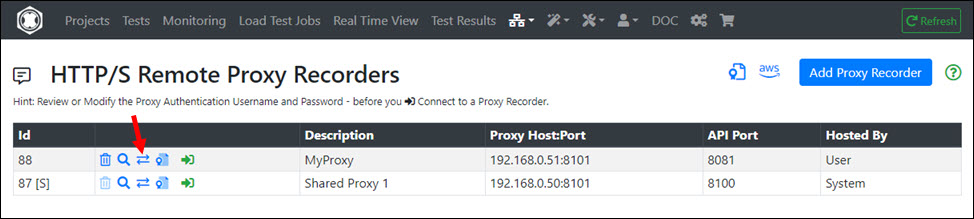

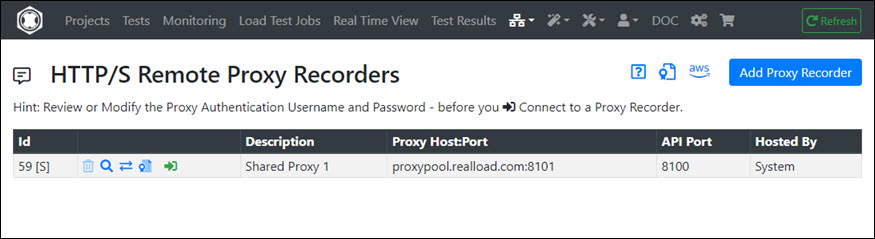

After sign up, you have access to 2 (shared) Measuring Agents and to a remote HTTP/S Proxy Recorder.

A free account allows you to execute both Synthetic Monitoring Jobs & Load Test Jobs with the following limitations:

¹ = Does not apply for Load Test Jobs executed on Measuring Agents launched by Cloud Credits: in this case there are no such restrictions.

In addition, you will receive 20 free Cloud Credits at sign up, which you can use to launch additional (private) AWS Measuring Agents to perform Load Tests with hundreds of virtual users.

You can add a RealLoad license to your account at any time, even if your account has expired (provided it expired no more than 6 months ago), by purchasing licenses at https://shop.realload.com.

To have your account deleted, please send an email to support@realload.com. We’ll then reach out to you to confirm deletion.

Your account will be immediately disabled and all your data will be removed within 90 days, including backups.

Provide your details, including email and mobile number. All data you enter in this form are only required to verify your identity and will not be shared with any third parties.

Validate your email address.

Validate your mobile number.

Configure your nickname and password.

Once you have signed up, you will be automatically logged in and the welcome page will be displayed. Three examples are shown which are also available online:

This example shows how a simple HTTP/S Test can be defined as a RealLoad ‘Test’ and executed as both a Load Test Job and a Monitoring Job.

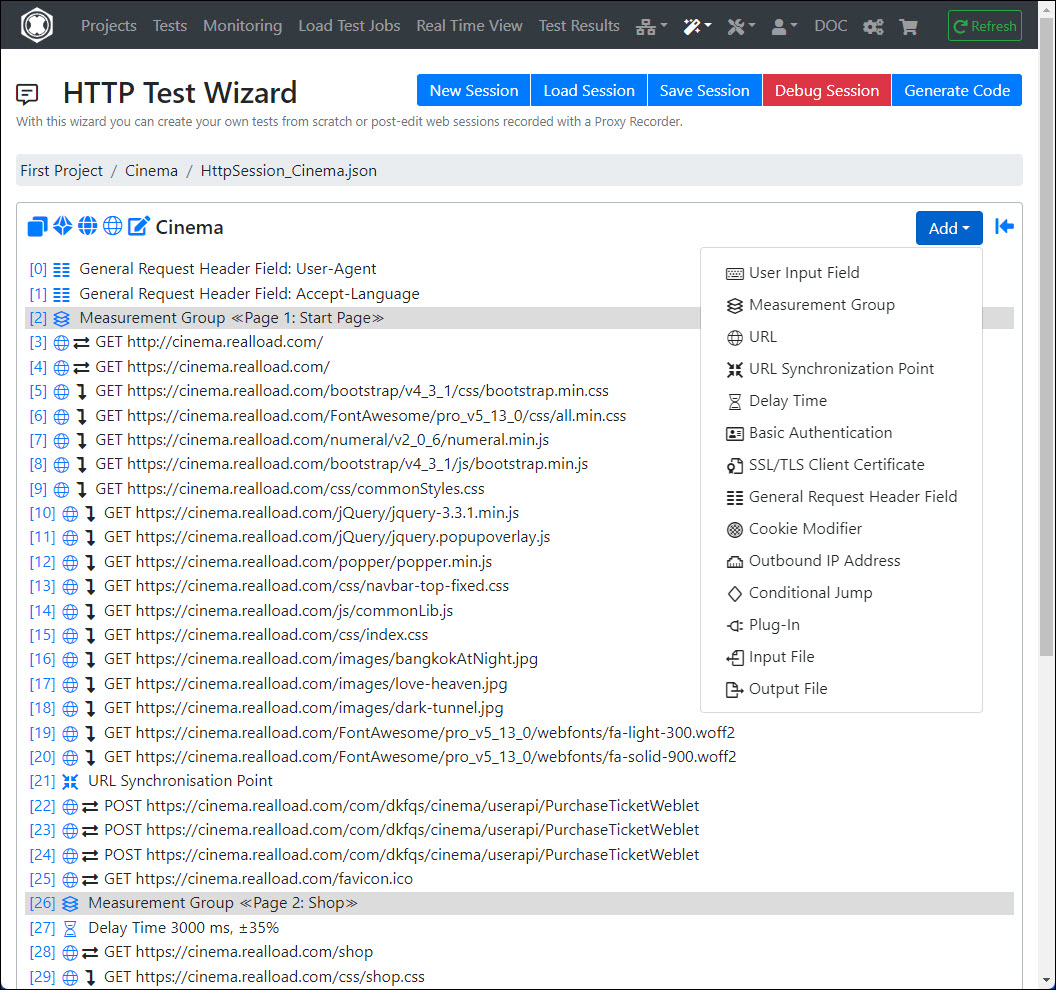

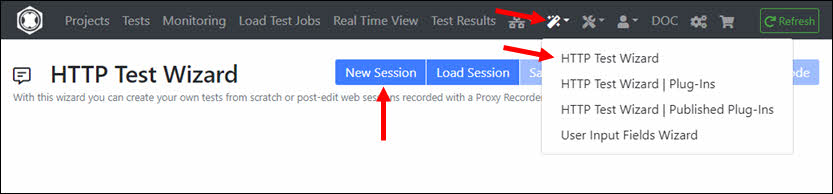

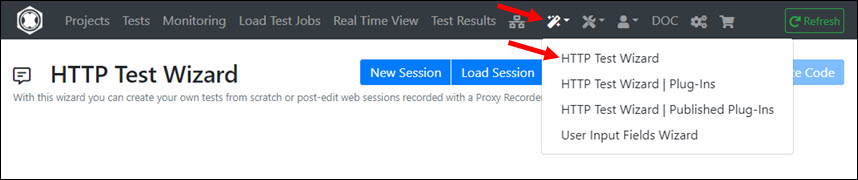

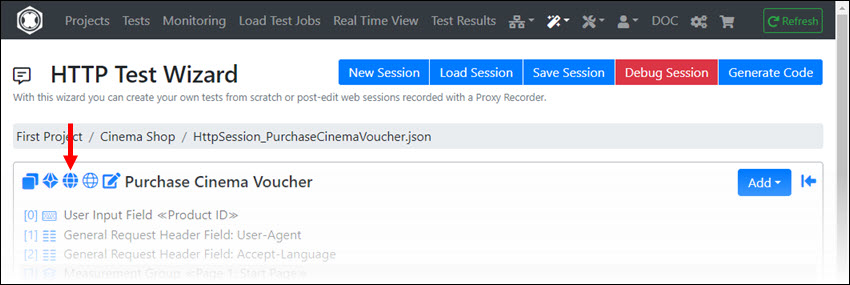

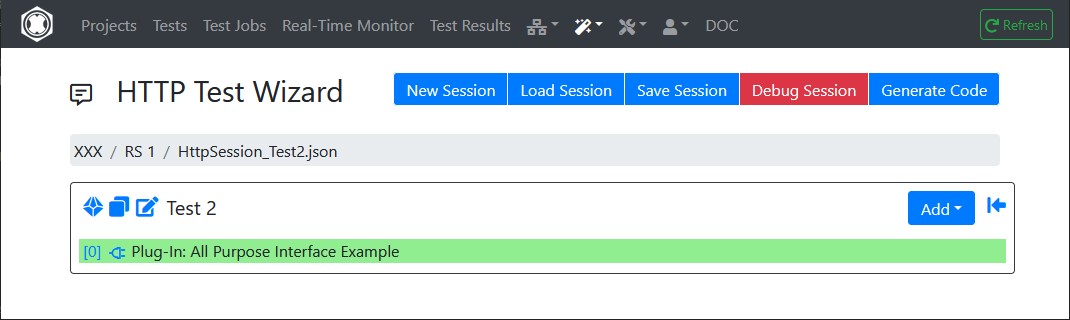

The test sequence is manually entered into the HTTP Test Wizard to keep this example simple. However, you can also record HTTP/S tests using an HTTP/S Proxy Recorder and convert the recording into an HTTP Test Wizard session.

To configure and execute a simple test you’ll need:

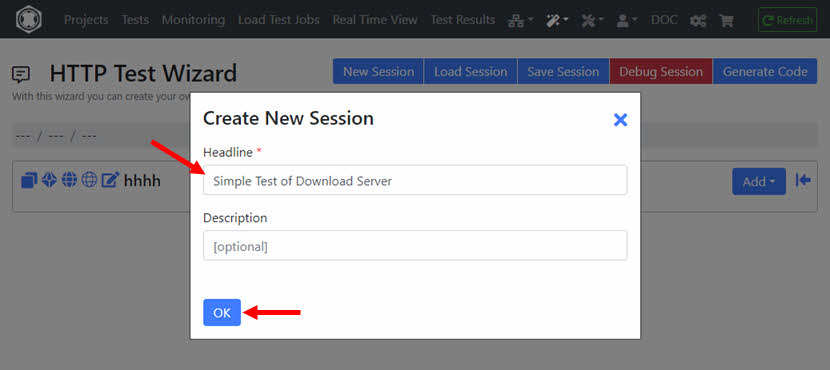

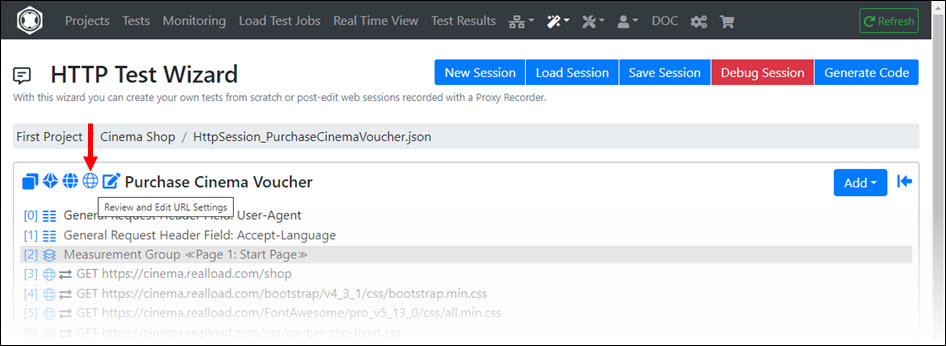

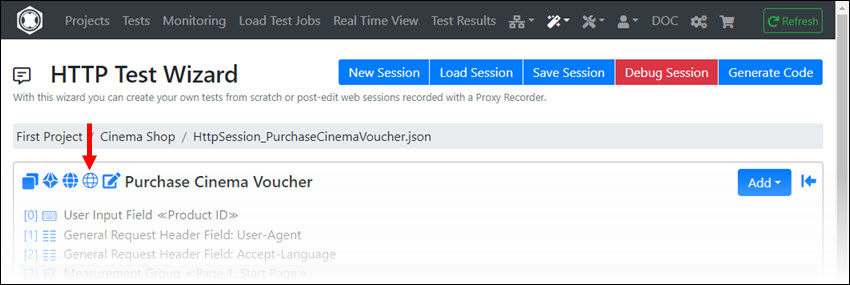

Enter the Headline that briefly describes what the test does.

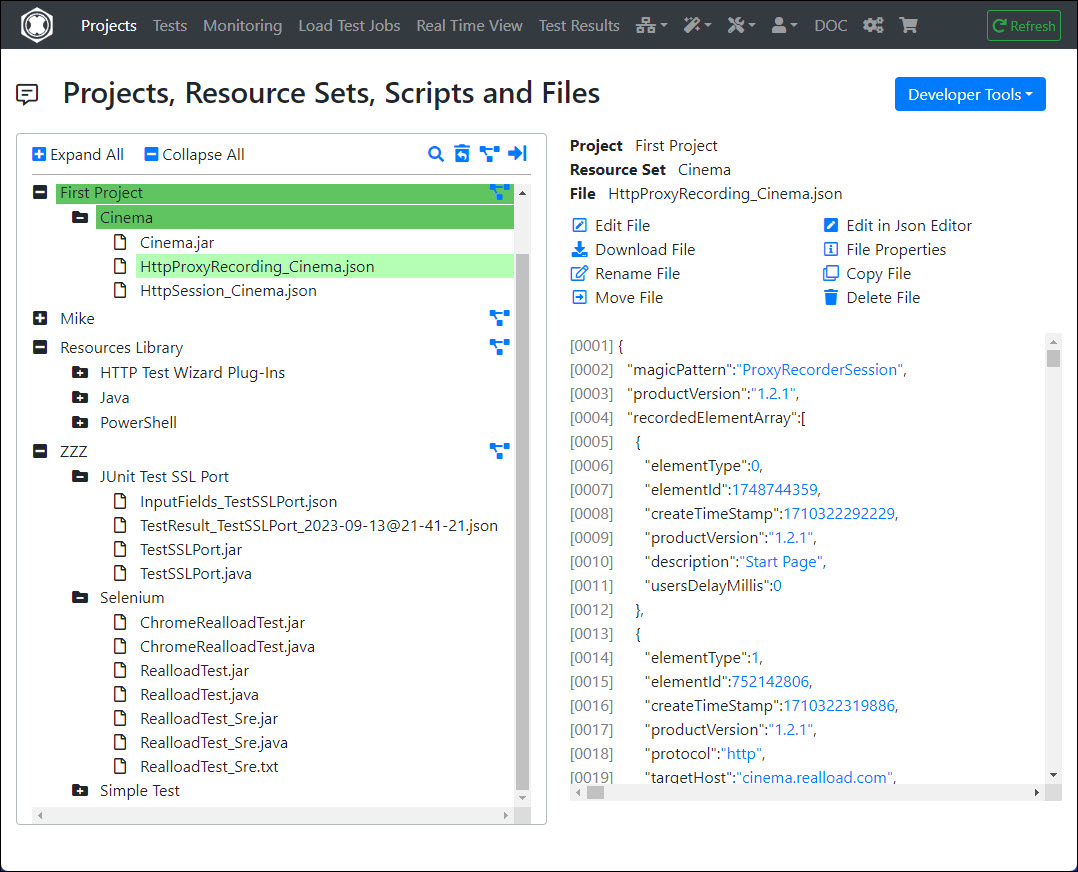

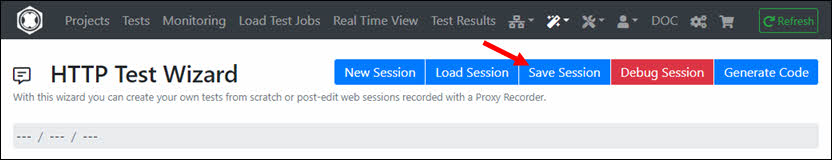

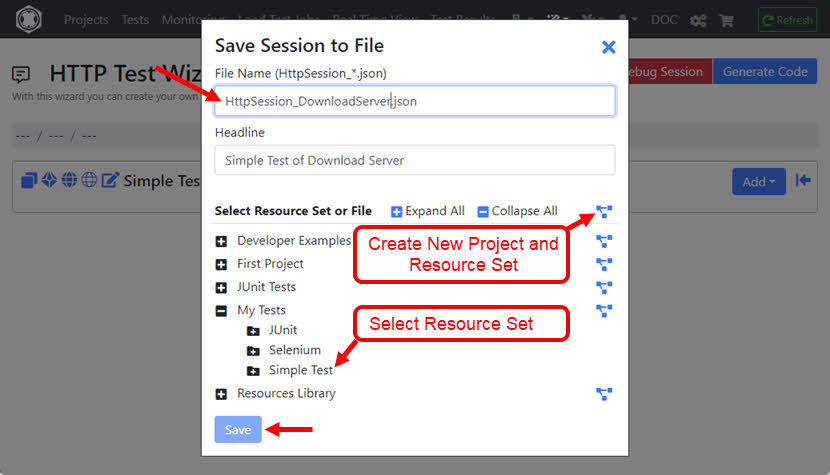

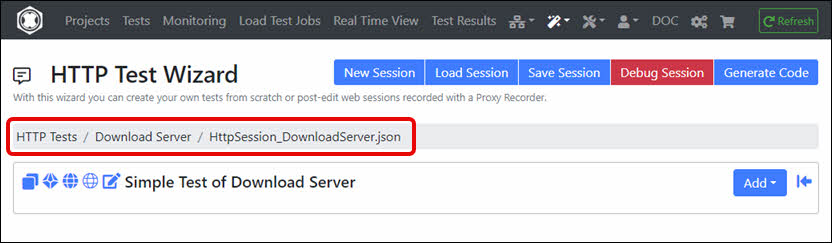

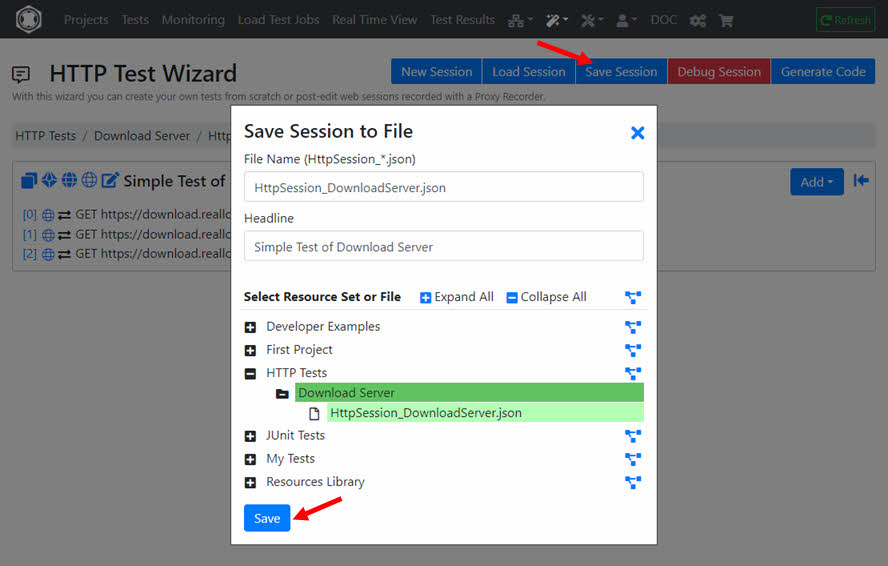

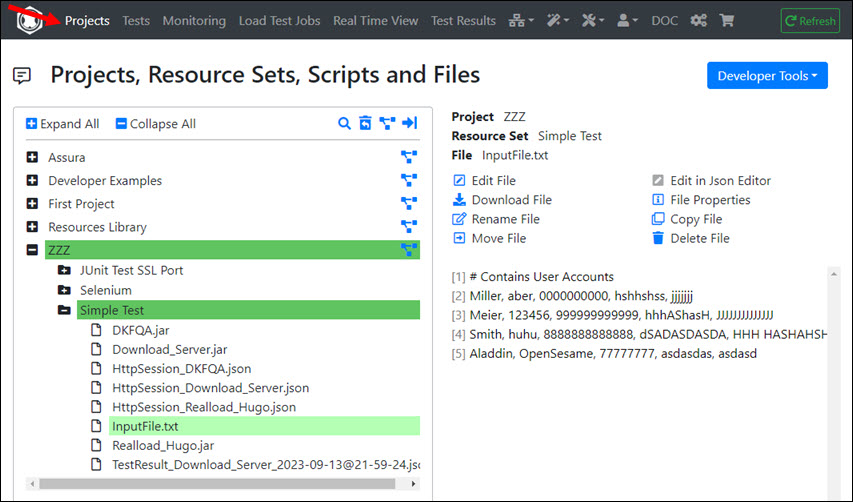

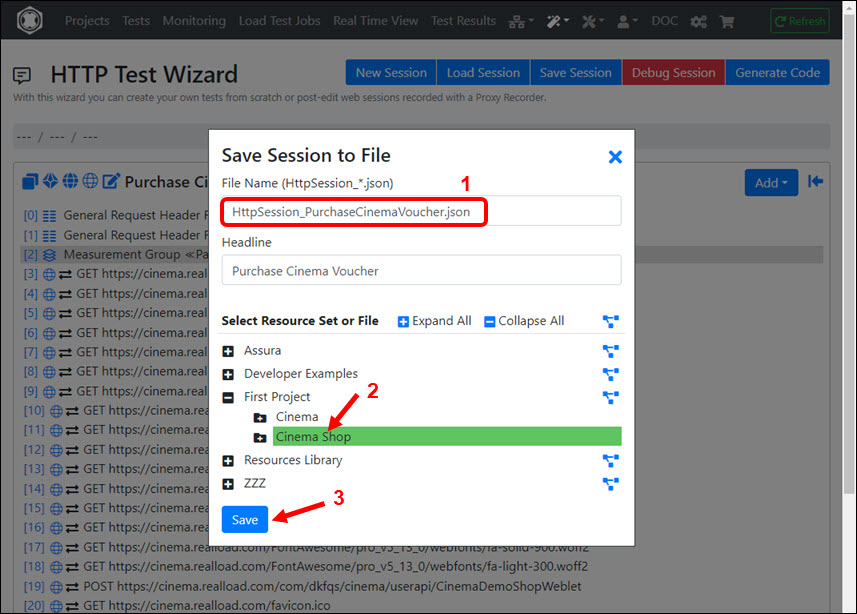

It’s best to save the empty session now. To do this, you must select a Resource Set of a Project. Alternatively, you can also create a new ‘Project’ and/or ‘Resource Set’. ‘Resource Sets’ are something like sub-directories of a Project, which contain all the files necessary to define and execute a test.

Enter the file name for the session, e.g. HttpSession_TestDownloadServer.json and save the session.

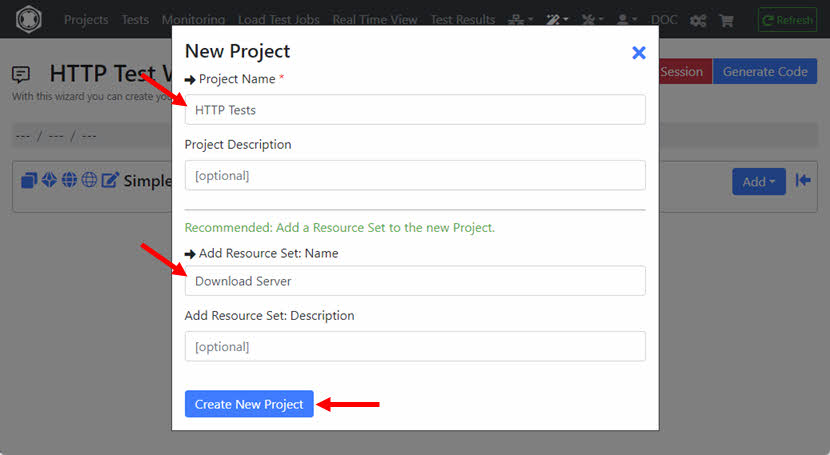

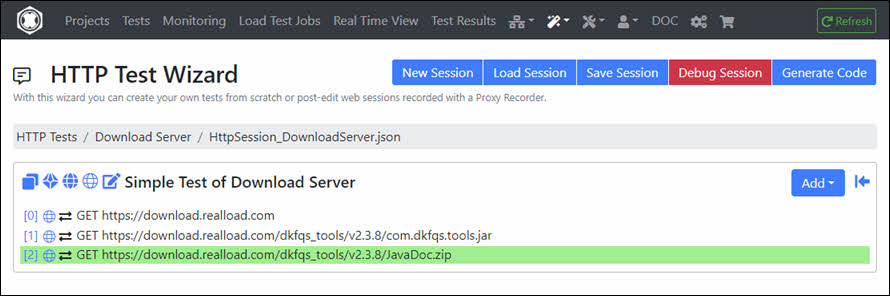

In this example, a new Project named ‘HTTP Tests’ is created which also contains a new Resource Set named ‘Download Server’.

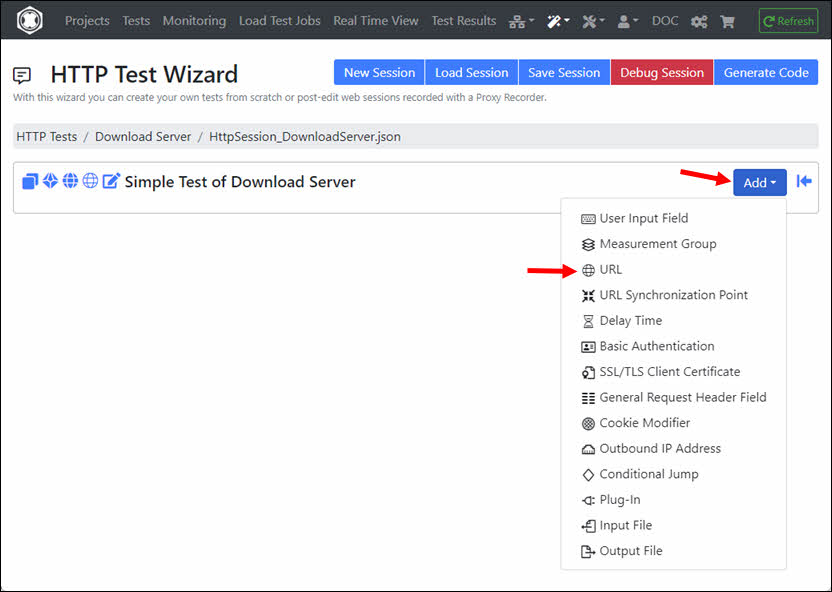

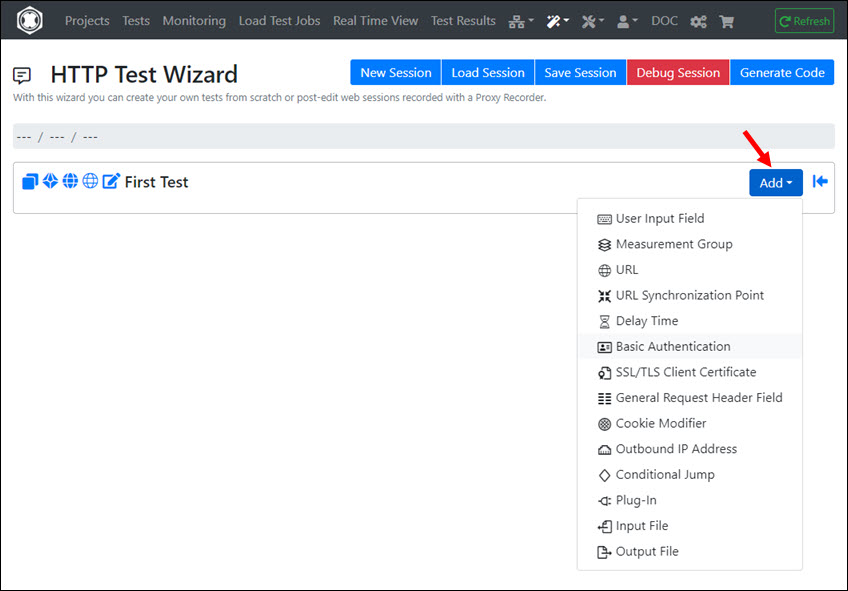

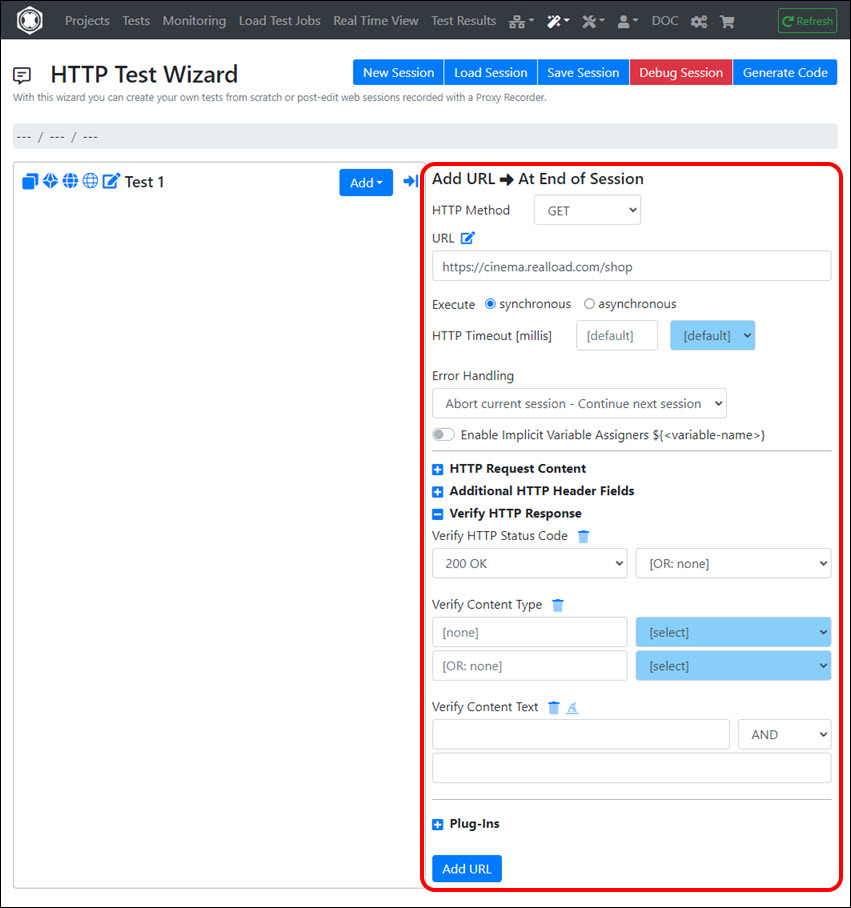

Click on the ‘Add’ dropdown and select ‘URL’.

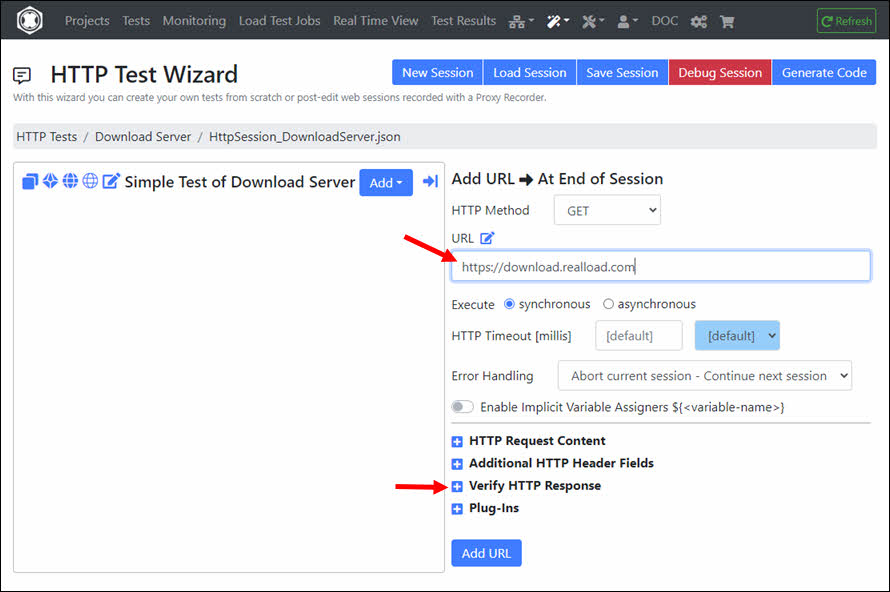

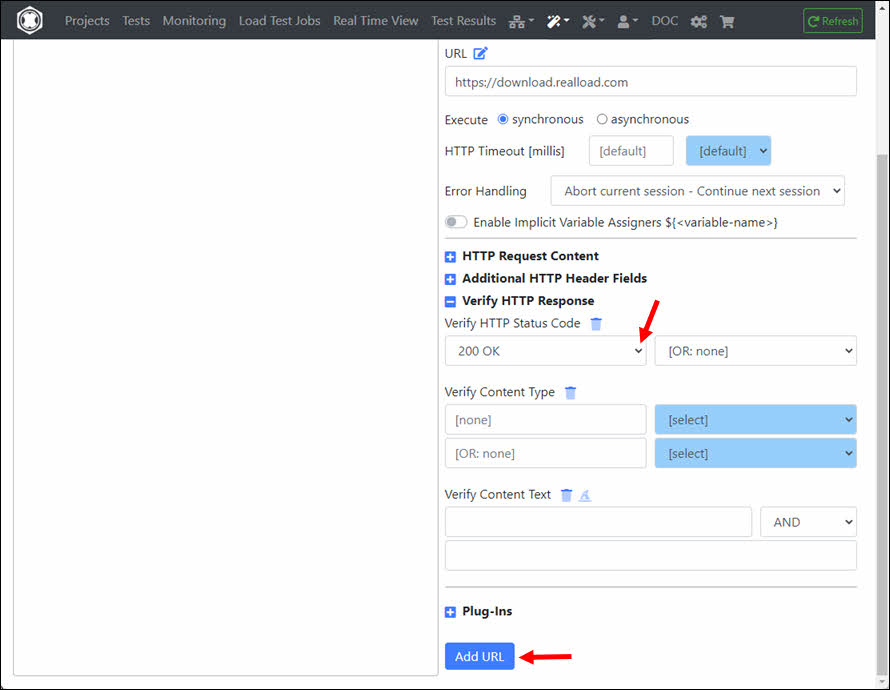

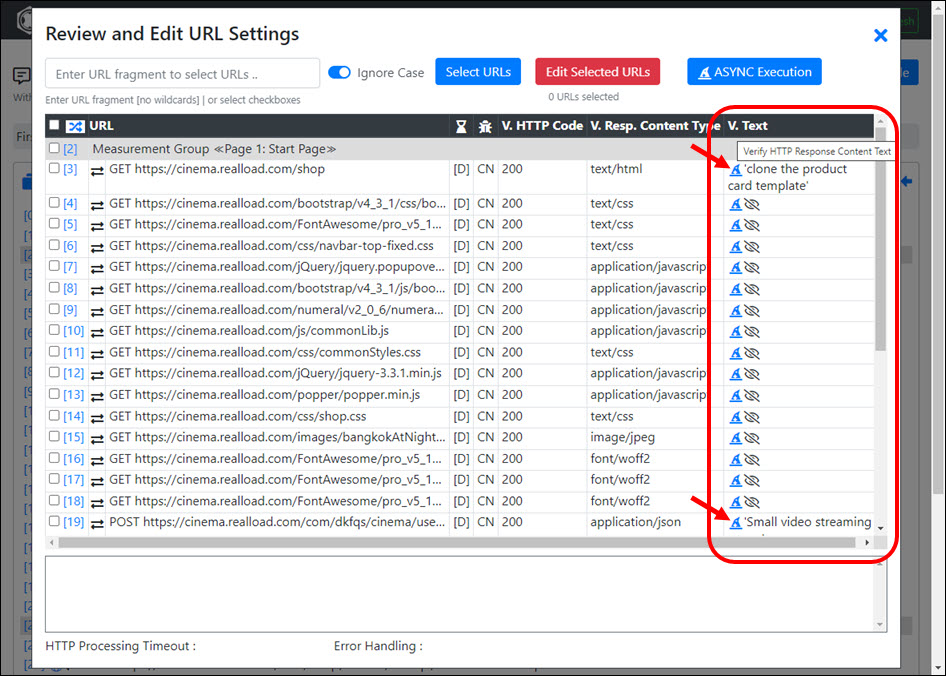

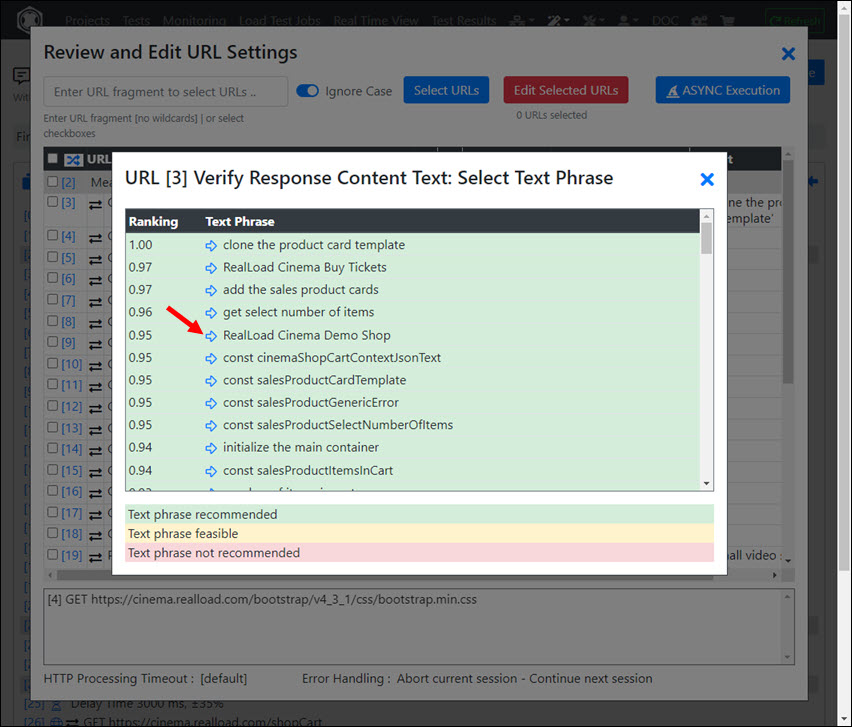

Enter the URL and click on the ‘+’ icon at ‘Verify HTTP Response’.

At ‘Verify HTTP Status Code’ select ‘200 OK’ and then click the ‘Add URL’ button.

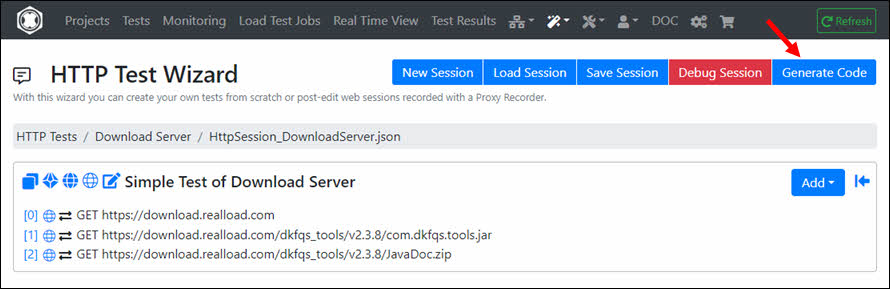

You can then add additional URLs to the session. In this example it looks like this:

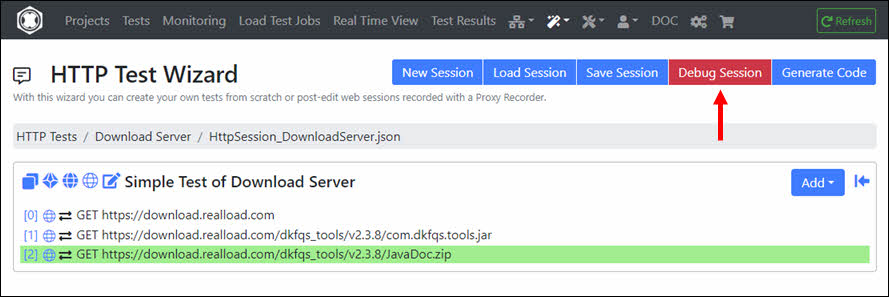

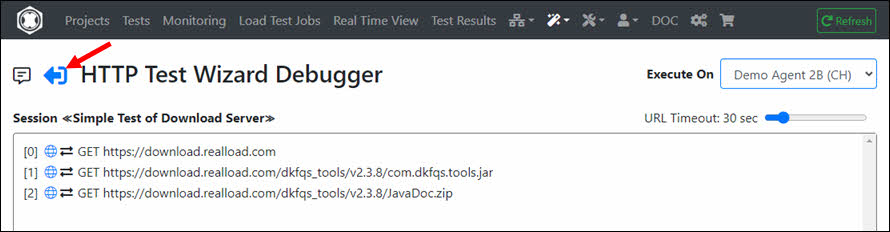

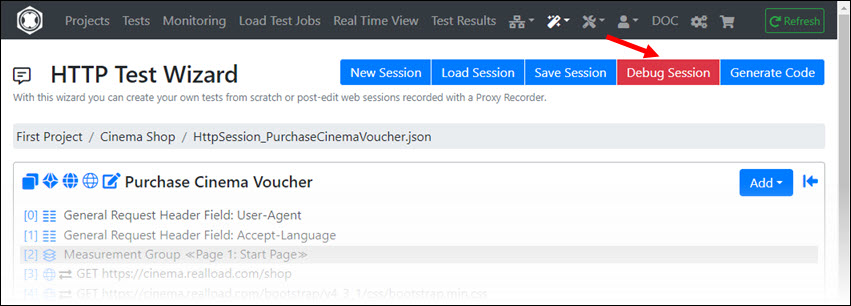

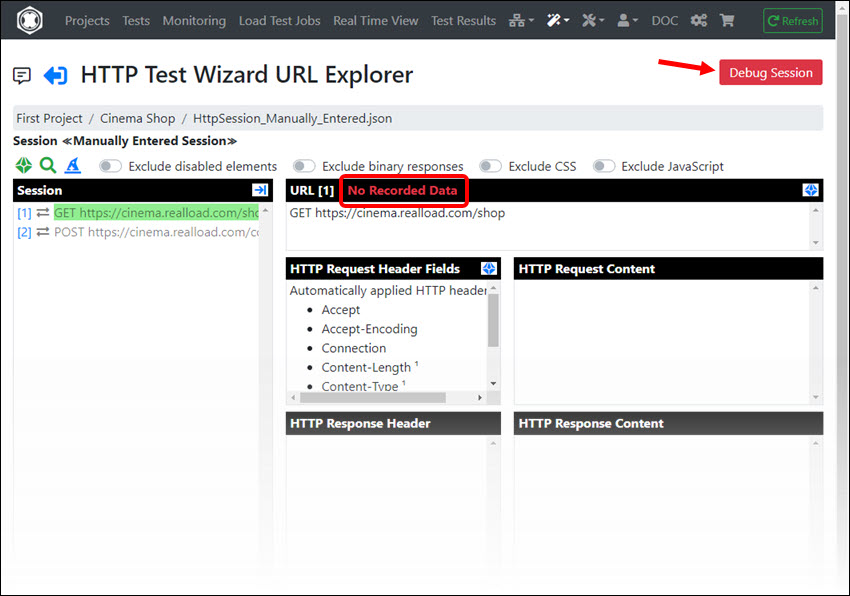

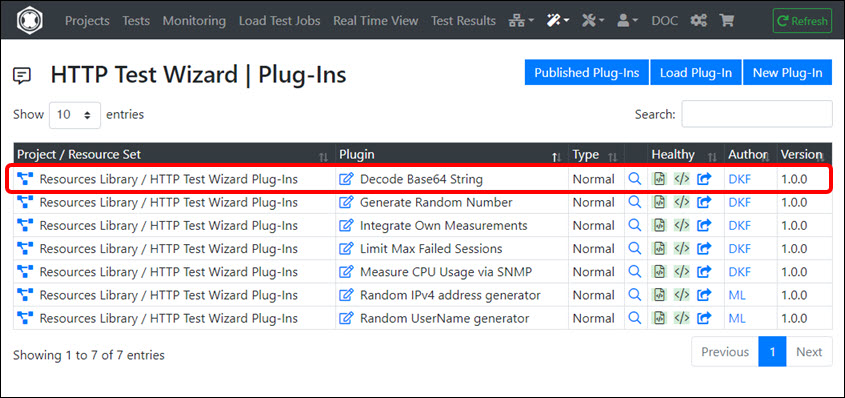

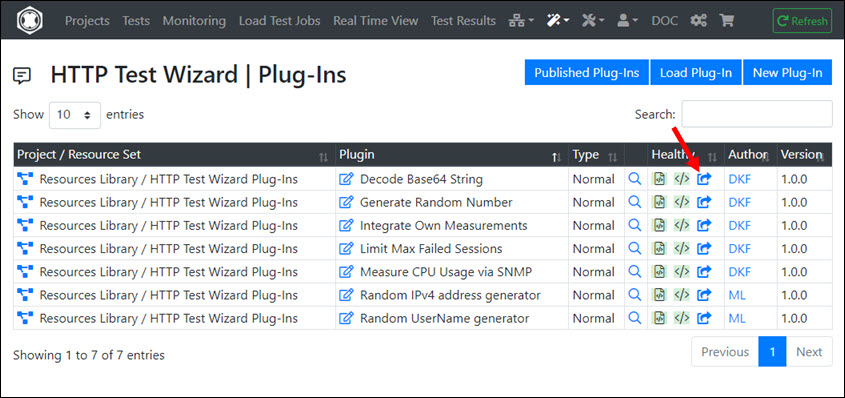

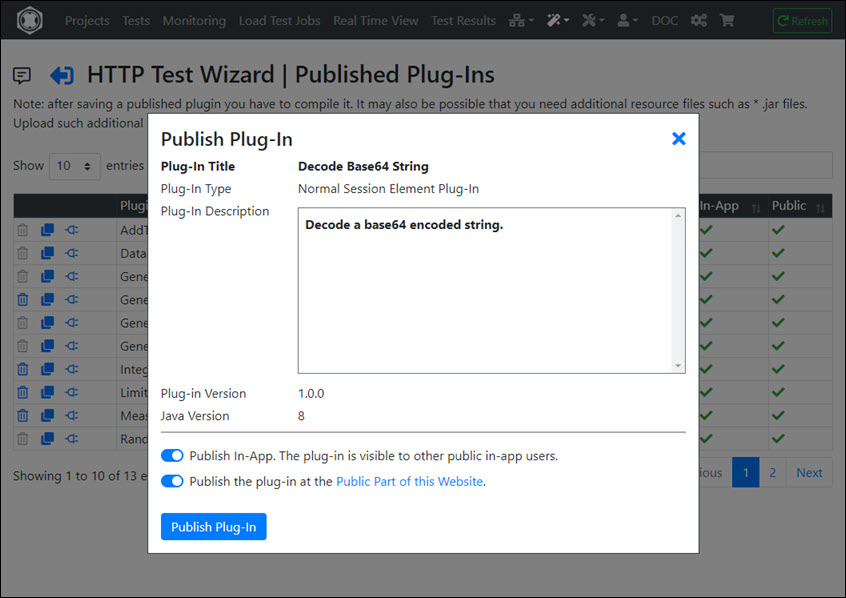

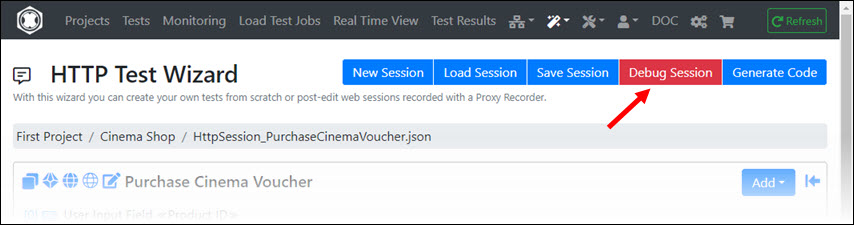

Before converting the session into a RealLoad ‘Test’ you should Debug the Session first. This way you can check whether all URL calls are working correctly. Click ‘Debug Session’.

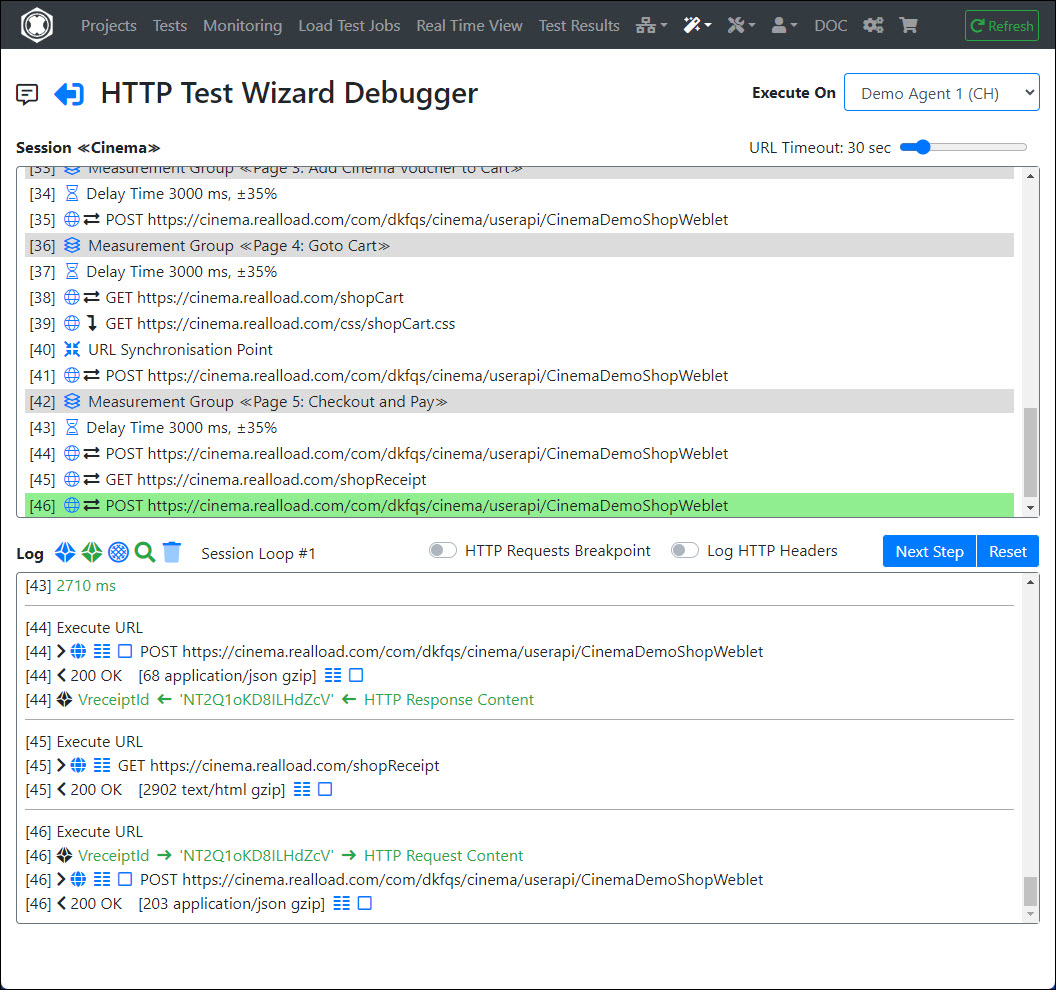

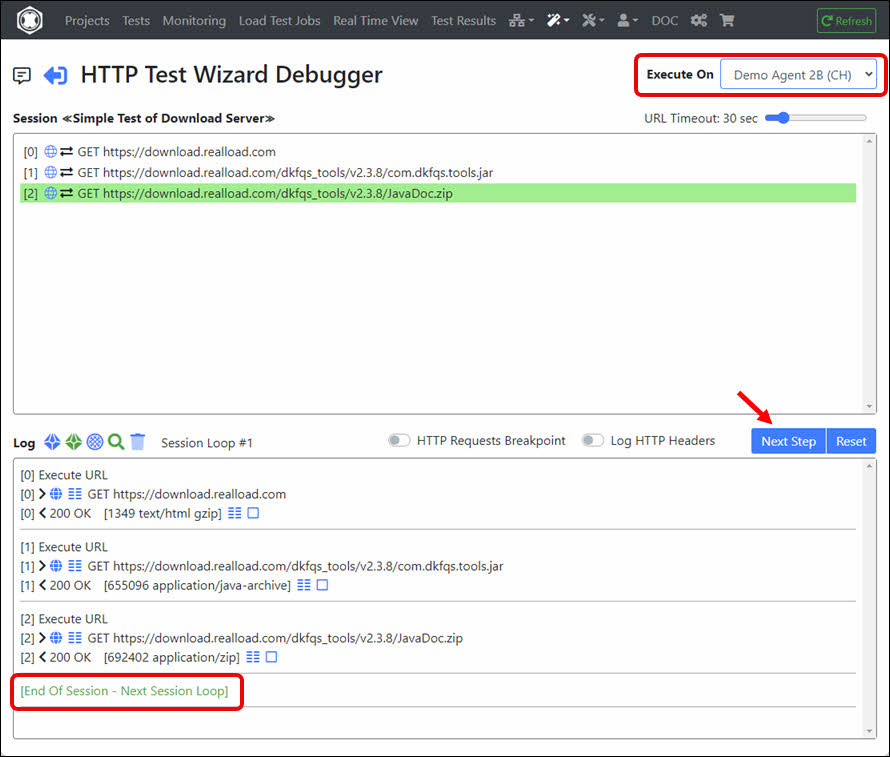

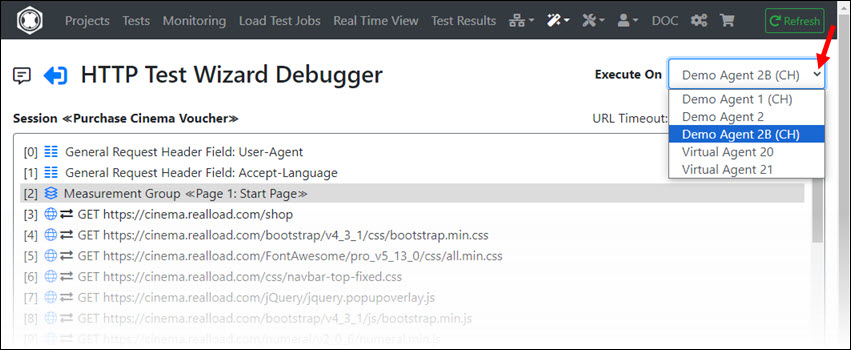

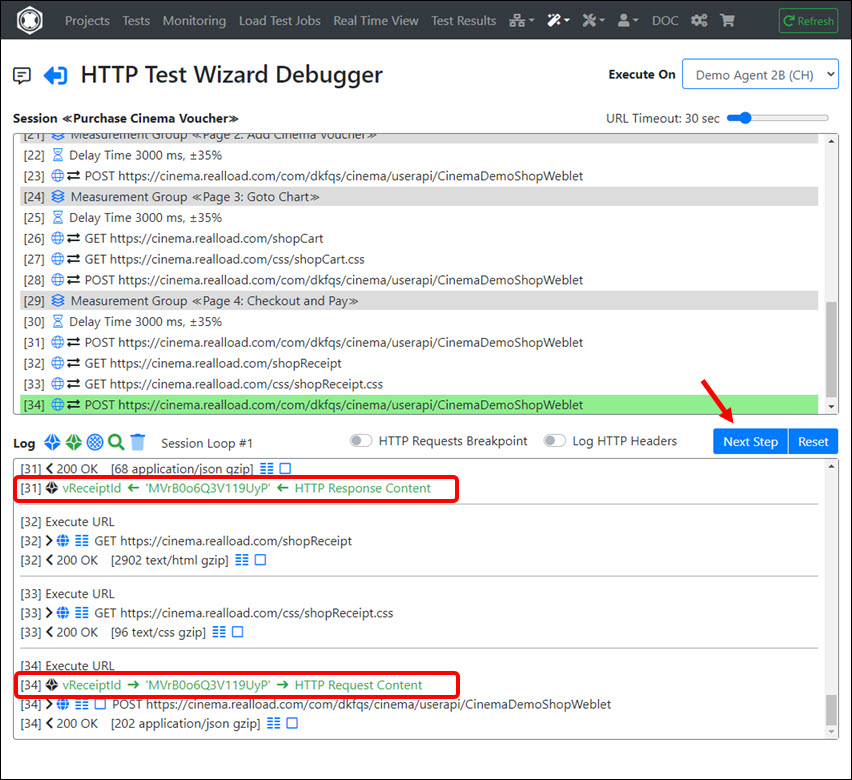

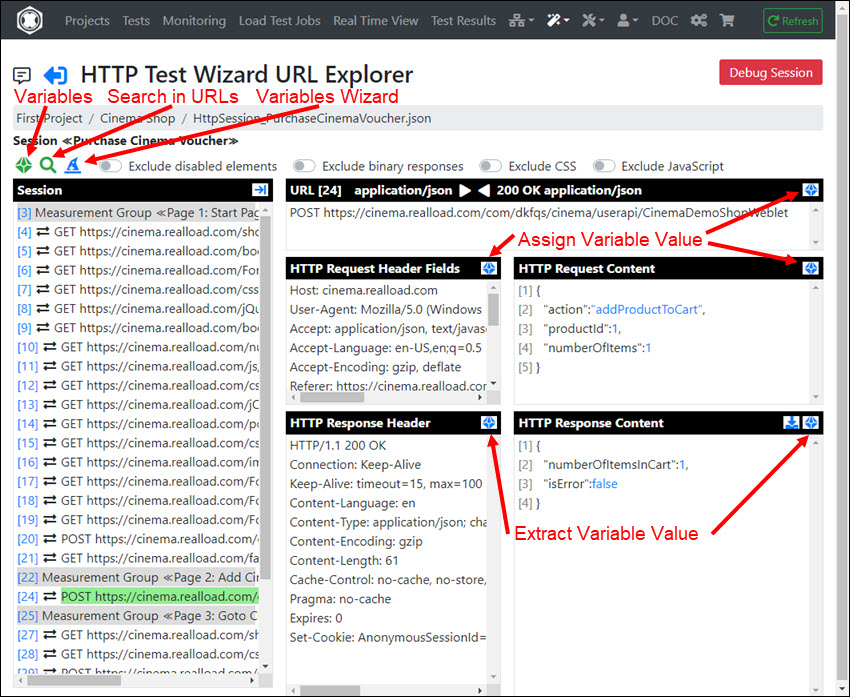

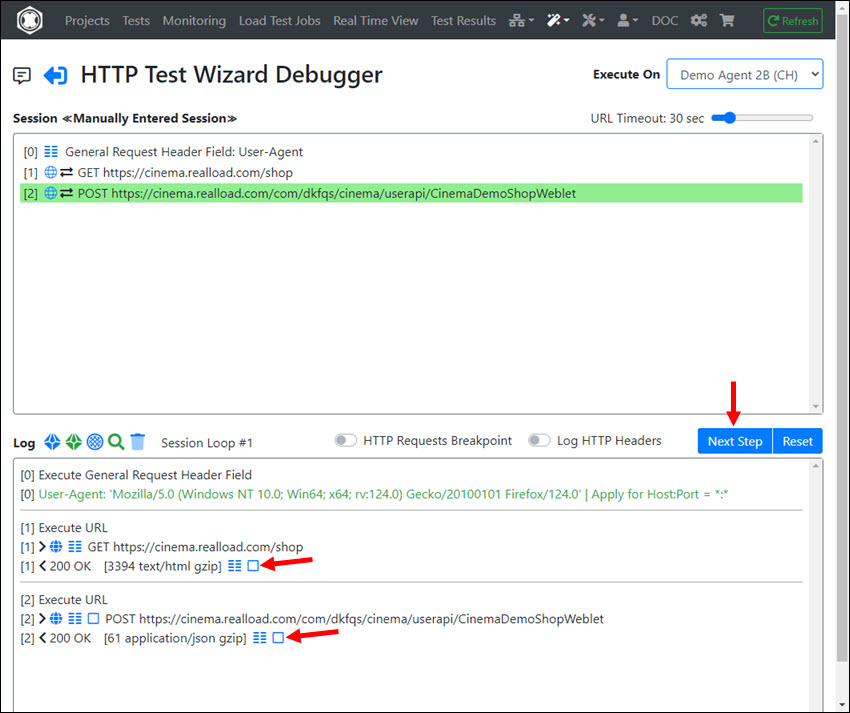

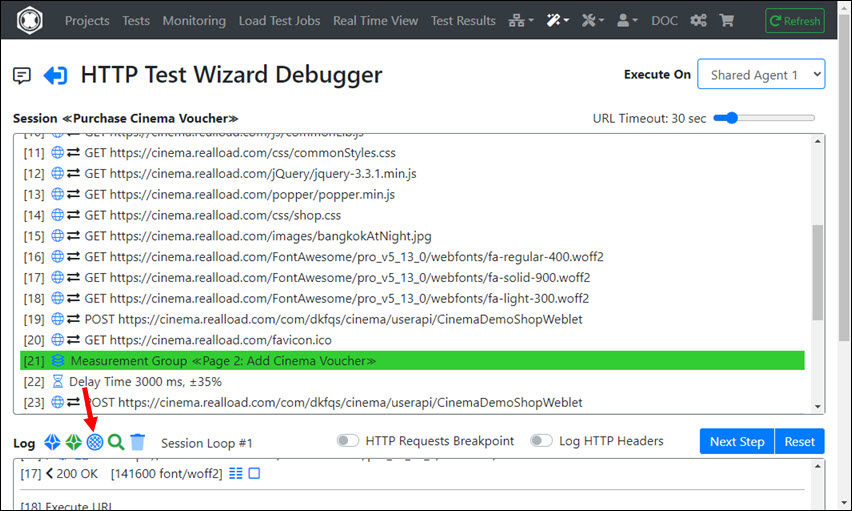

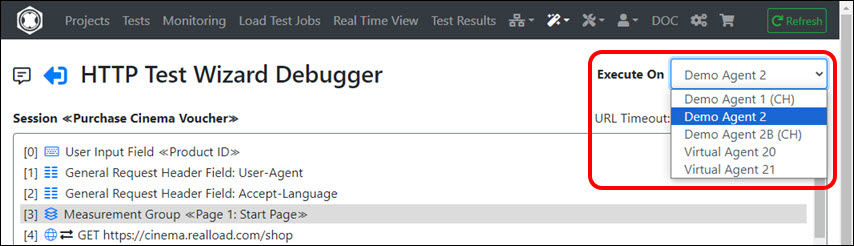

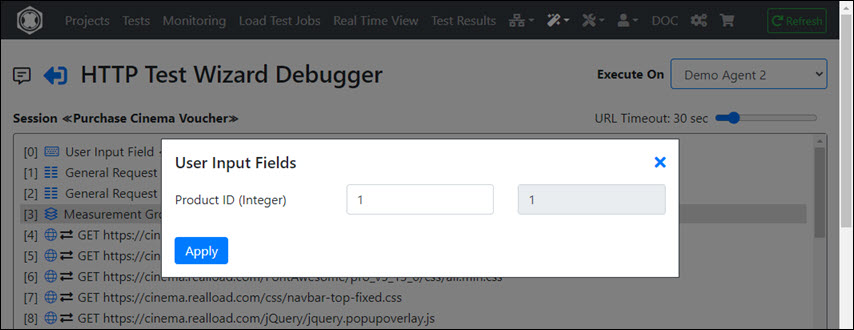

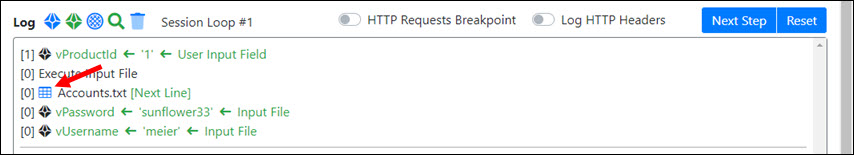

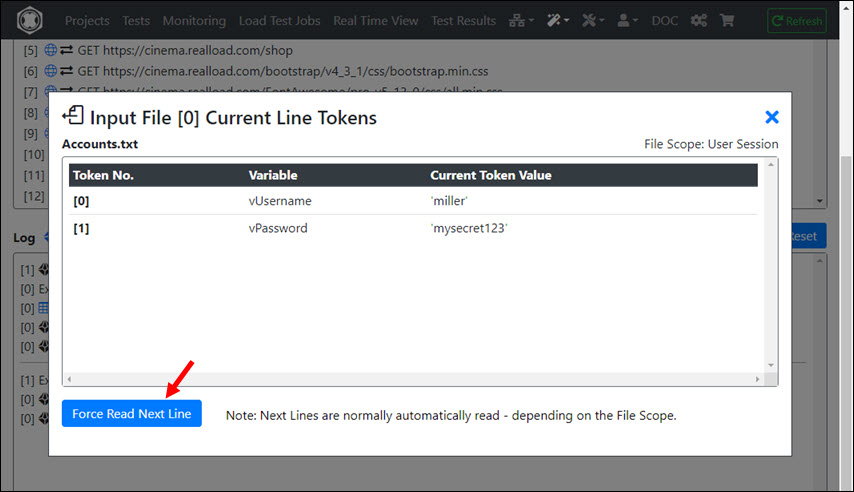

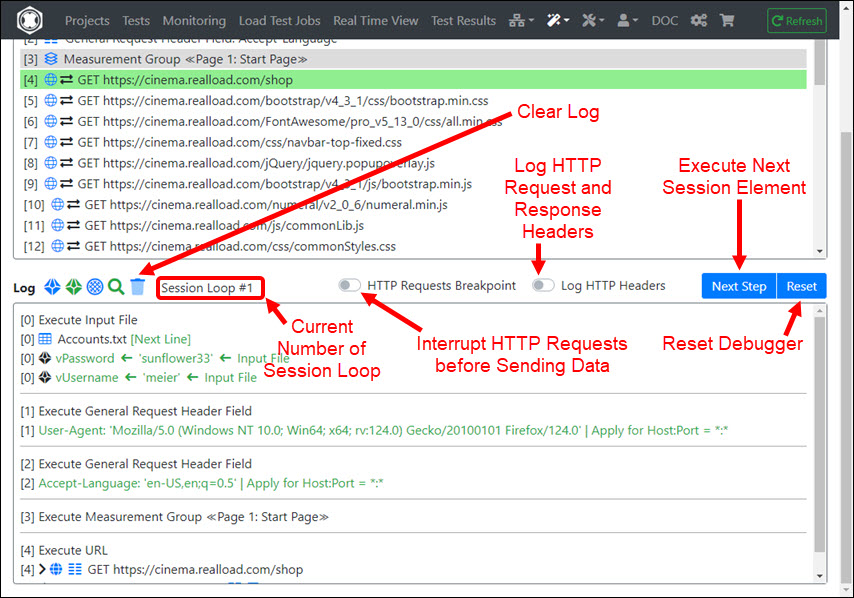

This invokes a powerful Remote Debugger that always runs on a Measuring Agent. As shown at the top right of the following image, you can also select an alternative Measuring Agent on which the debugger will run.

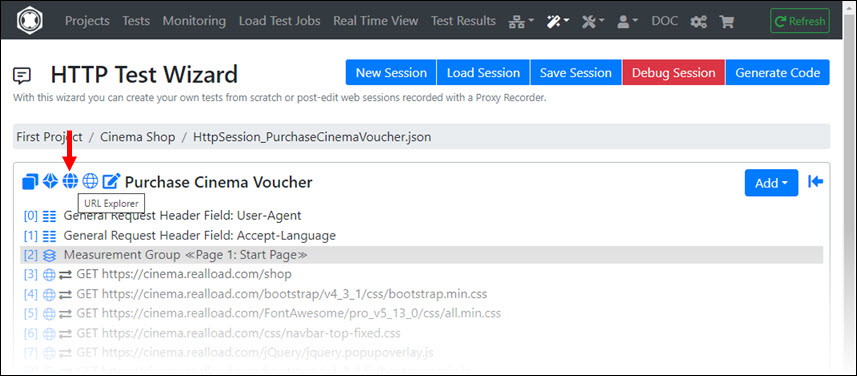

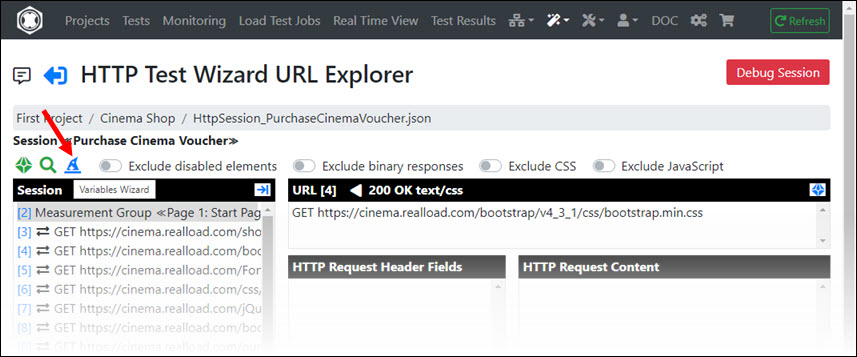

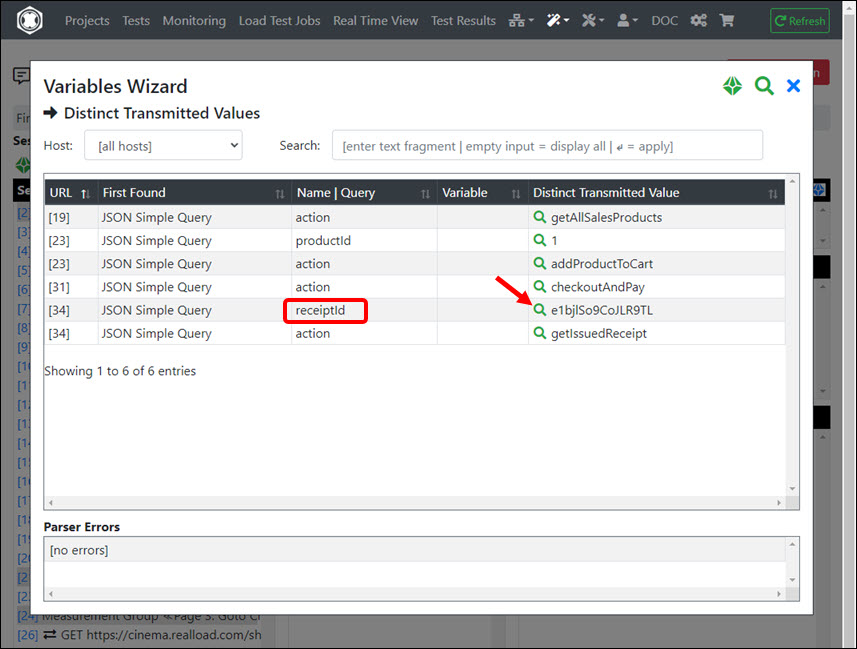

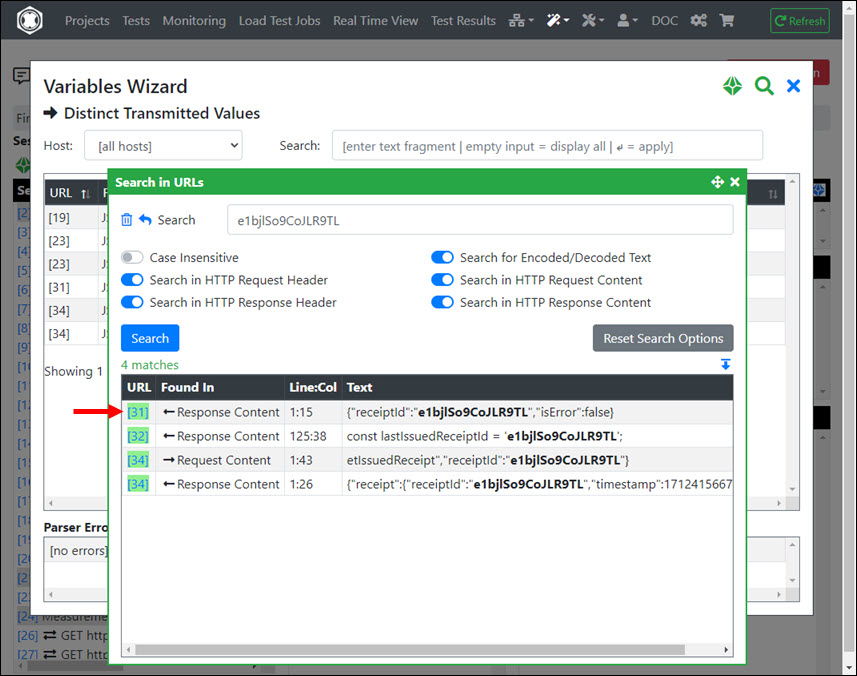

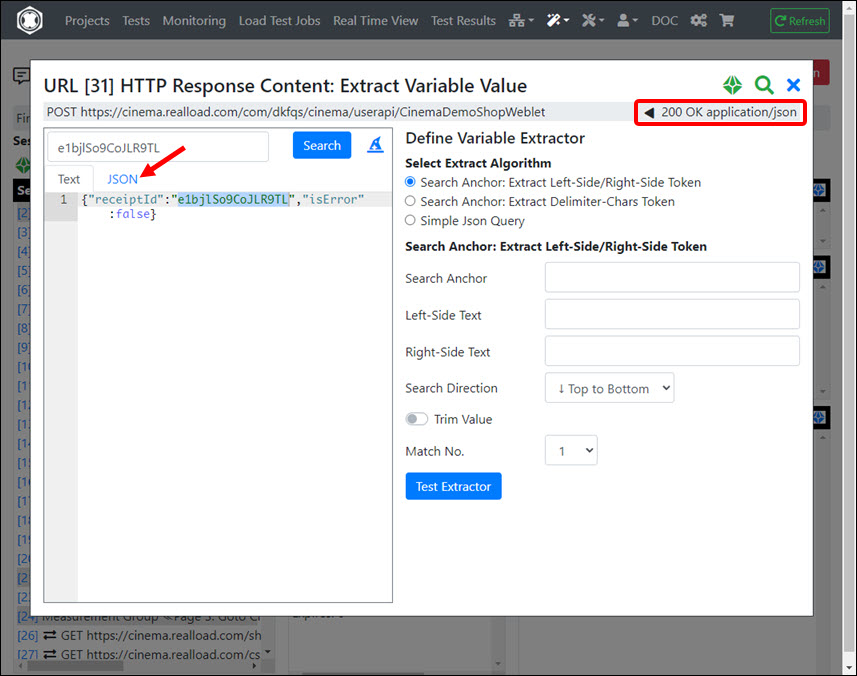

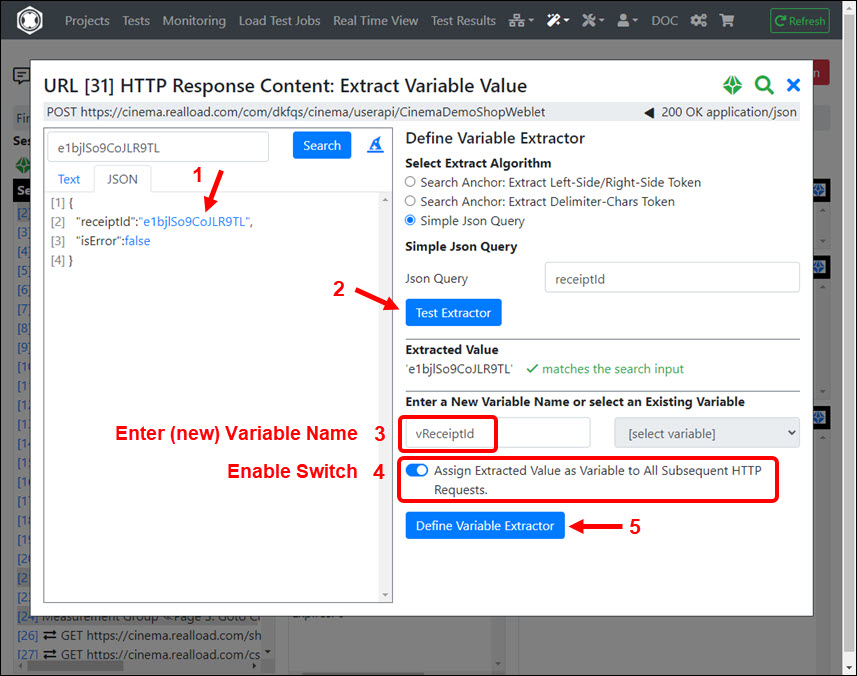

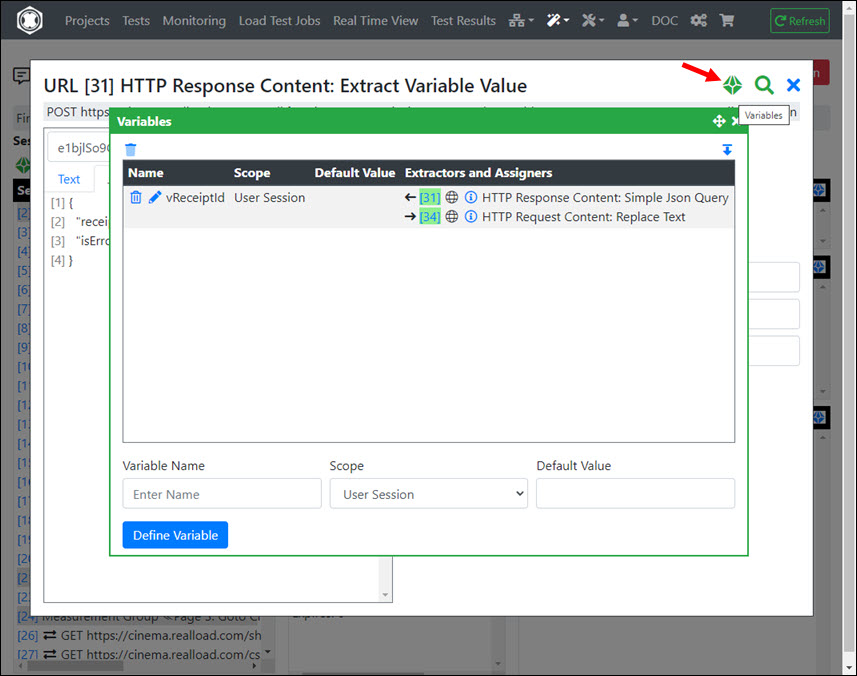

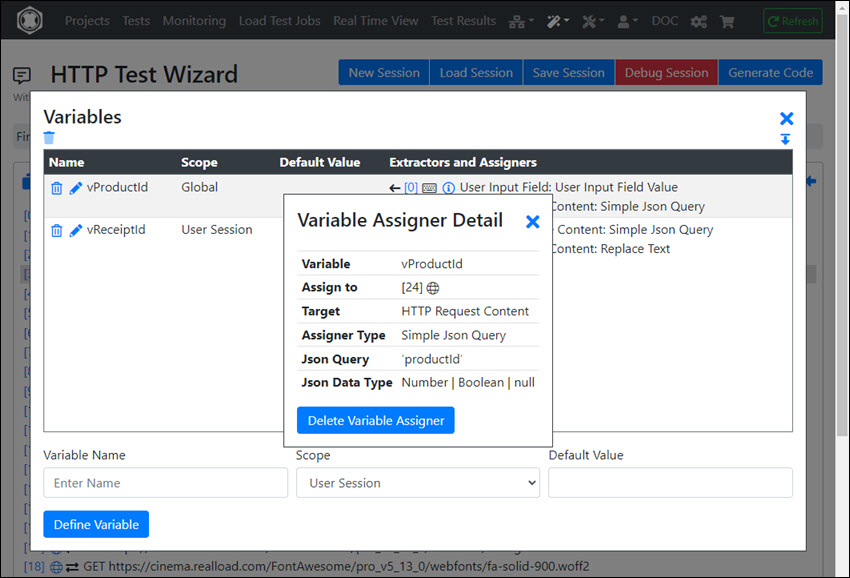

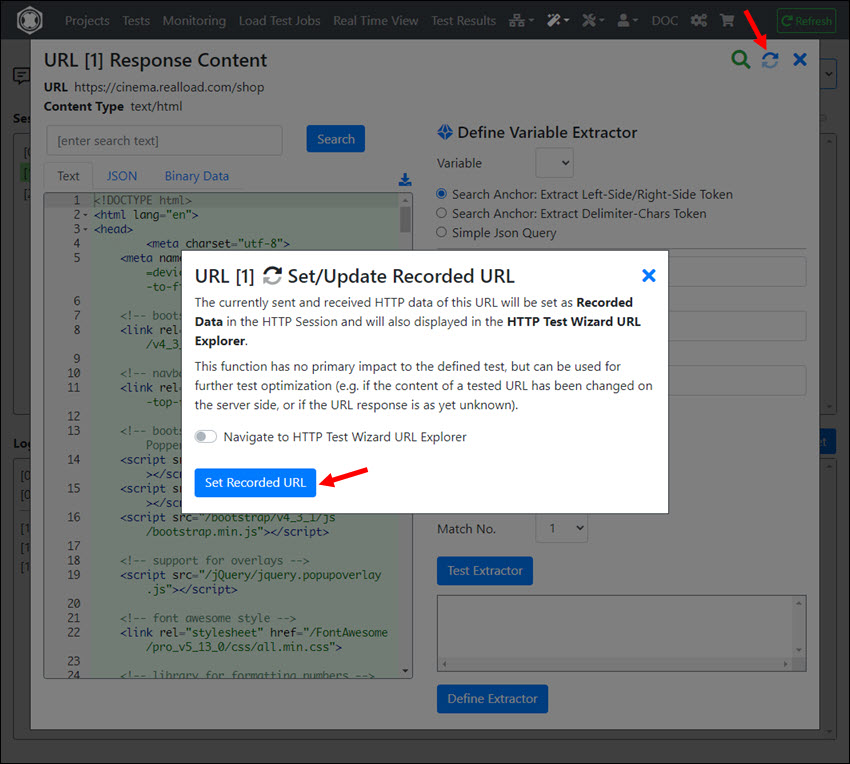

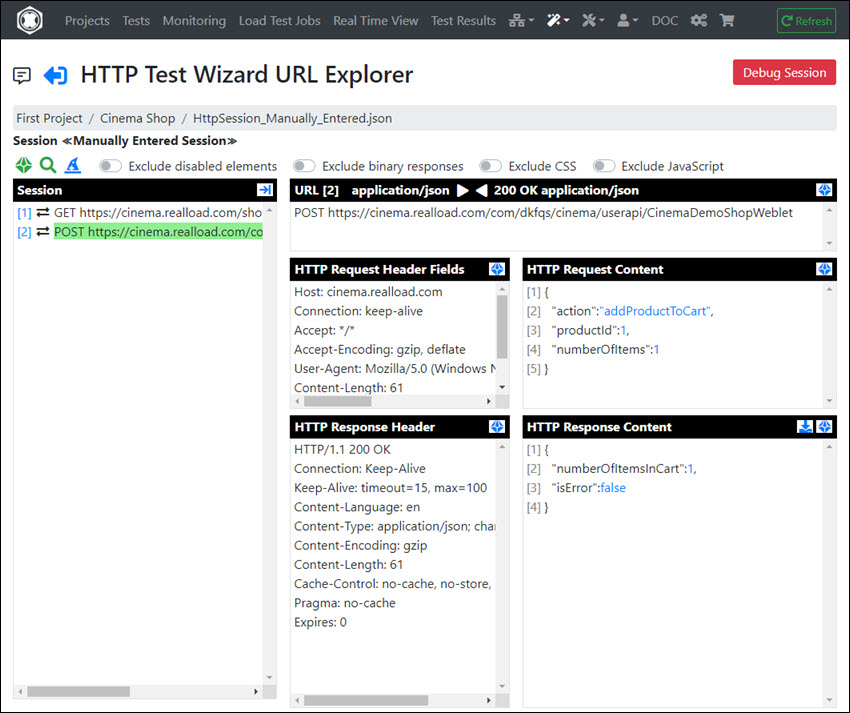

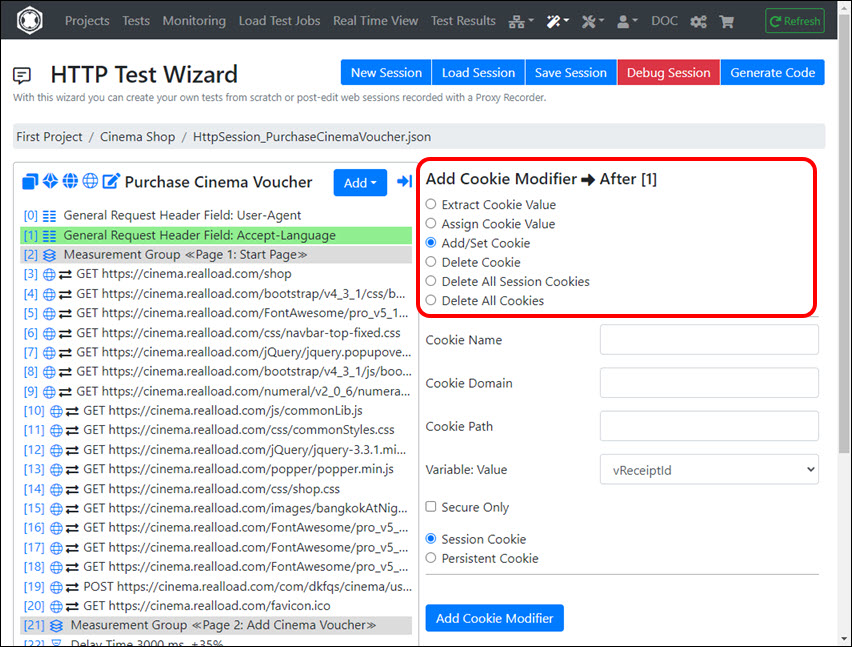

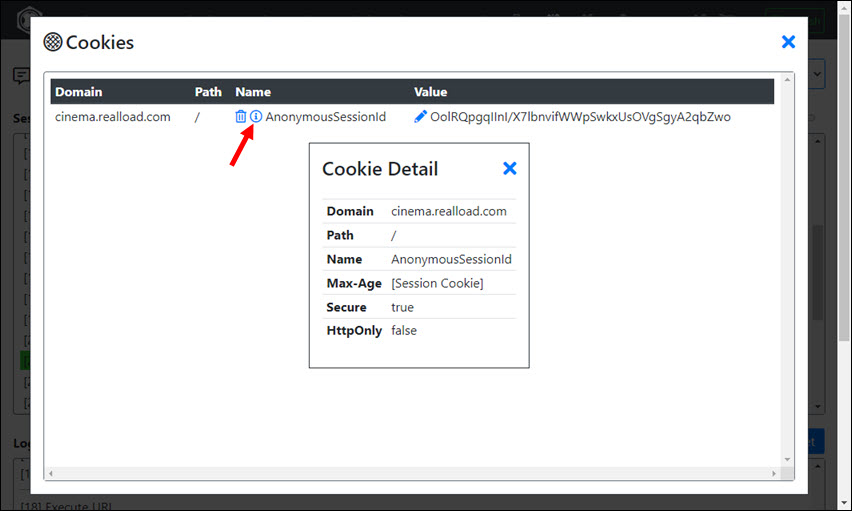

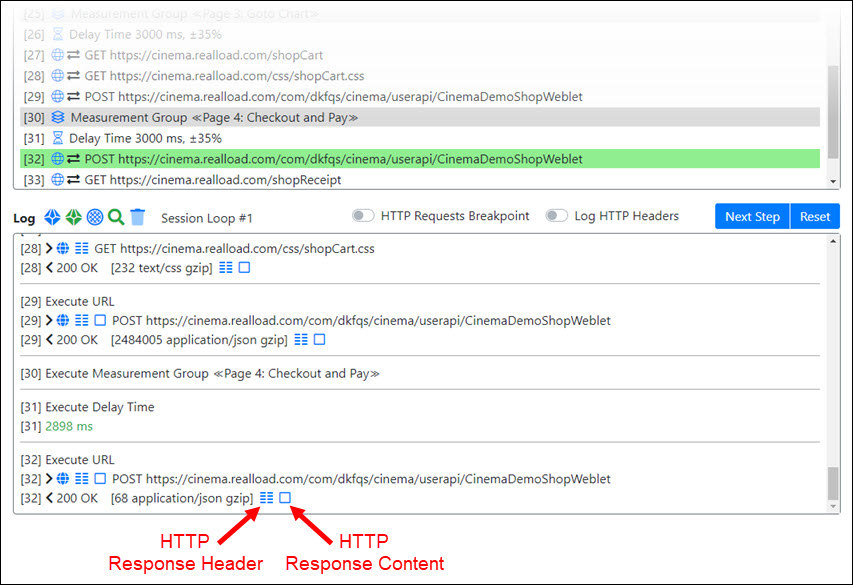

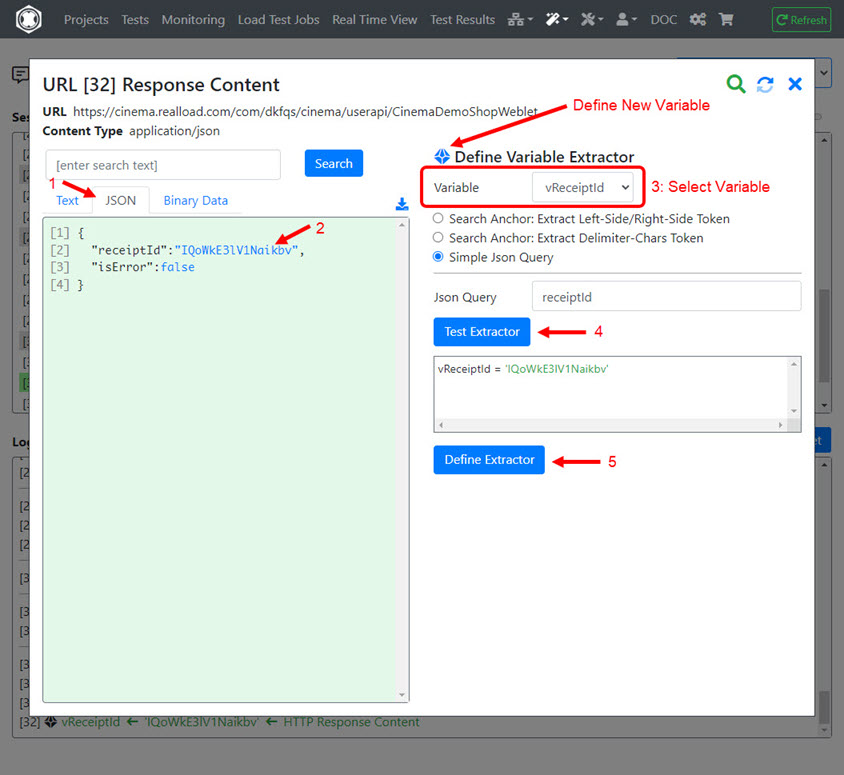

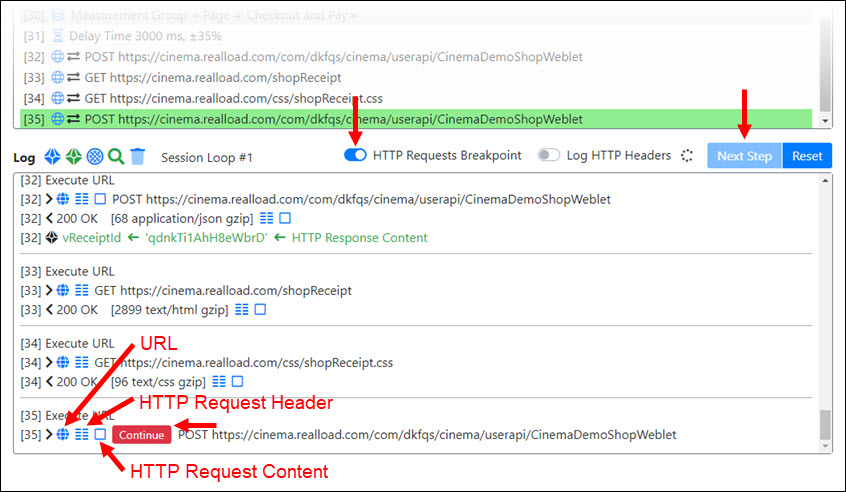

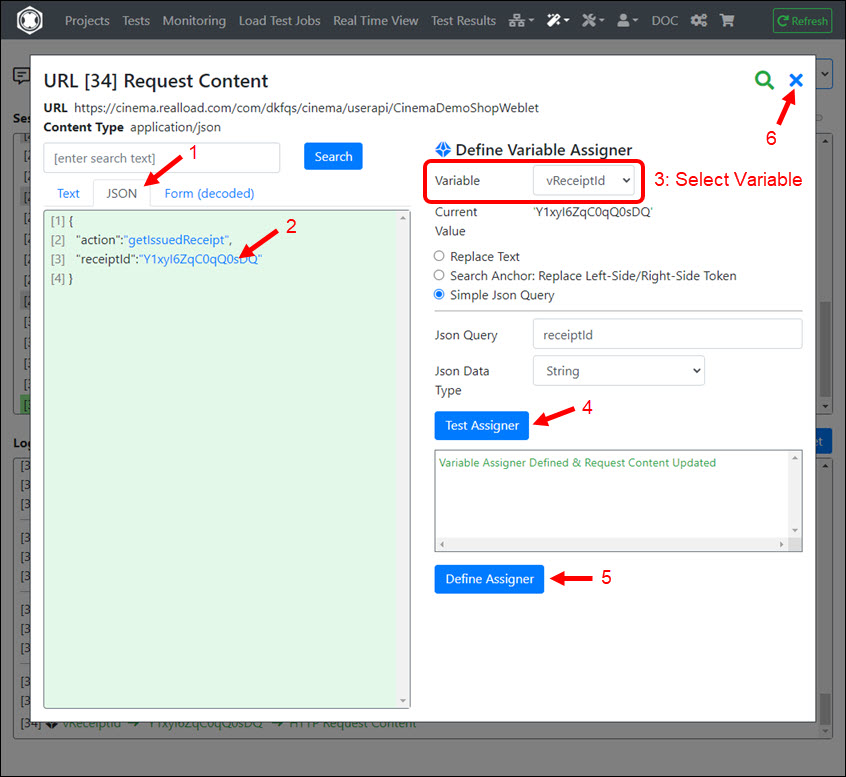

In the debugger you can also extract values from HTTP responses and assign them to succeeding HTTP requests, whereby the session is automatically synchronized between the debugger and the HTTP Test Wizard.

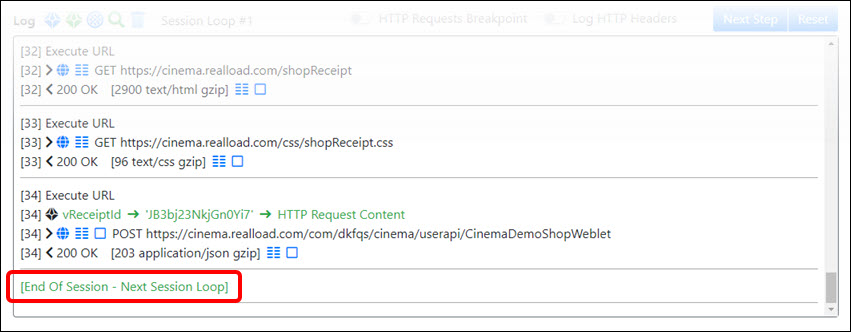

In the debugger, click Next Step until all URLs have been executed successfully and the end of the session has been reached - or until an error occurs. If an error occurs, exit the debugger, then repair the URL (= HTTP/S request), and then invoke the debugger again.

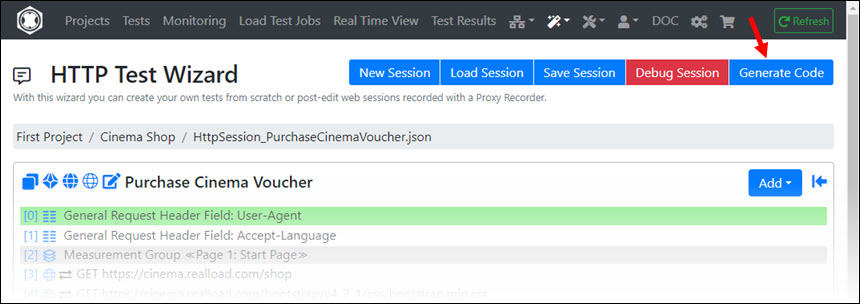

After debugging is successful, return to the HTTP Test Wizard.

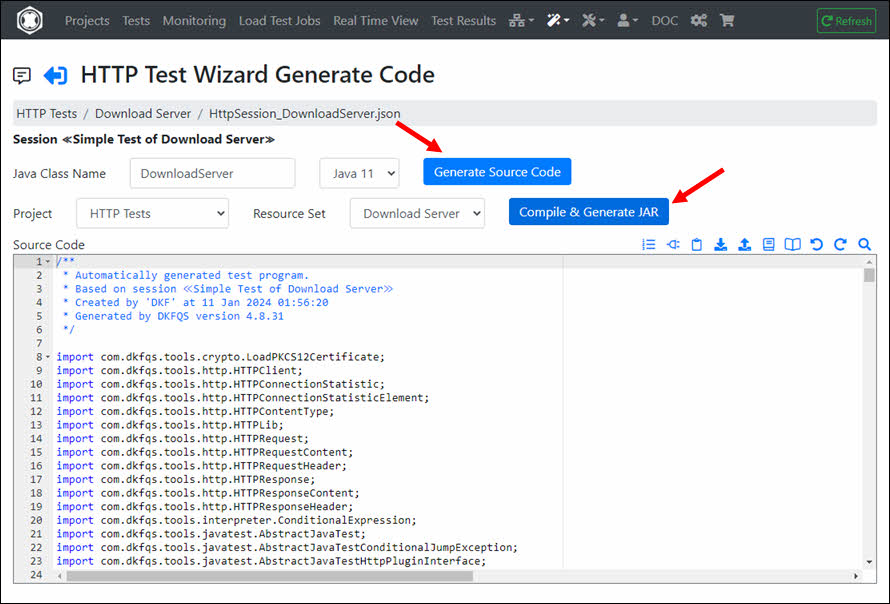

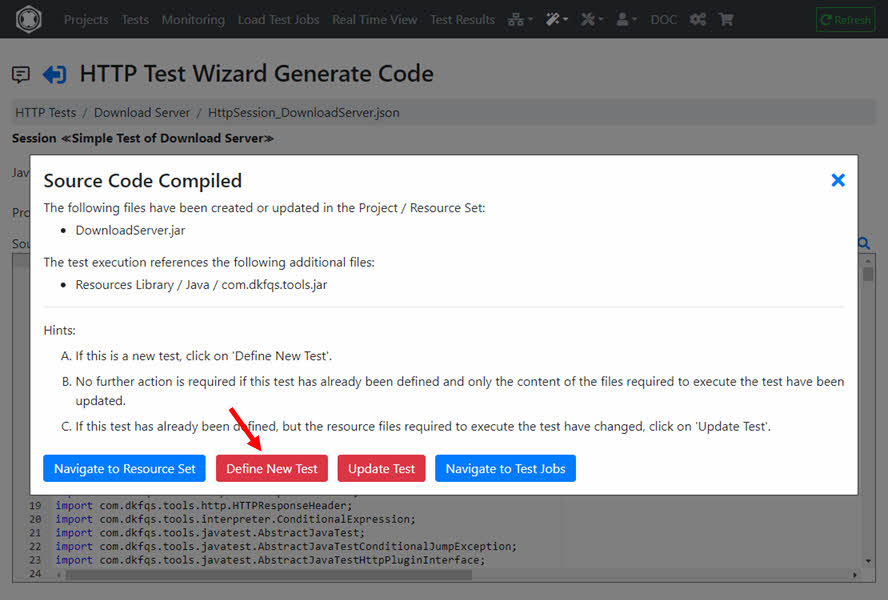

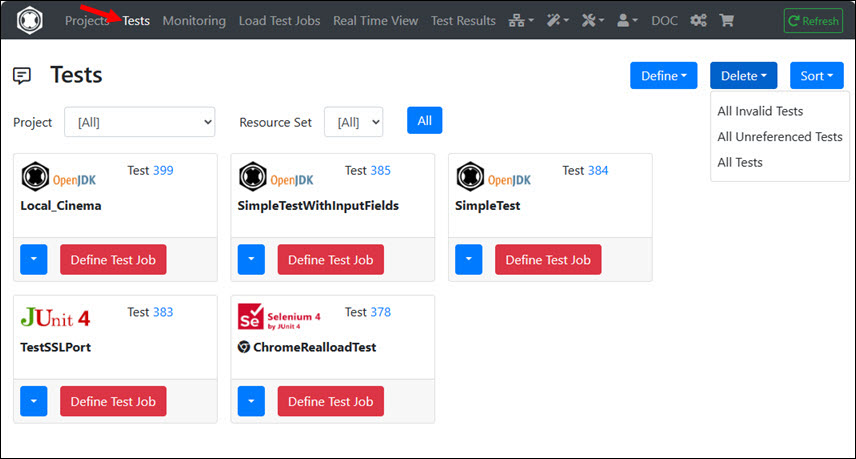

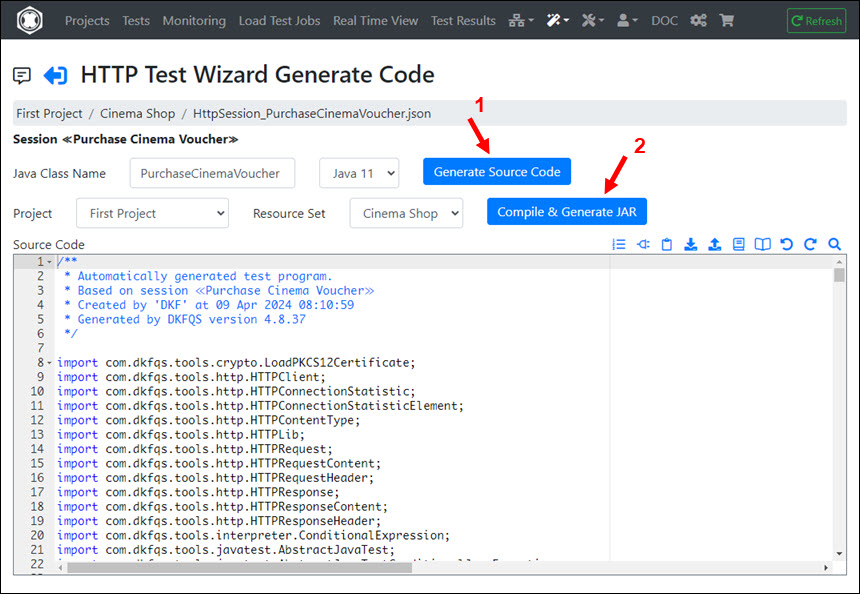

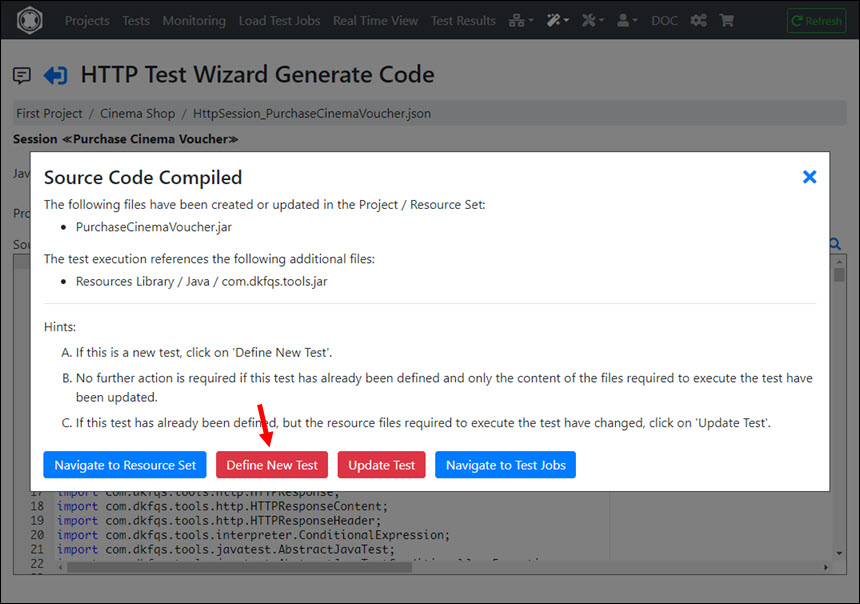

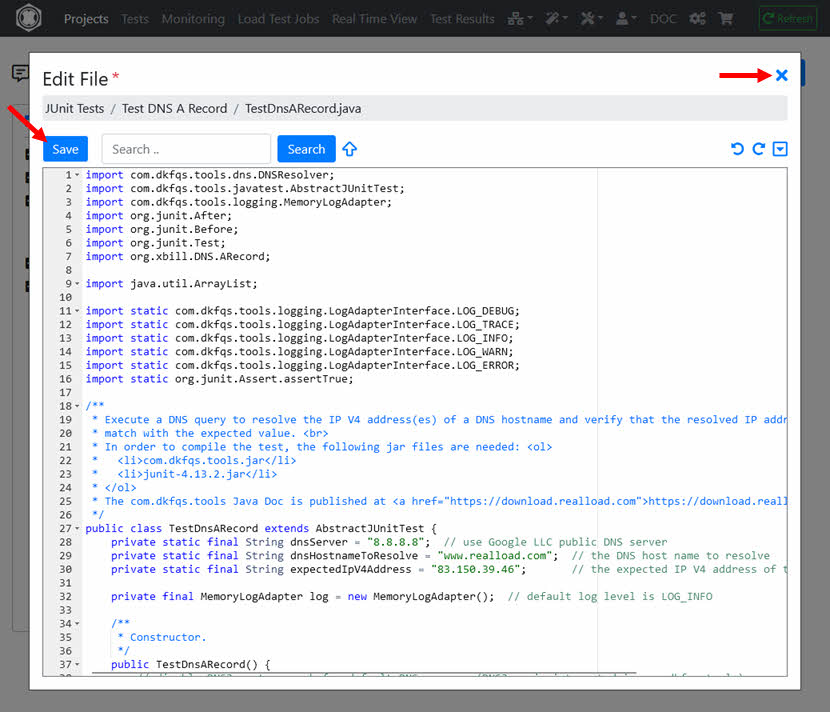

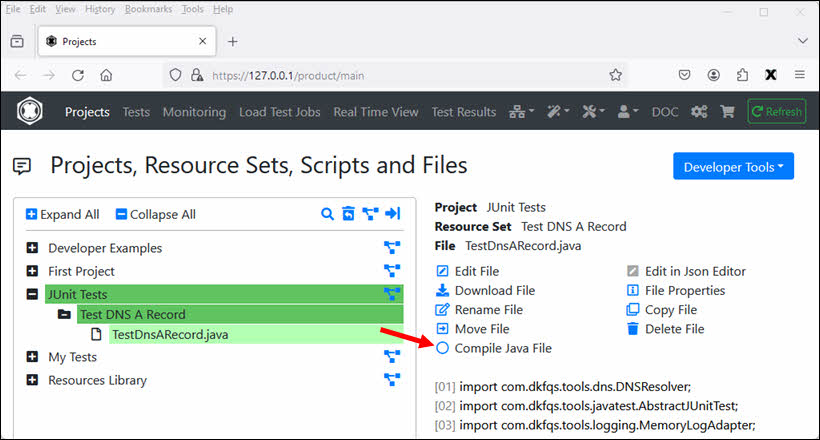

After the source code is compiled, click ‘Define New Test’.

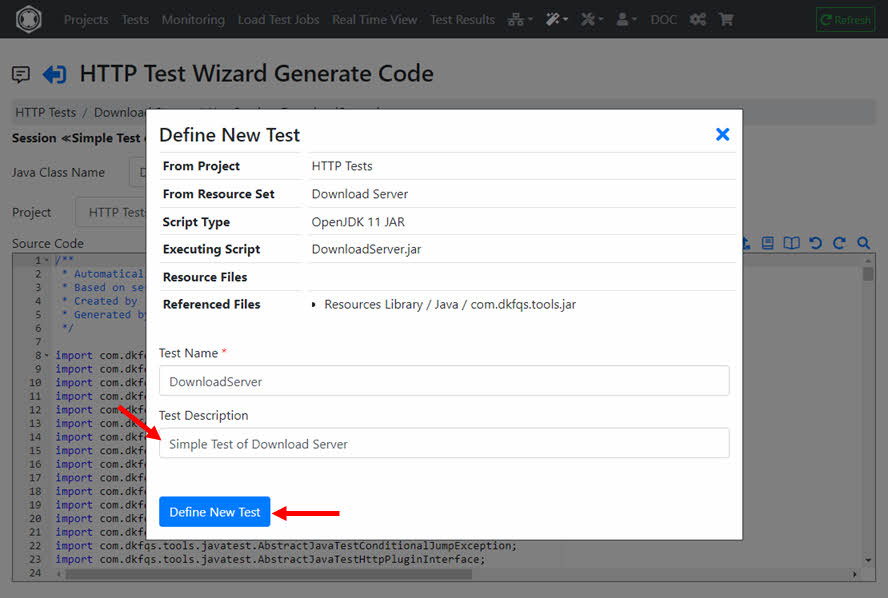

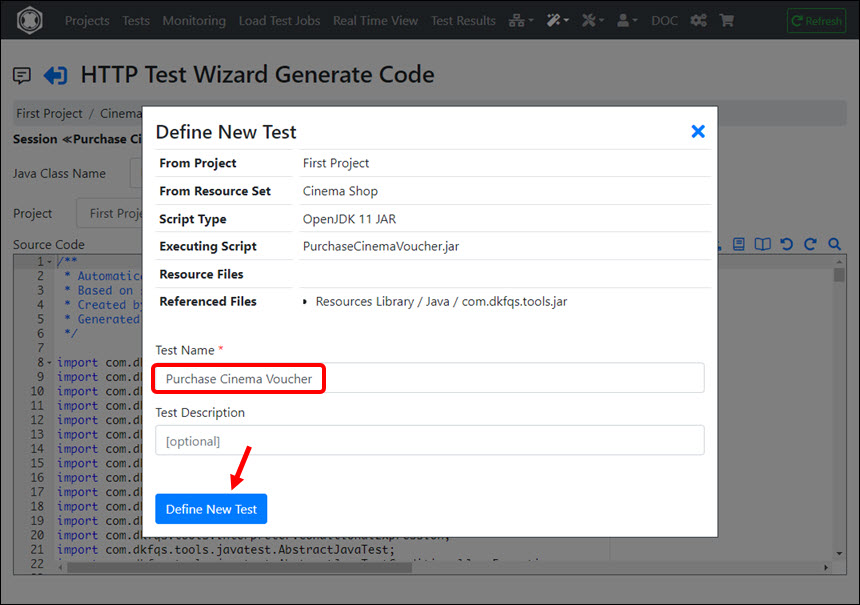

Enter the Test Description, and click ‘Define New Test’.

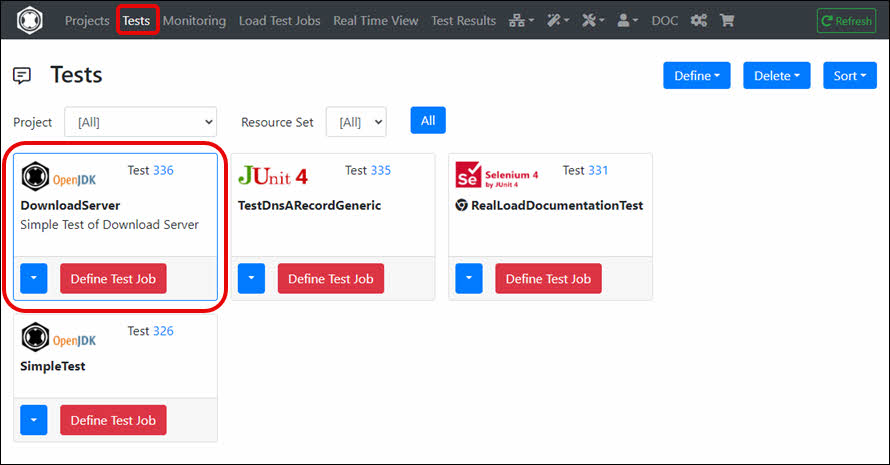

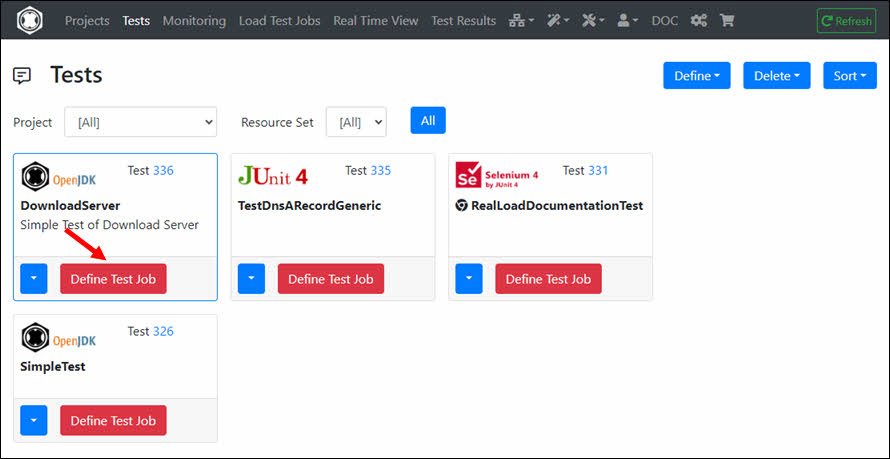

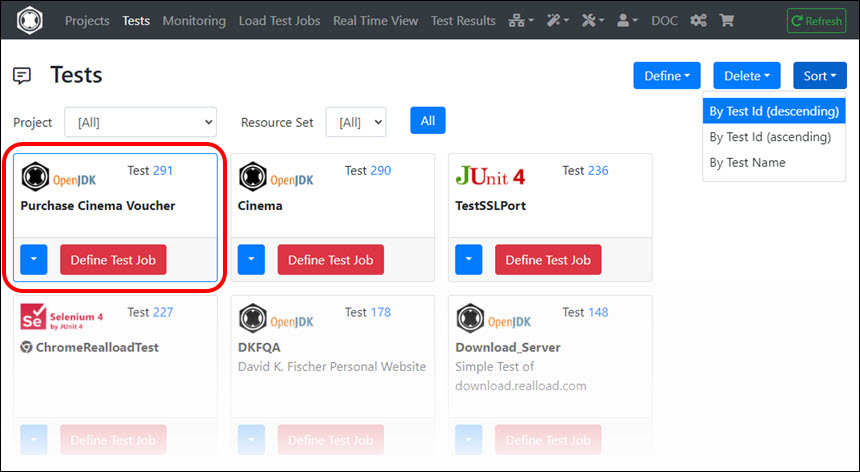

The RealLoad ‘Test’ is now defined. From here you can now create both a Load Test Job and a Monitoring Job.

Note: In case you (later) modify the session in the HTTP Test Wizard, you will have to generate and compile the source code again.

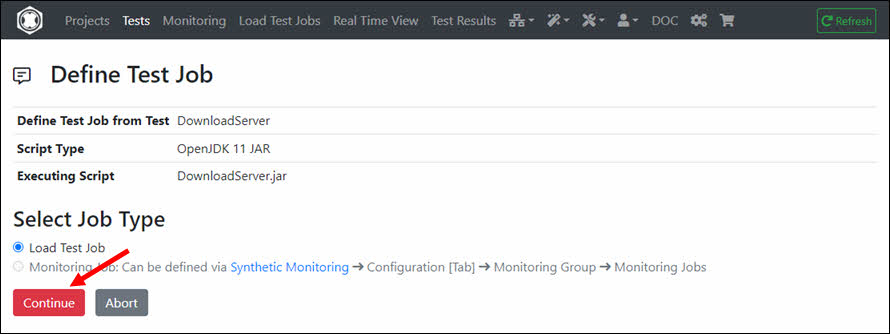

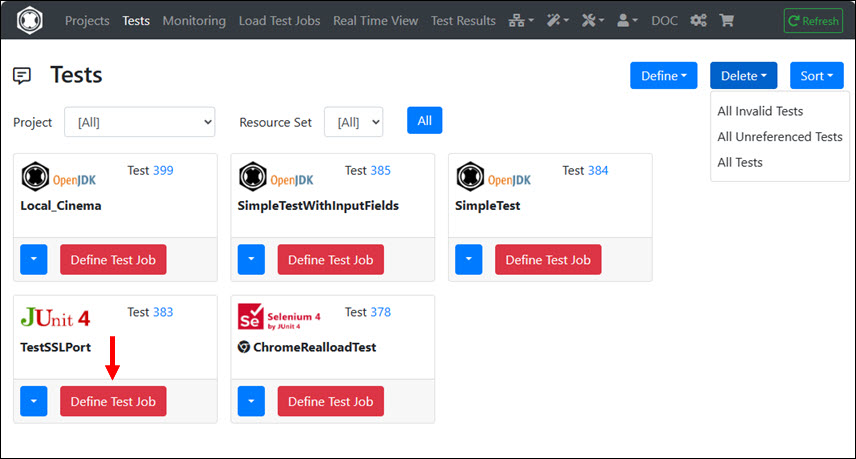

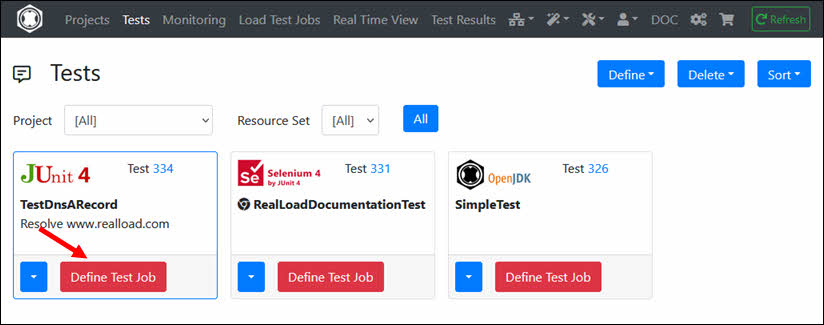

In the RealLoad ‘Test’ click ‘Define Test Job’.

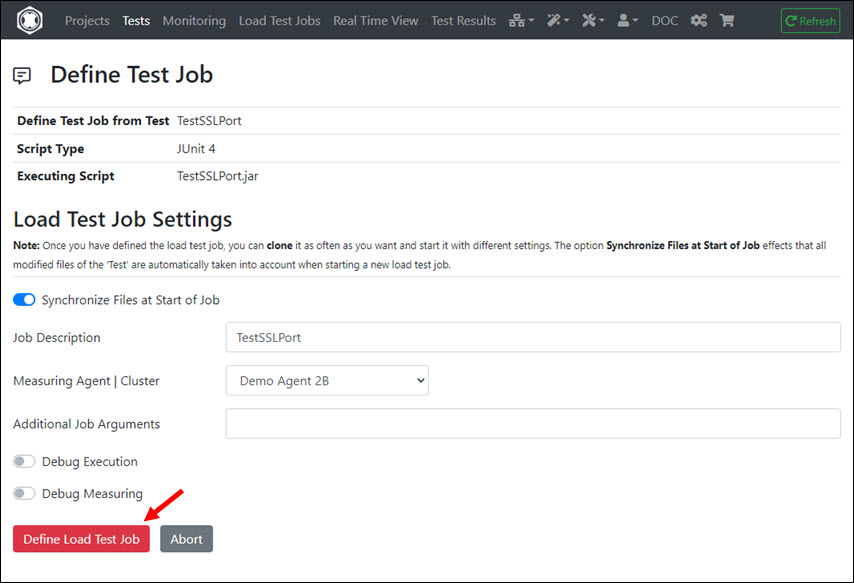

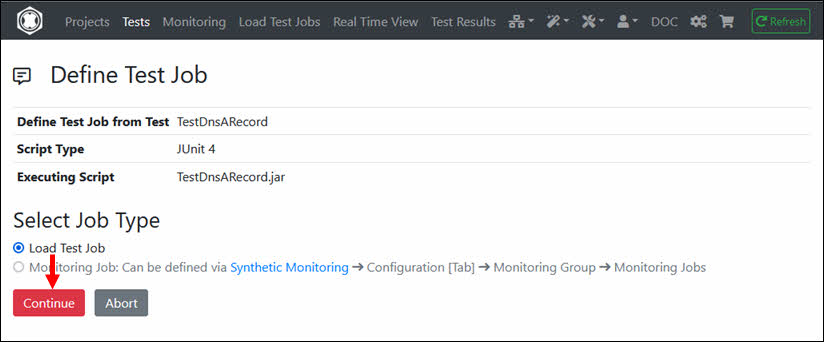

In the next menu click ‘Continue’ …

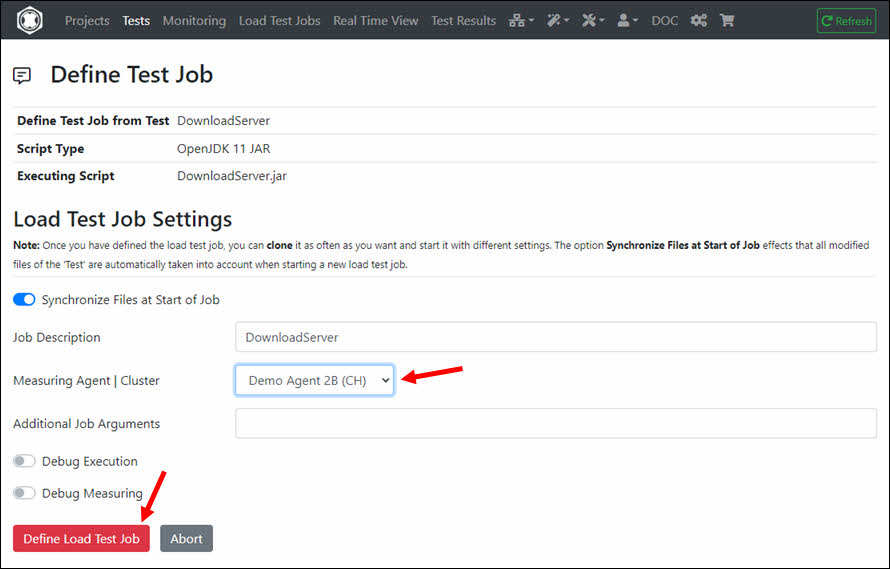

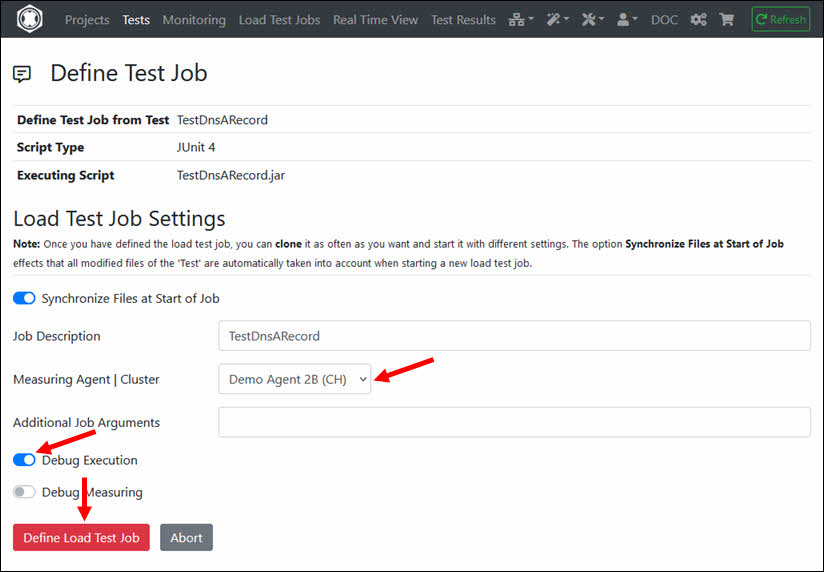

… And select the Measuring Agent on which the Load Test Job will be executed, then click ‘Define Load Test Job’.

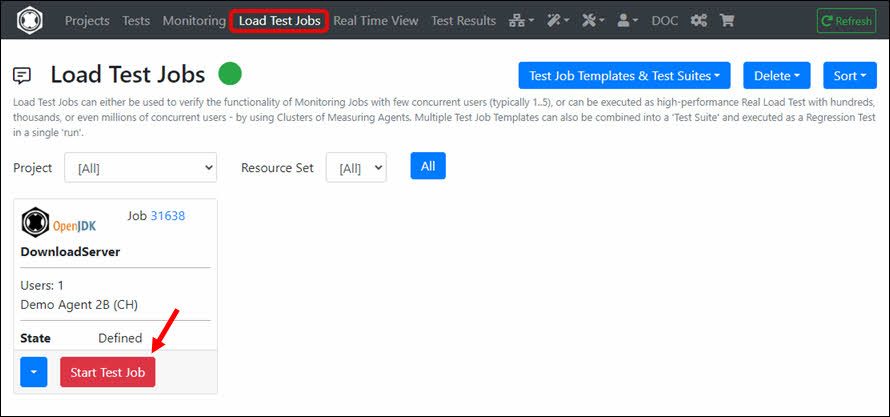

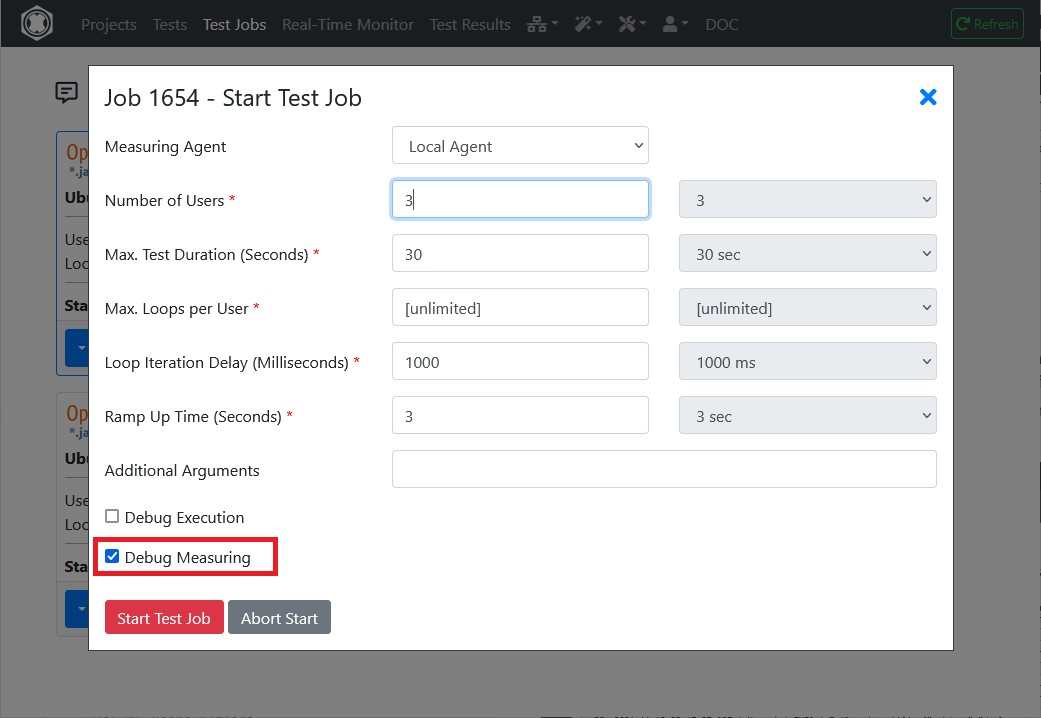

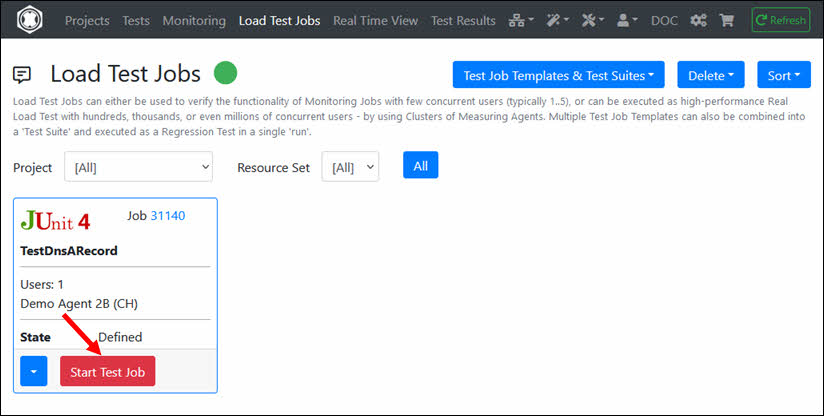

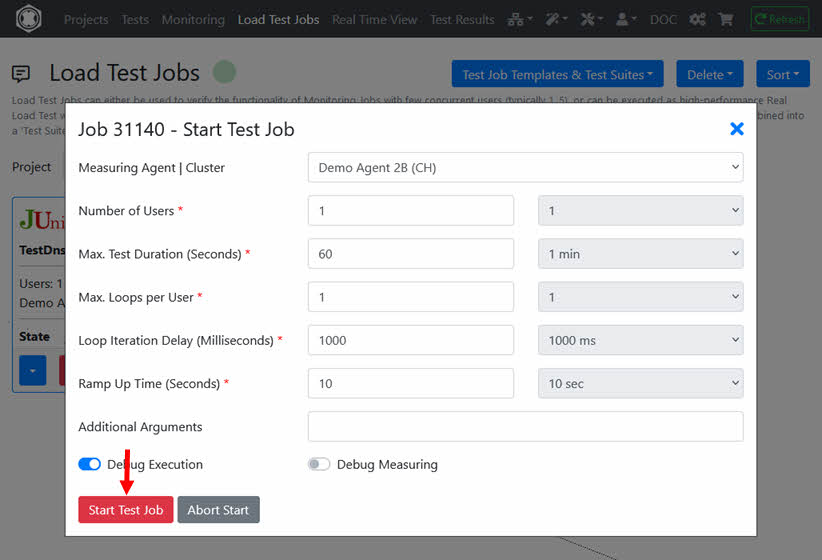

The Load Test Job is now defined. Click ‘Start Test Job’.

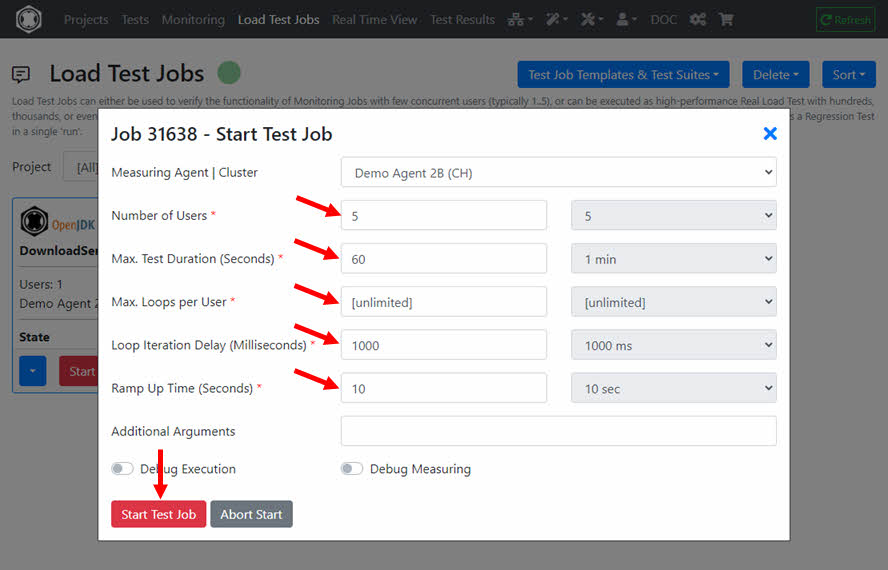

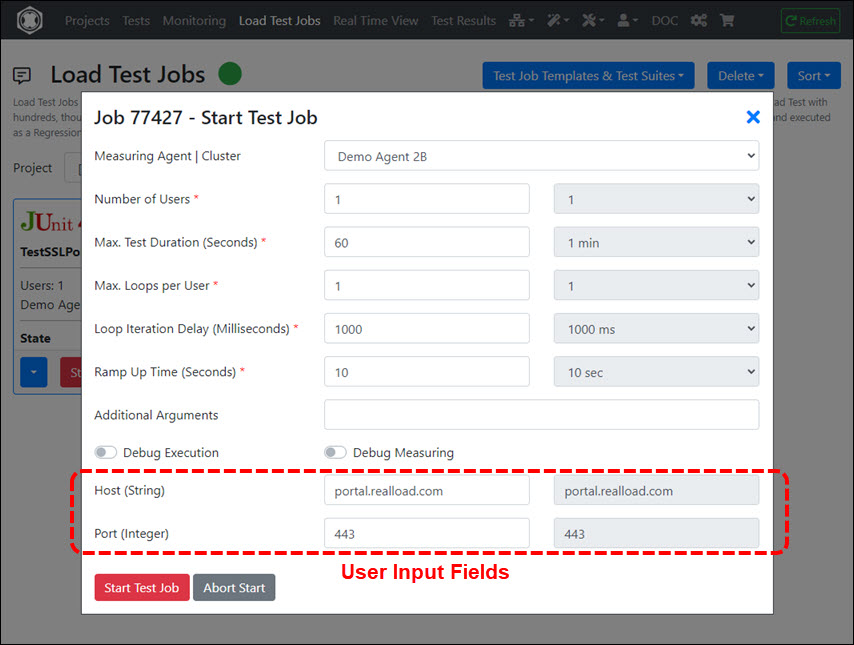

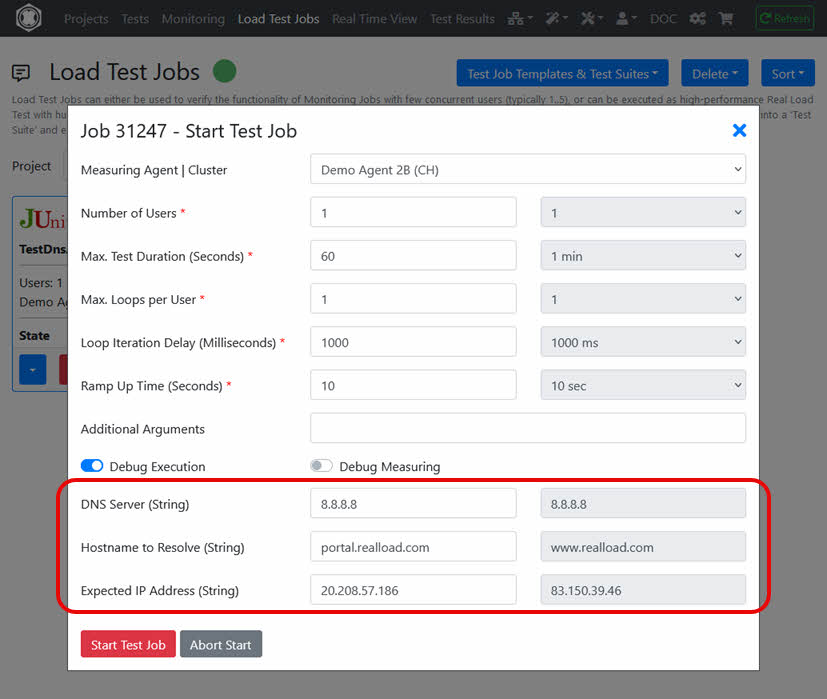

Enter the settings of the Load Test Job here and then click ‘Start Test Job’. We recommend the following settings for the first load test run:

A free account allows you to execute Load Tests with the following limitations ¹ :

¹ = Does not apply for Load Test Jobs executed on Measuring Agents launched by Cloud Credits: in this case there are no such restrictions.

You can add a RealLoad license to your account at any time by purchasing it in our shop at https://shop.realload.com.

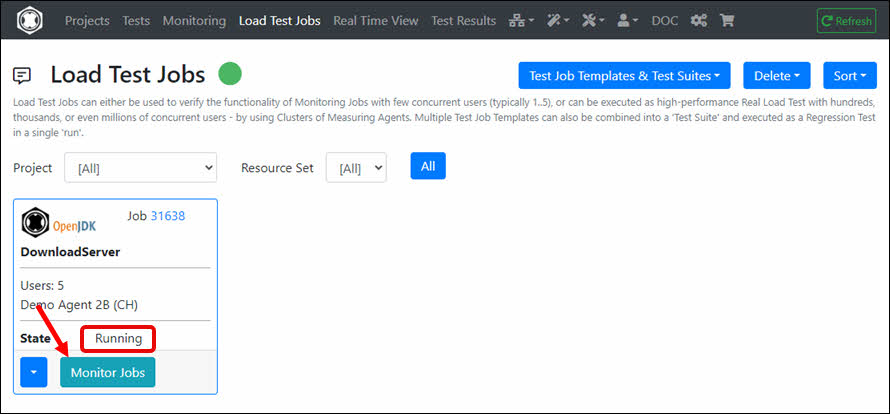

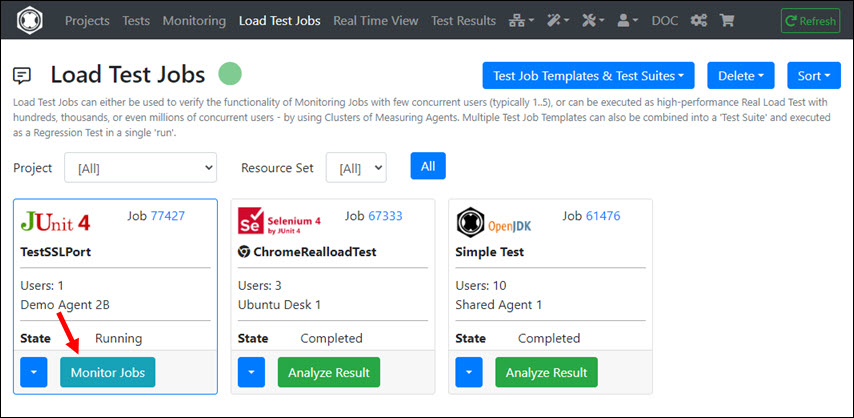

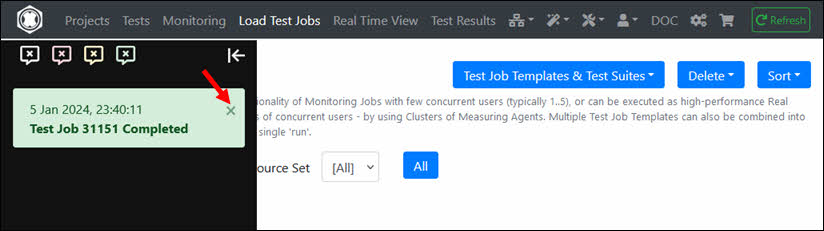

After a few seconds the Load Test Job is in the state ‘Running’. Click ‘Monitor Jobs’.

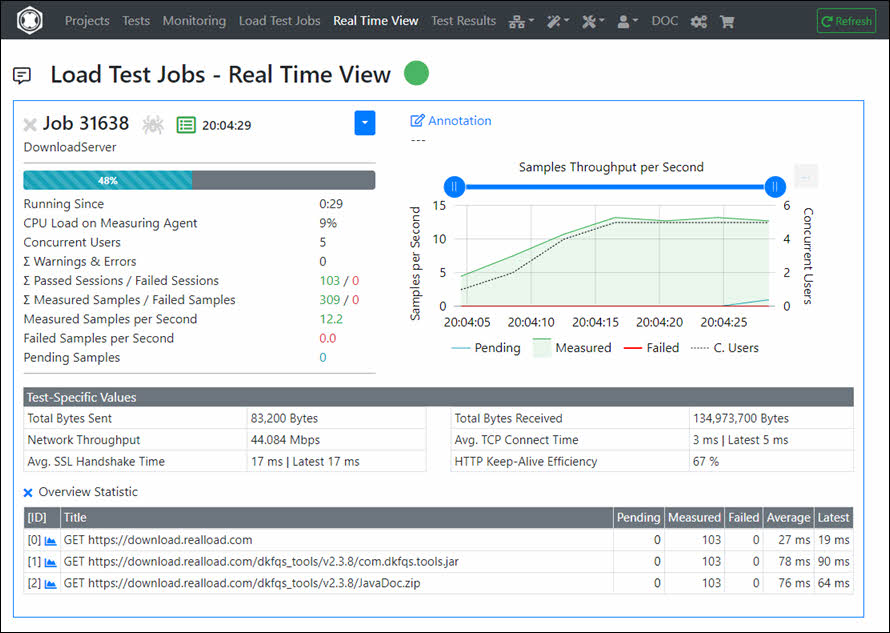

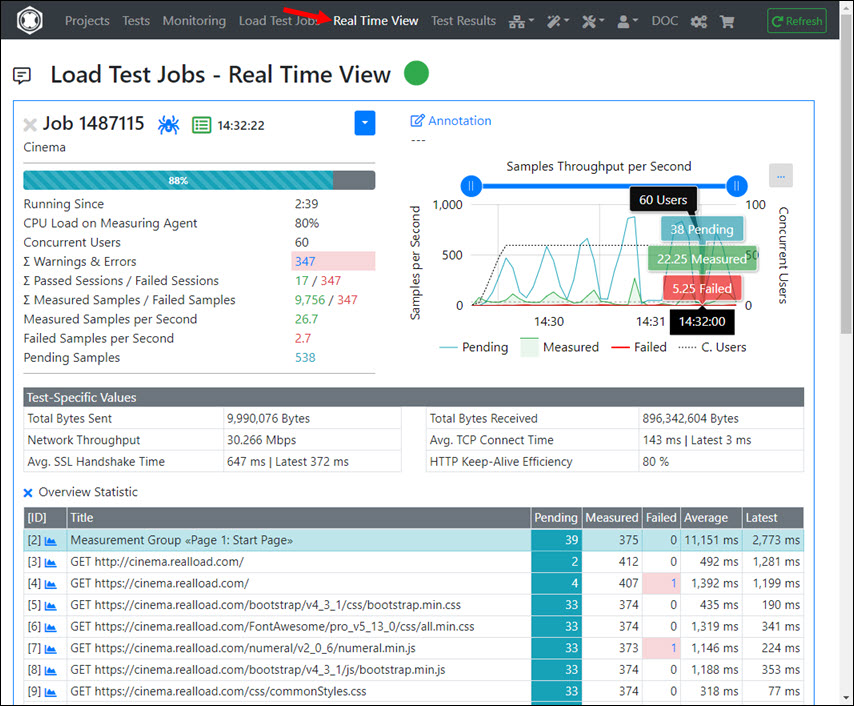

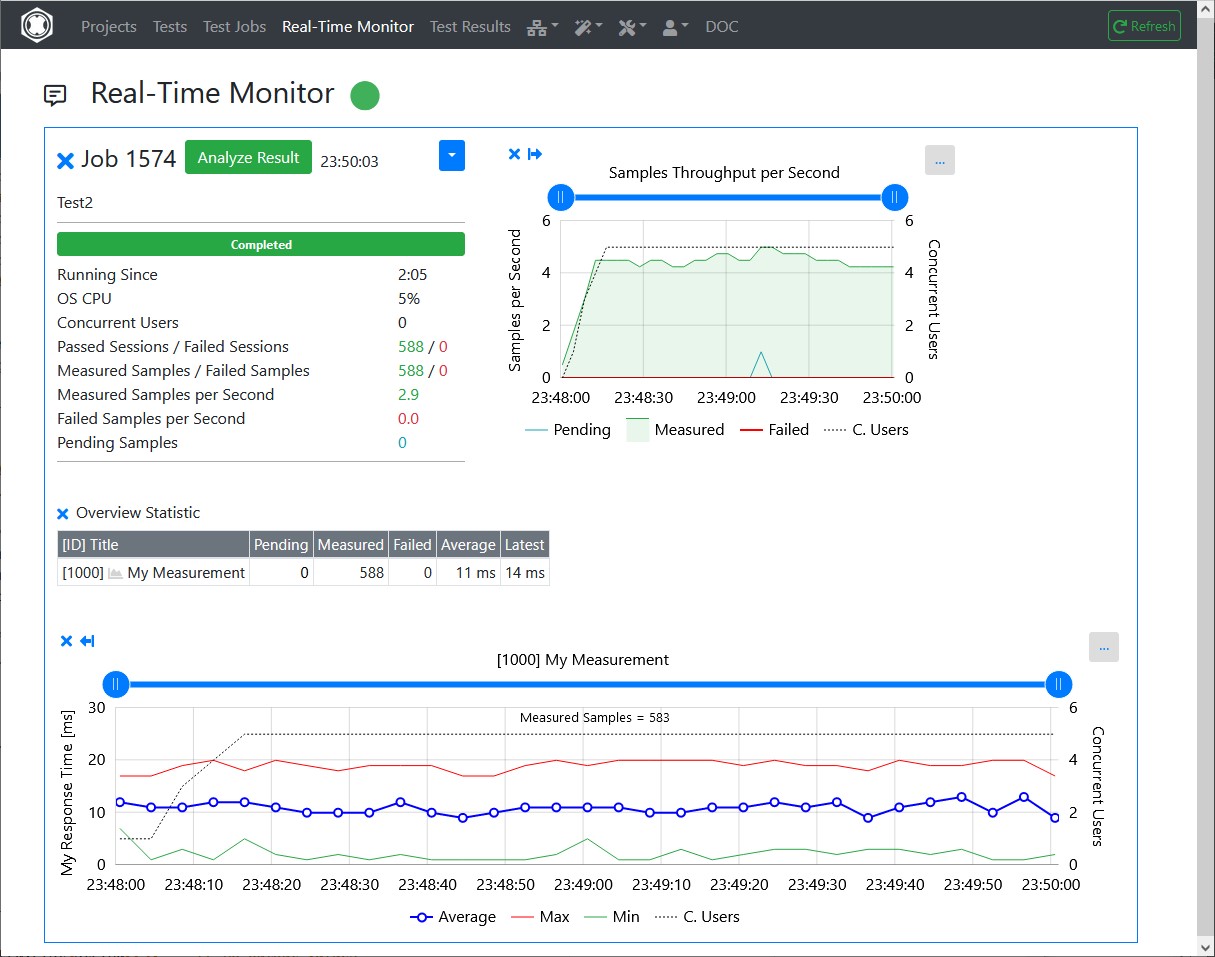

The real-time data of the running Load Test Job is now displayed.

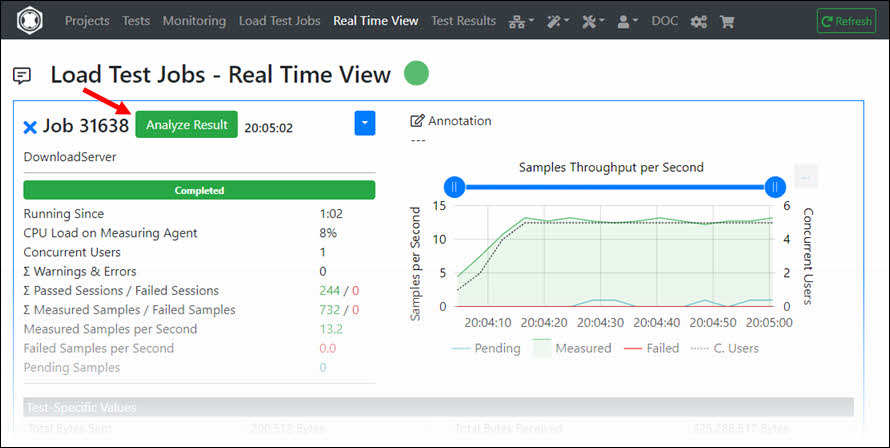

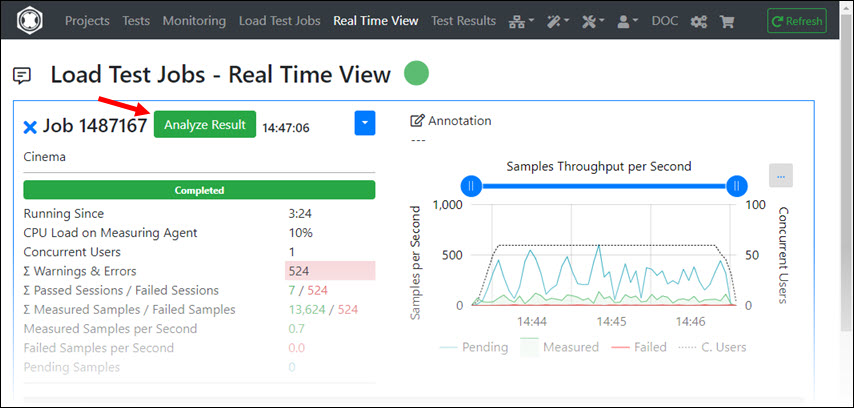

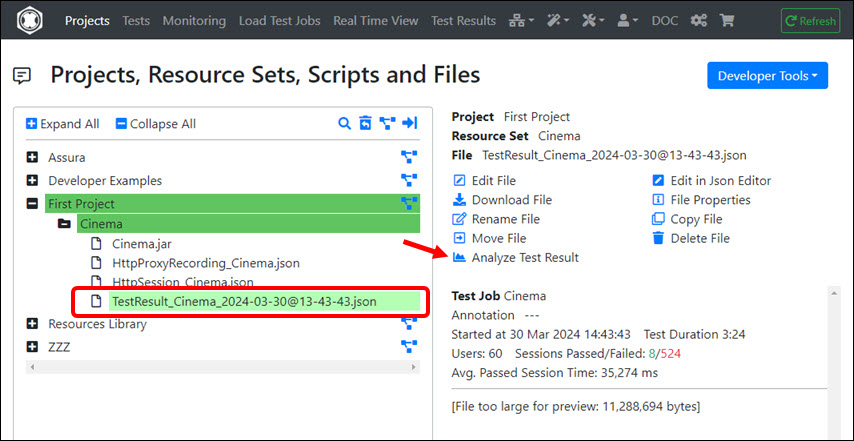

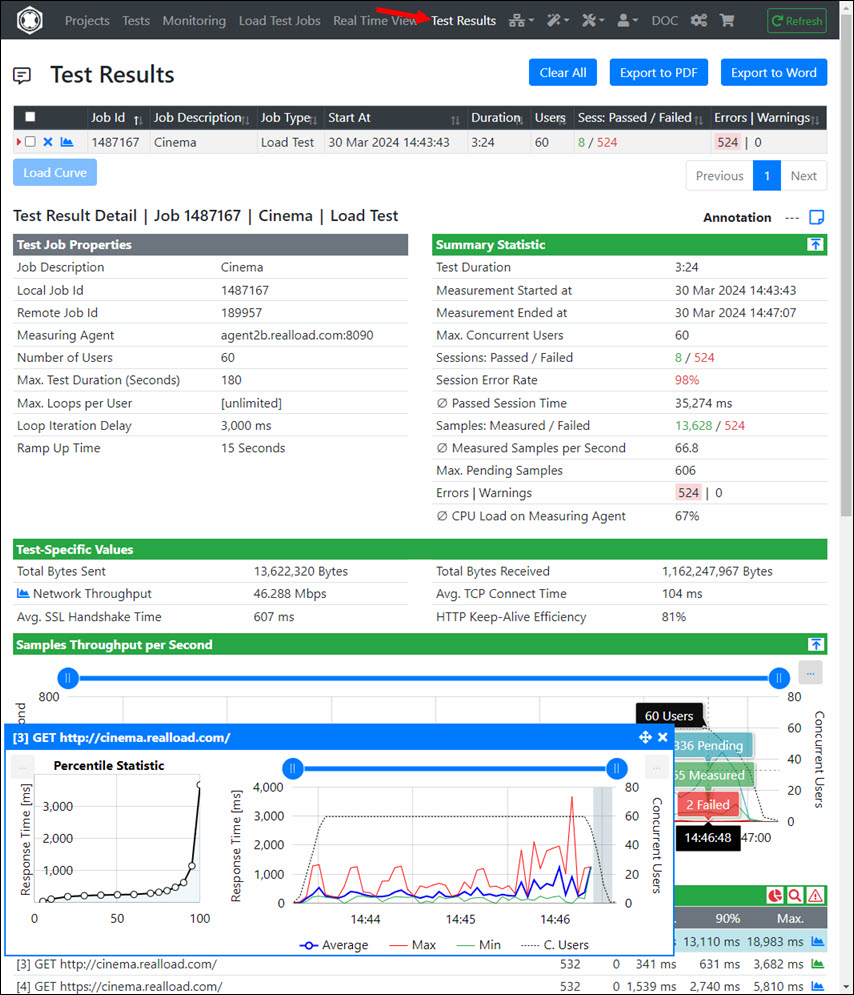

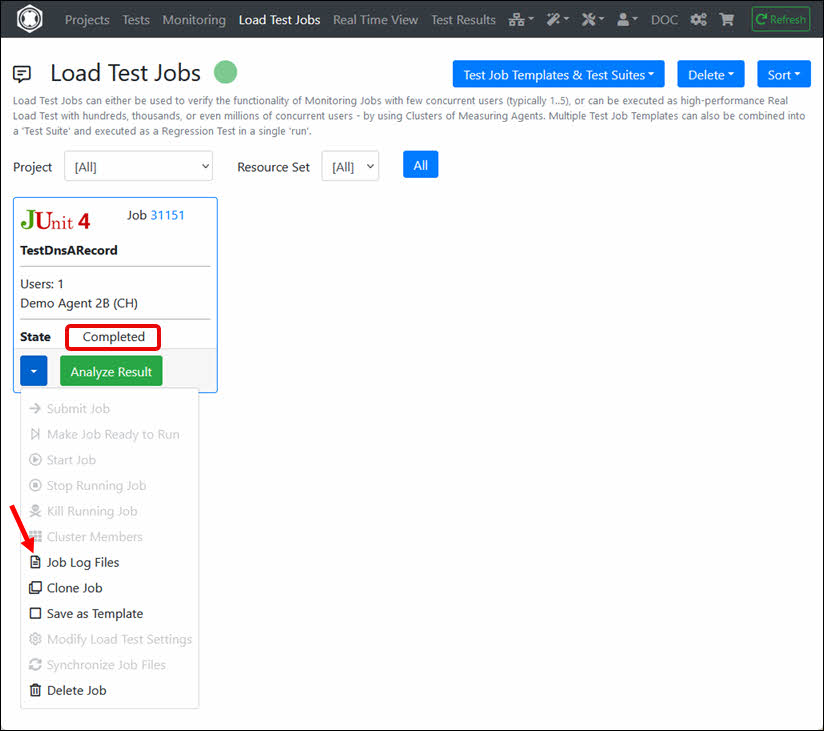

Click on ‘Analyze Result’ after the Load Test Job is completed.

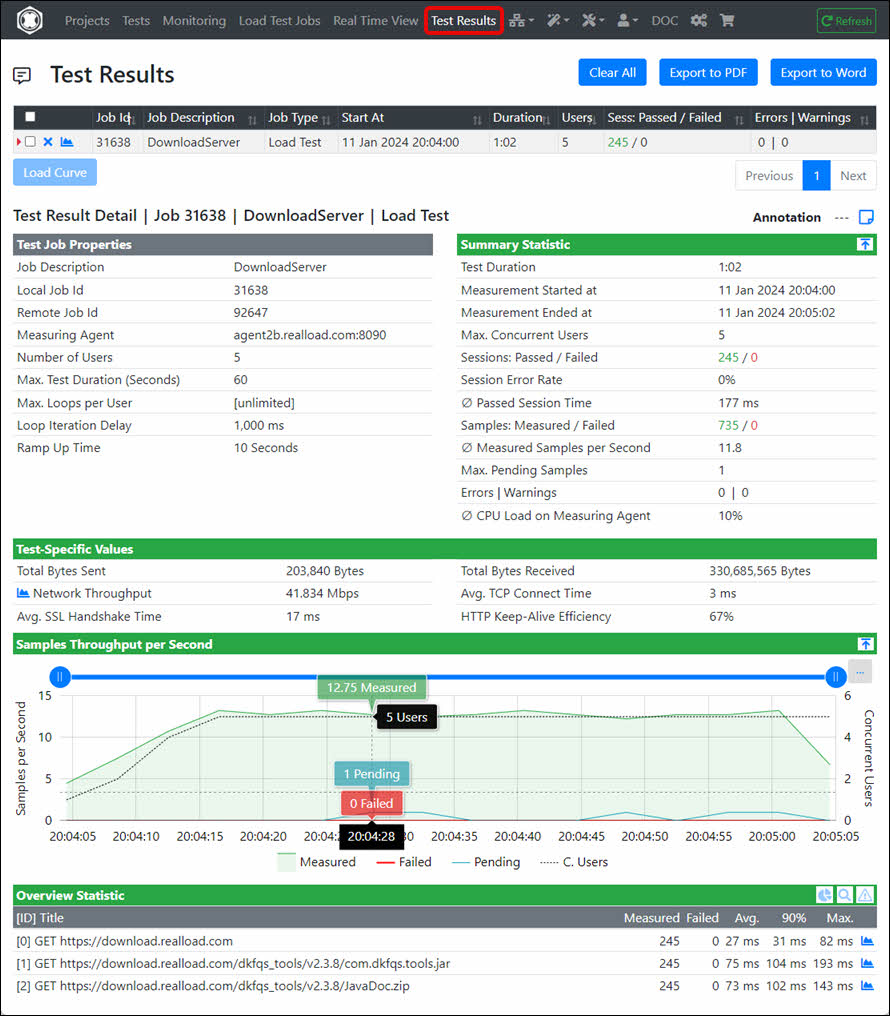

The Load Test Job result is then displayed.

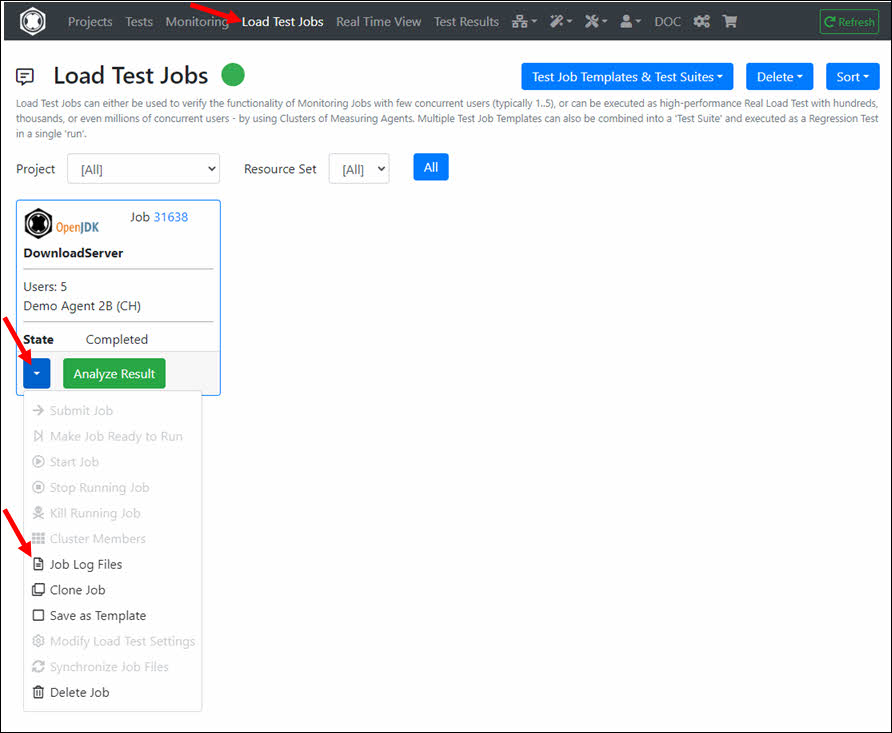

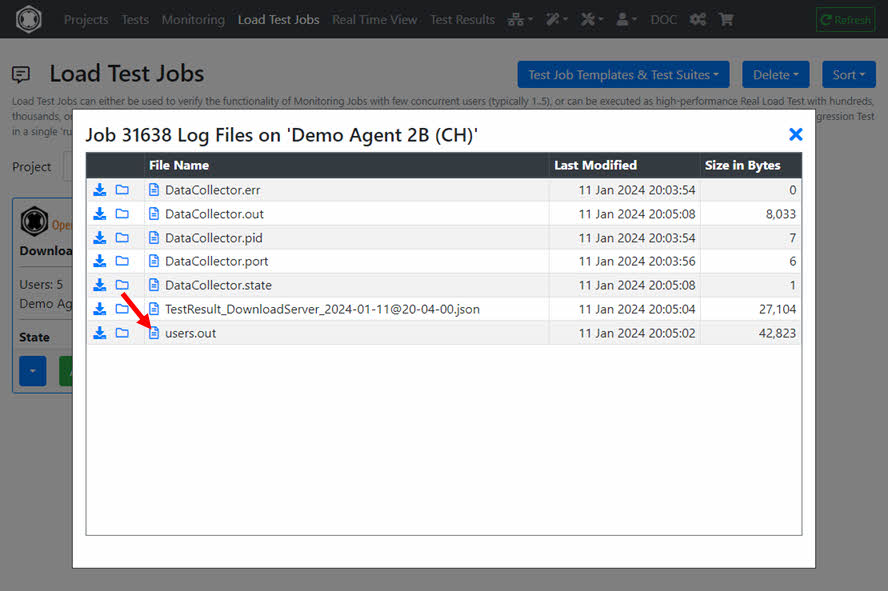

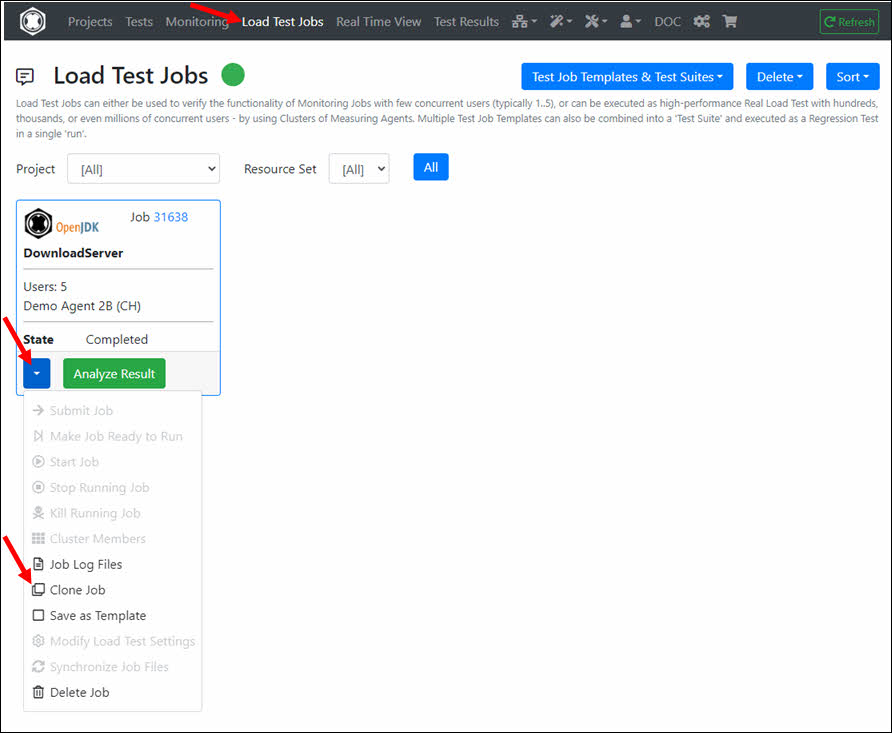

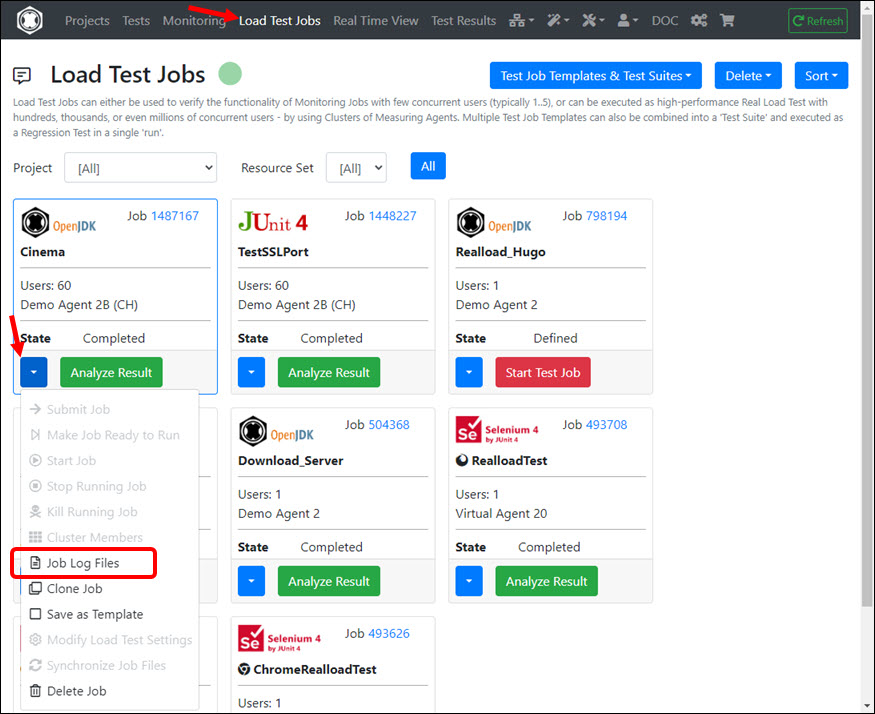

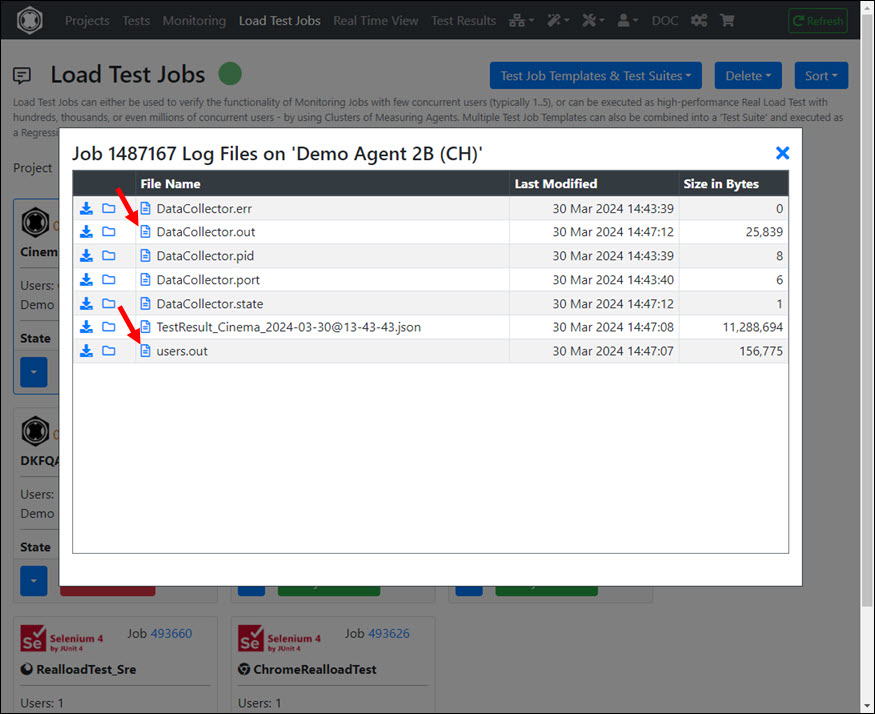

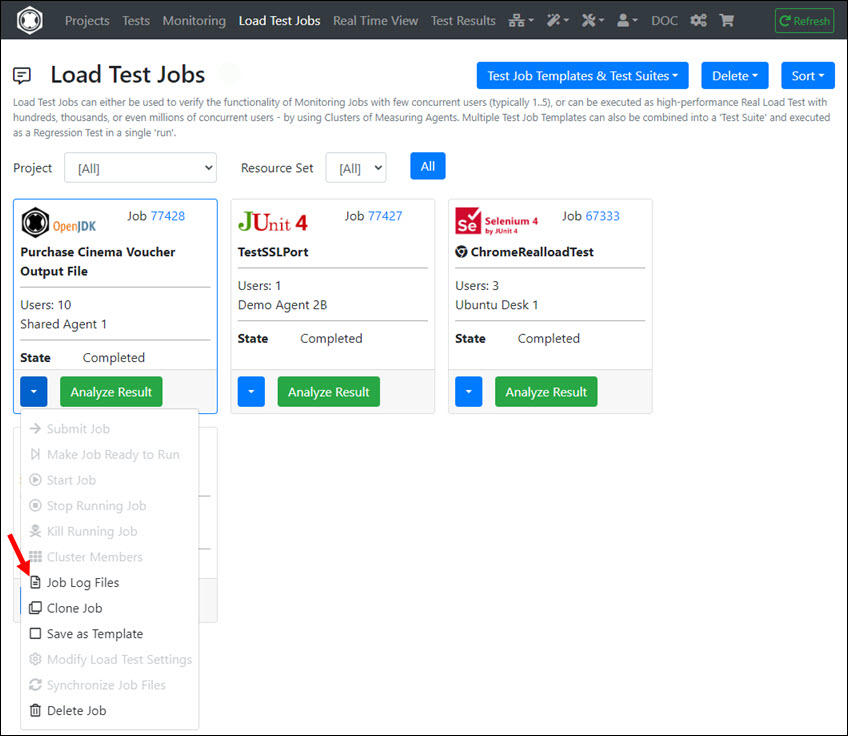

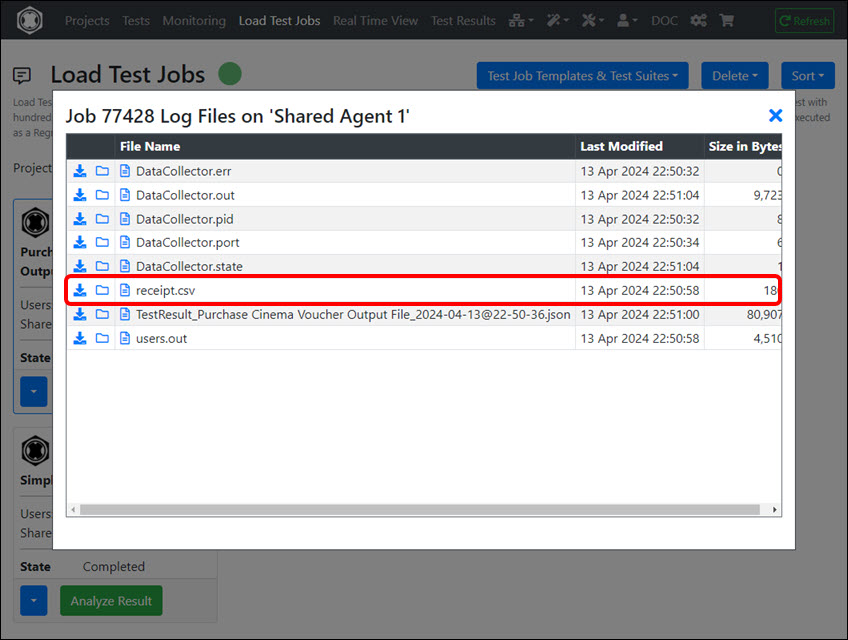

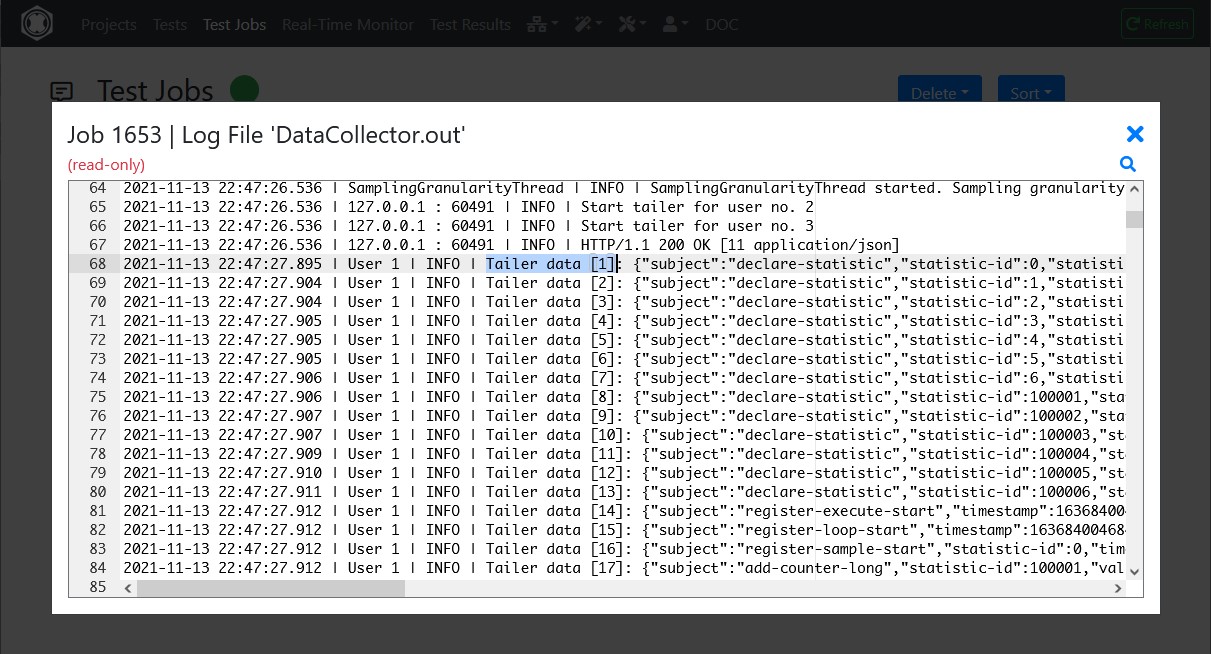

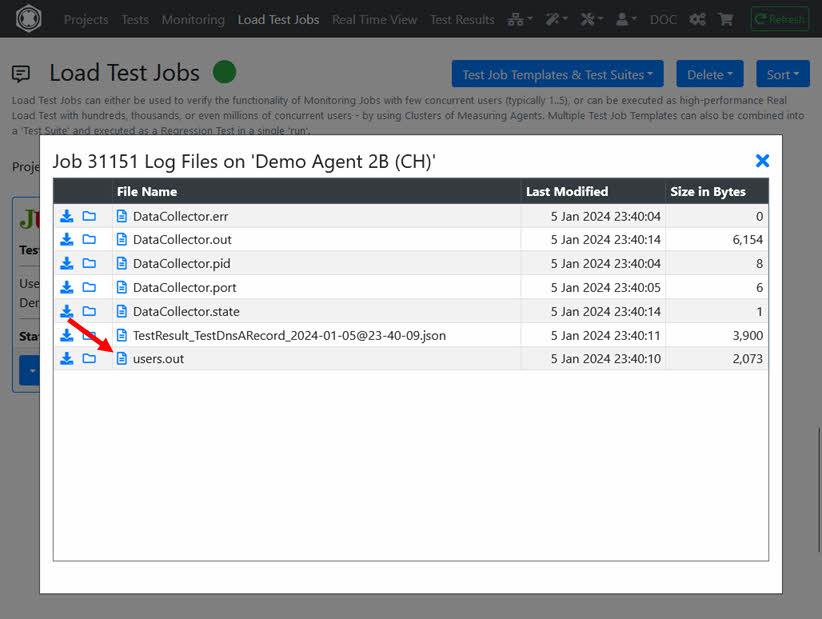

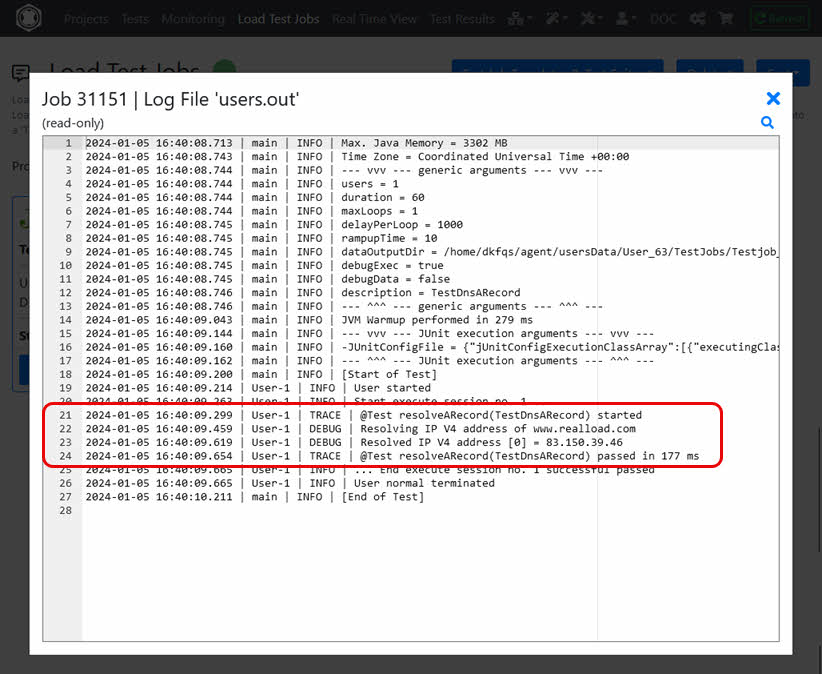

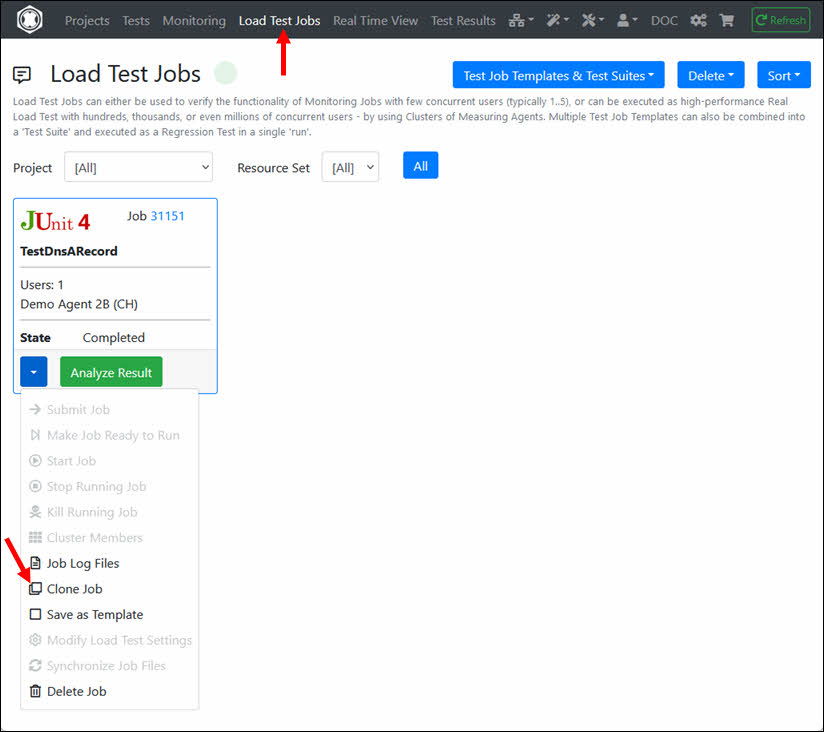

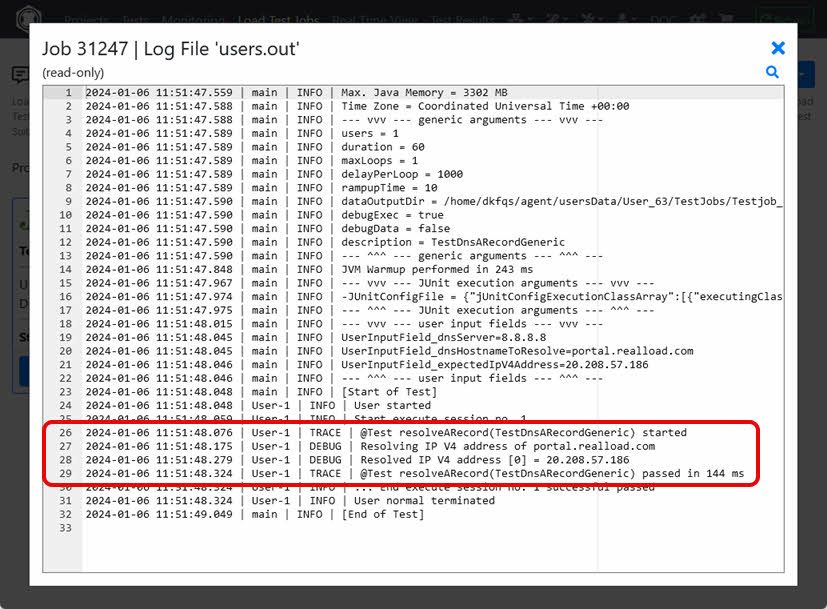

To view the Job Output Files, navigate to ‘Load Test Jobs’ and click ‘Job Log Files’ in the dropdown of the Job.

Select the file users.out which contains the Log Output of the Job.

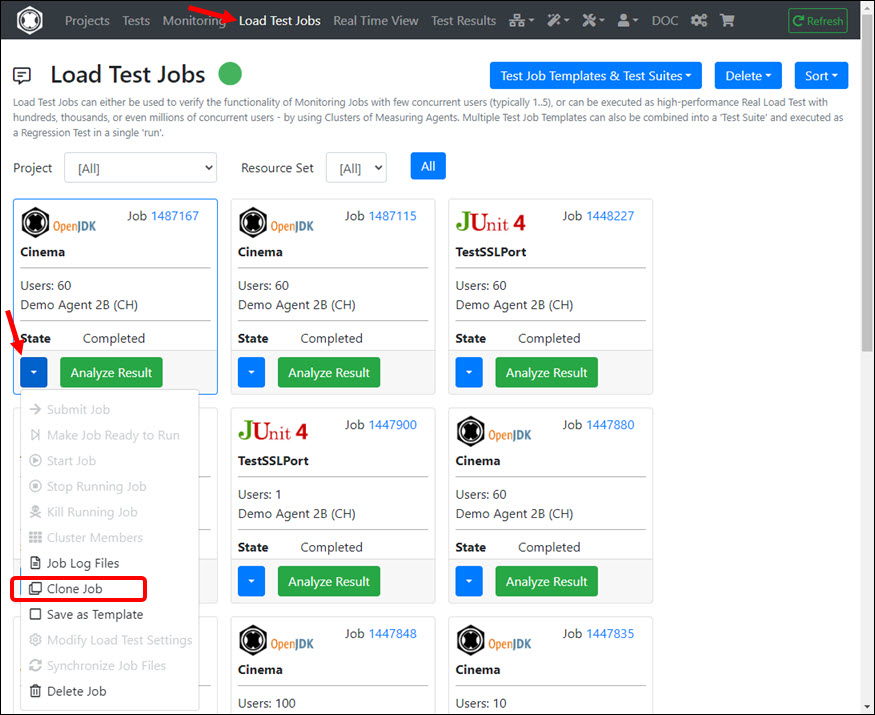

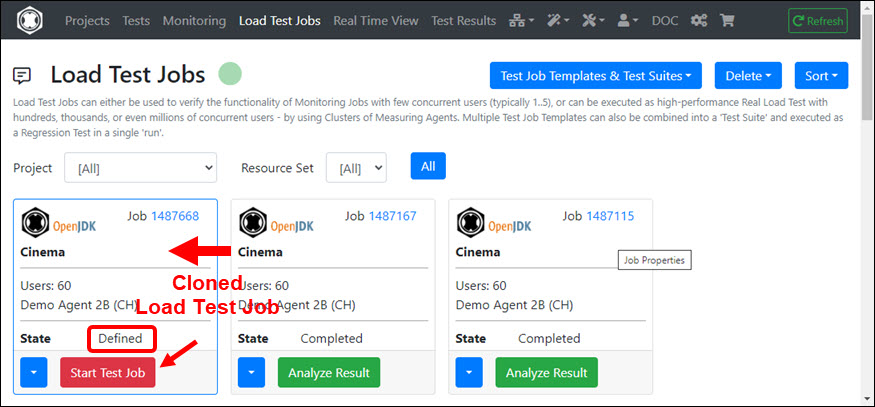

If you would like to run the Load Test Job again with the same or changed settings, click on ‘Clone Job’ in the Job dropdown and then start the cloned job.

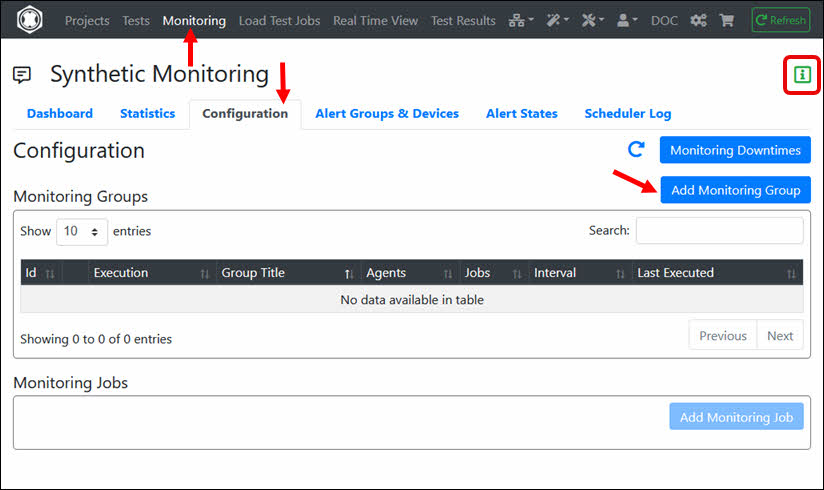

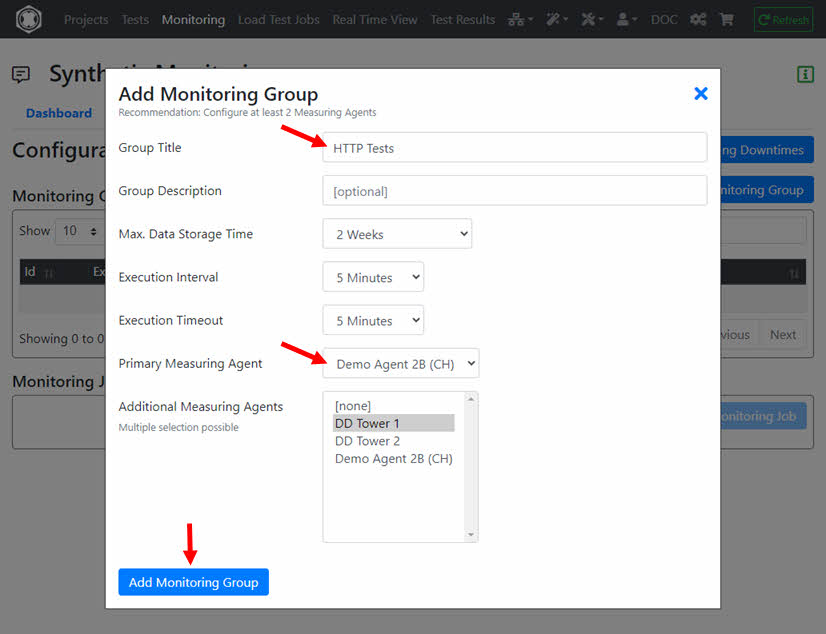

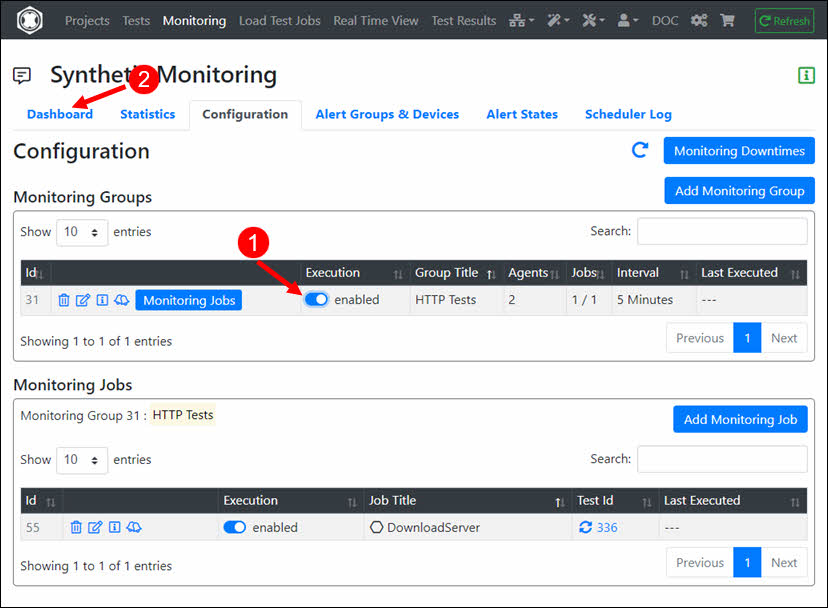

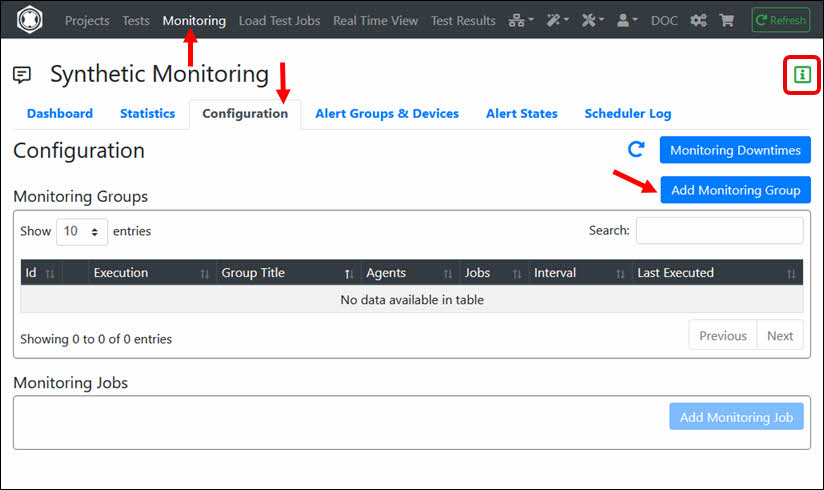

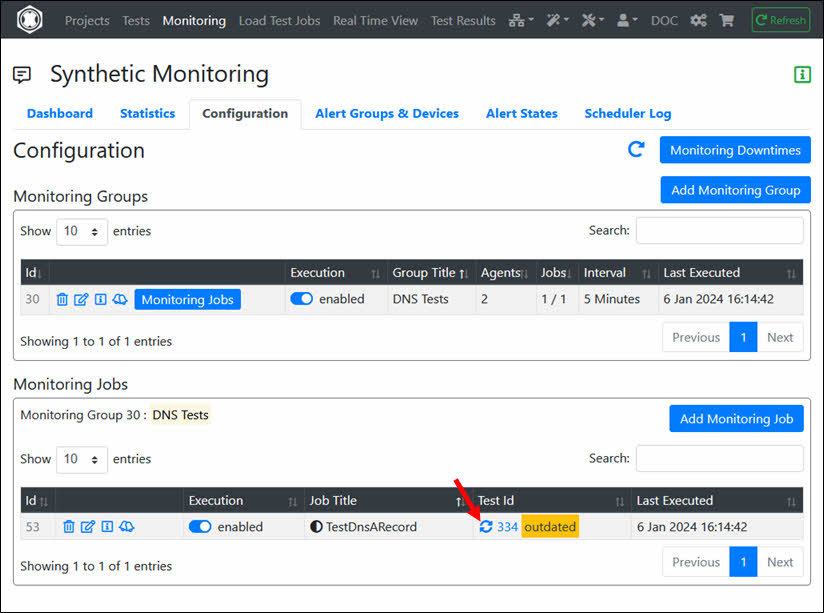

If this is your first Monitoring Job, you must first create a Monitoring Group. Navigate to Monitoring, click the ‘Configuration’ tab and then click ‘Add Monitoring Group’. Help is also available for configuring Synthetic Monitoring.

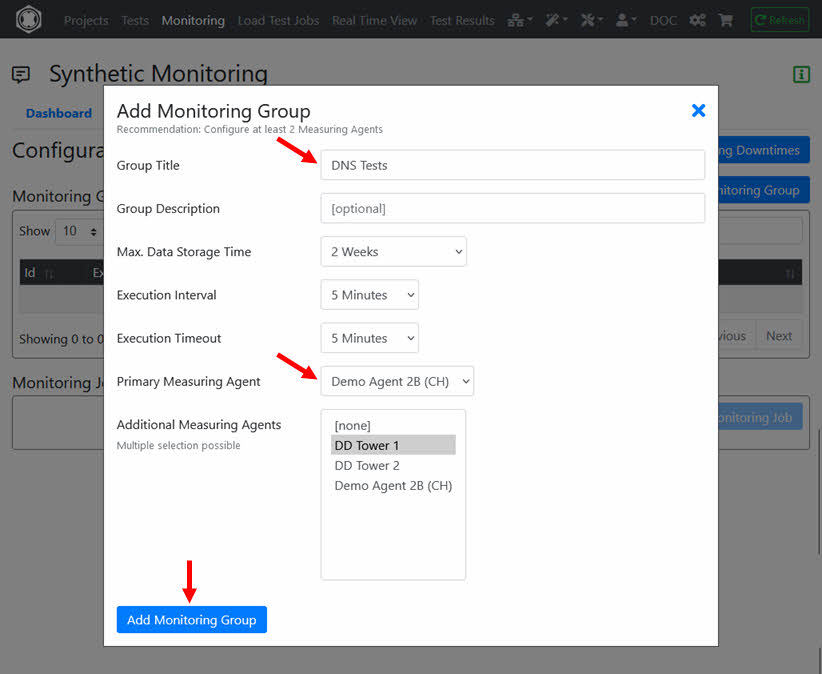

Enter the ‘Group Title’ and select at least one ‘Measuring Agent’ on which the Monitoring Job(s) will be executed. Then click ‘Add Monitoring Group’.

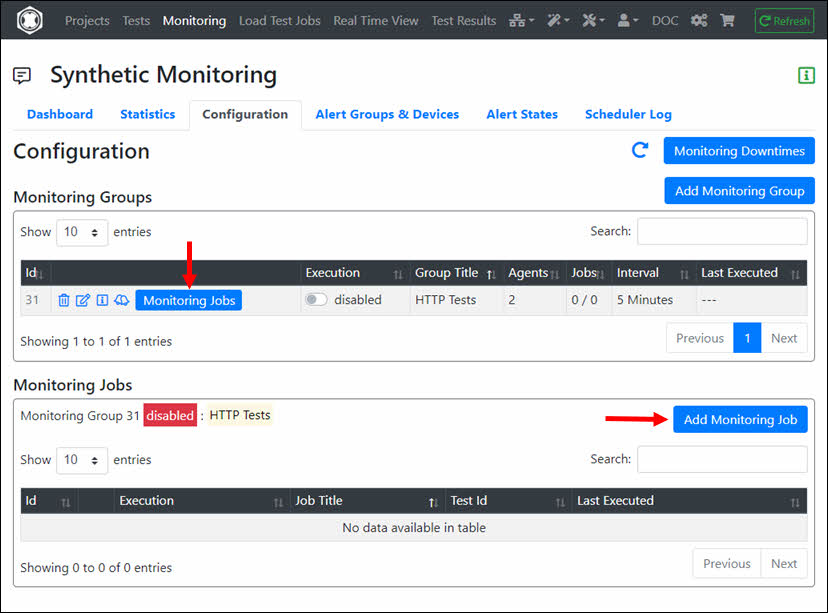

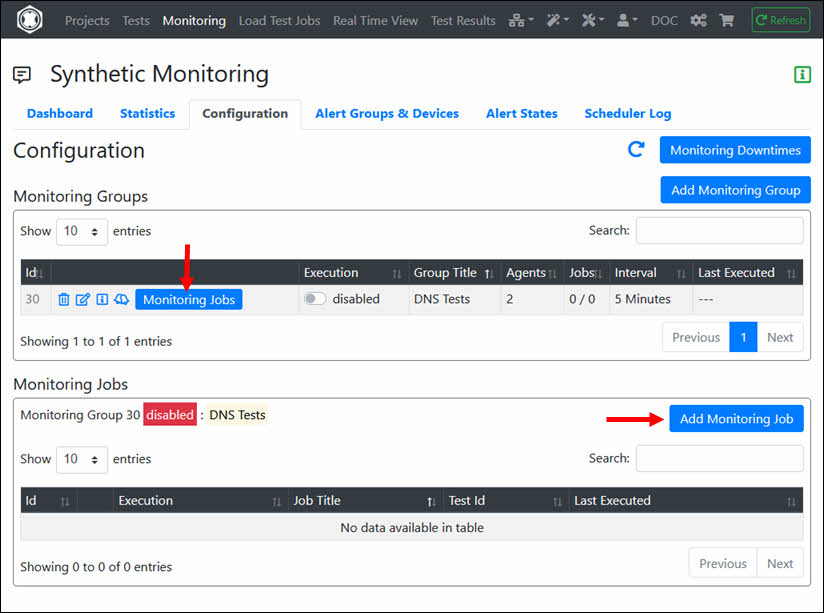

In the ‘Monitoring Group’ click ‘Monitoring Jobs’ and then click ‘Add Monitoring Job’.

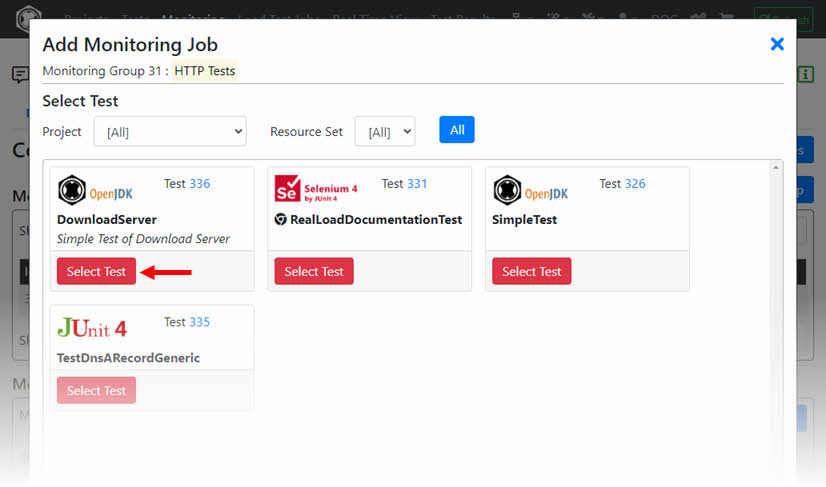

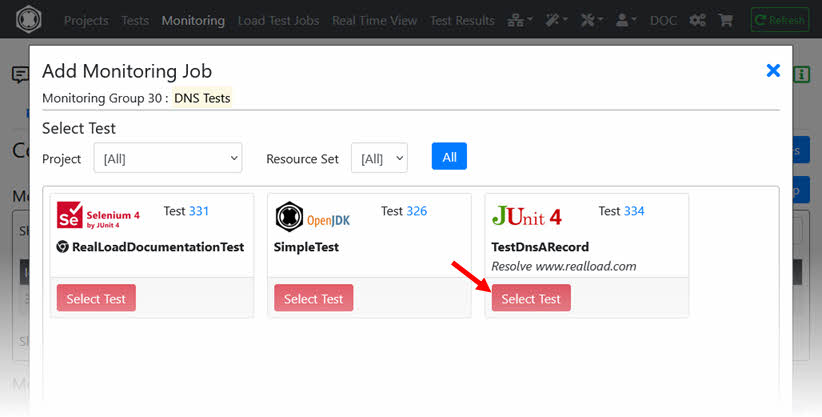

Select the ‘Test’ of the Monitoring Job.

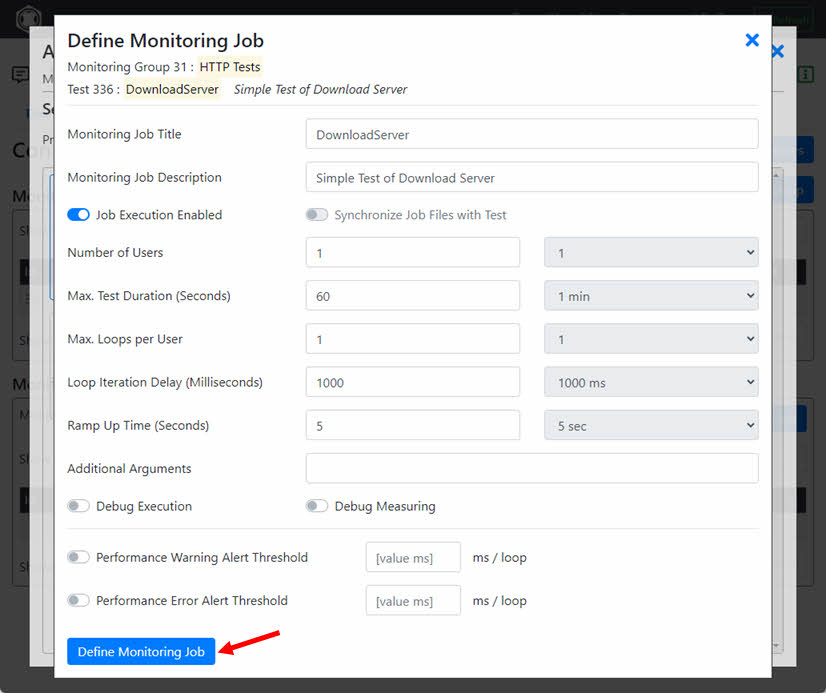

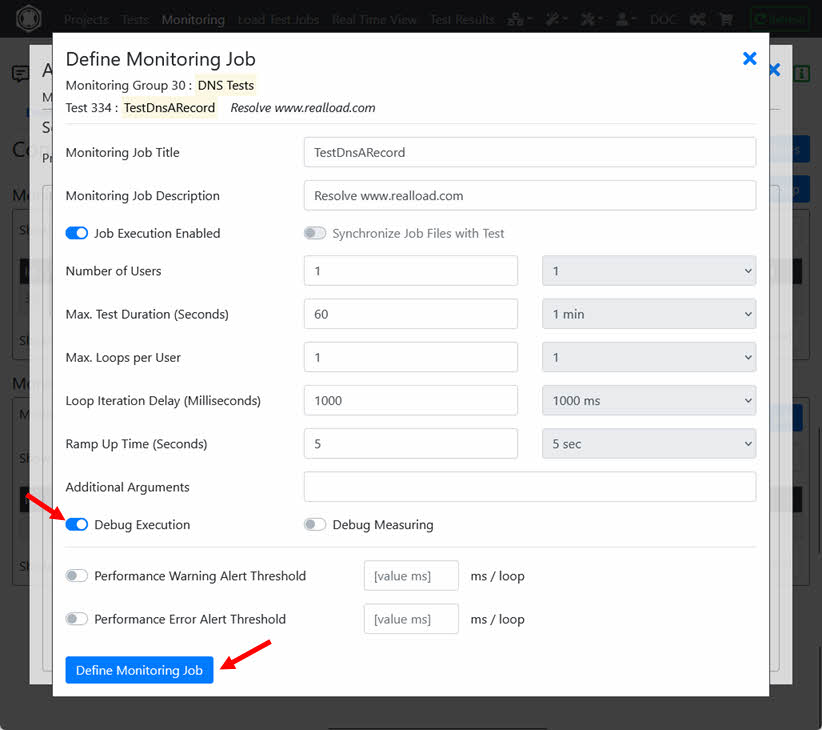

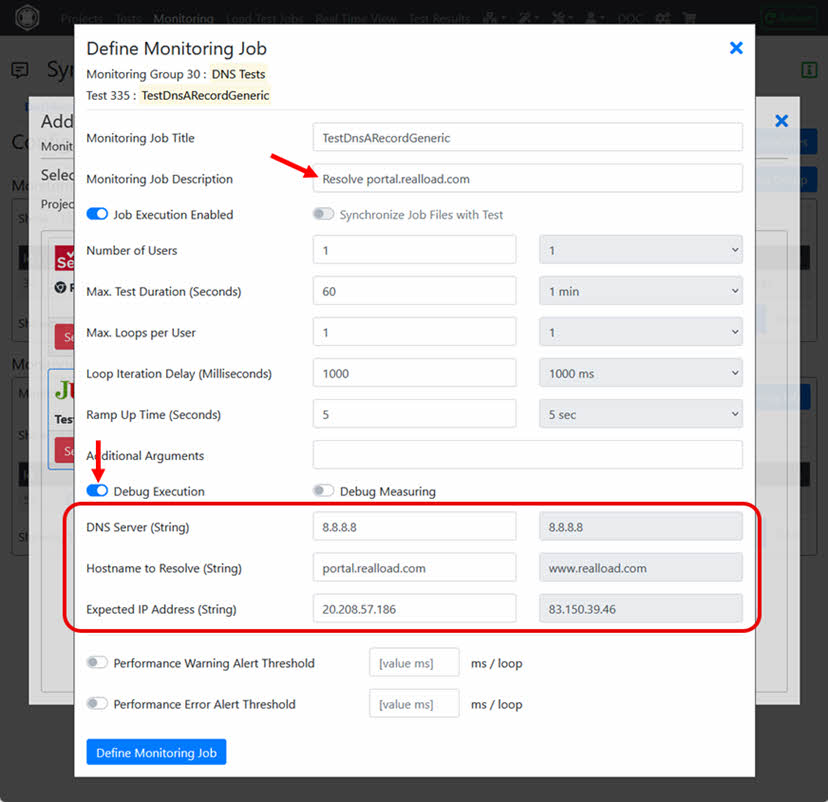

Click ‘Define Monitoring Job’.

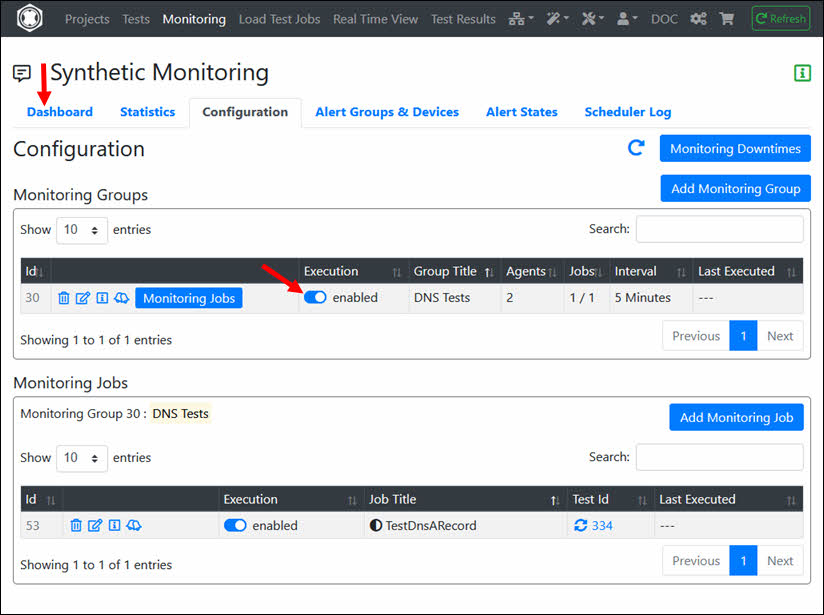

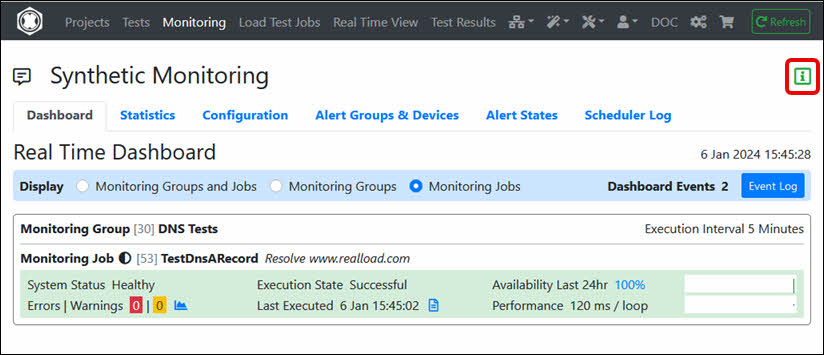

Enable the execution of the Monitoring Group and navigate to ‘Dashboard’.

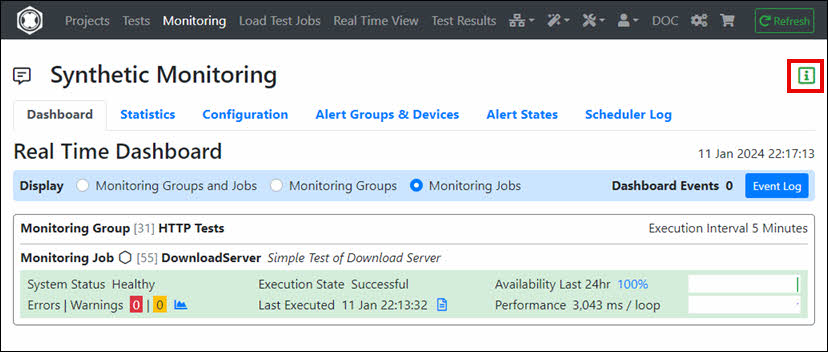

The Monitoring Job is now defined and will be executed periodically. For additional help configuring monitoring (e.g. adding ‘Alert Devices’), see Monitoring Help.

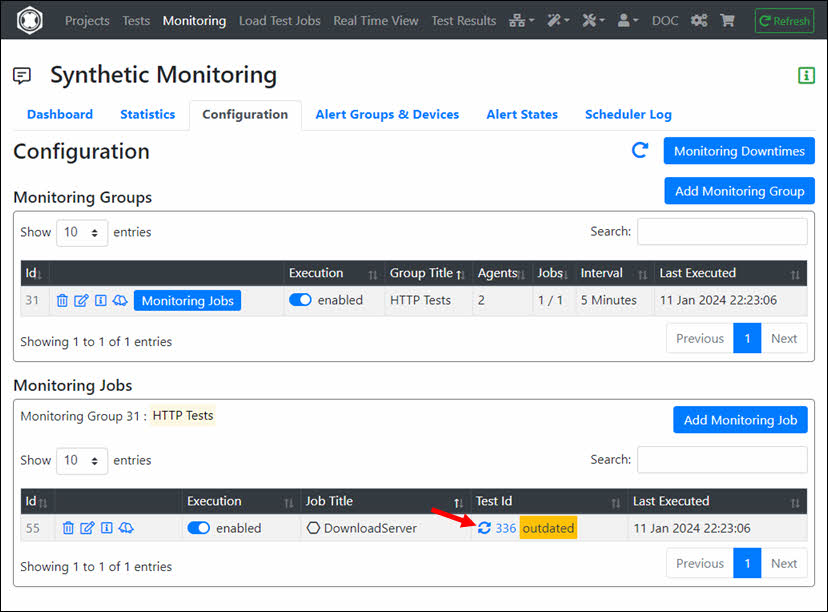

Tip: If you modify the RealLoad ‘Test’, the corresponding Monitoring Job is not automatically updated to avoid unexpected corruption of the Monitoring Job. After you have verified the modified ‘Test’ by running a small Load Test Job, you must manually update the Monitoring Job.

Done, congrats, you’ve run your first test with RealLoad.

As you have seen, a RealLoad Test can be run as both a Load Test Job and a Monitoring Job.

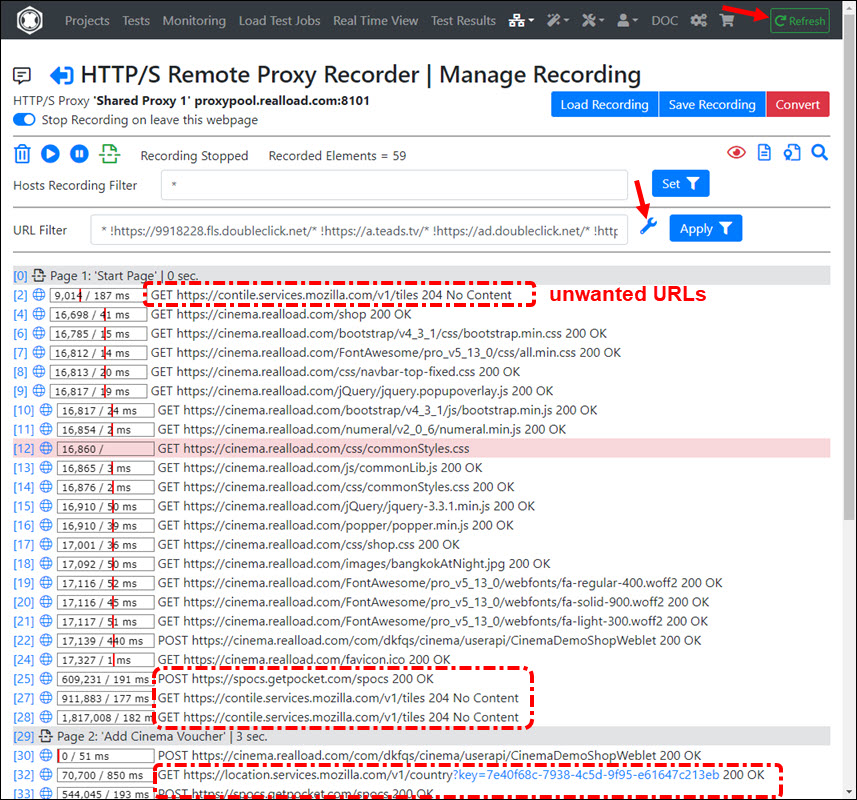

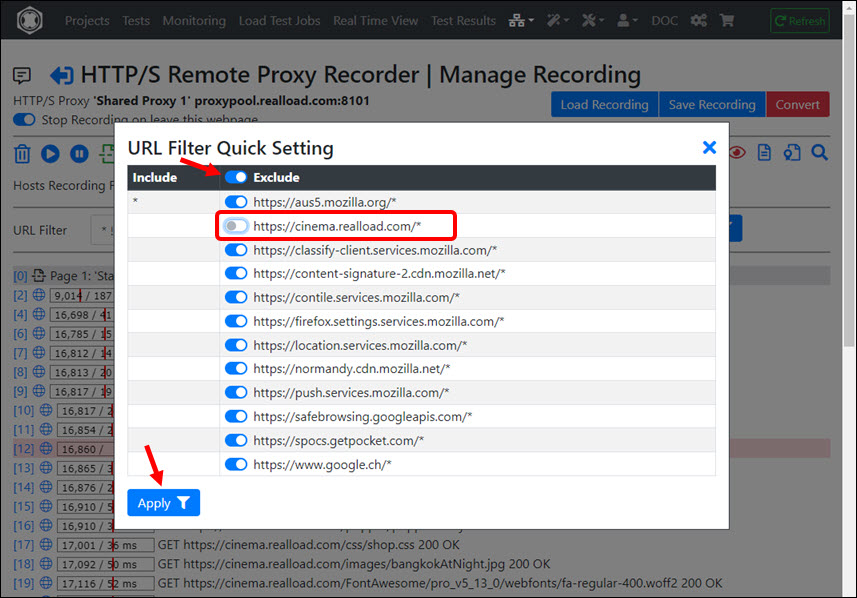

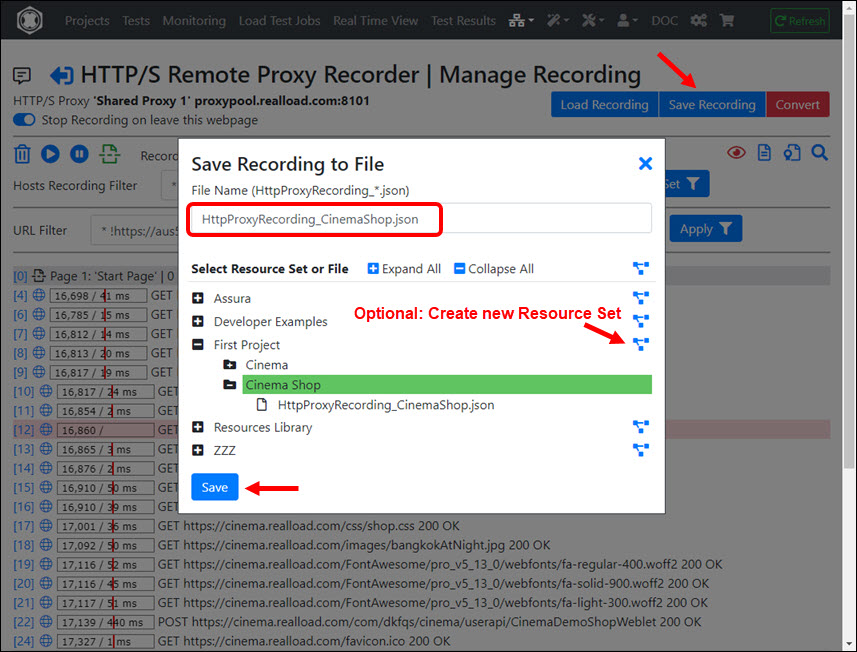

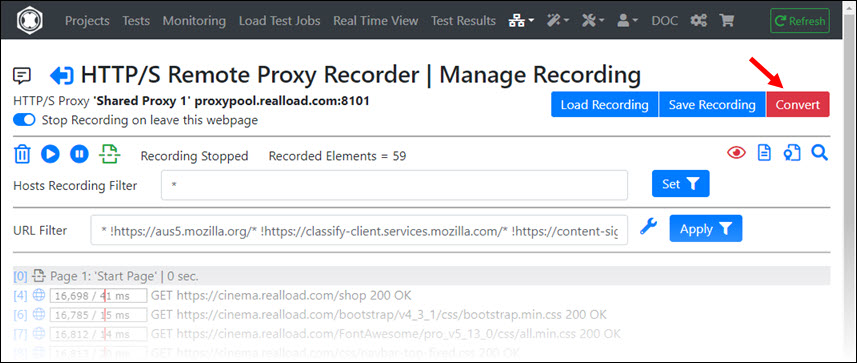

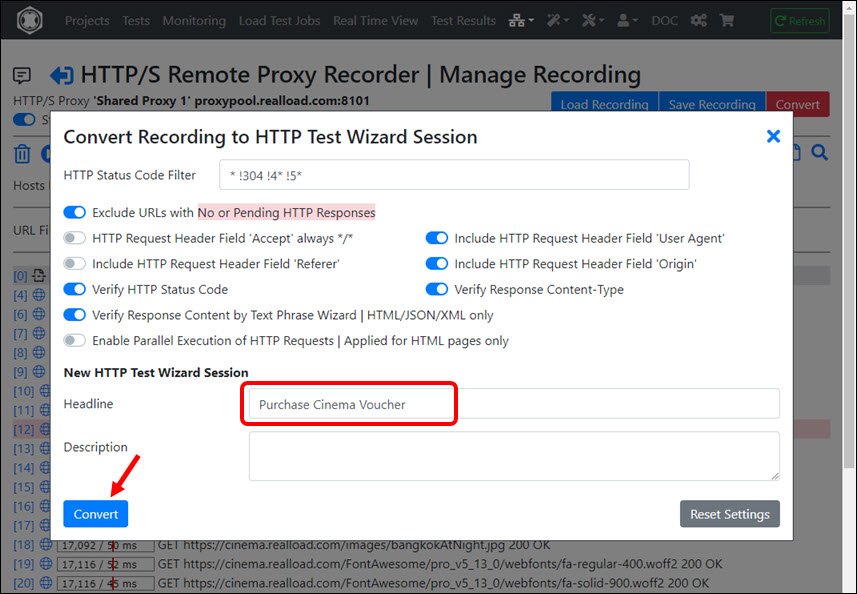

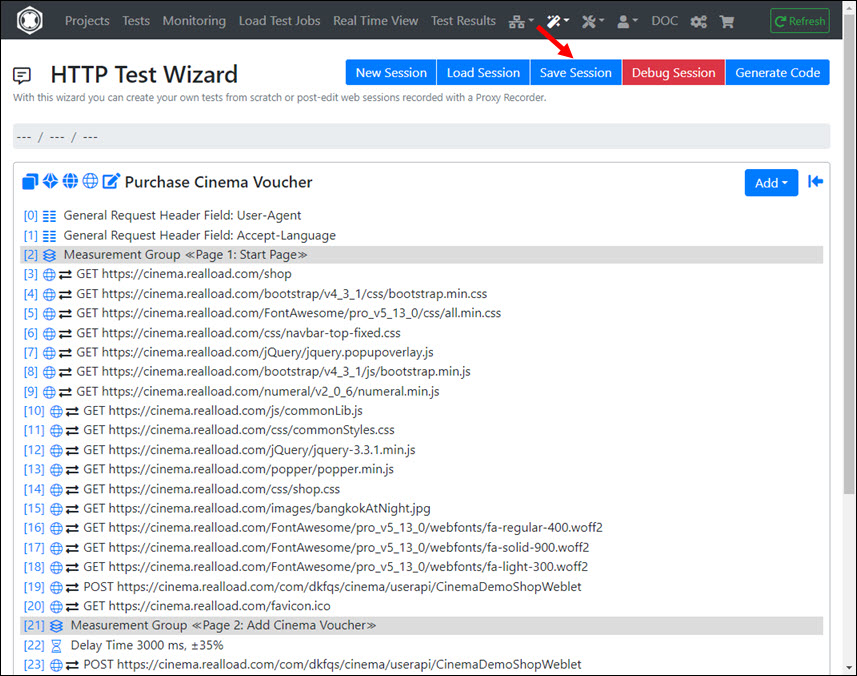

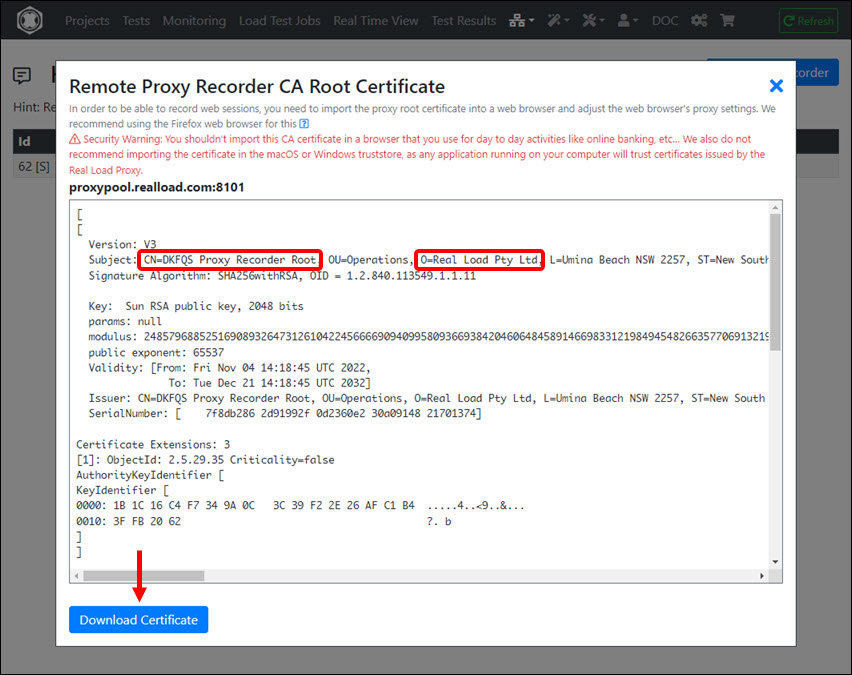

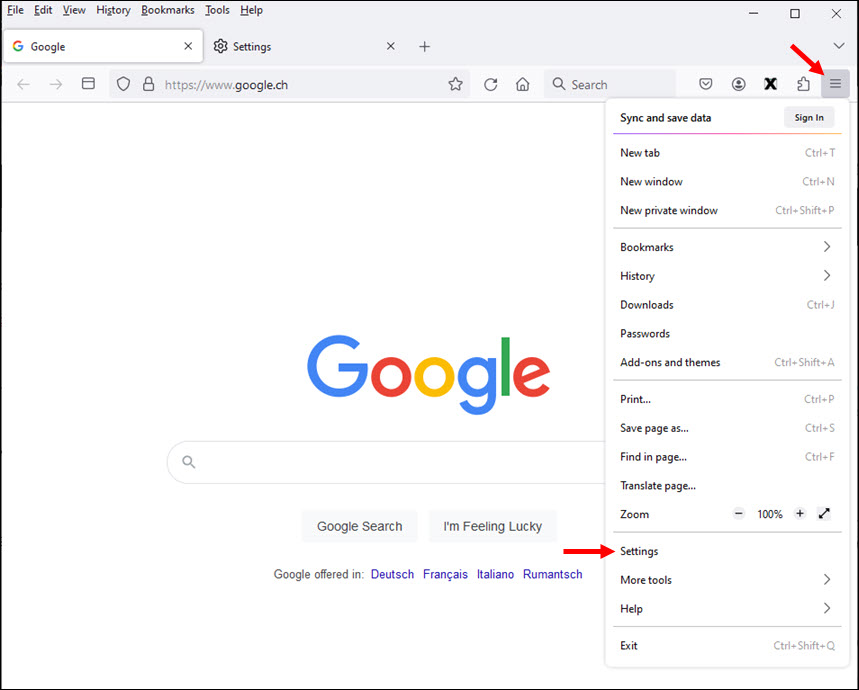

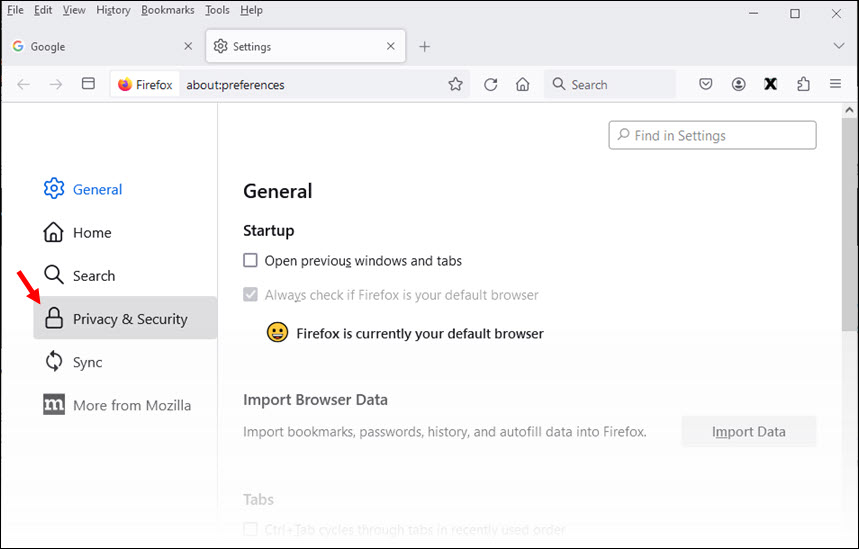

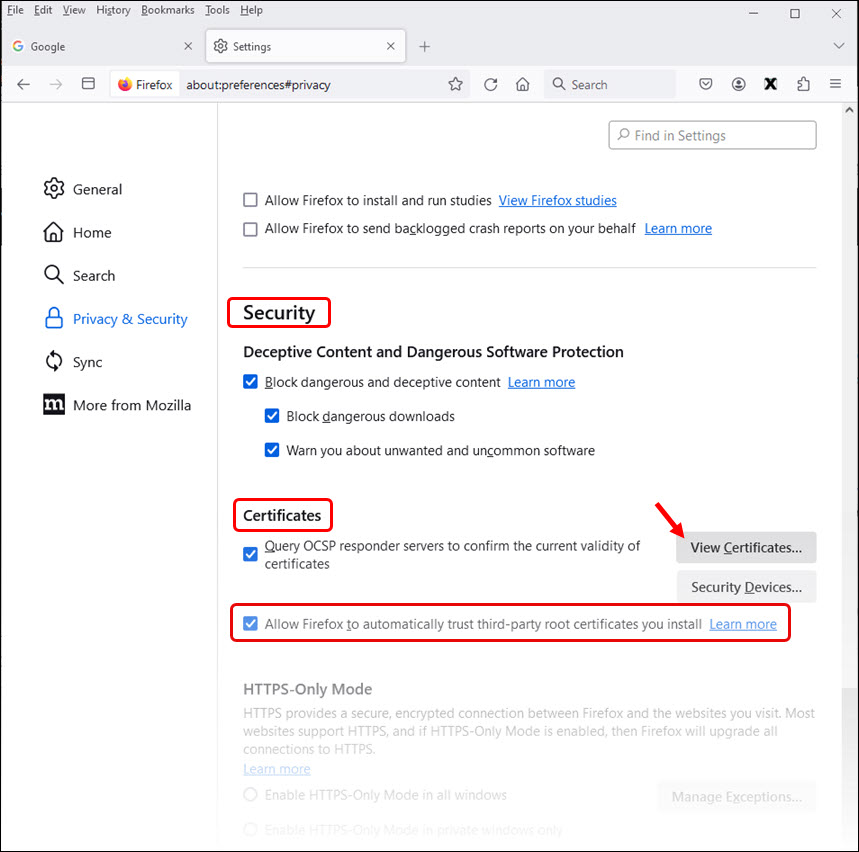

This example was deliberately kept simple so that you can become familiar with the RealLoad product. To create a test with a web browser session across multiple web pages, you can use a Remote Proxy Recorder to record the test case and then convert the recording into an HTTP Test Wizard session.

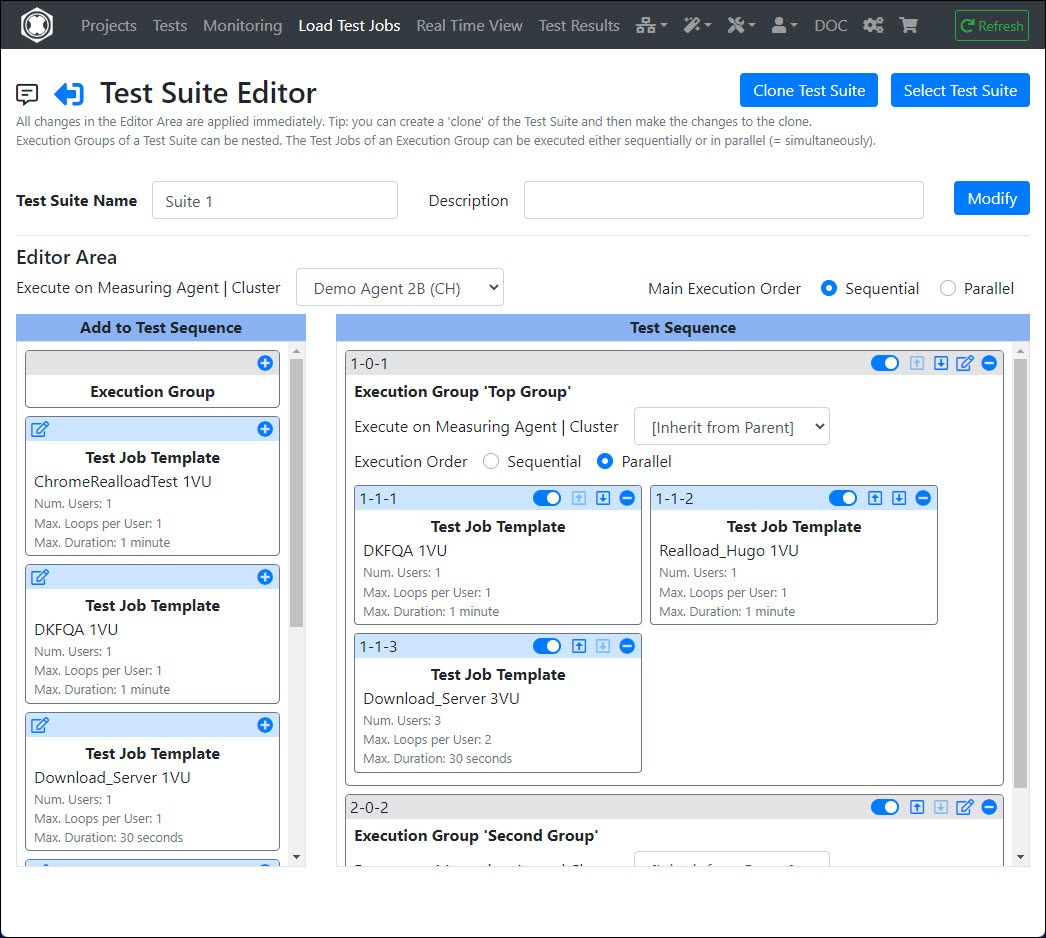

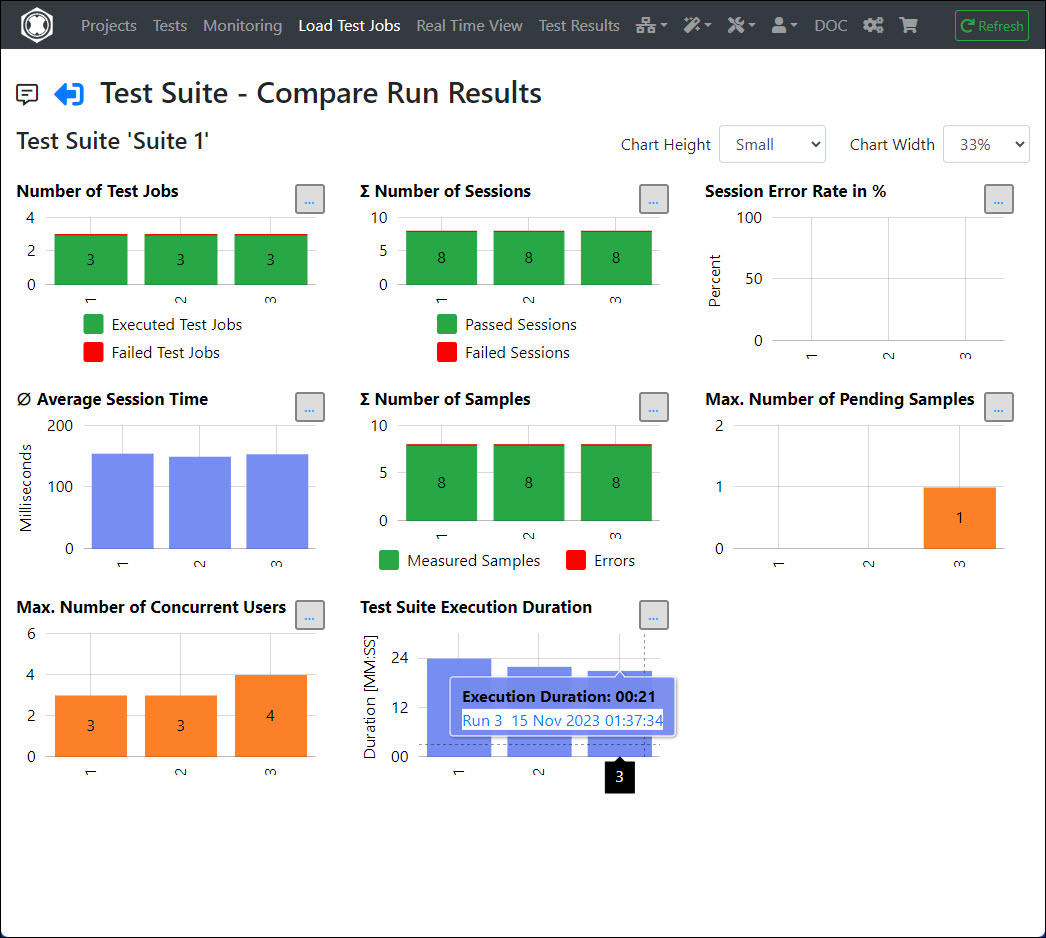

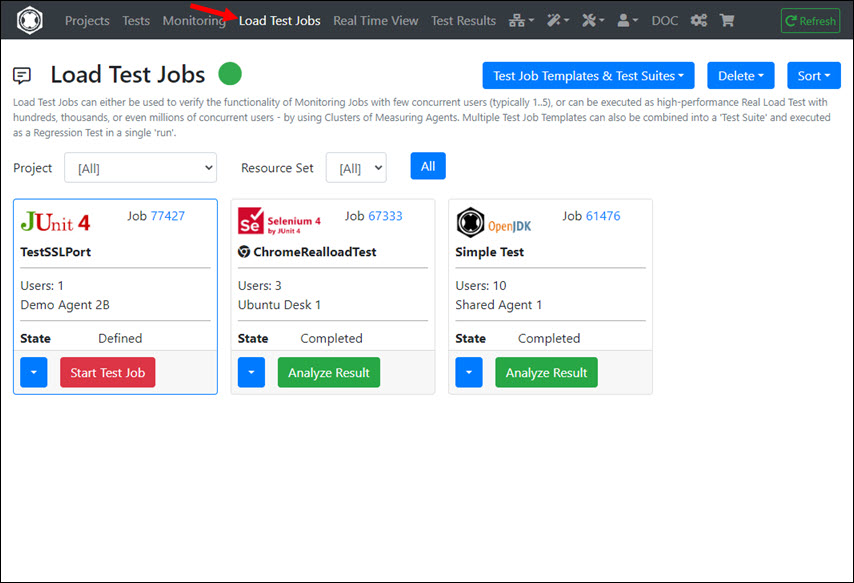

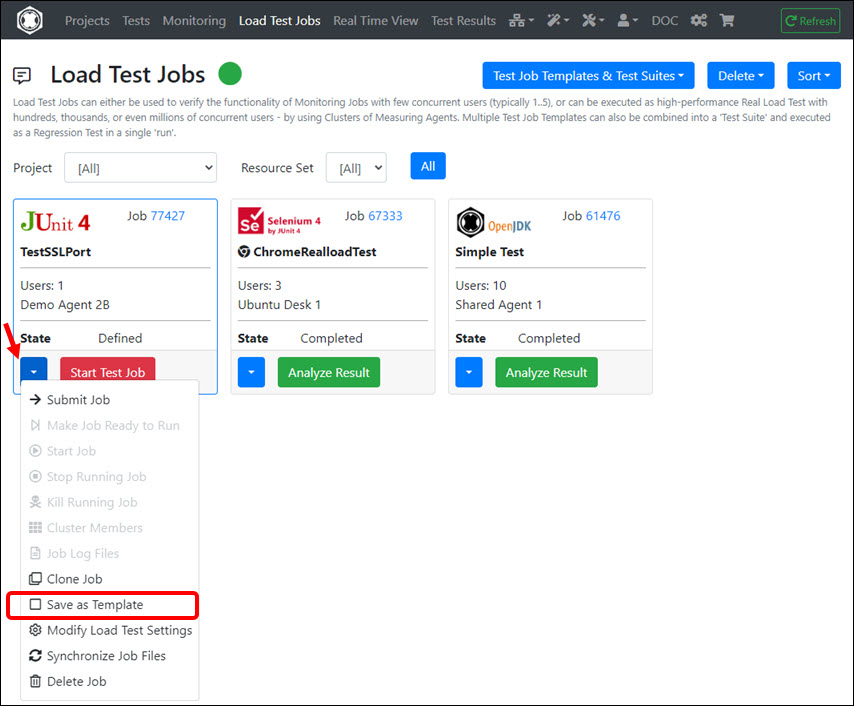

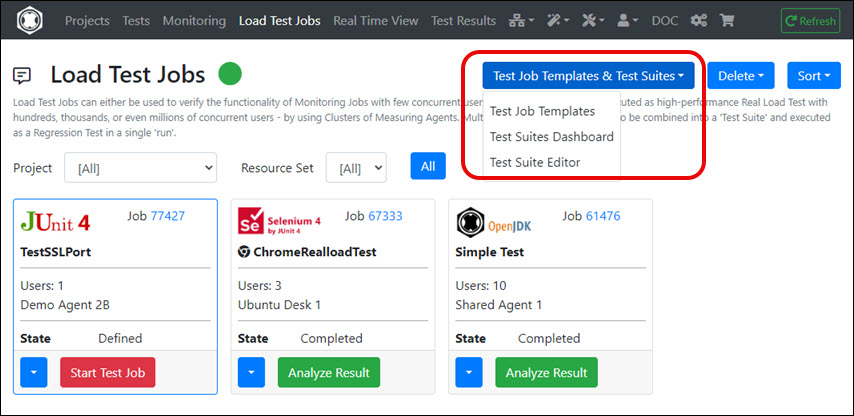

Additionally, a Test Job Template can also be defined from any ‘Load Test Job’, which can then be part of a Test Suite. This means that you can add multiple ‘Test Job Templates’ to a Test Suite and execute them in a single run as a Regression Test.

To learn more, we recommend you read the User Guide. There you will find detailed documentation on the steps described in this document.

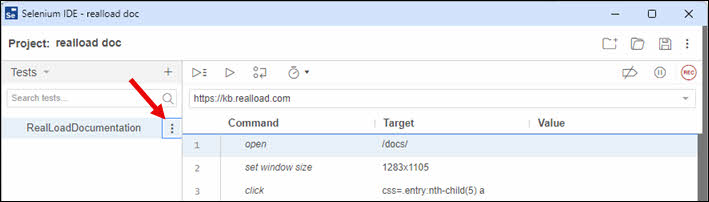

This example shows how a Selenium IDE Test - which was recorded with a Chrome or Firefox web browser - can be converted into a RealLoad ‘Test’. The converted RealLoad ‘Test’ can then be executed both as a Load Test Job and as a Monitoring Job. In addition, the converted test can also be part of a Test Suite for performing Regression Tests.

In most cases, the conversion can be done easily with just a few mouse clicks - without the need for any special settings.

At the end of each executed test run (of the converted RealLoad test), a final screenshot of the simulated web browser session is taken for each virtual user so that you can check whether the web browser session of the virtual user has been fully executed.

Tip: If possible, always use the Chrome web browser for Selenium IDE recording, as Chrome sessions are simulated much faster (= more realistic) than Firefox sessions.

To configure and execute this test you’ll need:

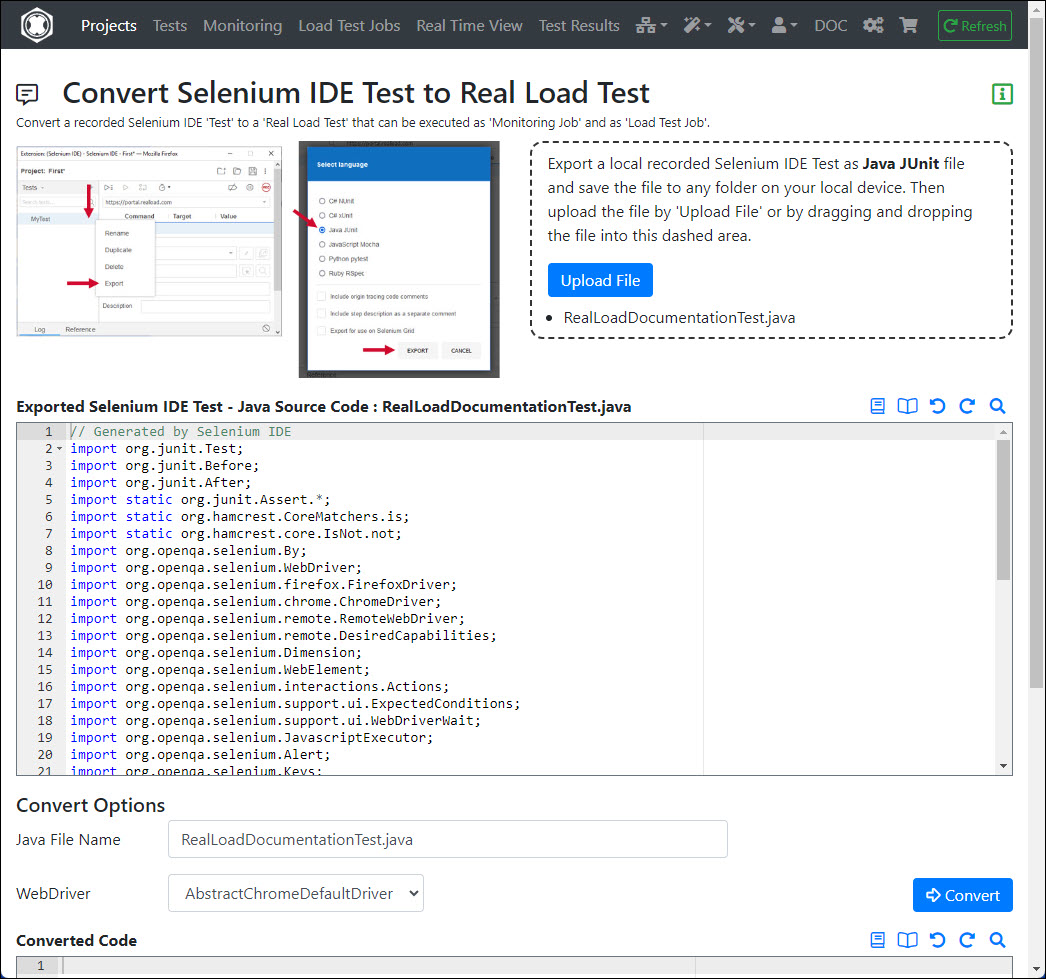

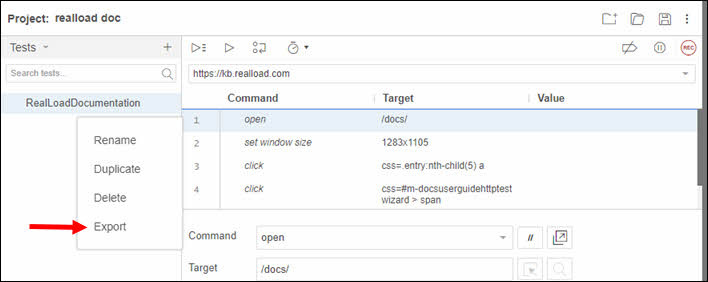

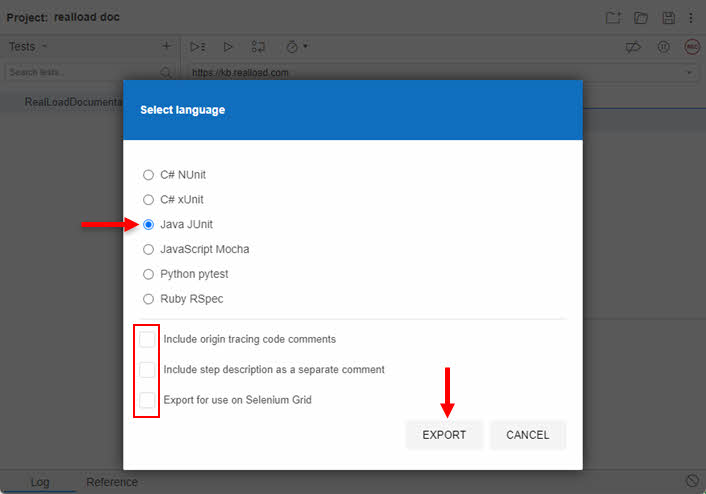

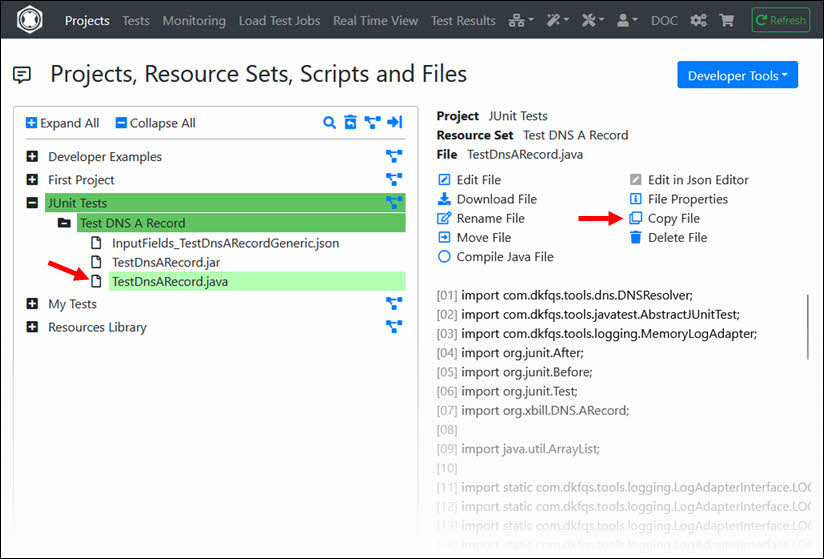

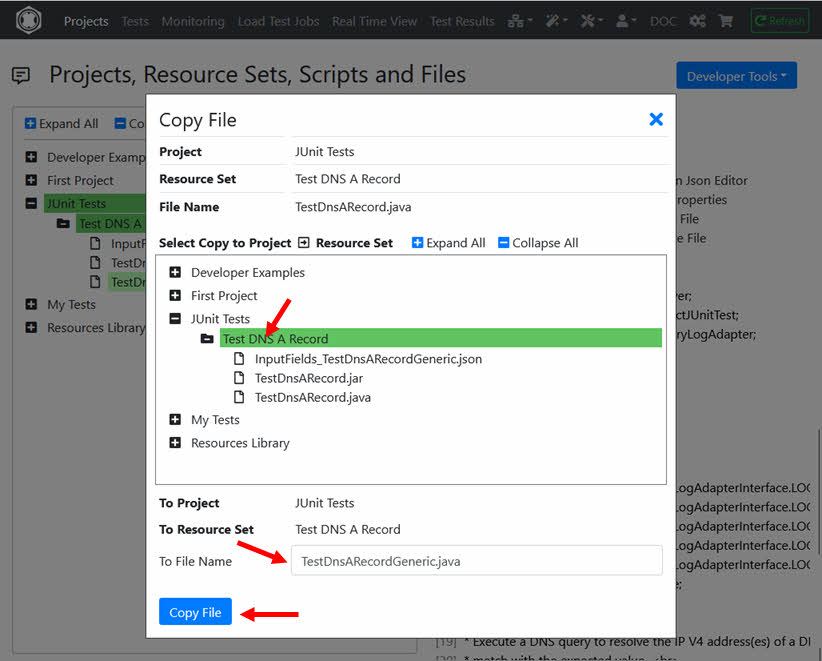

Export your recorded Selenium IDE Test as a Java JUnit file and save the file to any folder on your local device.

Note that the 3-dot dropdown icon may initially be hidden and may only appear when you move the mouse pointer near the Selenium ‘Test Case’ area. Do not choose any export option.

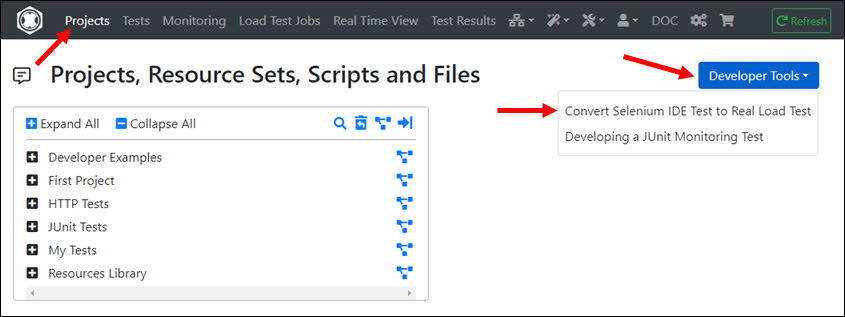

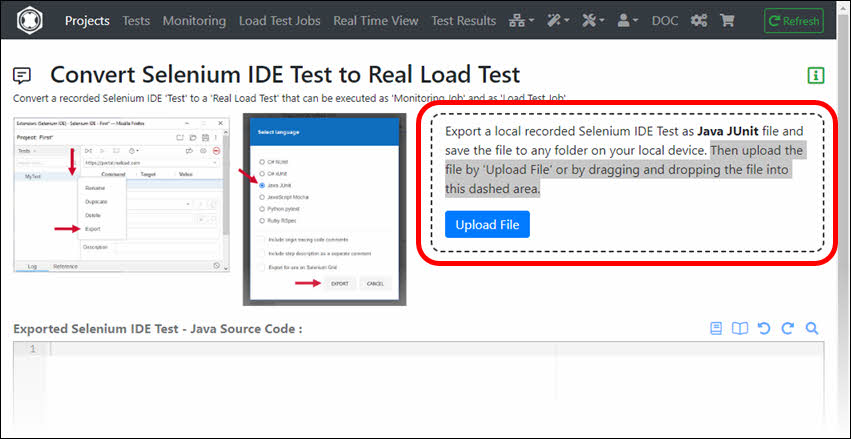

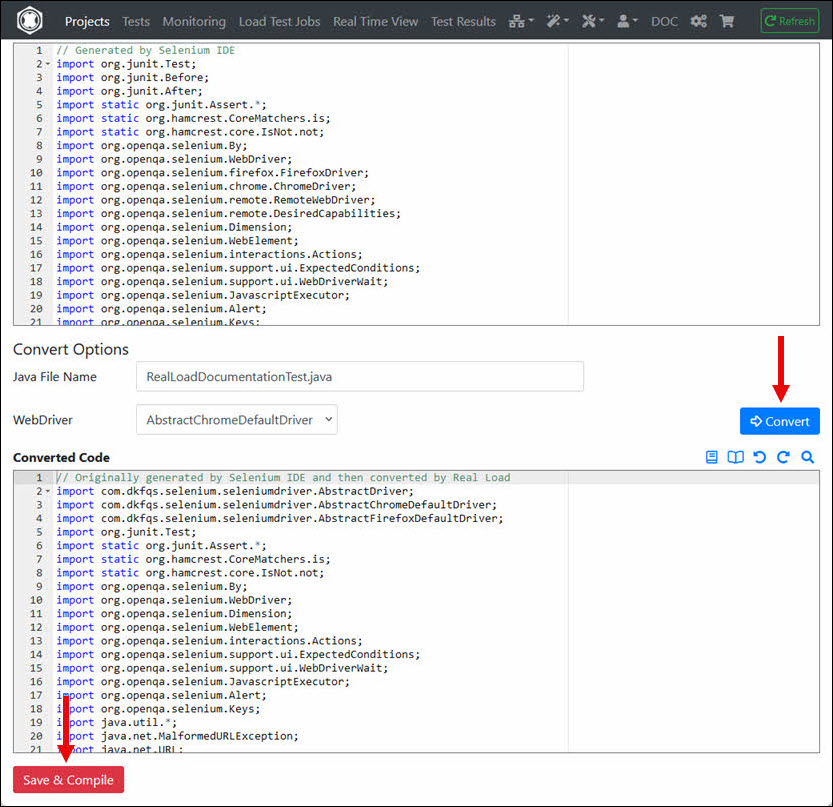

In the Projects menu click on the ‘Developer Tools’ dropdown and select ‘Convert Selenium IDE Test to Real Load Test’.

Then upload the file using ‘Upload File’ or by dragging and dropping the file into the dashed area.

Click on the ‘Convert’ button after uploading the file. The converted Java code is then displayed. Then click on the ‘Save & Compile’ button.

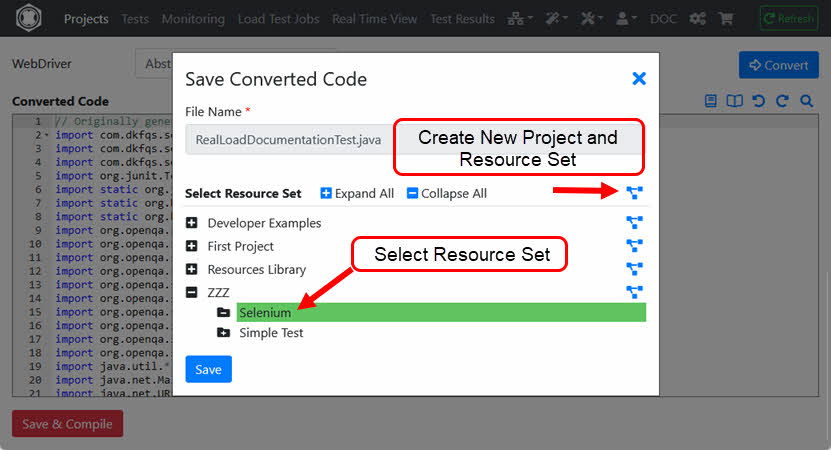

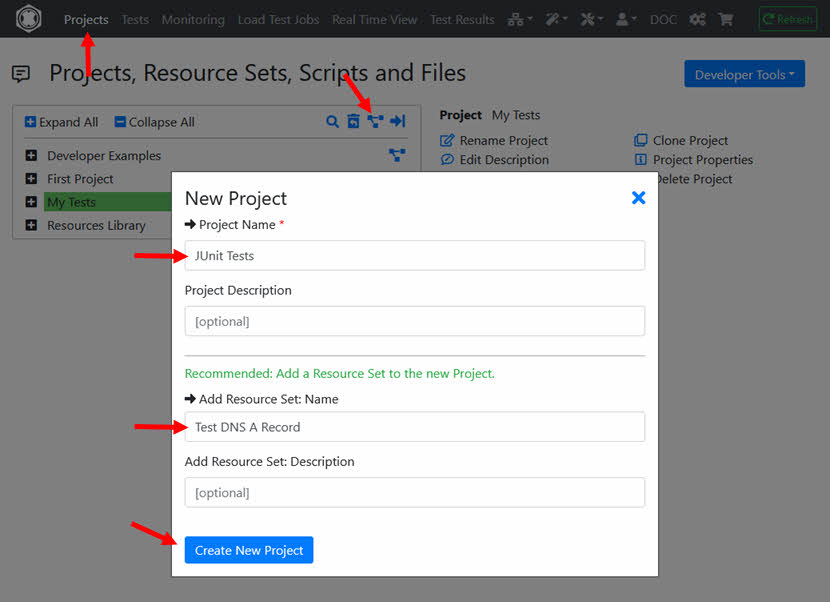

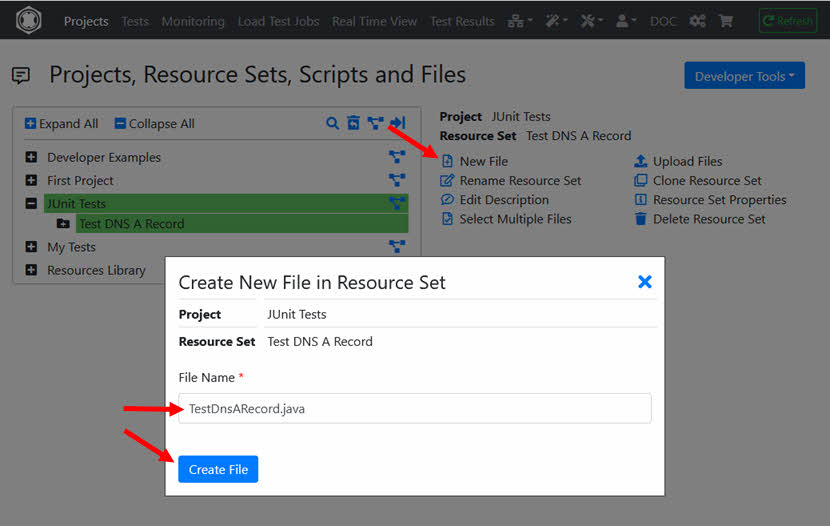

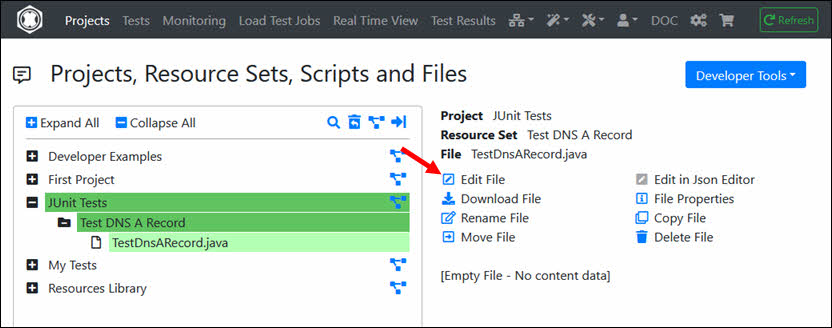

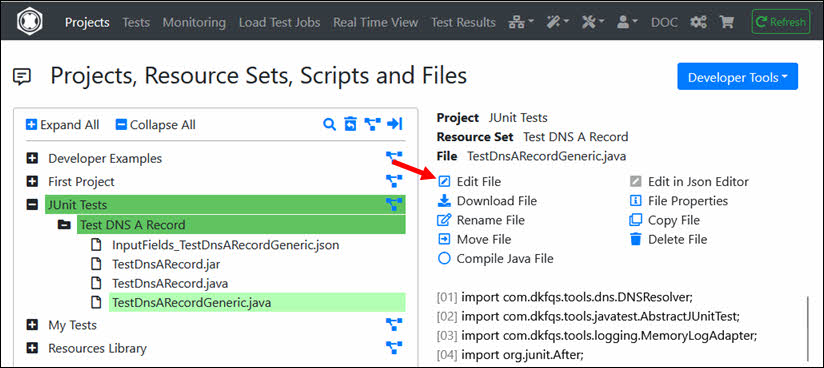

You have to save the converted code in a Resource Set of a Project. You can also first create a new Project with a new Resource Set.

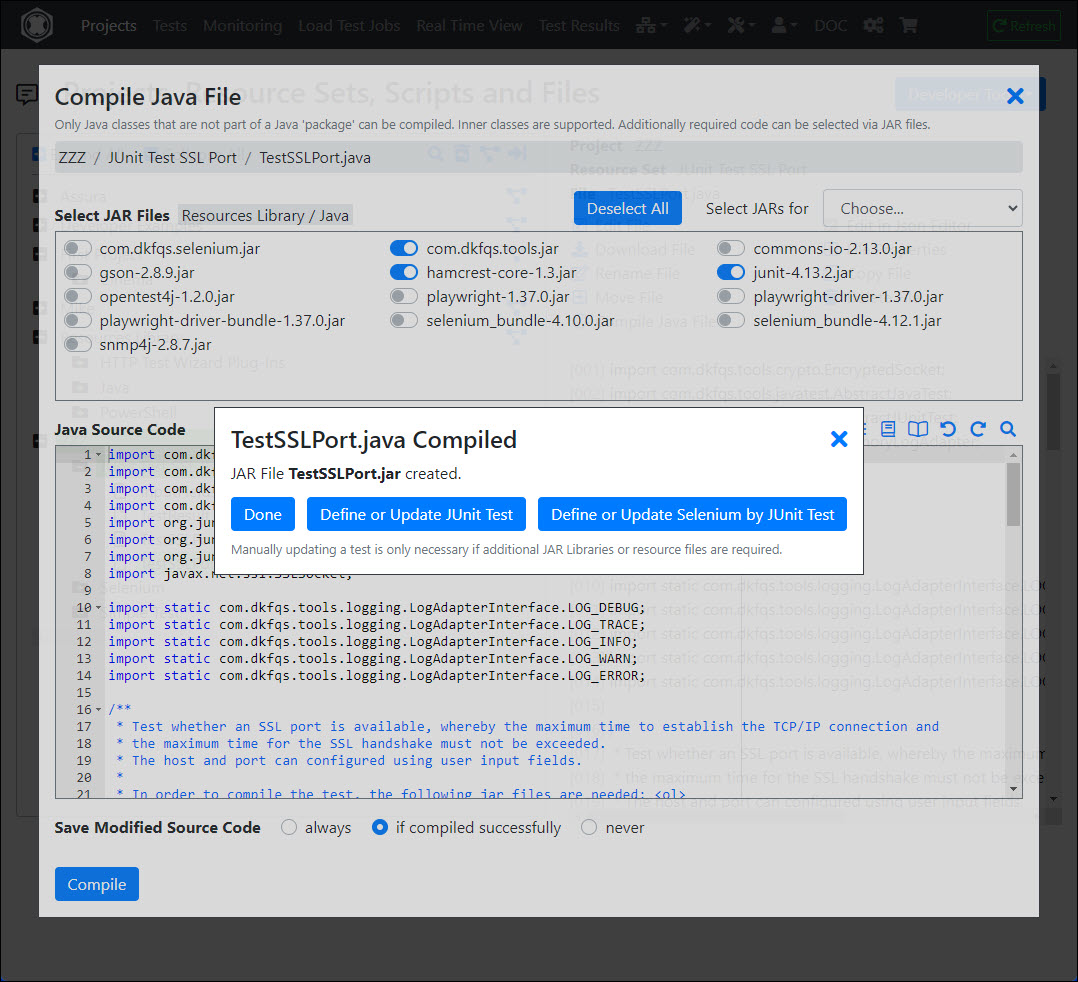

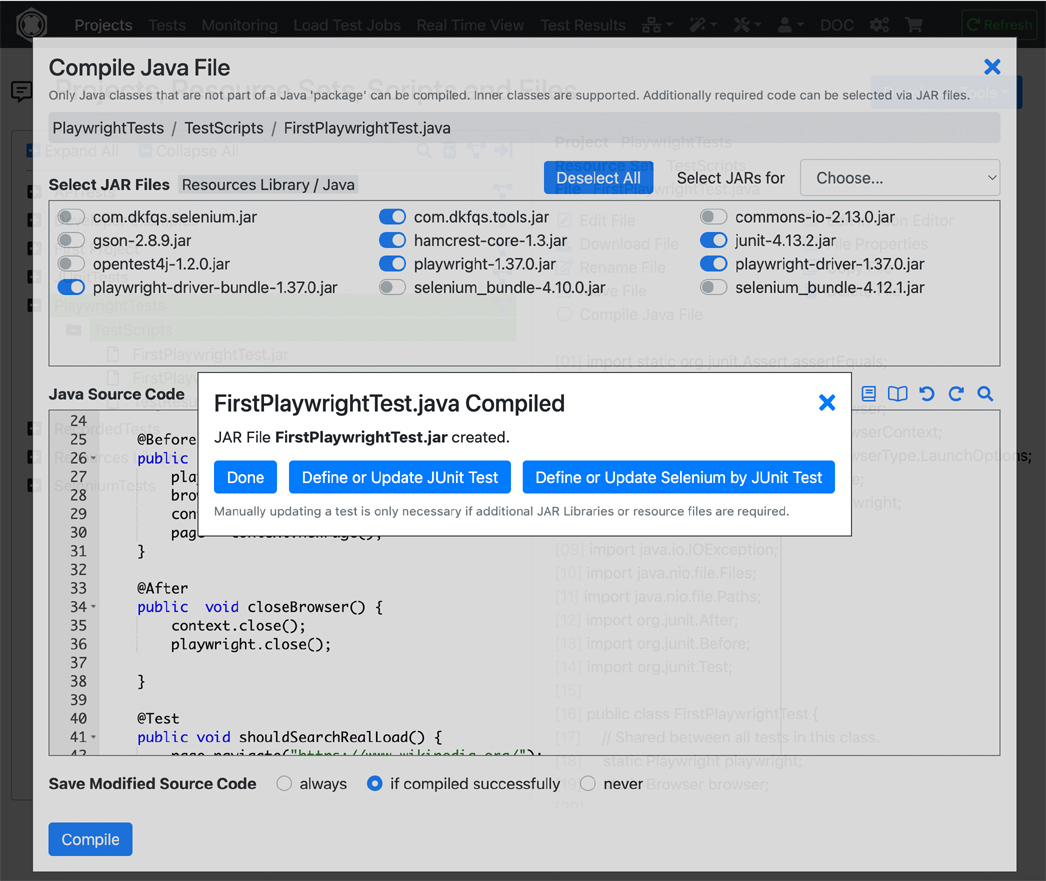

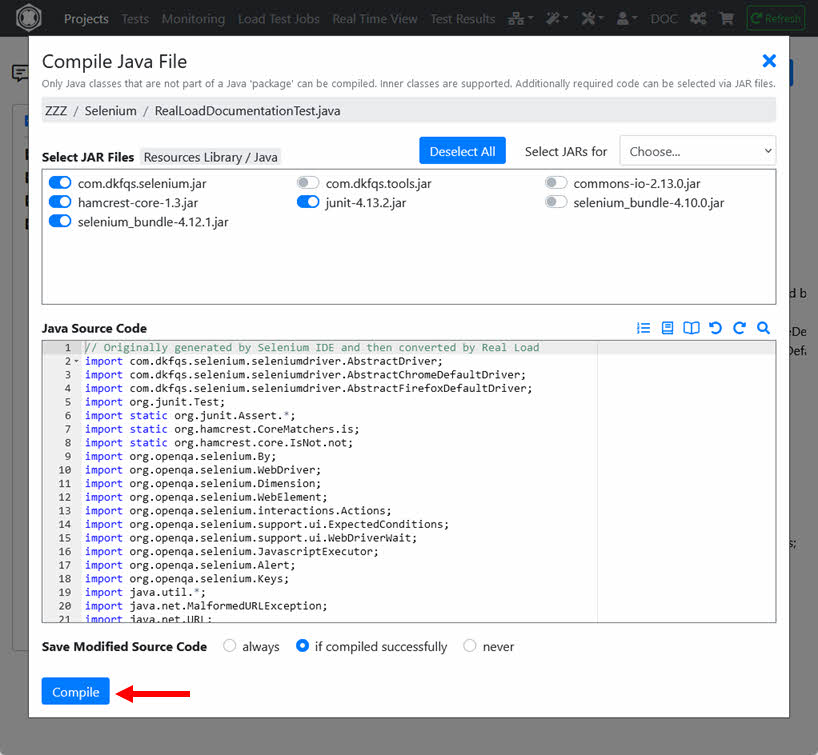

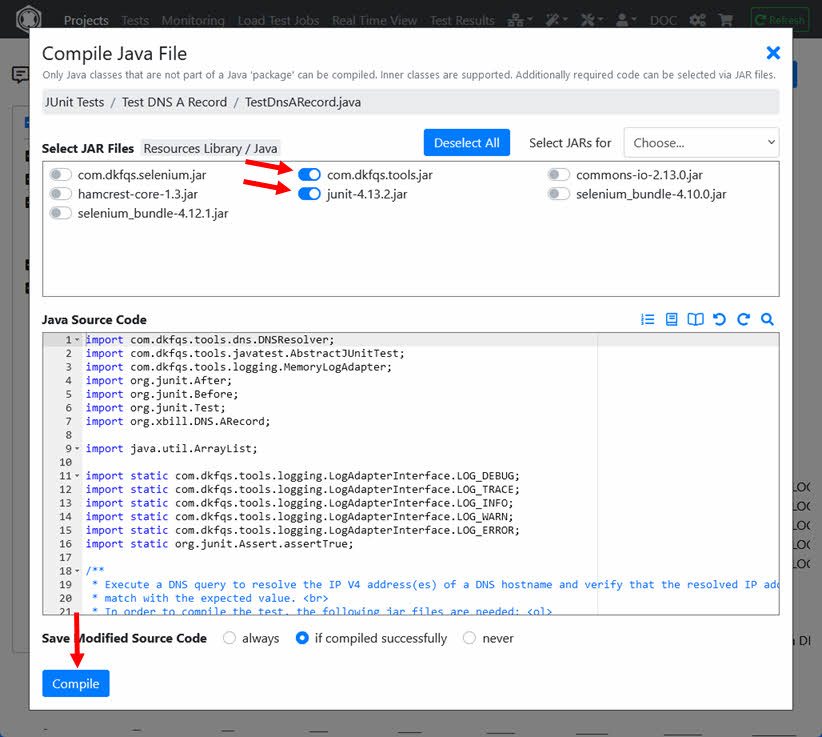

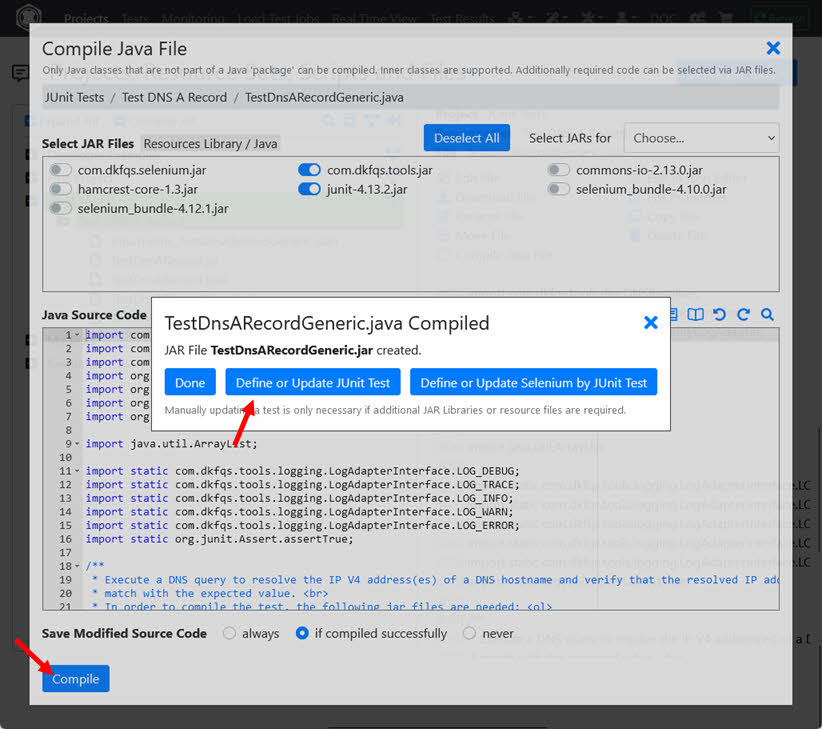

After saving the converted code, you will be automatically redirected to the Compile Java File dialog.

The JAR files required to compile are already preselected, i.e. you need only click the ‘Compile’ button.

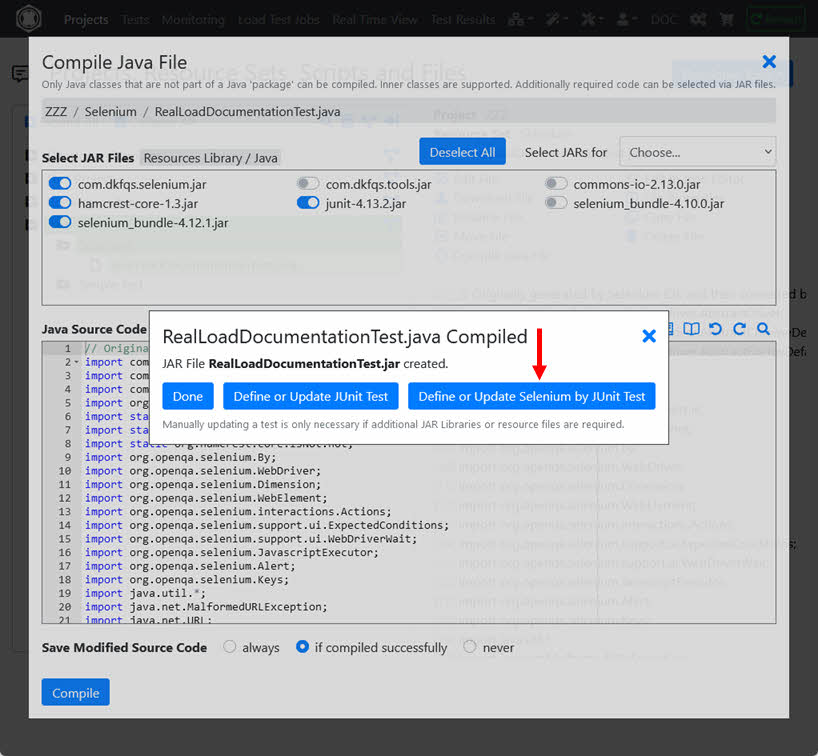

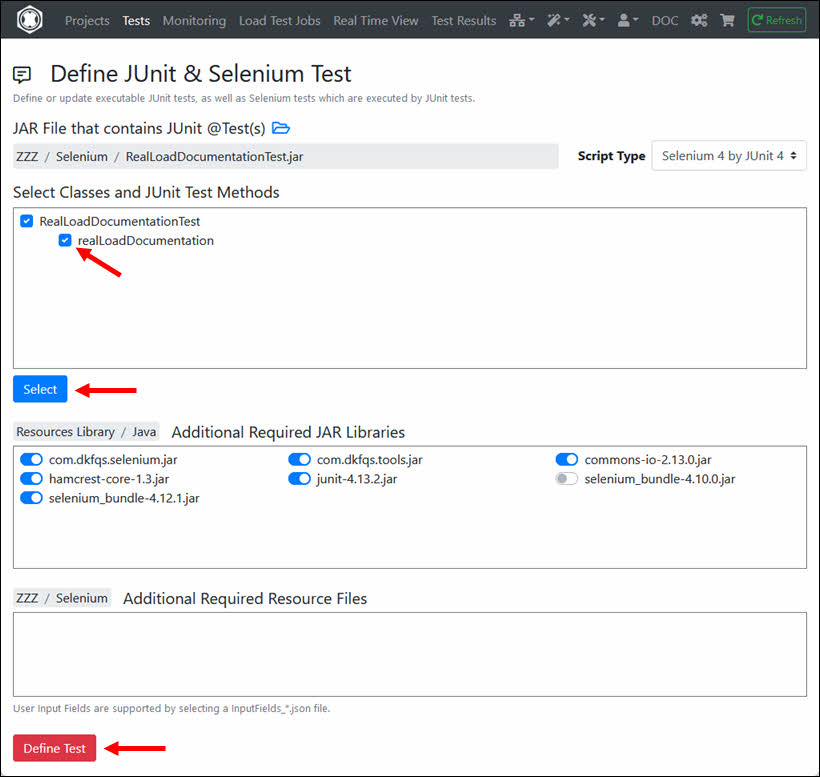

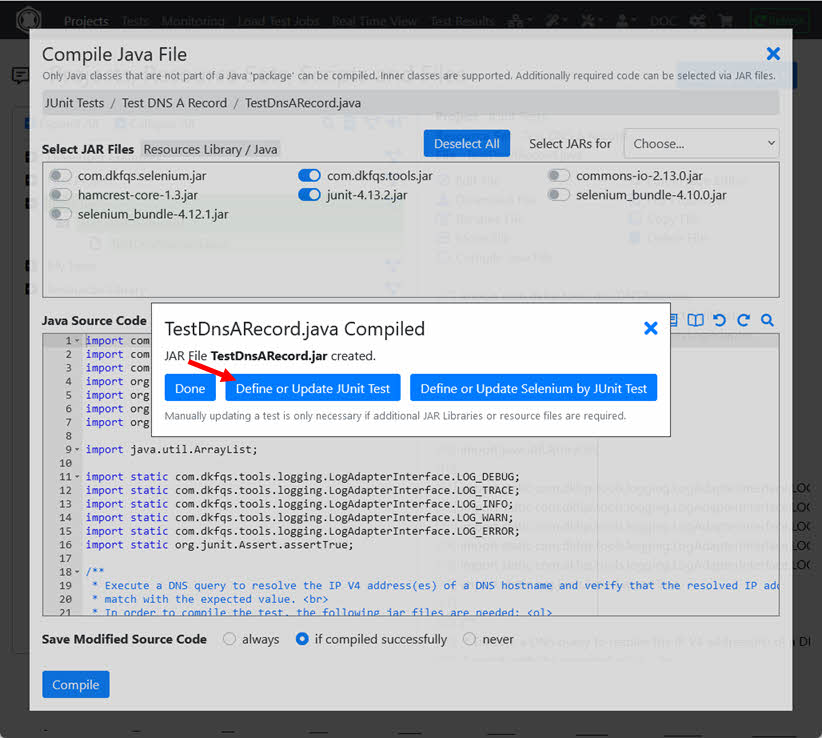

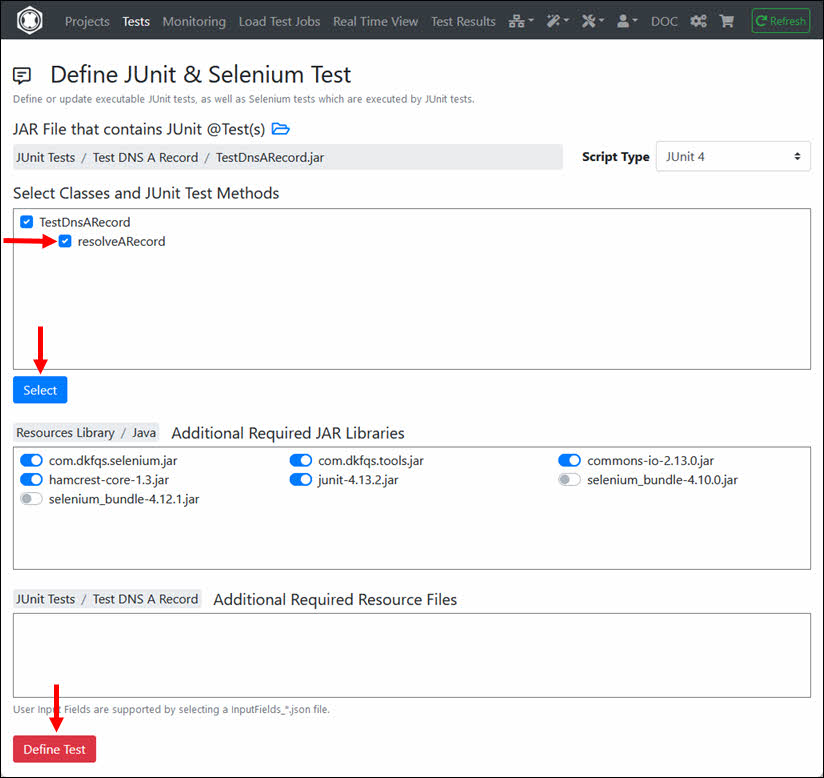

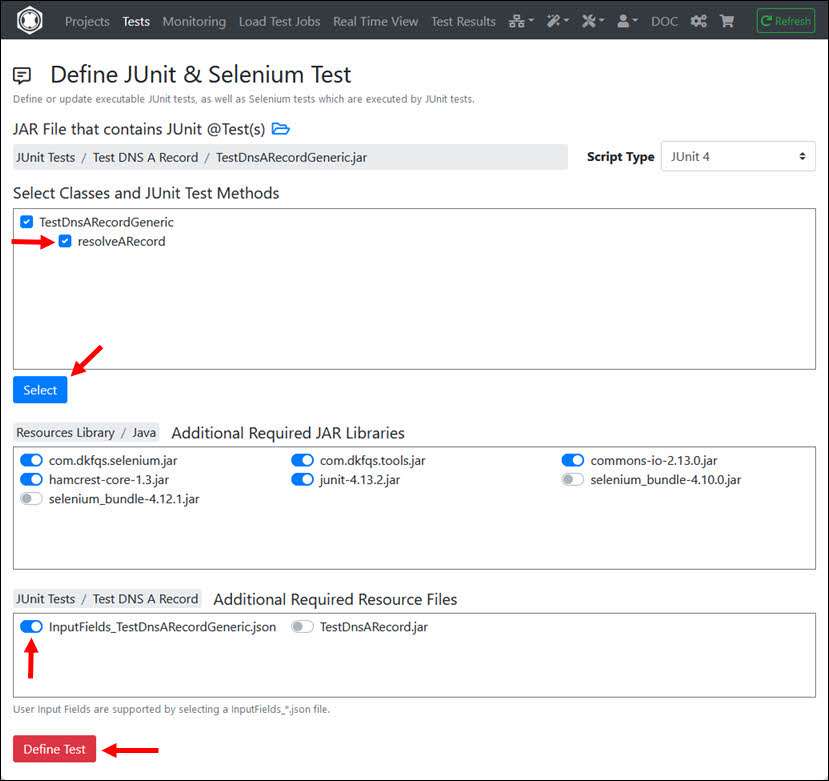

After successful compilation, click ‘Define or Update Selenium by JUnit Test’.

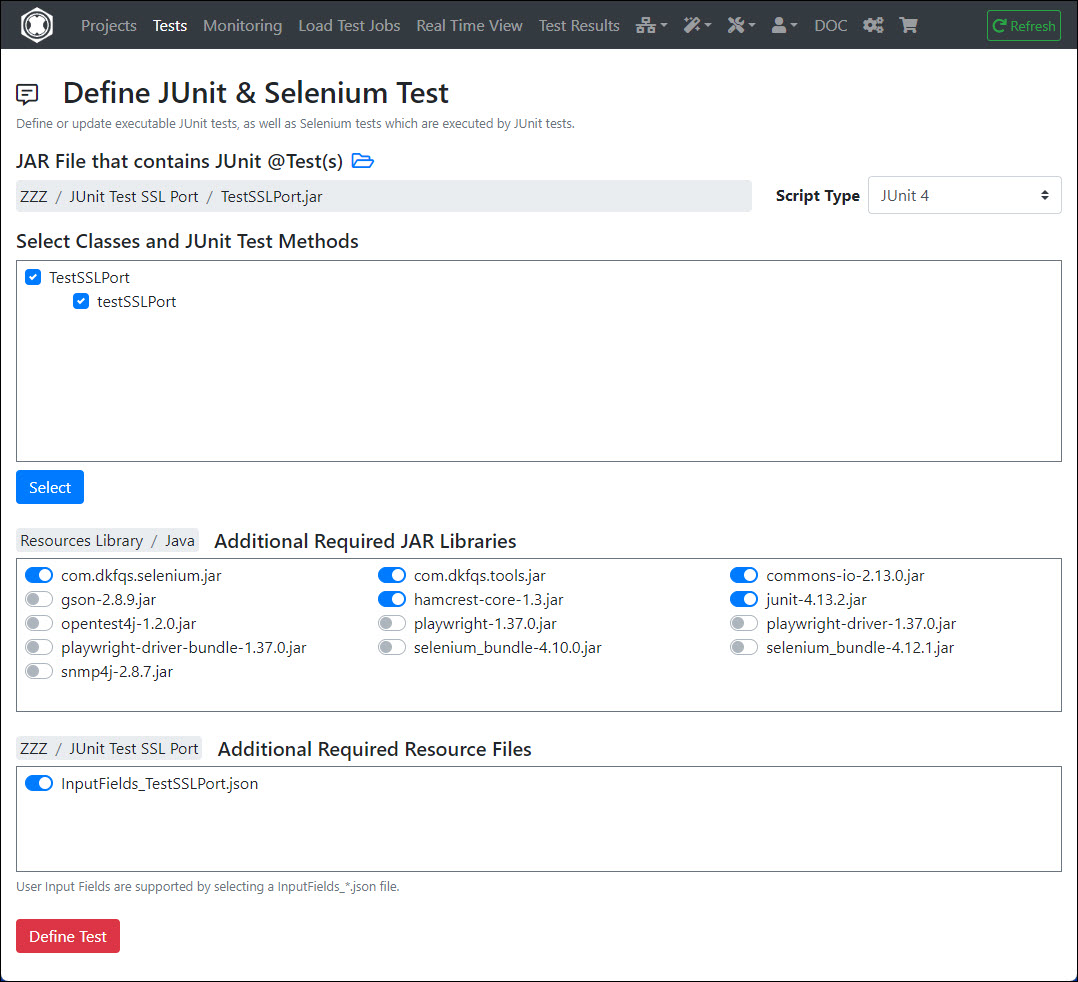

You will then be automatically redirected to the Define JUnit & Selenium Test dialog.

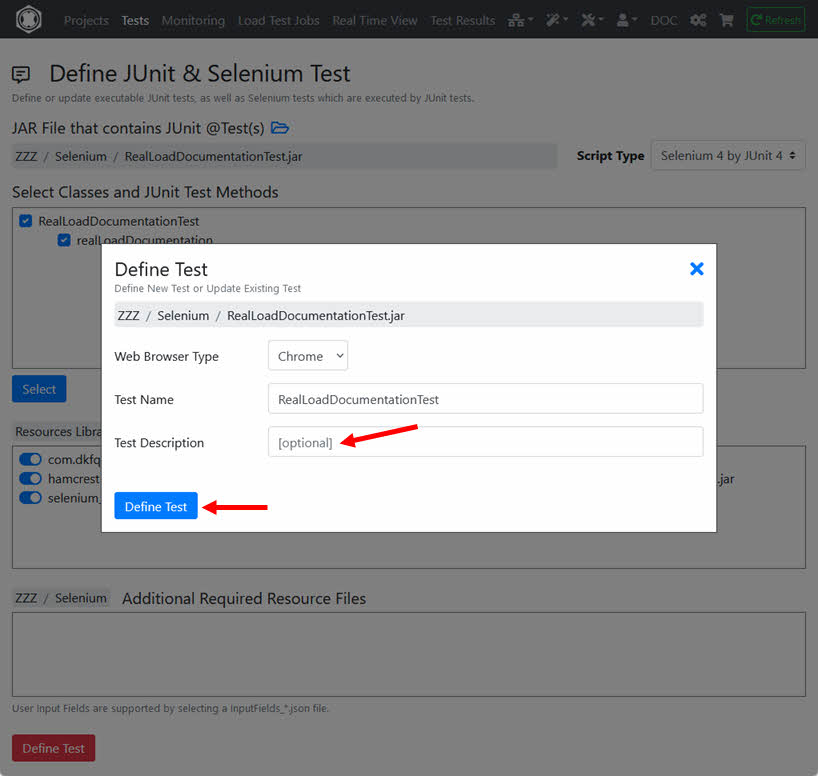

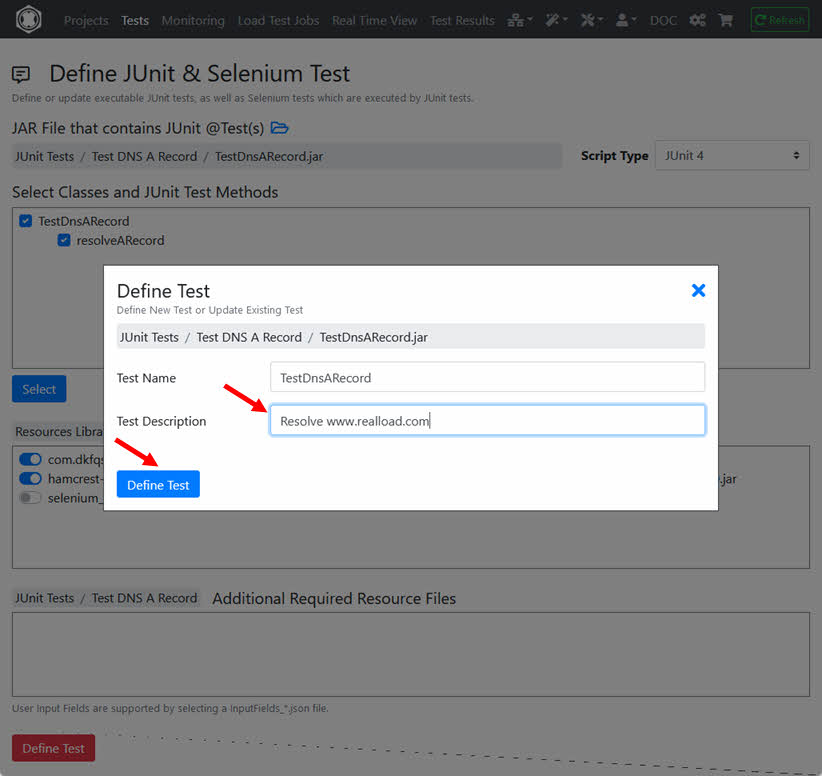

Select the JUnit @Test method (in this case only one will be shown) and click ‘Define Test’.

In an intermediate step you can enter an (optional) test description.

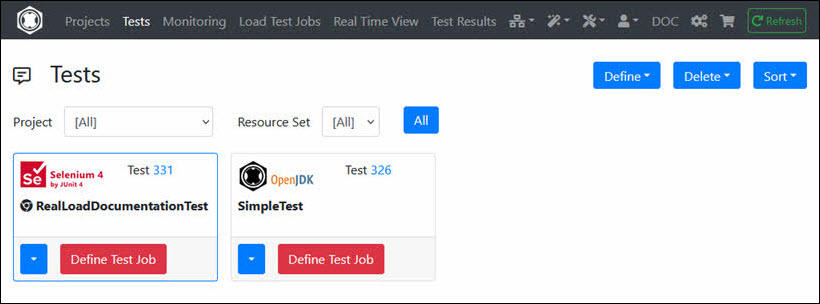

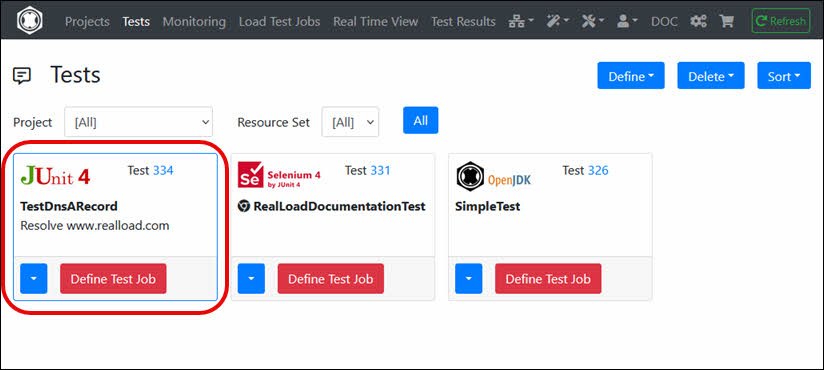

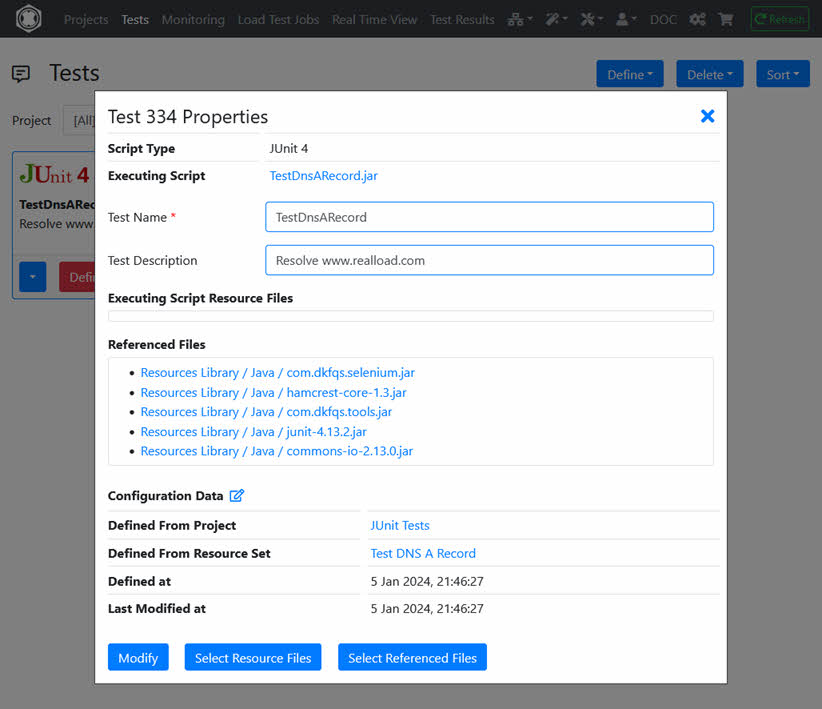

Now the Selenium RealLoad ‘Test’ is defined from which you can define a Load Test Job and/or a Monitoring Job.

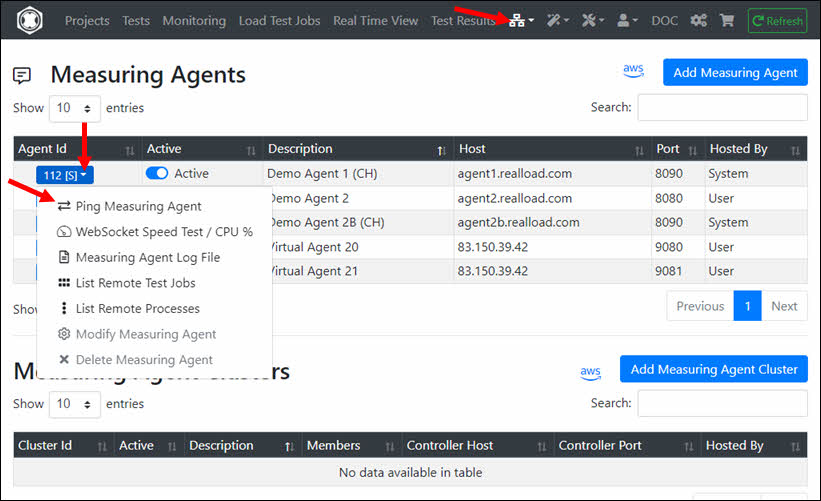

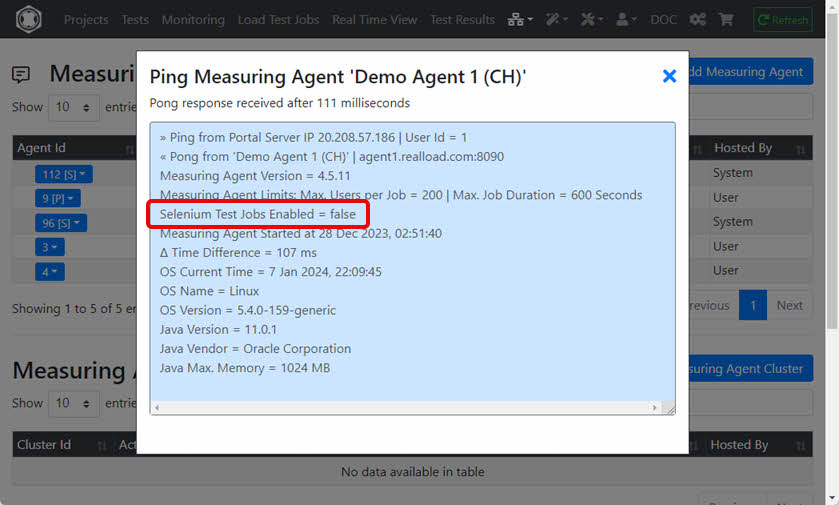

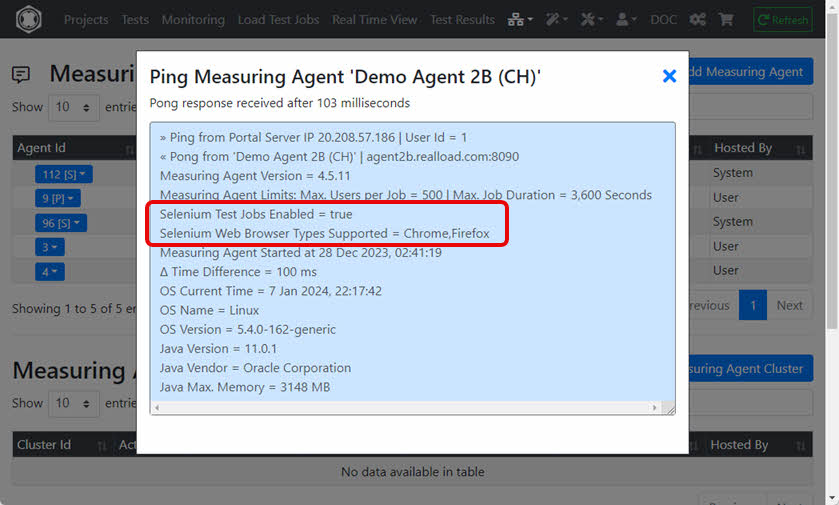

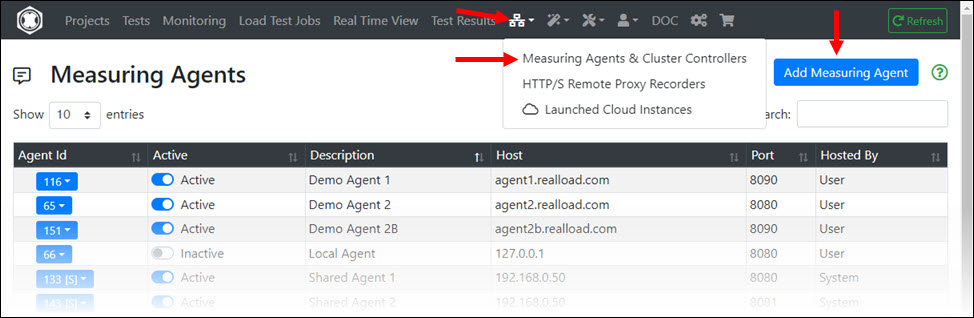

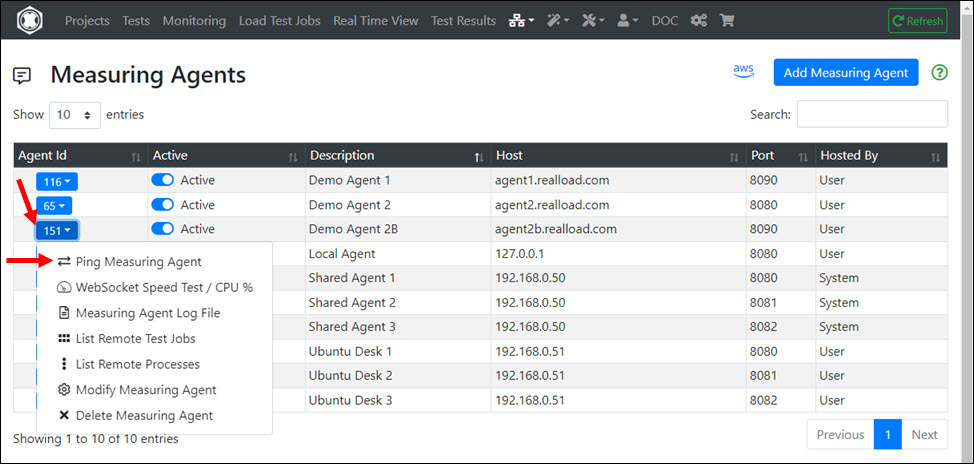

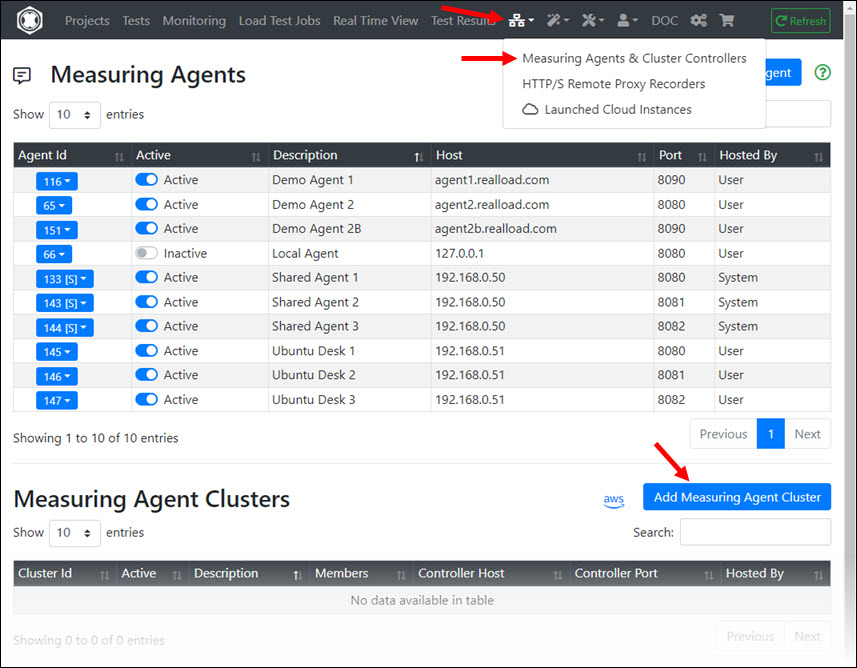

Please note that not all Measuring Agents support the execution of Selenium jobs. You can ‘ping’ a Measuring Agent at application level to find out whether Selenium jobs are supported:

Example 1: Measuring Agent ‘Demo Agent 1 (CH)’ does not support Selenium jobs

Example 2: Measuring Agent ‘Demo Agent 2B (CH)’ supports Selenium jobs

With this new release we have reached the point where a general load testing tool has been crossed with a monitoring tool and also with a regression testing tool, with both the monitoring tool and the regression testing tool being capable of executing test jobs with hundreds or thousands of concurrent users.

In order to enable the automatic Java memory configuration of a Measuring Agent 4.1.0, the following Java program arguments must be set in the startup script: -autoAdjustMemory -osReservedMemory 1GB

Furthermore, the Java memory of the Measuring Agent should be set in the startup script as shown in the table below:

| OS Physical Memory | Measuring Agent Java -Xmx setting |
|---|---|
| <2 GiB | 256m |
| 2..3 GiB | 512m |
| 4..7 GiB | 512m |
| 8..15 GiB | 1536m |
| 16..31 GiB | 3072m |
| 32..63 GiB | 4096m |
| 64..96 GiB | 6144m |
| >96 GiB | 8192m |

Non-integer GiB values should be rounded up (e.g. 7.7 GiB counts as 8 GiB, giving 1536m).

Example: sudo -H -u dkfqs bash -c 'CLASSPATH=/home/dkfqs/agent/bin/bcpkix-jdk15on-160.jar:/home/dkfqs/agent/bin/bcprov-jdk15on-160.jar:/home/dkfqs/agent/bin/bctls-jdk15on-160.jar:/home/dkfqs/agent/bin/DKFQSMeasuringAgent.jar;export CLASSPATH;nohup java -Xmx512m -DdkfqsMeasuringAgentProperties=/home/dkfqs/agent/config/measuringagent.properties -Dnashorn.args="--no-deprecation-warning" com.dkfqs.measuringagent.internal.StartDKFQSMeasuringAgent -autoAdjustMemory -osReservedMemory 1GB 1>/home/dkfqs/agent/log/MeasuringAgent.log 2>&1 &'
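The memory table above can be expressed as a small lookup. The following Python sketch is only an illustration of the table: the function name `recommended_xmx` is hypothetical, and it assumes that rounding up to the next whole GiB is applied before the lookup, as the rounding note suggests.

```python
import math

# (inclusive upper bound in whole GiB, -Xmx value), transcribed from the table.
_XMX_TABLE = [
    (1, "256m"),
    (3, "512m"),
    (7, "512m"),
    (15, "1536m"),
    (31, "3072m"),
    (63, "4096m"),
    (96, "6144m"),
]


def recommended_xmx(physical_gib: float) -> str:
    """Return the -Xmx value for a host with the given physical memory in GiB."""
    if physical_gib <= 0:
        raise ValueError("physical_gib must be positive")
    whole_gib = math.ceil(physical_gib)  # assumption: round up before lookup
    for upper_bound, xmx in _XMX_TABLE:
        if whole_gib <= upper_bound:
            return xmx
    return "8192m"  # more than 96 GiB


if __name__ == "__main__":
    for gib in (1, 4, 7.7, 16, 128):
        print(f"{gib} GiB -> -Xmx{recommended_xmx(gib)}")
```

The returned value would replace the `-Xmx512m` part of the startup command shown in the example above.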

Advantages and disadvantages of ‘Cloud Credits’ versus launching AWS/EC2 instances by the ‘Desktop Companion’:

Benefits of ‘Cloud Credits’:

Advantages of the ‘Desktop Companion’:

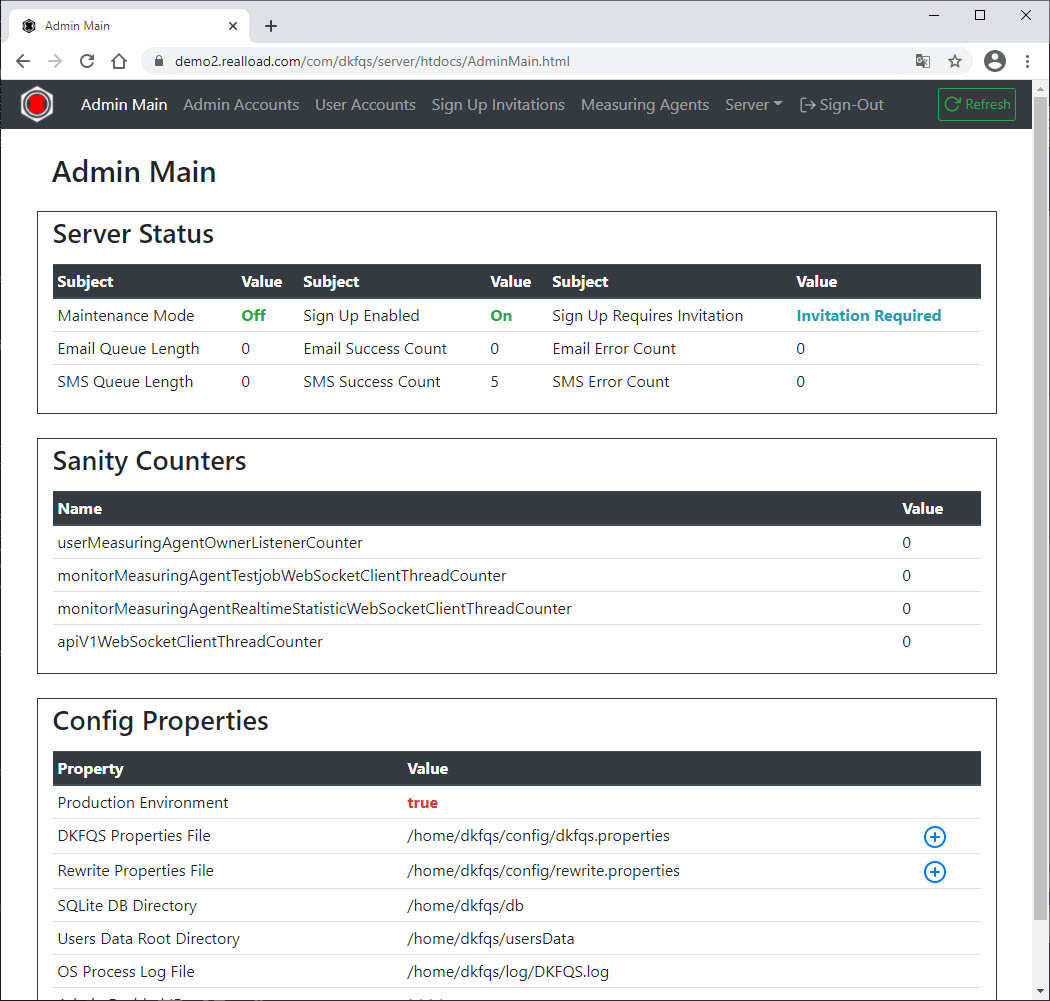

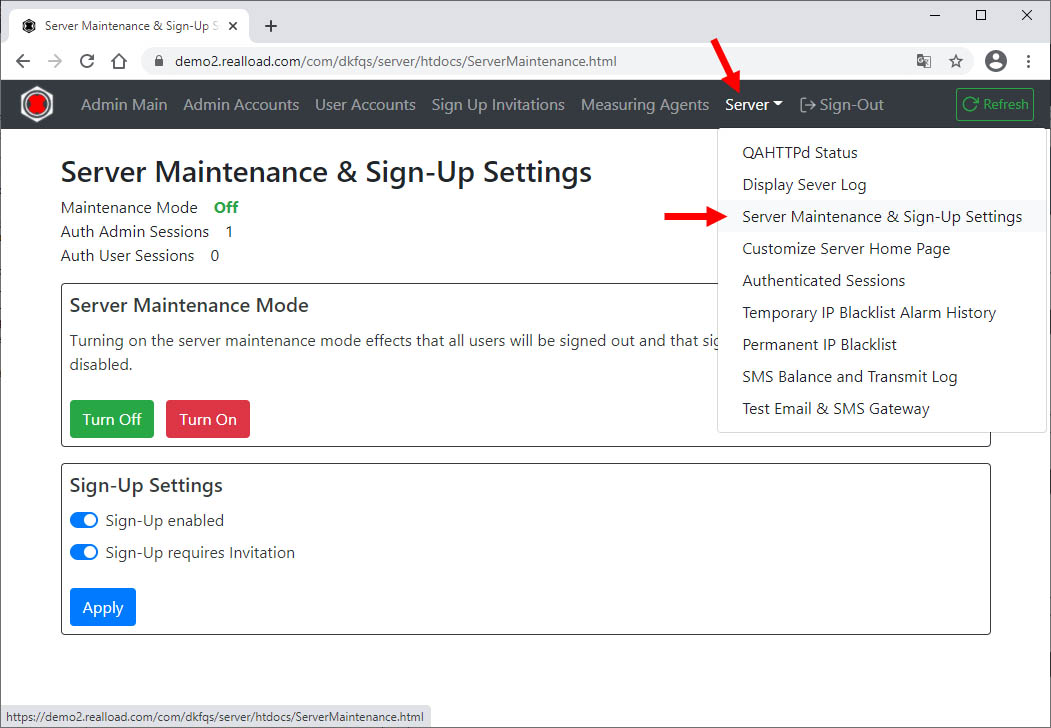

Portal Server Installation / Ubuntu 20: The “fontconfig” package has to be installed for the captcha generator to work:

sudo apt-get update

sudo apt-get install fontconfig

In /etc/security/limits.conf add or modify:

# TCP/IP Tuning

# =============

* soft nproc 262140

* hard nproc 262140

* soft nofile 262140

* hard nofile 262140

root soft nproc 262140

root hard nproc 262140

root soft nofile 262140

root hard nofile 262140

Enter: systemctl show -p TasksMax user-0

output: TasksMax=8966

If you get a value less than 262140, add the following to /etc/systemd/system.conf:

# System Tuning

# =============

DefaultTasksMax=262140

Reboot the system and verify the settings. Enter: ulimit -n

output: 262140

Enter: systemctl show -p TasksMax user-0

output: TasksMax=262140
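The manual checks above can also be scripted. The sketch below is a hedged illustration, not a RealLoad tool: `parse_tasks_max` and `open_files_limit_ok` are hypothetical helper names. It uses Python's standard `resource` module (Unix only) to read the open-files limit of the current process and parses a `TasksMax=...` line as printed by `systemctl show`. It also assumes that systemd may report `infinity` for an unlimited value.

```python
import math
import resource  # standard library, available on Unix-like systems only

REQUIRED_LIMIT = 262140  # value used throughout this section


def parse_tasks_max(line: str) -> float:
    """Parse a line such as 'TasksMax=8966' into a number."""
    key, _, value = line.strip().partition("=")
    if key != "TasksMax" or not value:
        raise ValueError(f"unexpected systemctl output: {line!r}")
    # Assumption: an unlimited setting is printed as 'infinity'.
    return math.inf if value == "infinity" else float(int(value))


def open_files_limit_ok(required: int = REQUIRED_LIMIT) -> bool:
    """Check the soft and hard open-files limits of the current process."""
    soft, hard = resource.getrlimit(resource.RLIMIT_NOFILE)

    def enough(limit: int) -> bool:
        return limit == resource.RLIM_INFINITY or limit >= required

    return enough(soft) and enough(hard)


if __name__ == "__main__":
    print("open files limit sufficient:", open_files_limit_ok())
    print("TasksMax sufficient:", parse_tasks_max("TasksMax=8966") >= REQUIRED_LIMIT)
```

Note that the open-files check reflects the limits of the process running the script, so it should be run as the same user that starts the RealLoad component.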

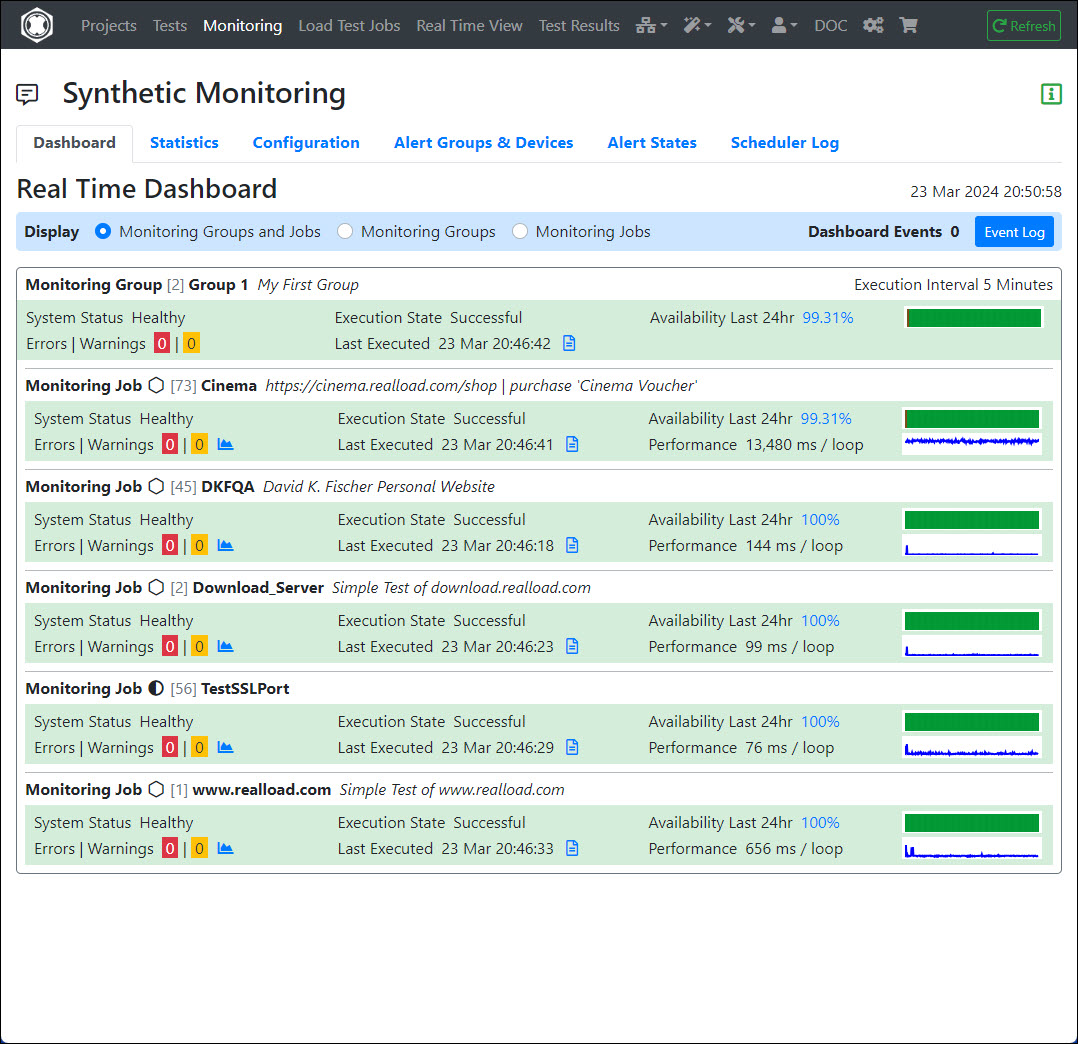

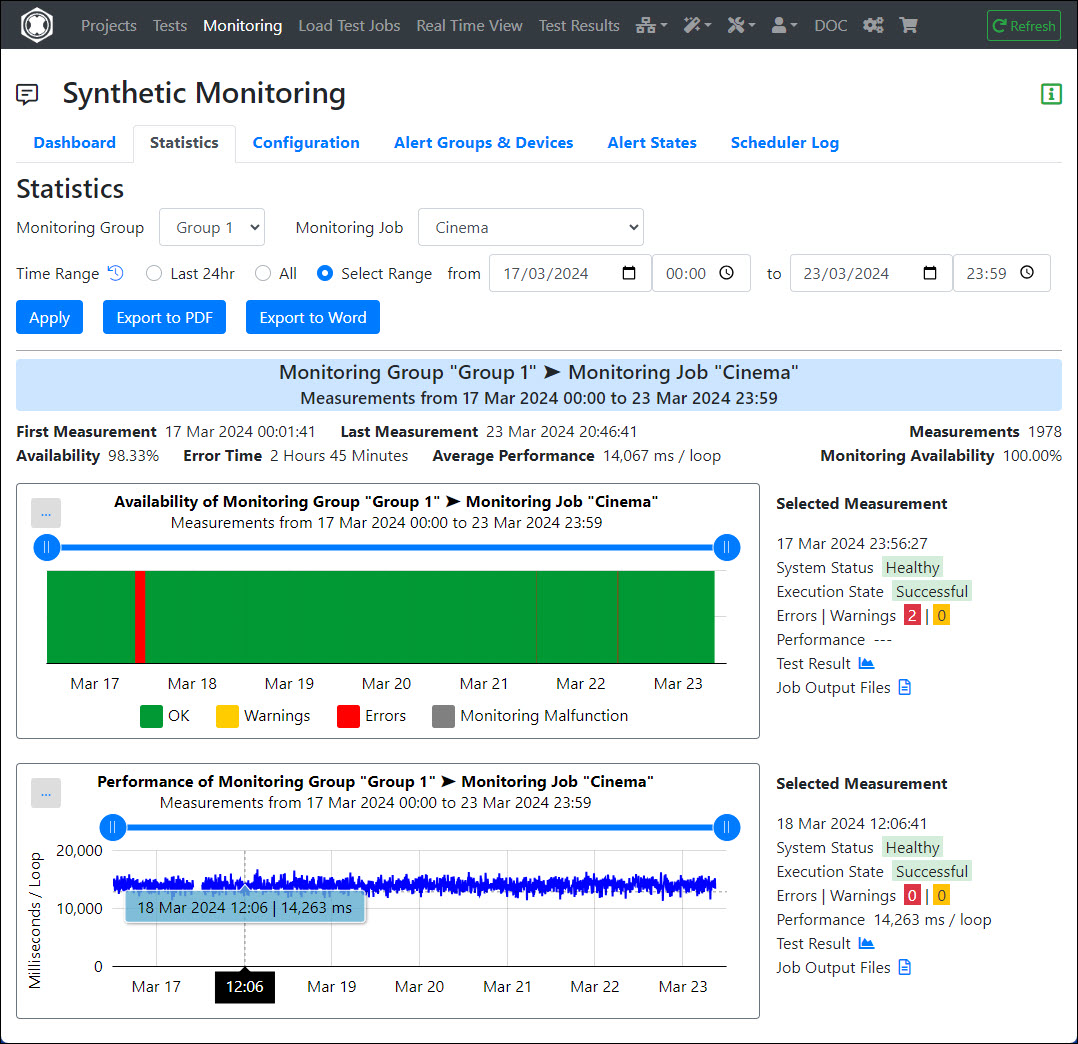

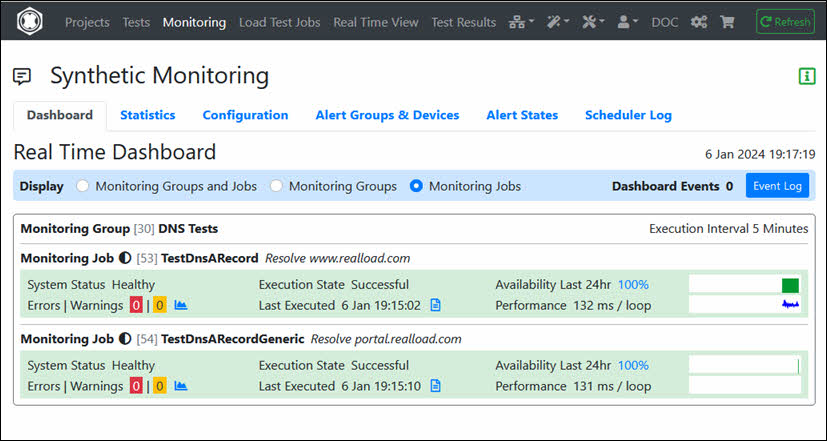

Synthetic Monitoring is an essential part of Digital Experience Monitoring, where organizations can proactively detect service outages or performance degradation by periodically running tests.

RealLoad provides an easy way of configuring synthetic tests that deliver accurate metrics, alerting and SLA details.

RealLoad Synthetic Monitoring Features

You must first define a RealLoad ‘Test’ before you can add it to Synthetic Monitoring.

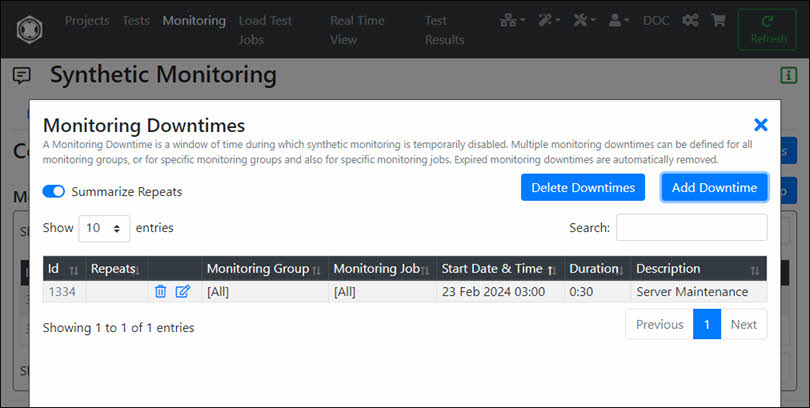

First add a Monitoring Group and then add one or more Monitoring Jobs to the Monitoring Group. If you want to run Monitoring Jobs with different intervals, you can also define several Monitoring Groups. Measuring Agents will be available for selection based on how many agents are already configured in different geographical locations.

Input Fields:

After you have added a monitoring group, its execution is switched off. Switch on the execution now:

In a Monitoring Group, first click on ‘Monitoring Jobs’ and then in the Monitoring Jobs area on ‘Add Monitoring Job’:

Then select a Test …

… And Configure and Define the Monitoring Job:

Input Fields:

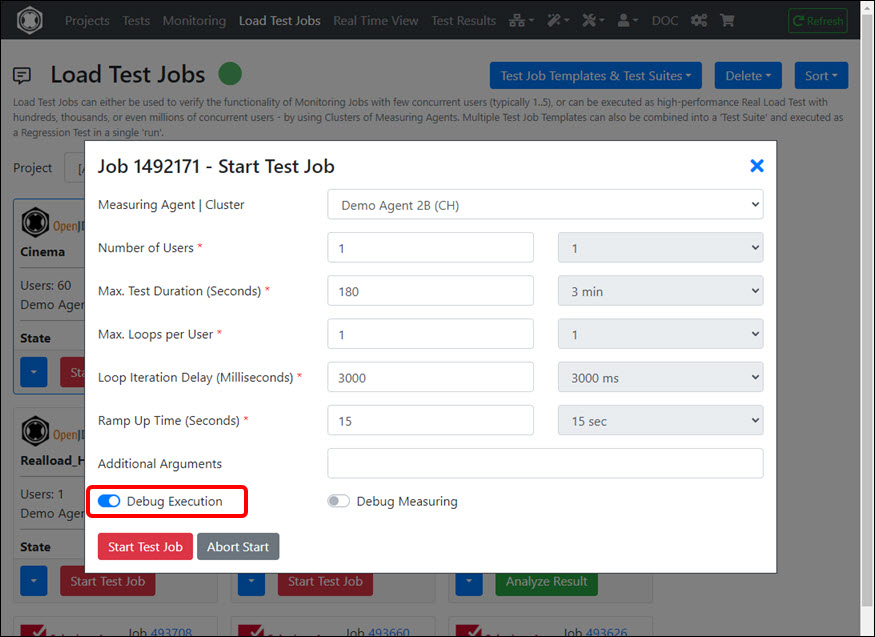

Normally you do not have to enter any “Additional Arguments” and leave “Debug Execution” and “Debug Measuring” switched off.

Tip: We recommend that you leave the options ‘Performance Warning Alert Threshold’ and ‘Performance Error Alert Threshold’ switched off for now and configure them later, after the newly defined Monitoring Job has been running for a few hours.

The following limits apply for Monitoring Jobs which are executed on shared Measuring Agents:

If you operate your own, private Measuring Agents (or have us operate them), these limits do not apply, meaning you can run Monitoring Jobs with hundreds or thousands of concurrent users.

Proceed as follows:

First click on the ‘Alert Groups & Devices’ tab and then click on ‘Add Alert Group’.

Input Fields:

Tip: For example, you can define two alert groups, one for operations and another for developers. In the developer alert group, you could turn off the switches ‘Report System Failures’ and ‘Report Execution Failures’.

First expand the area of the alert device type and then click the ‘+’ icon:

After adding the alert device, you should test it:

After an Alert Device has been added, you can assign it to one or more Alert Groups.

To receive Alert Notifications, you must assign the Alert Groups to Monitoring Groups and/or to Monitoring Jobs.

If you click on a bell icon you can only assign one Alert Group. However, you can click the icon multiple times to assign multiple Alert Groups.

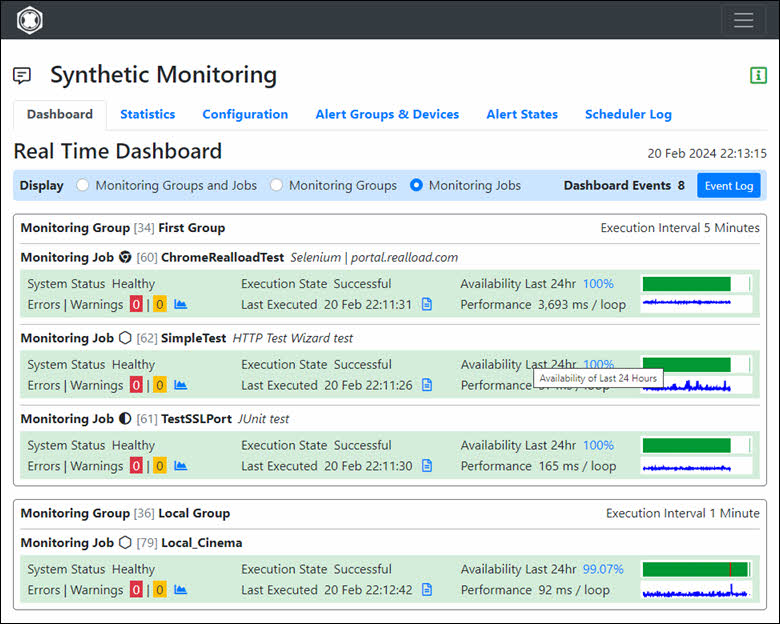

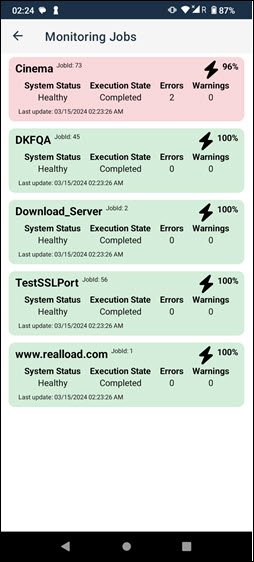

The created Monitoring Groups and their Monitoring Jobs are now available in the Real-Time Dashboard, as shown below, with the important metrics and the option to drill down into test results.

Custom dashboards can be created in third-party products like Grafana, using our WebSocket API.

The next part explains the statistics data, which is the source for many KPIs, for example to calculate the defined SLA for an application or an API service.

The portal server has three APIs:

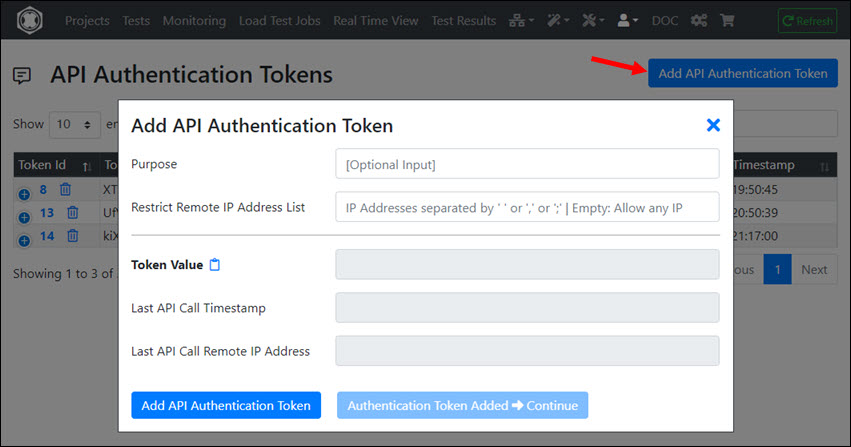

All APIs require so-called “Authentication Tokens” to verify the access authorization. If 5 or more invalid “Authentication Tokens” are sent within 60 seconds, the corresponding remote IP address is blocked for 30 minutes.
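To make the blocking rule easier to reason about when writing API clients, here is a small Python model of it. This is only a sketch of the documented behaviour (5 or more invalid tokens within 60 seconds lead to a 30 minute block per remote IP address). How the portal server actually implements the rule is not documented here, and the class name `InvalidTokenBlocker` and its methods are hypothetical.

```python
from collections import defaultdict, deque


class InvalidTokenBlocker:
    """Sliding-window model: too many invalid tokens block an IP address."""

    def __init__(self, max_invalid: int = 5, window_s: float = 60.0,
                 block_s: float = 30 * 60.0) -> None:
        self.max_invalid = max_invalid
        self.window_s = window_s
        self.block_s = block_s
        self._failures: dict[str, deque] = defaultdict(deque)
        self._blocked_until: dict[str, float] = {}

    def is_blocked(self, ip: str, now: float) -> bool:
        """Return True while the block for this IP address is still active."""
        return self._blocked_until.get(ip, 0.0) > now

    def record_invalid(self, ip: str, now: float) -> None:
        """Register one invalid token sent from ip at time now (seconds)."""
        failures = self._failures[ip]
        failures.append(now)
        # Drop failures that have fallen out of the observation window.
        while failures and now - failures[0] >= self.window_s:
            failures.popleft()
        if len(failures) >= self.max_invalid:
            self._blocked_until[ip] = now + self.block_s
            failures.clear()
```

For client code the practical consequence is to stop retrying immediately after an authentication failure instead of resending the same invalid token in a loop.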

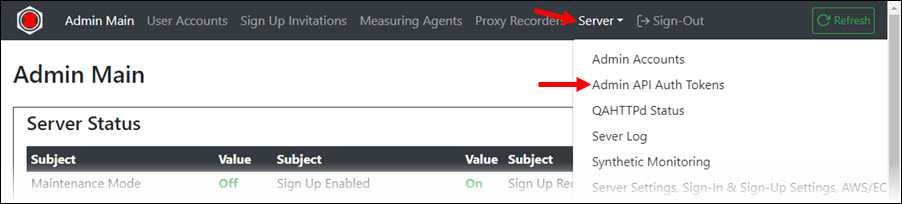

To perform a Remote Admin API call, you must first generate an “Admin API Auth Token” in the Administrator Menu of the portal. When generating the token you can enter a purpose (only used as information) and also restrict the remote IP addresses for which the token is valid. You can also specify whether the token has read/write access or read-only access.

The API supports the following functions (so-called “actions”):

| URL | HTTP Method |
|---|---|
| https://portal.realload.com/RemoteAdminAPI | POST |

All data are sent and received in JSON data format. The “authTokenValue” and the “action” must always be sent when an API call is made.

curl -v --request POST --header "Content-Type: application/json" --data "@getAllUserAccounts.json" https://portal.realload.com/RemoteAdminAPI

{

"authTokenValue": "8mKSz1UzaQg17kfu",

"action": "getAllUserAccounts"

}

{"allUserAccountsArray":

[{"userId":13,"nickname":"DKF","firstName":"Max","lastName":"Fischer","primaryEmail":"max@dkfqa.com","primarySMSPhone":"+41771111111","secondaryEmail":"","secondarySMSPhone":"","accountBlocked":false,"accountCreateTime":1538556183756,"lastLoginTime":1625181623869,"lastLoginIP":"127.0.0.1","pricePlanId":1,"accountExpiresTime":-1,"pricePlanTitle":"Unlimited"},{"userId":18,"nickname":"AX","firstName":"Alex","lastName":"Fischer","primaryEmail":"alexfischer66@yahoo.com","primarySMSPhone":"+41781111111","secondaryEmail":"","secondarySMSPhone":"","accountBlocked":false,"accountCreateTime":1539874749677,"lastLoginTime":1616111301975,"lastLoginIP":"127.0.0.1","pricePlanId":1,"accountExpiresTime":-1,"pricePlanTitle":"Unlimited"},{"userId":22,"nickname":"Kes","firstName":"Kesorn","lastName":"Fischer","primaryEmail":"gsklsta@yahoo.com","primarySMSPhone":"+66000000000","secondaryEmail":"","secondarySMSPhone":"","accountBlocked":false,"accountCreateTime":1605303204754,"lastLoginTime":1624389324770,"lastLoginIP":"127.0.0.1","pricePlanId":6,"accountExpiresTime":-1,"pricePlanTitle":"BASIC1"},{"userId":48,"nickname":"BET","firstName":"Bettina","lastName":"Meier","primaryEmail":"b123456@lucini.id.au","primarySMSPhone":"+61404905702","secondaryEmail":"","secondarySMSPhone":"","accountBlocked":false,"accountCreateTime":1623719604561,"lastLoginTime":-1,"lastLoginIP":"","pricePlanId":6,"accountExpiresTime":1625061600000,"pricePlanTitle":"BASIC1"}],

"isError":false}

If the API call is successful, then the response field “isError” is false. If a numeric field has a value of -1 (minus one), this means “no data” or “unlimited” depending on the context.
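The same call can be made from a script. The following Python sketch uses only the standard library and mirrors the curl example: it posts a JSON body containing “authTokenValue” and “action” to the Remote Admin API URL and checks “isError”. The helper names are hypothetical. Two things are assumptions rather than documented facts: that time fields such as “accountCreateTime” are Unix epoch milliseconds (inferred from the magnitude of the sample values), and that errors are reported in the JSON body rather than only through the HTTP status code.

```python
import json
import urllib.request
from datetime import datetime, timezone
from typing import Optional

ADMIN_API_URL = "https://portal.realload.com/RemoteAdminAPI"


def build_admin_request(auth_token: str, action: str, **fields) -> urllib.request.Request:
    """Build the POST request; auth token and action are always included."""
    payload = {"authTokenValue": auth_token, "action": action, **fields}
    return urllib.request.Request(
        ADMIN_API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def call_admin_api(auth_token: str, action: str, **fields) -> dict:
    """Send the request and return the decoded JSON response."""
    request = build_admin_request(auth_token, action, **fields)
    with urllib.request.urlopen(request, timeout=30) as response:
        result = json.load(response)
    if result.get("isError", True):
        raise RuntimeError(f"API call {action!r} failed: {result}")
    return result


def decode_time(value: int) -> Optional[datetime]:
    """Convert a time field to UTC; -1 means 'no data' or 'unlimited'."""
    if value == -1:
        return None
    # Assumption: values are epoch milliseconds.
    return datetime.fromtimestamp(value / 1000, tz=timezone.utc)
```

A call such as `call_admin_api(token, "getAllUserAccounts")["allUserAccountsArray"]` would then return the list of accounts, where `token` is an “Admin API Auth Token” generated in the portal.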

Specific Request Fields:

Specific Error Flags:

Specific Request Fields:

Specific Error Flags:

Specific Request Fields:

The license is successfully assigned to a user if either mapToUserEmailAddress or mapToUserMobilePhone matches a user account.

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"8mKSz1UzaQg17kfu",

"action":"addLicenseCertificateToUser",

"licenseProvider": "Real Load Pty Ltd / nopCommerce",

"mapToUserEmailAddress": "max@dkfqa.com",

"mapToUserMobilePhone": "+41771111111",

"licenseCertificate": "-----BEGIN CERTIFICATE-----\r\nMIIEnjCCA4agAwIBAgIEyDnukzANBgkqhkiG9w0BA ...... Hn/UMGAGRB6xF4w+TewYqTAZrdhi/WLyYwg==\r\n-----END CERTIFICATE-----"

}

JSON Response Example (Success Case):

{"licenseId":12,"cloudCreditLicenseId":-1,"userId":13,"isCloudCreditsLicense":false,"isError":false}

JSON Response Example (Error Case):

{"isError":true,"genericErrorText":"","writeAccessError":false,"licenseProviderError":false,"mapToUserError":false,"pricePlanError":false,"licenseCertificateAlreadyAddedError":false,"licenseCertificateError":true}
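Error responses like the one above carry one boolean flag per possible cause. A small helper can reduce such a response to the flags that are actually set. This is a sketch only; the function name is hypothetical and it assumes that every boolean field other than “isError” in an error response is an error flag.

```python
def raised_error_flags(response: dict) -> list:
    """Return the names of all error flags that are set to true."""
    if not response.get("isError", False):
        return []
    return sorted(
        name for name, value in response.items()
        if name != "isError" and value is True
    )


# The error response from the example above.
error_response = {
    "isError": True,
    "genericErrorText": "",
    "writeAccessError": False,
    "licenseProviderError": False,
    "mapToUserError": False,
    "pricePlanError": False,
    "licenseCertificateAlreadyAddedError": False,
    "licenseCertificateError": True,
}
print(raised_error_flags(error_response))  # ['licenseCertificateError']
```

The same helper works for the error responses of the other actions, since they follow the same flag pattern.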

Specific Request Fields:

Specific Error Flags:

JSON Response Example:

{

"isServerMaintenanceMode":false,

"isSignInSelectPricePlanFromMultipleValidLicenseCertificates":true,

"isSignInExpiredAccountCanEnterLicenseCertificate":true,

"isSignUpEnabled":true,

"isSignUpRequiresInvitationTicket":false,

"signUpDefaultPricePlanId":2,

"signUpDefaultAccountExpiresInDays":14,

"deleteExpiredUserAccountsAfterDays":183,

"isError":false

}

Specific Request Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"8mKSz1UzaQg17kfu",

"action":"setServerMaintenanceMode",

"serverMaintenanceMode":true

}

JSON Response Example (Success Case):

{"isServerMaintenanceMode":true,"isError":false}

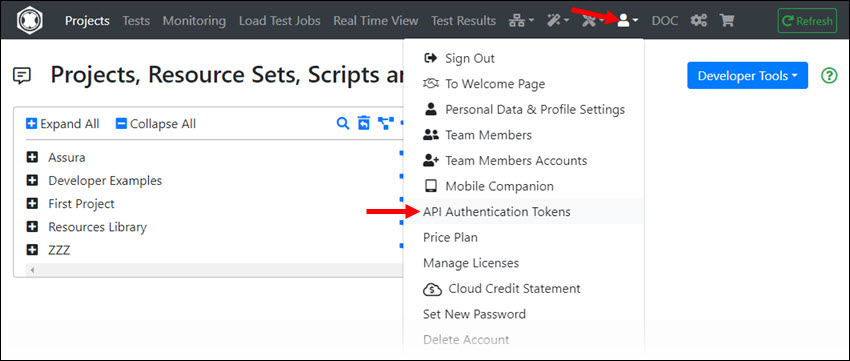

To perform a Remote User API call, you must first sign in to the portal and generate an “API Authentication Token”. When generating the token, you can enter a purpose (for information only) and also restrict the remote IP addresses for which the token is valid.

The API supports the following functions (so-called “actions”):

Common Functions:

Projects, Resource Sets and Files Functions:

Measuring Agents Functions:

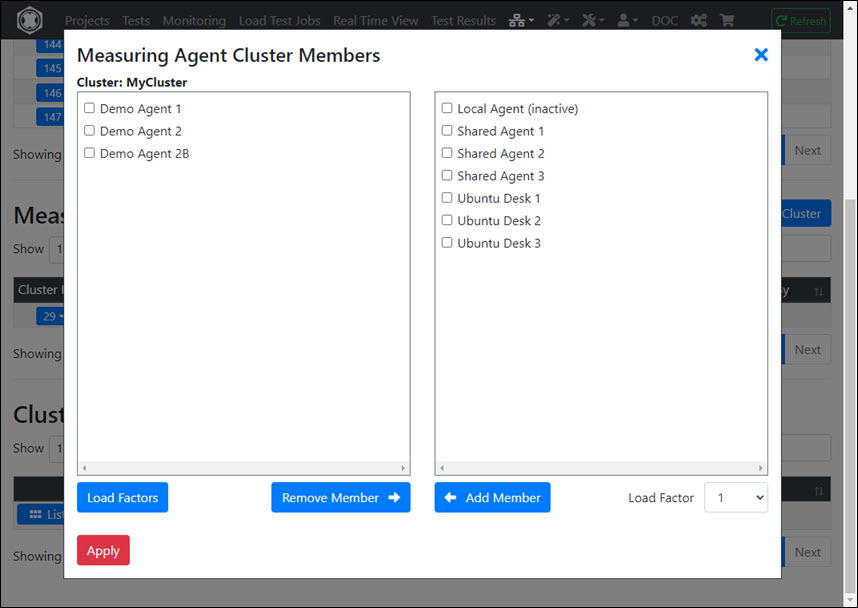

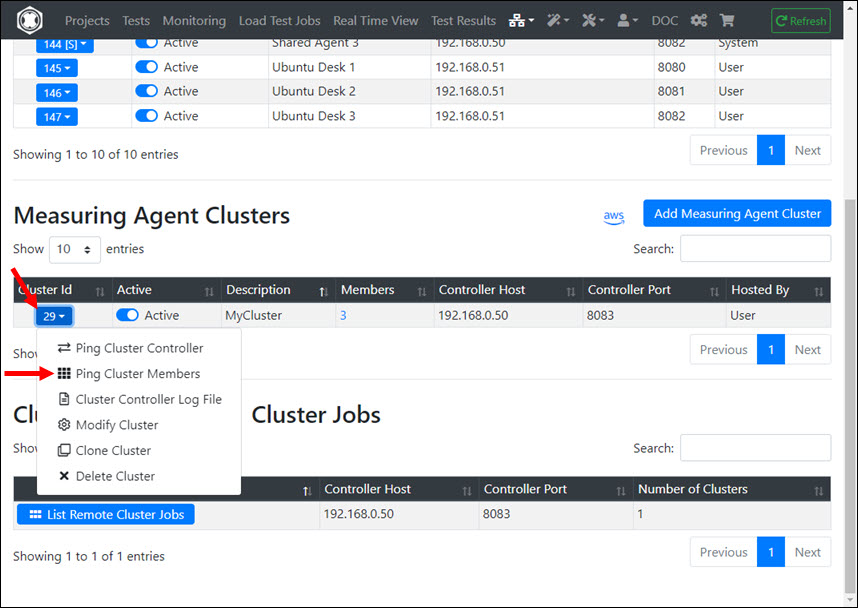

Measuring Agent Cluster Functions:

HTTP/S Remote Proxy Recorders Functions:

Load Test Jobs Functions:

Test Execution Suites Functions:

| URL | HTTP Method |
|---|---|
| https://portal.realload.com/RemoteUserAPI | POST |

All data are sent and received in JSON format. The “authTokenValue” and the “action” must always be sent when an API call is made.

curl -v --request POST --header "Content-Type: application/json" --data "{\"authTokenValue\":\"jPmFClqeDUXaEk8Q274q\",\"action\":\"getUserAccountInfo\"}" https://portal.realload.com/RemoteUserAPI

{

"authTokenValue": "jPmFClqeDUXaEk8Q274q",

"action": "getUserAccountInfo"

}

{

"userAccountInfo":{

"userId":48,

"nickname":"BET",

"firstName":"Bettina",

"lastName":"MeierHans",

"primaryEmail":"bettina@meierhans.id.au",

"primarySMSPhone":"+61401111111",

"secondaryEmail":"",

"secondarySMSPhone":"",

"accountBlocked":false,

"accountCreateTime":1623719604561,

"lastLoginTime":1625348376450,

"lastLoginIP":"127.0.0.1",

"pricePlanId":6,

"accountExpiresTime":1625953109397,

"pricePlanTitle":"BASIC1"

},

"isError":false

}

If the API call is successful, then the response field “isError” is false. If a numeric field has a value of -1 (minus one), this means “no data” or “unlimited” depending on the context.
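The curl call above can also be issued from a script. The sketch below uses only the Python standard library; the function names are hypothetical, and the 30 second timeout is an arbitrary choice. Building the request is kept separate from sending it, so the request body can be inspected without network access.

```python
import json
import urllib.request

REMOTE_USER_API_URL = "https://portal.realload.com/RemoteUserAPI"


def build_request(auth_token: str, action: str, **fields) -> urllib.request.Request:
    """Build the POST request; authTokenValue and action are always included."""
    payload = {"authTokenValue": auth_token, "action": action, **fields}
    return urllib.request.Request(
        REMOTE_USER_API_URL,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def call_api(auth_token: str, action: str, **fields) -> dict:
    """Send the request and decode the JSON response (performs network I/O)."""
    request = build_request(auth_token, action, **fields)
    with urllib.request.urlopen(request, timeout=30) as reply:
        return json.loads(reply.read().decode("utf-8"))


# Only builds the request; call_api(...) would actually contact the portal.
request = build_request("jPmFClqeDUXaEk8Q274q", "getUserAccountInfo")
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
```

Action-specific fields are passed as keyword arguments, for example `call_api(token, "deleteProject", projectId=113, moveToTrash=False)`.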

Specific Request Fields:

Specific Error Flags:

Specific Request Fields:

Specific Error Flags:

JSON Response Example:

{

"pricePlanInfo":{

"pricePlanId":6,

"title":"BASIC1",

"description":"",

"isDeprecated":false,

"lastModified":1625348413042,

"maxDiskSpaceMB":1024,

"maxSubUserAccounts":0,

"maxMeasuringAgentsOwnedByUser":3,

"maxRemoteProxyRecordersOwnedByUser":3,

"executeLoadJobsEnabled":true,

"executeMonitoringJobsEnabled":false,

"apiAccessEnabled":true,

"maxStartLoadJobsLast24h":24,

"maxUsersPerLoadJob":500,

"maxDurationPerLoadJob":1800

},

"isError":false

}

The unit for “maxDurationPerLoadJob” is seconds.
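The limits in “pricePlanInfo” can be used to check a planned load job before it is submitted. The following sketch is not part of the API; the function name is hypothetical, and treating -1 in a limit field as “unlimited” is an assumption derived from the general -1 convention described earlier.

```python
UNLIMITED = -1  # assumption: -1 in a limit field means "unlimited"


def plan_violations(plan: dict, users: int, duration_seconds: int) -> list:
    """Return the reasons why a planned load job exceeds the price plan limits."""
    problems = []
    if not plan.get("executeLoadJobsEnabled", False):
        problems.append("load jobs are disabled for this price plan")
    max_users = plan["maxUsersPerLoadJob"]
    if max_users != UNLIMITED and users > max_users:
        problems.append(f"{users} users exceed the limit of {max_users}")
    max_duration = plan["maxDurationPerLoadJob"]  # unit: seconds
    if max_duration != UNLIMITED and duration_seconds > max_duration:
        problems.append(f"{duration_seconds} s exceed the limit of {max_duration} s")
    return problems


# Relevant fields of the BASIC1 plan from the response example above.
plan = {"executeLoadJobsEnabled": True, "maxUsersPerLoadJob": 500, "maxDurationPerLoadJob": 1800}
print(plan["maxDurationPerLoadJob"] // 60, "minutes")            # 30 minutes
print(plan_violations(plan, users=200, duration_seconds=900))    # []
print(plan_violations(plan, users=1000, duration_seconds=3600))
```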

Specific Request Fields:

Specific Error Flags:

JSON Response Example:

{

"projectsArray": [

{

"projectId": 97,

"projectName": "Common",

"projectDescription": "",

"resourceSetsArray": [

{

"resourceSetId": 154,

"resourceSetName": "Input Files",

"resourceSetDescription": "",

"filesArray": [

{

"fileName": "InputFile.txt",

"fileSize": 233,

"fileHashCode": 1873256029,

"fileLastModified": 1613835992073

}

]

},

{

"resourceSetId": 155,

"resourceSetName": "Jar Files",

"resourceSetDescription": "",

"filesArray": [

{

"fileName": "com.dkfqs.tools.jar",

"fileSize": 578087,

"fileHashCode": -2033420926,

"fileLastModified": 1613838181727

}

]

},

{

"resourceSetId": 156,

"resourceSetName": "Plug-Ins",

"resourceSetDescription": "",

"filesArray": [

{

"fileName": "HttpSessionPlugin_ChangeCopyright.json",

"fileSize": 5321,

"fileHashCode": 1958407366,

"fileLastModified": 1613838287871

}

]

}

]

},

...

...

...

],

"isError": false

}
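The response above nests files inside resource sets inside projects. A generator is a convenient way to walk this tree, for example to locate a file or to add up the disk space in use. The sketch below operates on an already decoded response; the function name is hypothetical.

```python
from typing import Iterator, Tuple


def iter_files(projects_array: list) -> Iterator[Tuple[str, str, dict]]:
    """Yield (project name, resource set name, file info) for every file in the tree."""
    for project in projects_array:
        for resource_set in project.get("resourceSetsArray", []):
            for file_info in resource_set.get("filesArray", []):
                yield project["projectName"], resource_set["resourceSetName"], file_info


# Trimmed copy of the response example above.
response = {
    "projectsArray": [
        {
            "projectId": 97,
            "projectName": "Common",
            "resourceSetsArray": [
                {"resourceSetId": 154, "resourceSetName": "Input Files",
                 "filesArray": [{"fileName": "InputFile.txt", "fileSize": 233}]},
                {"resourceSetId": 155, "resourceSetName": "Jar Files",
                 "filesArray": [{"fileName": "com.dkfqs.tools.jar", "fileSize": 578087}]},
            ],
        }
    ],
    "isError": False,
}

for project_name, set_name, info in iter_files(response["projectsArray"]):
    print(f"{project_name} / {set_name} / {info['fileName']}")

total_bytes = sum(info["fileSize"] for _, _, info in iter_files(response["projectsArray"]))
print(total_bytes)  # 578320
```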

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"createProject",

"projectName":"My New Project",

"projectDescription": "Created by API call"

}

JSON Response Example (Success Case):

{"projectId":113,"isError":false}

JSON Response Example (Error Case):

{"isError":true,"genericErrorText":"","diskSpaceLimitExceededError":false,"projectNameError":true}

Specific Request Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"deleteProject",

"projectId":113,

"moveToTrash":false

}

Specific Request Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"getResourceSetsOfProject",

"projectId":97

}

JSON Response Example (Success Case):

{

"resourceSetsArray": [

{

"resourceSetId": 154,

"resourceSetName": "Input Files",

"resourceSetDescription": "",

"filesArray": [

{

"fileName": "InputFile.txt",

"fileSize": 233,

"fileHashCode": 1873256029,

"fileLastModified": 1613835992073

}

]

},

{

"resourceSetId": 155,

"resourceSetName": "Jar Files",

"resourceSetDescription": "",

"filesArray": [

{

"fileName": "com.dkfqs.tools.jar",

"fileSize": 578087,

"fileHashCode": -2033420926,

"fileLastModified": 1613838181727

}

]

},

{

"resourceSetId": 156,

"resourceSetName": "Plug-Ins",

"resourceSetDescription": "",

"filesArray": [

{

"fileName": "HttpSessionPlugin_ChangeCopyright.json",

"fileSize": 5321,

"fileHashCode": 1958407366,

"fileLastModified": 1613838287871

}

]

}

],

"isError": false

}

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"createResourceSet",

"projectId":97,

"resourceSetName":"My New Resource Set",

"resourceSetDescription": "Created by API call"

}

JSON Response Example (Success Case):

{"resourceSetId":172,"isError":false}

JSON Response Example (Error Case):

{"isError":true,"genericErrorText":"","resourceSetNameError":true,"projectIdError":false,"diskSpaceLimitExceededError":false}

Specific Request Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"deleteResourceSet",

"projectId":97,

"resourceSetId":172,

"moveToTrash":false

}

Specific Request Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"getFilesInfoOfResourceSet",

"projectId":23,

"resourceSetId":143

}

JSON Response Example:

{

"filesArray": [

{

"fileName": "DKFQSLibrary2.psm1",

"fileSize": 16339,

"fileHashCode": -1503445747,

"fileLastModified": 1603566144851

},

{

"fileName": "powershell-http-bern2.ps1",

"fileSize": 12900,

"fileHashCode": -1174212096,

"fileLastModified": 1603566162094

},

{

"fileName": "TestResult_powershell-http-bern2Neu_2020-10-24@21-06-04.json",

"fileSize": 14395,

"fileHashCode": -951574615,

"fileLastModified": 1603566379097

},

{

"fileName": "TestResult_powershell-http-bern2Neu_2020-10-24@21-09-45.json",

"fileSize": 55128,

"fileHashCode": 1499924815,

"fileLastModified": 1603566591322

}

],

"isError": false

}

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"createFile",

"projectId":23,

"resourceSetId":143,

"fileName":"test.txt",

"fileContentB64":"VGhpcyBpcyB0aGUgY29udGVudCBvZiB0aGUgZmlsZS4="

}

JSON Response Example (Success Case):

{

"fileName":"test.txt",

"fileSize":32,

"fileHashCode":-1460278014,

"fileLastModified":1625423562384,

"isError":false

}

JSON Response Example (Error Case):

{"isError":true,"genericErrorText":"","projectIdError":false,"resourceSetIdError":false,"diskSpaceLimitExceededError":false,"fileNameError":true}
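The “fileContentB64” field of the createFile request carries the file content Base64-encoded. A minimal sketch of building the request body is shown below; the function name is hypothetical, and the field names are taken from the request example above.

```python
import base64


def create_file_payload(auth_token: str, project_id: int, resource_set_id: int,
                        file_name: str, content: bytes) -> dict:
    """Build the createFile request body with Base64-encoded file content."""
    return {
        "authTokenValue": auth_token,
        "action": "createFile",
        "projectId": project_id,
        "resourceSetId": resource_set_id,
        "fileName": file_name,
        "fileContentB64": base64.b64encode(content).decode("ascii"),
    }


payload = create_file_payload(
    "jPmFClqeDUXaEk8Q274q", 23, 143, "test.txt",
    b"This is the content of the file.",
)
print(payload["fileContentB64"])  # VGhpcyBpcyB0aGUgY29udGVudCBvZiB0aGUgZmlsZS4=
```

The encoded string matches the request example, and the 32 bytes of input match the “fileSize” reported in the success response.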

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"getFile",

"projectId":23,

"resourceSetId":143,

"fileName":"test.txt"

}

JSON Response Example (Success Case):

{

"fileName":"test.txt",

"fileContentB64":"VGhpcyBpcyB0aGUgY29udGVudCBvZiB0aGUgZmlsZS4=",

"fileSize":32,

"fileHashCode":-1460278014,

"fileLastModified":1625423562384,

"isError":false

}
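On the receiving side, the content returned by getFile has to be decoded again. The sketch below also compares the decoded length with “fileSize”; that this field is the size of the decoded content in bytes is an assumption, although it is consistent with the example (32 bytes). The function name is hypothetical.

```python
import base64


def extract_file_content(response: dict) -> bytes:
    """Decode fileContentB64 and verify the length against fileSize."""
    content = base64.b64decode(response["fileContentB64"], validate=True)
    if len(content) != response["fileSize"]:
        raise ValueError(
            f"expected {response['fileSize']} bytes, decoded {len(content)}"
        )
    return content


# The success response from the example above.
get_file_response = {
    "fileName": "test.txt",
    "fileContentB64": "VGhpcyBpcyB0aGUgY29udGVudCBvZiB0aGUgZmlsZS4=",
    "fileSize": 32,
    "fileHashCode": -1460278014,
    "fileLastModified": 1625423562384,
    "isError": False,
}
print(extract_file_content(get_file_response).decode("utf-8"))
```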

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"deleteFile",

"projectId":23,

"resourceSetId":143,

"fileName":"test.txt",

"moveToTrash":false

}

JSON Response Example (Success Case):

{"fileDeleted":true,"isError":false}

JSON Response Example (Error Case):

{"isError":true,"genericErrorText":"","projectIdError":false,"resourceSetIdError":false,"fileNameError":true}

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"getMeasuringAgents"

}

JSON Response Example (Success Case):

{

"measuringAgentsArray": [

{

"agentId": 46,

"createdBySystem": false,

"ownerUserId": 13,

"agentActive": true,

"agentDescription": "Local Agent",

"agentHost": "127.0.0.1",

"agentPort": 8080,

"authToken": "OrKmpkbyNWEHok"

},

{

"agentId": 49,

"createdBySystem": false,

"ownerUserId": 13,

"agentActive": false,

"agentDescription": "Rasberry 1",

"agentHost": "192.168.0.51",

"agentPort": 8080,

"authToken": ""

},

{

"agentId": 50,

"createdBySystem": false,

"ownerUserId": 13,

"agentActive": true,

"agentDescription": "Ubuntu 10",

"agentHost": "192.168.0.110",

"agentPort": 8080,

"authToken": ""

},

{

"agentId": 51,

"createdBySystem": false,

"ownerUserId": 13,

"agentActive": true,

"agentDescription": "Ubuntu 11",

"agentHost": "192.168.0.111",

"agentPort": 8080,

"authToken": ""

}

],

"isError": false

}

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"getMinRequiredMeasuringAgentVersion"

}

JSON Response Example (Success Case):

{

"minRequiredMeasuringAgentVersion":"3.9.34",

"isError":false

}

Specific Request Fields:

Response Fields (JSON object “measuringAgent”):

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"addMeasuringAgent",

"agentDescription":"Ubuntu 12",

"agentHost":"192.168.0.112",

"agentPort":8080,

"agentActive": true,

"agentAuthToken": "nixda"

}

JSON Response Example (Success Case):

{

"measuringAgent": {

"agentId": 53,

"createdBySystem": false,

"ownerUserId": 13,

"agentActive": true,

"agentDescription": "Ubuntu 12",

"agentHost": "192.168.0.112",

"agentPort": 8080,

"authToken": "nixda"

},

"isError": false

}

Specific Request Fields:

Response Fields (JSON object “agentResponse”):

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"pingMeasuringAgent",

"agentId":48

}

JSON Response Example (Success Case):

{

"agentResponse": {

"pingFromRemoteIp": "83.150.39.44",

"pingFromRemoteUserId": 13,

"productVersion": "3.9.30",

"limitMaxUsersPerJob": 500,

"limitMaxJobDurationSeconds": 300,

"osName": "Linux",

"osVersion": "4.15.0-136-generic",

"javaVersion": "11.0.1",

"javaVendor": "Oracle Corporation",

"javaMaxMemory":"2048 MB",

"systemTime": 1625513238236,

"deltaTimeMillis": 841,

"agentStartupTimeStamp": 1622836702172,

"httpExecuteTimeMillis": 247

},

"isError": false

}

JSON Response Example (Error Case 1):

{

"isError": true,

"genericErrorText": "API V1 request to 192.168.0.51:8080 timed out",

"agentIdError": false,

"agentAccessDeniedError": false,

"agentNotReachableError": true,

"agentVersionOutdatedError": false

}

JSON Response Example (Error Case 2):

{

"isError": true,

"genericErrorText": "Min. measuring agent version required: 3.9.30",

"agentIdError": false,

"agentAccessDeniedError": false,

"agentNotReachableError": false,

"agentVersionOutdatedError": true

}
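A client can also compare the “productVersion” from a ping response with the minimum required version itself, for example to warn before a job is started. The sketch below assumes purely numeric, dot-separated version strings as in the examples; the comparison rule the portal actually applies is not documented here, and the function names are hypothetical.

```python
def parse_version(version: str) -> tuple:
    """Split a dotted version string such as "3.9.34" into a tuple of integers."""
    return tuple(int(part) for part in version.split("."))


def is_outdated(product_version: str, min_required: str) -> bool:
    """True if product_version is lower than min_required."""
    return parse_version(product_version) < parse_version(min_required)


print(is_outdated("3.9.30", "3.9.34"))  # True
print(is_outdated("3.9.34", "3.9.34"))  # False
print(is_outdated("3.10.0", "3.9.34"))  # False
```

Comparing integer tuples rather than raw strings matters for the last case: as plain text, "3.10.0" sorts before "3.9.34".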

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"setMeasuringAgentActive",

"agentId":46,

"agentActive":false

}

JSON Response Example (Success Case):

{"isError":false}

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"deleteMeasuringAgent",

"agentId":54

}

JSON Response Example (Success Case):

{"isError":false}

Specific Request Fields:

Response Fields (JSON array “measuringAgentClustersArray”):

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"getMeasuringAgentClusters"

}

JSON Response Example:

{

"measuringAgentClustersArray": [

{

"clusterId": 11,

"createdBySystem": false,

"ownerUserId": 13,

"clusterActive": true,

"clusterDescription": "C1",

"controllerHost": "192.168.0.50",

"controllerPort": 8083,

"controllerAuthToken": "aberaber",

"clusterMembersArray": [

{

"clusterMemberId": 59,

"loadFactor": 1,

"agentId": 64,

"agentActive": true,

"agentDescription": "Agent 1",

"agentHost": "192.168.0.10",

"agentPort": 8080,

"agentAuthToken": "OrKmAAbyNWEHok"

},

{

"clusterMemberId": 60,

"loadFactor": 1,

"agentId": 59,

"agentActive": true,

"agentDescription": "Ubuntu 10",

"agentHost": "192.168.0.110",

"agentPort": 8080,

"agentAuthToken": "asc7jhacab"

},

{

"clusterMemberId": 61,

"loadFactor": 1,

"agentId": 60,

"agentActive": true,

"agentDescription": "Ubuntu 11",

"agentHost": "192.168.0.111",

"agentPort": 8080,

"agentAuthToken": "66ascascsdac"

}

]

},

{

"clusterId": 14,

"createdBySystem": false,

"ownerUserId": 13,

"clusterActive": true,

"clusterDescription": "C2",

"controllerHost": "192.168.0.50",

"controllerPort": 8083,

"controllerAuthToken": "aberaber",

"clusterMembersArray": [

{

"clusterMemberId": 66,

"loadFactor": 1,

"agentId": 56,

"agentActive": true,

"agentDescription": "Test System",

"agentHost": "192.168.0.60",

"agentPort": 8080,

"agentAuthToken": "aberdoch"

},

{

"clusterMemberId": 67,

"loadFactor": 1,

"agentId": 59,

"agentActive": true,

"agentDescription": "Ubuntu 10",

"agentHost": "192.168.0.110",

"agentPort": 8080,

"agentAuthToken": "asc7jhacab"

}

]

}

],

"isError": false

}

Specific Request Fields:

Response Fields (JSON array “clusterControllersArray”):

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"getClusterControllers"

}

JSON Response Example:

{

"clusterControllersArray": [

{

"controllerHost": "192.168.0.33",

"controllerPort": 8083,

"controllerAuthToken": "2fasdtfffe",

"measuringAgentClustersArray": [

{

"clusterId": 11,

"clusterDescription": "C1",

"clusterActive": 1

},

{

"clusterId": 13,

"clusterDescription": "C2",

"clusterActive": 1

},

{

"clusterId": 14,

"clusterDescription": "C3",

"clusterActive": 1

}

]

},

{

"controllerHost": "192.168.0.50",

"controllerPort": 8083,

"controllerAuthToken": "asfsdgh763",

"measuringAgentClustersArray": [

{

"clusterId": 15,

"clusterDescription": "C4",

"clusterActive": 1

},

{

"clusterId": 16,

"clusterDescription": "C7",

"clusterActive": 1

}

]

}

],

"isError": false

}

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"getMinRequiredClusterControllerVersion"

}

JSON Response Example:

{

"minRequiredClusterControllerVersion":"4.0.4",

"isError":false

}

Specific Request Fields:

Response Fields (JSON object “controllerResponse”):

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"pingClusterController",

"controllerHost":"192.168.0.50",

"controllerPort":8083,

"controllerAuthToken":"hagsajjs99"

}

JSON Response Example (Success Case):

{

"controllerResponse": {

"pingFromRemoteIp": "192.168.0.100",

"pingFromRemoteUserId": 13,

"productVersion": "4.0.4",

"osName": "Linux",

"osVersion": "4.15.0-135-generic",

"javaVersion": "11.0.1",

"javaVendor": "Oracle Corporation",

"javaMaxMemory": "512 MB",

"systemTime": 1643406118552,

"deltaTimeMillis": 1120,

"controllerStartupTimeStamp": 1643322597013,

"httpExecuteTimeMillis": 249,

"clusterControllerOutdated": false

},

"isError": false

}

JSON Response Example (Error Case 1):

{

"isError": true,

"genericErrorText": "API call pingGetControllerInfo failed. Error code = 18, Error message = Invalid authentication token",

"controllerHostError": false,

"controllerVersionOutdatedError": false,

"controllerNotReachableError": true,

"controllerPortError": false

}

JSON Response Example (Error Case 2):

{

"isError": true,

"genericErrorText": "Min. cluster controller version required: 4.0.4",

"controllerHostError": false,

"controllerVersionOutdatedError": true,

"controllerNotReachableError": false,

"controllerPortError": false

}

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"addMeasuringAgentCluster",

"clusterActive":true,

"clusterDescription":"C7",

"controllerHost":"192.168.0.50",

"controllerPort":8083,

"controllerAuthToken":"aberaber",

"clusterMembersArray":[

{

"agentId":59,

"loadFactor":1

},

{

"agentId":60,

"loadFactor":1

}

]

}

JSON Response Example (Success Case):

{

"clusterId":16,

"clusterMembersArray":[

{

"clusterMemberId":71,

"agentId":59,

"loadFactor":1

},

{

"clusterMemberId":72,

"agentId":60,

"loadFactor":1

}

],

"isError":false

}

JSON Response Example (Error Case):

{

"isError": true,

"genericErrorText": "Invalid agentId = 101",

"controllerHostError": false,

"agentIdError": true,

"controllerPortError": false,

"loadFactorError": false,

"clusterDescriptionError": false

}
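The addMeasuringAgentCluster request lists each member as an “agentId” with a “loadFactor”, and the response adds a “clusterMemberId” per member. A sketch of building the request and of mapping agent ids to the returned member ids follows; both function names are hypothetical.

```python
def add_cluster_payload(auth_token: str, description: str, controller_host: str,
                        controller_port: int, controller_auth_token: str,
                        members: dict, active: bool = True) -> dict:
    """Build the request body; members maps agentId -> loadFactor."""
    return {
        "authTokenValue": auth_token,
        "action": "addMeasuringAgentCluster",
        "clusterActive": active,
        "clusterDescription": description,
        "controllerHost": controller_host,
        "controllerPort": controller_port,
        "controllerAuthToken": controller_auth_token,
        "clusterMembersArray": [
            {"agentId": agent_id, "loadFactor": load_factor}
            for agent_id, load_factor in members.items()
        ],
    }


def member_ids_by_agent(response: dict) -> dict:
    """Map agentId -> clusterMemberId from a successful response."""
    return {m["agentId"]: m["clusterMemberId"] for m in response["clusterMembersArray"]}


payload = add_cluster_payload("jPmFClqeDUXaEk8Q274q", "C7", "192.168.0.50", 8083,
                              "aberaber", {59: 1, 60: 1})
print(payload["clusterMembersArray"])

# The success response from the example above.
success = {
    "clusterId": 16,
    "clusterMembersArray": [
        {"clusterMemberId": 71, "agentId": 59, "loadFactor": 1},
        {"clusterMemberId": 72, "agentId": 60, "loadFactor": 1},
    ],
    "isError": False,
}
print(member_ids_by_agent(success))  # {59: 71, 60: 72}
```

Keeping this mapping is useful because removeMemberFromMeasuringAgentCluster expects the “clusterMemberId”, not the “agentId”.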

Specific Request Fields:

Response Fields (JSON object “clusterMember”):

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"addMemberToMeasuringAgentCluster",

"clusterId":17,

"agentId":64,

"loadFactor":1

}

JSON Response Example:

{

"clusterMember": {

"clusterMemberId": 75,

"agentId": 64,

"loadFactor": 1

},

"isError": false

}

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"removeMemberFromMeasuringAgentCluster",

"clusterId":17,

"clusterMemberId":75

}

JSON Response Example:

{"isError":false}

Specific Request Fields:

Response Fields (JSON object “controllerResponse”):

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"pingMeasuringAgentClusterMembers",

"clusterId":16

}

JSON Response Example (Success Case):

{

"controllerResponse": {

"productVersion": "4.0.4",

"clusterConnectResult": {

"measuringAgentClusterMemberArray": [

{

"clusterMemberId": 71,

"loadFactor": 1,

"agentId": 59,

"agentActive": true,

"agentDescription": "Ubuntu 10",

"agentHost": "192.168.0.110",

"agentPort": 8080,

"agentAuthToken": "agsdhagsj"

},

{

"clusterMemberId": 72,

"loadFactor": 1,

"agentId": 60,

"agentActive": true,

"agentDescription": "Ubuntu 11",

"agentHost": "192.168.0.111",

"agentPort": 8080,

"agentAuthToken": "nvbjnvbnn"

}

],

"connectSuccessfulClusterMemberArray": [

71,

72

],

"connectFailedClusterMemberArray": [],

"clusterConnectStartTimestamp": 1643410829270,

"clusterConnectDurationMillis": 79

},

"clusterActionResult": {

"actionSuccessfulClusterMemberArray": [

71,

72

],

"actionFailedClusterMemberArray": [],

"jsonResponseClusterMemberArray": [

{

"clusterMemberId": 71,

"jsonResponseObject": {

"productVersion": "4.0.4",

"systemTime": 1643410829340,

"deltaTimeMillis": -10,

"osName": "Linux",

"osVersion": "5.4.0-92-generic",

"javaVersion": "11.0.1",

"javaVendor": "Oracle Corporation",

"javaMaxMemory": "2048 MB",

"samplingGranularityMillis": 4000,

"isError": false,

"measuringAgentOutdated": false

}

},

{

"clusterMemberId": 72,

"jsonResponseObject": {

"productVersion": "4.0.4",

"systemTime": 1643410829351,

"deltaTimeMillis": -10,

"osName": "Linux",

"osVersion": "5.4.0-92-generic",

"javaVersion": "11.0.1",

"javaVendor": "Oracle Corporation",

"javaMaxMemory": "2048 MB",

"samplingGranularityMillis": 4000,

"isError": false,

"measuringAgentOutdated": false

}

}

],

"clusterActionStartTimestamp": 1643410829349,

"clusterActionDurationMillis": 43

},

"httpExecuteTimeMillis": 1778,

"clusterControllerOutdated": false

},

"isError": false

}

JSON Response Example (Error Case / Partially Failed):

{

"controllerResponse": {

"productVersion": "4.0.4",

"clusterConnectResult": {

"measuringAgentClusterMemberArray": [

{

"clusterMemberId": 71,

"loadFactor": 1,

"agentId": 59,

"agentActive": true,

"agentDescription": "Ubuntu 10",

"agentHost": "192.168.0.110",

"agentPort": 8080,

"agentAuthToken": "marderzahn"

},

{

"clusterMemberId": 72,

"loadFactor": 1,

"agentId": 60,

"agentActive": true,

"agentDescription": "Ubuntu 11",

"agentHost": "192.168.0.111",

"agentPort": 8080,

"agentAuthToken": "marderzahn"

}

],

"connectSuccessfulClusterMemberArray": [

72

],

"connectFailedClusterMemberArray": [

{

"clusterMemberId": 71,

"errorMessage": "Connection refused (Connection refused)"

}

],

"clusterConnectStartTimestamp": 1643414272214,

"clusterConnectDurationMillis": 97

},

"clusterActionResult": {

"actionSuccessfulClusterMemberArray": [

72

],

"actionFailedClusterMemberArray": [],

"jsonResponseClusterMemberArray": [

{

"clusterMemberId": 72,

"jsonResponseObject": {

"productVersion": "4.0.4",

"systemTime": 1643414272310,

"deltaTimeMillis": -8,

"osName": "Linux",

"osVersion": "5.4.0-92-generic",

"javaVersion": "11.0.1",

"javaVendor": "Oracle Corporation",

"javaMaxMemory": "2048 MB",

"samplingGranularityMillis": 4000,

"isError": false,

"measuringAgentOutdated": false

}

}

],

"clusterActionStartTimestamp": 1643414272311,

"clusterActionDurationMillis": 21

},

"httpExecuteTimeMillis": 1769,

"clusterControllerOutdated": false

}

}
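In the partially failed case, the interesting information is spread over several arrays inside “clusterConnectResult”. The sketch below condenses them into the reachable members and the failed members with their error messages. The function name is hypothetical, and the field names are those of the examples above.

```python
def summarize_cluster_ping(response: dict) -> dict:
    """Return reachable member descriptions and error messages of failed members."""
    connect = response["controllerResponse"]["clusterConnectResult"]
    descriptions = {
        member["clusterMemberId"]: member["agentDescription"]
        for member in connect["measuringAgentClusterMemberArray"]
    }
    reachable = [
        descriptions.get(member_id, str(member_id))
        for member_id in connect["connectSuccessfulClusterMemberArray"]
    ]
    failed = {
        descriptions.get(item["clusterMemberId"], str(item["clusterMemberId"])): item["errorMessage"]
        for item in connect["connectFailedClusterMemberArray"]
    }
    return {"reachable": reachable, "failed": failed}


# Trimmed copy of the partially failed response above.
partial = {
    "controllerResponse": {
        "clusterConnectResult": {
            "measuringAgentClusterMemberArray": [
                {"clusterMemberId": 71, "agentDescription": "Ubuntu 10"},
                {"clusterMemberId": 72, "agentDescription": "Ubuntu 11"},
            ],
            "connectSuccessfulClusterMemberArray": [72],
            "connectFailedClusterMemberArray": [
                {"clusterMemberId": 71, "errorMessage": "Connection refused (Connection refused)"}
            ],
        }
    }
}
print(summarize_cluster_ping(partial))
```

The summary reads “connectFailedClusterMemberArray” directly rather than relying on a top-level “isError” field, which does not appear in the partially failed example.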

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"setMeasuringAgentClusterActive",

"clusterId":16,

"clusterActive":true

}

JSON Response Example (Success Case):

{"isError":false}

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"deleteMeasuringAgentCluster",

"clusterId":16

}

JSON Response Example (Success Case):

{"isError":false}

Specific Request Fields:

Response Fields (JSON array “proxyRecordersArray”):

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"getProxyRecorders"

}

JSON Response Example:

{

"proxyRecordersArray": [

{

"recorderId": 3,

"createdBySystem": false,

"ownerUserId": 13,

"recorderDescription": "Erster",

"recorderProxyHost": "192.168.0.40",

"recorderProxyPort": 8082,

"recorderProxyAuthUsername": "",

"recorderProxyAuthPassword": "",

"recorderControlPort": 8081,

"recorderControlAuthToken": ""

},

{

"recorderId": 4,

"createdBySystem": false,

"ownerUserId": 13,

"recorderDescription": "proxy.realload.com",

"recorderProxyHost": "proxy.realload.com",

"recorderProxyPort": 8082,

"recorderProxyAuthUsername": "max.meier",

"recorderProxyAuthPassword": "123456",

"recorderControlPort": 8081,

"recorderControlAuthToken": "aZujkl97zuwert"

}

],

"isError": false

}

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"getMinRequiredProxyRecorderVersion"

}

JSON Response Example (Success Case):

{

"minRequiredProxyRecorderVersion":"0.2.2",

"isError":false

}

Specific Request Fields:

Response Fields (JSON object “proxyRecorder”):

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"addProxyRecorder",

"recorderDescription":"My New Proxy Recorder",

"recorderProxyHost":"192.168.0.148",

"recorderProxyPort":8082,

"recorderProxyAuthUsername":"max.meier",

"recorderProxyAuthPassword":"123456",

"recorderControlPort":8081,

"recorderControlAuthToken":"aZujkl97zuwert"

}

JSON Response Example (Success Case):

{

"proxyRecorder": {

"recorderId": 10,

"createdBySystem": false,

"ownerUserId": 13,

"recorderDescription": "My New Proxy Recorder",

"recorderProxyHost": "192.168.0.148",

"recorderProxyPort": 8082,

"recorderProxyAuthUsername": "max.meier",

"recorderProxyAuthPassword": "123456",

"recorderControlPort": 8081,

"recorderControlAuthToken": "aZujkl97zuwert"

},

"isError": false

}

Specific Request Fields:

Response Fields (JSON object “pongResponse”):

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"pingProxyRecorder",

"recorderId":4

}

JSON Response Example (Success Case):

{

"pongResponse": {

"pingFromRemoteIp": "83.150.39.44",

"pingFromRemoteUserId": 13,

"productVersion": "0.2.0",

"recorderComponentVersion": "1.1.0",

"isRecording": false,

"recordHostFilter": "www.dkfqa.com",

"numRecordedElements": 0,

"osName": "Linux",

"osVersion": "5.4.0-74-generic",

"javaMemoryMB": 2048,

"javaVersion": "11.0.1",

"javaVendor": "Oracle Corporation",

"systemTime": 1625529858405,

"deltaTimeMillis": 790,

"httpExecuteTimeMillis": 88

},

"isError": false

}

JSON Response Example (Error Case):

{

"isError": true,

"genericErrorText": "connect timed out",

"recorderNotReachableError": true,

"recorderIdError": false,

"recorderAccessDeniedError": false

}

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"deleteProxyRecorder",

"recorderId":10

}

JSON Response Example (Success Case):

{"isError":false}

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"getProxyRecordersClientCertificates"

}

JSON Response Example (Success Case):

{

"clientCertificatesArray": [

{

"certificateId": 1,

"ownerUserId": 13,

"recorderId": -1,

"originalCertFileName": "fischer@dkfqa.com.p12",

"certFileType": "PKCS#12",

"certFileDataB64": "MIIRQQ.....CAA=",

"certPassword": "12345678",

"certDescription": "Fischer's Cert",

"certActive": true,

"applyForHost": "192.168.0.100",

"applyForPort": 499

},

{

"certificateId": 3,

"ownerUserId": 13,

"recorderId": -1,

"originalCertFileName": "miller@dkfqa.com.p12",

"certFileType": "PKCS#12",

"certFileDataB64": "MIIRXB.....CCAA=",

"certPassword": "abcdefgh",

"certDescription": "",

"certActive": false,

"applyForHost": "www.werwaswo.com",

"applyForPort": 443

}

],

"isError": false

}

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"addProxyRecordersClientCertificate",

"recorderId": -1,

"certFileName": "felix@dkfqa.com.p12",

"certFileType": "PKCS#12",

"certFileDataB64": "MIIRQQIBAzCCEQ.....3dUWQICCAA=",

"certPassword": "mammamia",

"certDescription":"",

"certActive": true,

"applyForHost": "www.dkfqa.com",

"applyForPort": 443

}

JSON Response Example (Success Case):

{

"addedClientCertificate": {

"certificateId": 5,

"ownerUserId": 13,

"recorderId": -1,

"originalCertFileName": "felix@dkfqa.com.p12",

"certFileType": "PKCS#12",

"certDescription": "",

"certActive": true,

"applyForHost": "www.dkfqa.com",

"applyForPort": 443

},

"isError": false

}

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"setProxyRecordersClientCertificateActive",

"certificateId": 5,

"certActive": false

}

JSON Response Example (Success Case):

{

"updatedClientCertificate": {

"certificateId": 5,

"ownerUserId": 13,

"recorderId": -1,

"originalCertFileName": "felix@dkfqa.com.p12",

"certFileType": "PKCS#12",

"certDescription": "",

"certActive": false,

"applyForHost": "www.dkfqa.com",

"applyForPort": 443

},

"isError": false

}

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"modifyProxyRecordersClientCertificate",

"certificateId": 5,

"certDescription": "My Cert",

"applyForHost": "www.dkfqa.com",

"applyForPort": 444

}

JSON Response Example (Success Case):

{

"updatedClientCertificate": {

"certificateId": 5,

"ownerUserId": 13,

"recorderId": -1,

"originalCertFileName": "felix@dkfqa.com.p12",

"certFileType": "PKCS#12",

"certDescription": "My Cert",

"certActive": false,

"applyForHost": "www.dkfqa.com",

"applyForPort": 444

},

"isError": false

}

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"deleteProxyRecordersClientCertificate",

"certificateId": 5

}

JSON Response Example (Success Case):

{"isError": false}

JSON Response Example (Error Case):

{"isError": true, "genericErrorText": "Invalid certificate Id"}

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{

"authTokenValue":"jPmFClqeDUXaEk8Q274q",

"action":"getTestjobTemplates"

}

JSON Response Example (Success Case):

{
  "testjobTemplatesArray": [
    {
      "testjobTemplate": {
        "templateId": 10,
        "templateName": "SimpleTest 1VU",
        "templateDescription": "",
        "testjobProperties": {
          "testjobResultFileName": "",
          "executeMeasuringAgentClusterInfo": "",
          "testjobConcurrentUsers": "1",
          "testjobDescription": "SimpleTest",
          "testjobDebugMeasuring": "false",
          "testjobMaxTestDuration": "30",
          "isClusterJob": "",
          "testjobRampUpTime": "10",
          "localTestjobId": "",
          "testjobMaxLoopsPerUser": "1",
          "productVersion": "4.8.23",
          "remoteTestjobId": "",
          "testjobAdditionalArguments": "",
          "definedFromTestId": "384",
          "testjobSignature": "",
          "testjobDelayPerLoop": "1000",
          "testjobExitCode": "",
          "createTimeStamp": "1694549684261",
          "userInputFieldsFileName": "",
          "testjobType": "load test",
          "enableAutomaticFileSync": "true",
          "testjobState": "invalid",
          "isClusterControllerJobProperties": "",
          "testjobDebugExecution": "false",
          "executeOnUserMeasuringAgentId": "",
          "definedFromTimeZoneId": "Europe/Berlin",
          "executeMeasuringAgentInfo": "",
          "executeOnMeasuringAgentClusterId": ""
        },
        "hasUserInputFields": false
      },
      "testIsHealthy": true
    },
    {
      "testjobTemplate": {
        "templateId": 11,
        "templateName": "SimpleTestWithInputFields 10VU",
        "templateDescription": "",
        "testjobProperties": {
          "testjobResultFileName": "",
          "executeMeasuringAgentClusterInfo": "",
          "testjobConcurrentUsers": "10",
          "testjobDescription": "SimpleTestWithInputFields",
          "testjobDebugMeasuring": "false",
          "testjobMaxTestDuration": "60",
          "isClusterJob": "",
          "testjobRampUpTime": "10",
          "localTestjobId": "",
          "testjobMaxLoopsPerUser": "5",
          "productVersion": "4.8.23",
          "remoteTestjobId": "",
          "testjobAdditionalArguments": "",
          "definedFromTestId": "385",
          "testjobSignature": "",
          "testjobDelayPerLoop": "1000",
          "testjobExitCode": "",
          "createTimeStamp": "1695047319785",
          "userInputFieldsFileName": "InputFields_SimpleTestWithInputFields.json",
          "testjobType": "load test",
          "enableAutomaticFileSync": "true",
          "testjobState": "invalid",
          "isClusterControllerJobProperties": "",
          "testjobDebugExecution": "true",
          "executeOnUserMeasuringAgentId": "",
          "definedFromTimeZoneId": "Europe/Berlin",
          "executeMeasuringAgentInfo": "",
          "executeOnMeasuringAgentClusterId": ""
        },
        "hasUserInputFields": true,
        "userInputFieldsFile": {
          "useShortNames": false,
          "magicPattern": "UserInputFields",
          "productVersion": "4.8.23",
          "userInputFieldsArray": [
            {
              "useShortNames": false,
              "productVersion": "4.8.23",
              "label": "Input Host",
              "variableName": "vHost",
              "inputType": "string",
              "defaultValue": "126.3.4.5",
              "currentValue": "126.3.4.5"
            },
            {
              "useShortNames": false,
              "productVersion": "4.8.23",
              "label": "Input Port",
              "variableName": "vPort",
              "inputType": "integer",
              "defaultValue": "444",
              "currentValue": "444"
            }
          ]
        }
      },
      "testIsHealthy": true
    }
  ],
  "isError": false
}
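In the response above, the values inside `testjobProperties` are JSON strings, including the numeric ones such as `testjobConcurrentUsers`, while `templateId` and `hasUserInputFields` are native JSON types. A client that wants to pick a template usually has to convert the strings first. The sketch below shows one possible way to do that; `summarize_templates` and the keys of the dictionaries it returns are hypothetical, and it assumes `userInputFieldsFile` is present whenever `hasUserInputFields` is true, as in the example.

```python
def summarize_templates(reply):
    """Return one small dict per template in a getTestjobTemplates reply."""
    summaries = []
    for entry in reply.get("testjobTemplatesArray", []):
        template = entry["testjobTemplate"]
        props = template["testjobProperties"]

        # Collect variable name -> current value for templates with input fields.
        input_fields = {}
        if template.get("hasUserInputFields"):
            for field in template["userInputFieldsFile"]["userInputFieldsArray"]:
                input_fields[field["variableName"]] = field["currentValue"]

        summaries.append({
            "id": template["templateId"],
            "name": template["templateName"],
            # Property values arrive as strings, so convert them explicitly.
            "users": int(props["testjobConcurrentUsers"]),
            "max_duration": int(props["testjobMaxTestDuration"]),
            "healthy": entry["testIsHealthy"],
            "input_fields": input_fields,
        })
    return summaries
```

Applied to the response above, this would yield two entries: template 10 with 1 user and no input fields, and template 11 with 10 users and the input fields `vHost` and `vPort`.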

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{
  "authTokenValue": "jPmFClqeDUXaEk8Q274q",
  "action": "defineNewTestjobFromTemplate",
  "templateId": 10,
  "measuringAgentOrClusterId": 65,
  "isCluster": false,
  "jobDescription": "Simple Test"
}

JSON Response Example (Success Case):

{
  "newTestjobId": 33533,
  "isError": false
}

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{
  "authTokenValue": "jPmFClqeDUXaEk8Q274q",
  "action": "submitTestjob",
  "localTestjobId": 33534
}

JSON Response Example (Success Case):

{
  "isClusterJob": false,
  "agentResponse": {
    "remoteTestjobId": 24129,
    "httpExecuteTimeMillis": 338
  },
  "isError": false
}

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{
  "authTokenValue": "jPmFClqeDUXaEk8Q274q",
  "action": "makeTestjobReadyToRun",
  "localTestjobId": 33534
}

JSON Response Example (Success Case):

{
  "isClusterJob": false,
  "agentResponse": {
    "testjobProperties": {
      "testjobResultFileName": "",
      "executeMeasuringAgentClusterInfo": "",
      "testjobConcurrentUsers": "1",
      "testjobDescription": "Simple Test",
      "testjobDebugMeasuring": "false",
      "testjobMaxTestDuration": "30",
      "isClusterJob": "false",
      "testjobRampUpTime": "10",
      "localTestjobId": "33534",
      "testjobMaxLoopsPerUser": "1",
      "productVersion": "4.8.18",
      "remoteTestjobId": "24129",
      "testjobAdditionalArguments": "",
      "definedFromTestId": "384",
      "testjobSignature": "Z9B963yIj232nht1DIX5aSRt8m77QuXpvVA5736Tl9Wc7UZ7jOiU3l6UC7y3Nx6CXDDZB4qUg4FHDtTuHsD9xd0T/CSq/g==",
      "testjobDelayPerLoop": "1000",
      "testjobExitCode": "",
      "createTimeStamp": "1695050460395",
      "userInputFieldsFileName": "",
      "testjobType": "load test",
      "enableAutomaticFileSync": "true",
      "testjobState": "ready to run",
      "isClusterControllerJobProperties": "",
      "testjobDebugExecution": "false",
      "executeOnUserMeasuringAgentId": "65",
      "definedFromTimeZoneId": "Europe/Berlin",
      "executeMeasuringAgentInfo": "agent2.realload.com:8080",
      "executeOnMeasuringAgentClusterId": ""
    },
    "httpExecuteTimeMillis": 2871
  },
  "isError": false
}

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{
  "authTokenValue": "jPmFClqeDUXaEk8Q274q",
  "action": "startTestjob",
  "localTestjobId": 33534
}

JSON Response Example (Success Case):

{
  "isClusterJob": false,
  "agentResponse": {
    "testjobProperties": {
      "testjobResultFileName": "",
      "executeMeasuringAgentClusterInfo": "",
      "testjobConcurrentUsers": "1",
      "testjobDescription": "Simple Test",
      "testjobDebugMeasuring": "false",
      "testjobMaxTestDuration": "30",
      "isClusterJob": "false",
      "testjobRampUpTime": "10",
      "localTestjobId": "33534",
      "testjobMaxLoopsPerUser": "1",
      "productVersion": "4.8.18",
      "remoteTestjobId": "24129",
      "testjobAdditionalArguments": "",
      "definedFromTestId": "384",
      "testjobSignature": "Z9B963yIj232nht1DIX5aSRt8m77QuXpvVA5736Tl9Wc7UZ7jOiU3l6UC7y3Nx6CXDDZB4qUg4FHDtTuHsD9xd0T/CSq/g==",
      "testjobDelayPerLoop": "1000",
      "testjobExitCode": "",
      "createTimeStamp": "1695050460395",
      "userInputFieldsFileName": "",
      "testjobType": "load test",
      "enableAutomaticFileSync": "true",
      "testjobState": "running",
      "isClusterControllerJobProperties": "",
      "testjobDebugExecution": "false",
      "executeOnUserMeasuringAgentId": "65",
      "definedFromTimeZoneId": "Europe/Berlin",
      "executeMeasuringAgentInfo": "agent2.realload.com:8080",
      "executeOnMeasuringAgentClusterId": ""
    },
    "httpExecuteTimeMillis": 249
  },
  "isError": false
}
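Read together, the four preceding examples suggest a sequence: `defineNewTestjobFromTemplate` returns a `newTestjobId`, `submitTestjob` hands the job to the Measuring Agent and returns a `remoteTestjobId`, `makeTestjobReadyToRun` moves the job to the state "ready to run", and `startTestjob` moves it to "running". The sketch below chains these calls under that assumption. The function `run_job_from_template` is hypothetical, and so is the `call` parameter: a caller-supplied function that sends one request dictionary to the API and returns the decoded reply, since the endpoint and transport are not shown in this section.

```python
def run_job_from_template(call, auth_token, template_id, agent_id, description):
    """Define, submit, prepare and start a test job; return (job id, state).

    `call` is a hypothetical transport: it takes a request dict and
    returns the decoded JSON reply as a dict.
    """
    def invoke(action, **fields):
        reply = call({"authTokenValue": auth_token, "action": action, **fields})
        if reply.get("isError"):
            # genericErrorText may be absent for some actions (assumption).
            raise RuntimeError(f"{action} failed: {reply.get('genericErrorText', '')}")
        return reply

    created = invoke(
        "defineNewTestjobFromTemplate",
        templateId=template_id,
        measuringAgentOrClusterId=agent_id,
        isCluster=False,
        jobDescription=description,
    )
    job_id = created["newTestjobId"]

    # The remaining actions all address the job by its local id.
    invoke("submitTestjob", localTestjobId=job_id)
    invoke("makeTestjobReadyToRun", localTestjobId=job_id)
    started = invoke("startTestjob", localTestjobId=job_id)

    state = started["agentResponse"]["testjobProperties"]["testjobState"]
    return job_id, state
```

Passing the transport in as a parameter keeps the sequence testable without a live portal; a real client would supply a function that posts the JSON to the API URL.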

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{
  "authTokenValue": "jPmFClqeDUXaEk8Q274q",
  "action": "getMeasuringAgentTestjobs",
  "measuringAgentId": 65,
  "synchronizeLocalTestjobsState": true
}

JSON Response Example (Success Case):

{
  "agentResponse": {
    "allTestjobsArray": [
      {
        "testjobProperties": {
          "testjobResultFileName": "TestResult_RealloadTest_2023-09-09@23-45-34.json",
          "executeMeasuringAgentClusterInfo": "",
          "testjobConcurrentUsers": "1",
          "testjobDescription": "RealloadTest",
          "testjobDebugMeasuring": "false",
          "testjobMaxTestDuration": "60",
          "isClusterJob": "false",
          "testjobRampUpTime": "10",
          "localTestjobId": "30717",
          "testjobMaxLoopsPerUser": "1",
          "productVersion": "4.8.18",
          "remoteTestjobId": "15240",
          "testjobAdditionalArguments": "",
          "definedFromTestId": "379",
          "testjobSignature": "NeYJG4vf8/joAwz4nR4Fie3W0Qr7ijps25ONuk6F5BLghuD8/clPL9mBKc/ogQiic7wI8OXOf53Mi4YDCpg4dQzuaWQ==",
          "testjobDelayPerLoop": "1000",
          "testjobExitCode": "0",
          "createTimeStamp": "1694295923799",
          "userInputFieldsFileName": "",
          "testjobType": "load test",
          "enableAutomaticFileSync": "true",
          "testjobState": "completed",
          "isClusterControllerJobProperties": "",
          "testjobDebugExecution": "true",
          "executeOnUserMeasuringAgentId": "65",
          "definedFromTimeZoneId": "Europe/Berlin",
          "executeMeasuringAgentInfo": "agent2.realload.com:8080",
          "executeOnMeasuringAgentClusterId": ""
        },
        "testProperties": {
          "referencedResourceFilesList": [
            {
              "projectId": 130,
              "testplanId": 203,
              "fileName": "com.dkfqs.selenium.jar"
            },
            {
              "projectId": 130,
              "testplanId": 203,
              "fileName": "commons-io-2.13.0.jar"
            },
            {
              "projectId": 130,
              "testplanId": 203,
              "fileName": "hamcrest-core-1.3.jar"
            },
            {
              "projectId": 130,
              "testplanId": 203,
              "fileName": "com.dkfqs.tools.jar"
            },
            {
              "projectId": 130,
              "testplanId": 203,
              "fileName": "junit-4.13.2.jar"
            },
            {
              "projectId": 130,
              "testplanId": 203,
              "fileName": "selenium_bundle-4.12.1.jar"
            }
          ],
          "jsonConfigObject": {
            "productVersion": "4.8.20",
            "jUnitConfigExecutionClassArray": [
              {
                "productVersion": "4.8.20",
                "executingClassName": "RealloadTest",
                "testMethodNamesArray": [
                  "realload"
                ]
              }
            ],
            "seleniumWebBrowserType": "Firefox"
          },
          "executingScript": "RealloadTest.jar",
          "executingScriptResourceFileList": "",
          "createTimeStamp": "1694293491984",
          "testDescription": "",
          "definedFromTestplanId": "251",
          "productVersion": "4.8.20",
          "definedFromProjectId": "137",
          "visibleTestName": "RealloadTest",
          "scriptType": "Selenium4byJUnit4",
          "testId": "379"
        }
      },
      {
        "testjobProperties": {
          "testjobResultFileName": "TestResult_SimpleTest_2023-09-18@17-01-08.json",
          "executeMeasuringAgentClusterInfo": "",
          "testjobConcurrentUsers": "1",
          "testjobDescription": "Simple Test",
          "testjobDebugMeasuring": "false",
          "testjobMaxTestDuration": "30",
          "isClusterJob": "false",
          "testjobRampUpTime": "10",
          "localTestjobId": "33533",
          "testjobMaxLoopsPerUser": "1",
          "productVersion": "4.8.18",
          "remoteTestjobId": "24112",
          "testjobAdditionalArguments": "",
          "definedFromTestId": "384",
          "testjobSignature": "JYstx+myVNqbyVMr24u4Pi8IXkLGH0iXUquRh5+NL0veKs4DRJX/w2ZT77wLXF70J9YmTPzR7g==",
          "testjobDelayPerLoop": "1000",
          "testjobExitCode": "0",
          "createTimeStamp": "1695049264730",
          "userInputFieldsFileName": "",
          "testjobType": "load test",
          "enableAutomaticFileSync": "true",
          "testjobState": "completed",
          "isClusterControllerJobProperties": "",
          "testjobDebugExecution": "false",
          "executeOnUserMeasuringAgentId": "65",
          "definedFromTimeZoneId": "Europe/Berlin",
          "executeMeasuringAgentInfo": "agent2.realload.com:8080",
          "executeOnMeasuringAgentClusterId": ""
        },
        "testProperties": {
          "referencedResourceFilesList": [
            {
              "projectId": 130,
              "testplanId": 203,
              "fileName": "com.dkfqs.tools.jar"
            }
          ],
          "jsonConfigObject": {},
          "executingScript": "SimpleTest.jar",
          "executingScriptResourceFileList": "",
          "createTimeStamp": "1694549112155",
          "testDescription": "",
          "definedFromTestplanId": "251",
          "productVersion": "4.8.21",
          "definedFromProjectId": "137",
          "visibleTestName": "SimpleTest",
          "scriptType": "OpenJDK11Jar",
          "testId": "384"
        }
      },
      {
        "testjobProperties": {
          "testjobResultFileName": "TestResult_SimpleTest_2023-09-18@17-51-53.json",
          "executeMeasuringAgentClusterInfo": "",
          "testjobConcurrentUsers": "1",
          "testjobDescription": "Simple Test",
          "testjobDebugMeasuring": "false",
          "testjobMaxTestDuration": "30",
          "isClusterJob": "false",
          "testjobRampUpTime": "10",
          "localTestjobId": "33534",
          "testjobMaxLoopsPerUser": "1",
          "productVersion": "4.8.18",
          "remoteTestjobId": "24129",
          "testjobAdditionalArguments": "",
          "definedFromTestId": "384",
          "testjobSignature": "Z9B963yIj232nht1DIX5aSRt8m77QuXYjP9rn4OBSq/g==",
          "testjobDelayPerLoop": "1000",
          "testjobExitCode": "0",
          "createTimeStamp": "1695050460395",
          "userInputFieldsFileName": "",
          "testjobType": "load test",
          "enableAutomaticFileSync": "true",
          "testjobState": "completed",
          "isClusterControllerJobProperties": "",
          "testjobDebugExecution": "false",
          "executeOnUserMeasuringAgentId": "65",
          "definedFromTimeZoneId": "Europe/Berlin",
          "executeMeasuringAgentInfo": "agent2.realload.com:8080",
          "executeOnMeasuringAgentClusterId": ""
        },
        "testProperties": {
          "referencedResourceFilesList": [
            {
              "projectId": 130,
              "testplanId": 203,
              "fileName": "com.dkfqs.tools.jar"
            }
          ],
          "jsonConfigObject": {},
          "executingScript": "SimpleTest.jar",
          "executingScriptResourceFileList": "",
          "createTimeStamp": "1694549112155",
          "testDescription": "",
          "definedFromTestplanId": "251",
          "productVersion": "4.8.21",
          "definedFromProjectId": "137",
          "visibleTestName": "SimpleTest",
          "scriptType": "OpenJDK11Jar",
          "testId": "384"
        }
      }
    ],
    "httpExecuteTimeMillis": 141
  },
  "isError": false
}
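The job list above can be reduced to the finished jobs and their result files. The sketch below is one way to do this; `completed_jobs` and the keys of the returned dictionaries are hypothetical. It assumes that `createTimeStamp` is a Unix timestamp in milliseconds, which matches the dates embedded in the result file names but is not stated in this section, and that `testjobExitCode` is a numeric string once a job has the state "completed".

```python
from datetime import datetime, timezone


def completed_jobs(reply):
    """Return a summary of every completed job in a getMeasuringAgentTestjobs reply."""
    jobs = []
    for job in reply["agentResponse"]["allTestjobsArray"]:
        props = job["testjobProperties"]
        if props["testjobState"] != "completed":
            # Jobs in other states have an empty exit code and no result file yet.
            continue
        # Assumption: createTimeStamp is milliseconds since the Unix epoch.
        created_ms = int(props["createTimeStamp"])
        jobs.append({
            "local_id": int(props["localTestjobId"]),
            "test_name": job["testProperties"]["visibleTestName"],
            "exit_code": int(props["testjobExitCode"]),
            "result_file": props["testjobResultFileName"],
            "created_utc": datetime.fromtimestamp(created_ms // 1000, tz=timezone.utc),
        })
    return jobs
```

A caller could then pass each `local_id` to `getTestjobOutDirectoryFilesInfo` to list the output files of that job.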

Specific Request Fields:

Response Fields:

Specific Error Flags:

JSON Request Example:

{
  "authTokenValue": "jPmFClqeDUXaEk8Q274q",
  "action": "getTestjobOutDirectoryFilesInfo",
  "localTestjobId": 33534
}

JSON Response Example (Success Case):

{

"isClusterJob": false,

"measuringAgentData": {

"agentId": 65,

"createdBySystem": false,

"ownerUserId": 13,

"agentActive": true,

"agentDescription": "Demo Agent 2",

"agentHost": "agent2.realload.com",

"agentPort": 8080,

"authToken": "************",

"sharedMeasuringAgentTemplateId": -1,

"isLaunchedByCloudCredits": false

},

"filesInfoArray": [

{

"fileName": "DataCollector.err",

"fileSize": 0,

"lastModified": 1695051456261,

"mimeType": "application/octet-stream"

},

{

"fileName": "DataCollector.out",

"fileSize": 5647,

"lastModified": 1695052318516,

"mimeType": "application/octet-stream"

},

{

"fileName": "DataCollector.pid",

"fileSize": 8,

"lastModified": 1695051456273,

"mimeType": "application/octet-stream"

},