
Real Load News

- Regression testing using Test Templates and Suites

- Monitoring SSL endpoints

- New test execution APIs

- A simple use case for JUnit testing

- Test script involving OTPs? No problems

- Application High Latency Is Outage!

- All Purpose Interface!

- Real Load Synthetic Monitoring Generally Available!

- Support for SSL Cert Client authentication in Proxy Recorder

- Some SQL performance testing today?

- New Feature - URL Explorer

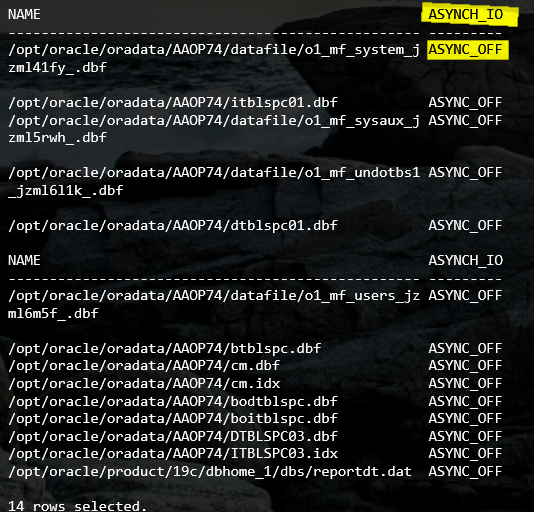

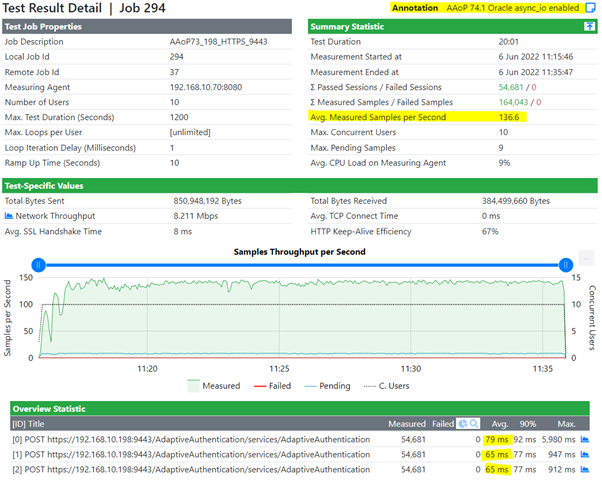

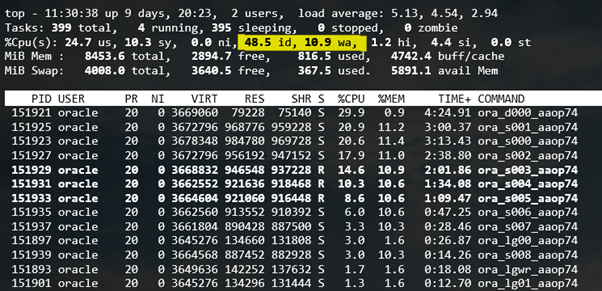

- Oracle and async I/O... A world of a difference

- Apache HTTPD on FreeBSD and Linux Load Test

- Real Load Portal Generally Available!

- Desktop Companion 0.24 Released

- My system just got faster!

- Desktop Companion Enhancements

- Desktop Companion Released

- Real Load Plugins Introduction

- Real Load Demo Portal online

Regression testing using Test Templates and Suites

Real Load v4.8.24 adds an exciting set of new features: Test Templates and Test Suites.

Test Templates allow you to:

- Pre-define a load test’s execution parameters, such as VUs, duration, etc.

- Tie those parameters to a specific performance test script: each Template represents one script.

Test Suites allow you to:

- Execute one or more test templates to build complex execution scenarios simulating a variety of activities.

- Organize multiple Test Templates in test groups. Execution within a test group can be parallel or sequential.

- Have multiple execution groups within a test suite.

- Configure sequential or parallel execution of test groups.
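To make the execution model concrete, here is a toy sketch in plain Java. It is not Real Load’s implementation or API: `Step`, `group` and the template names are hypothetical. Templates inside a group run either sequentially or in parallel, and a suite is simply a group of groups, so the same sequential/parallel choice applies one level up.

```java
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;
import java.util.concurrent.Callable;
import java.util.concurrent.ExecutorService;
import java.util.concurrent.Executors;
import java.util.concurrent.Future;

public class SuiteSketch {

    // A unit of work: a single (hypothetical) test template run, or a whole group of them
    interface Step {
        void run() throws Exception;
    }

    // Wraps several steps into one group that runs them sequentially or in parallel
    static Step group(boolean parallel, List<Step> steps) {
        return () -> runAll(steps, parallel);
    }

    static void runAll(List<Step> steps, boolean parallel) throws Exception {
        if (!parallel) {
            for (Step s : steps) {
                s.run(); // the next step starts only after the previous one has finished
            }
            return;
        }
        ExecutorService pool = Executors.newFixedThreadPool(Math.max(1, steps.size()));
        try {
            List<Future<Void>> futures = new ArrayList<>();
            for (Step s : steps) {
                Callable<Void> task = () -> { s.run(); return null; };
                futures.add(pool.submit(task));
            }
            for (Future<Void> f : futures) {
                f.get(); // wait for all parallel steps; a failure is rethrown wrapped in ExecutionException
            }
        } finally {
            pool.shutdown();
        }
    }

    // Group 1 runs two templates in parallel, then group 2 runs; the groups run sequentially
    static List<String> demo() throws Exception {
        List<String> executed = Collections.synchronizedList(new ArrayList<>());
        Step login = () -> executed.add("login");
        Step search = () -> executed.add("search");
        Step checkout = () -> executed.add("checkout");
        Step suite = group(false, List.of(
                group(true, List.of(login, search)),
                group(false, List.of(checkout))));
        suite.run();
        return executed;
    }

    public static void main(String[] args) throws Exception {
        System.out.println(demo()); // "checkout" always comes last
    }
}
```

Because a group is itself a `Step`, nesting groups inside groups needs no extra code, which mirrors how a suite can contain several execution groups.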

Once a Test Suite has been executed you can:

- Compare results at the test suite level with multiple previous executions.

- Compare results at the template level with multiple previous executions.

Test Suites allow you to implement regression testing by executing a specific set of performance tests using the very same execution parameters. You can even automate the execution of Test Suites by triggering them via the APIs exposed by the product.

All of this is documented in this short video (9 minutes) which walks you through these new features.

As always, feedback or questions are welcome using our contact form.

Monitoring SSL endpoints

Last week I wrote an article to illustrate how the ability to execute JUnit tests opens a whole new world of synthetic monitoring possibilities.

This week I’ve implemented another use case to detect SSL certificate-related issues, which I’ve actually seen cause problems in production environments. Most of them were caused by expired certificates or CRLs, affecting websites, APIs or VPNs.

I’ve implemented a series of JUnit tests to verify these attributes of an SSL endpoint:

- Check for weak SSL cipher suites

- Check for weak SSL/TLS protocols (TLS v1.0 or v1.1, for example)

- Check the SSL certificate expiration date. Alert if it is less than 30 days in the future

- Check whether the certificate ended up in the CRL by mistake

- Check that the currently published CRL is not expiring in the next 2 days

Below you’ll find the JUnit code to implement the above tests. Once the code is deployed to the Real Load platform you can configure a Synthetic Monitoring task to execute it regularly.

The code is intended for demonstration purposes only. Actual production code could be enhanced to perform additional checks on the presented SSL certificate or on the other certificates in the chain. The code could also be extended to retrieve the list of SSL endpoints to be validated from a document or an API of some sort, to simplify maintenance.

Happy monitoring!

Required dependencies (Maven)

```xml
<dependencies>
    <dependency>
        <groupId>junit</groupId>
        <artifactId>junit</artifactId>
        <version>4.12</version>
    </dependency>
    <dependency>
        <groupId>com.dkfqs.tools</groupId>
        <artifactId>tools</artifactId>
        <version>4.8.23</version>
    </dependency>
    <dependency>
        <groupId>org.bouncycastle</groupId>
        <artifactId>bcpkix-jdk15to18</artifactId>
        <version>1.68</version>
        <type>jar</type>
    </dependency>
</dependencies>
```

This is the code implementing the SSL checks mentioned above. You’ll notice a few variables at the top to set hostname, port and some other configuration parameters…

```java
import com.dkfqs.tools.crypto.EncryptedSocket;
import com.dkfqs.tools.javatest.AbstractJUnitTest;
import static com.dkfqs.tools.javatest.AbstractJUnitTest.isArgDebugExecution;
import static com.dkfqs.tools.logging.LogAdapterInterface.LOG_DEBUG;
import static com.dkfqs.tools.logging.LogAdapterInterface.LOG_ERROR;
import com.dkfqs.tools.logging.MemoryLogAdapter;
import java.io.ByteArrayInputStream;
import java.io.InputStream;
import java.net.URL;
import java.net.URLConnection;
import java.security.cert.Certificate;
import java.security.cert.CertificateFactory;
import java.security.cert.X509CRL;
import java.security.cert.X509CRLEntry;
import java.security.cert.X509Certificate;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.Calendar;
import java.util.Date;
import java.util.List;
import javax.net.ssl.SSLSocket;
import org.bouncycastle.asn1.ASN1InputStream;
import org.bouncycastle.asn1.DERIA5String;
import org.bouncycastle.asn1.DEROctetString;
import org.bouncycastle.asn1.x509.CRLDistPoint;
import org.bouncycastle.asn1.x509.DistributionPoint;
import org.bouncycastle.asn1.x509.DistributionPointName;
import org.bouncycastle.asn1.x509.Extension;
import org.bouncycastle.asn1.x509.GeneralName;
import org.bouncycastle.asn1.x509.GeneralNames;
import org.junit.After;
import org.junit.Assert;
import org.junit.Before;
import org.junit.Test;

public class TestSSLPort extends AbstractJUnitTest {

    private final static int TCP_CONNECT_TIMEOUT_MILLIS = 3000;
    private final static int SSL_HANDSHAKE_TIMEOUT_MILLIS = 2000;

    private String sslEndPoint = "www.realload.com";    // The SSL endpoint to validate
    private int sslEndpointPort = 443;                  // The TCP port of the SSL endpoint
    private String DNofCertWithCRLdistPoint = "R3";     // The DN of the issuing CA - Used for CRL checks
    private int certExpirationDaysAlertThreshold = -30; // How many days before cert expiration we should be alerted
    private int crlExpirationDaysAlertThreshold = -2;   // How many days before CRL expiration we should be alerted

    // Add all weak protocols and cipher suites here (Java standard names: TLS 1.0 is "TLSv1")
    private static final List<String> WEAK_PROTOCOLS = Arrays.asList("SSLv3", "TLSv1", "TLSv1.1");
    private static final List<String> WEAK_CIPHER_SUITES = Arrays.asList(
            "SSL_RSA_WITH_DES_CBC_SHA", "SSL_DHE_DSS_WITH_DES_CBC_SHA");

    private final MemoryLogAdapter log = new MemoryLogAdapter(); // default log level is LOG_INFO

    @Before
    public void setUp() throws Exception {
        if (isArgDebugExecution()) {
            log.setLogLevel(LOG_DEBUG);
        }
        openAllPurposeInterface();
        log.message(LOG_DEBUG, "Testing SSL endpoint " + sslEndPoint + ":" + sslEndpointPort);
    }

    @Test
    public void CheckForWeakCipherSuites() throws Exception {
        try (SSLSocket sslSocket = SSLConnect(sslEndPoint, sslEndpointPort)) {
            // Check the cipher suite negotiated with the server;
            // getEnabledCipherSuites() would only return the client's own settings
            String cipherSuite = sslSocket.getSession().getCipherSuite();
            Assert.assertFalse("Weak cipher suite: " + cipherSuite, WEAK_CIPHER_SUITES.contains(cipherSuite));
        }
    }

    @Test
    public void CheckForWeakSSLProtocols() throws Exception {
        try (SSLSocket sslSocket = SSLConnect(sslEndPoint, sslEndpointPort)) {
            // Check the protocol negotiated with the server (not the client's enabled protocols)
            String protocol = sslSocket.getSession().getProtocol();
            Assert.assertFalse("Weak protocol: " + protocol, WEAK_PROTOCOLS.contains(protocol));
        }
    }

    @Test
    public void CheckCertExpiration30Days() throws Exception {
        try (SSLSocket sslSocket = SSLConnect(sslEndPoint, sslEndpointPort)) {
            for (Certificate cert : sslSocket.getSession().getPeerCertificates()) {
                if (cert instanceof X509Certificate) {
                    X509Certificate x = (X509Certificate) cert;
                    if (x.getSubjectDN().toString().contains(sslEndPoint)) {
                        Date expDate = x.getNotAfter();
                        log.message(LOG_DEBUG, "Cert expiration: " + expDate + " " + sslEndPoint);
                        if (isAlertThresholdReached(expDate, certExpirationDaysAlertThreshold)) {
                            String msg = "Cert expiration: " + expDate + ". Less than "
                                    + Math.abs(certExpirationDaysAlertThreshold) + " days in future";
                            log.message(LOG_ERROR, msg);
                            Assert.fail(msg);
                        }
                    }
                }
            }
        }
    }

    @Test
    public void CheckCRLRevocationStatus() throws Exception {
        for (X509Certificate certToBeVerified : getCertsWithCRLdistPoint()) {
            checkCRLRevocationStatus(certToBeVerified);
        }
    }

    @Test
    public void CheckCRLUpdateDueLess2Days() throws Exception {
        for (X509Certificate certToBeVerified : getCertsWithCRLdistPoint()) {
            checkCRLUpdateDueLessXDays(certToBeVerified);
        }
    }

    @After
    public void tearDown() throws Exception {
        closeAllPurposeInterface();
        log.writeToStdoutAndClear();
    }

    private static SSLSocket SSLConnect(String host, int port) throws Exception {
        EncryptedSocket encryptedSocket = new EncryptedSocket(host, port);
        encryptedSocket.setTCPConnectTimeoutMillis(TCP_CONNECT_TIMEOUT_MILLIS);
        encryptedSocket.setSSLHandshakeTimeoutMillis(SSL_HANDSHAKE_TIMEOUT_MILLIS);
        return encryptedSocket.connect();
    }

    // Returns the peer certificates whose subject DN contains DNofCertWithCRLdistPoint
    private List<X509Certificate> getCertsWithCRLdistPoint() throws Exception {
        List<X509Certificate> certs = new ArrayList<>();
        try (SSLSocket sslSocket = SSLConnect(sslEndPoint, sslEndpointPort)) {
            for (Certificate cert : sslSocket.getSession().getPeerCertificates()) {
                if (cert instanceof X509Certificate
                        && ((X509Certificate) cert).getSubjectDN().toString().contains(DNofCertWithCRLdistPoint)) {
                    certs.add((X509Certificate) cert);
                }
            }
        }
        return certs;
    }

    // True once "now" is past (date + offsetDays); a negative offset means "N days before date"
    private static boolean isAlertThresholdReached(Date date, int offsetDays) {
        Calendar c = Calendar.getInstance();
        c.setTime(date);
        c.add(Calendar.DATE, offsetDays);
        return new Date().after(c.getTime());
    }

    // Downloads and parses the CRL published at the given URL
    private static X509CRL downloadCRL(String crlUrl) throws Exception {
        URLConnection connection = new URL(crlUrl).openConnection();
        connection.setConnectTimeout(TCP_CONNECT_TIMEOUT_MILLIS);
        connection.setReadTimeout(TCP_CONNECT_TIMEOUT_MILLIS);
        try (InputStream inStream = connection.getInputStream()) {
            return (X509CRL) CertificateFactory.getInstance("X.509").generateCRL(inStream);
        }
    }

    private void checkCRLRevocationStatus(X509Certificate certificate) throws Exception {
        // Loop through all CRL distribution endpoints
        for (String crlUrl : getCRLDistributionEndPoints(certificate)) {
            log.message(LOG_DEBUG, "CRL endpoint: " + crlUrl);
            X509CRLEntry revokedCertificate = downloadCRL(crlUrl).getRevokedCertificate(certificate.getSerialNumber());
            if (revokedCertificate != null) {
                log.message(LOG_ERROR, "Revoked: " + certificate.getSubjectDN());
                Assert.fail("Certificate revoked: " + certificate.getSubjectDN());
            }
            log.message(LOG_DEBUG, "Valid");
        }
    }

    private void checkCRLUpdateDueLessXDays(X509Certificate certificate) throws Exception {
        // Loop through all CRL distribution endpoints
        for (String crlUrl : getCRLDistributionEndPoints(certificate)) {
            Date nextUpdateDueBy = downloadCRL(crlUrl).getNextUpdate(); // may be null (optional CRL field)
            log.message(LOG_DEBUG, "CRL Next Update: " + nextUpdateDueBy + " " + crlUrl);
            if (nextUpdateDueBy != null && isAlertThresholdReached(nextUpdateDueBy, crlExpirationDaysAlertThreshold)) {
                String msg = "CRL " + crlUrl + " expiration: " + nextUpdateDueBy + ". Less than "
                        + Math.abs(crlExpirationDaysAlertThreshold) + " days in future";
                log.message(LOG_ERROR, msg);
                Assert.fail(msg);
            }
        }
    }

    private List<String> getCRLDistributionEndPoints(X509Certificate certificate) throws Exception {
        List<String> crlUrls = new ArrayList<>();
        byte[] extValue = certificate.getExtensionValue(Extension.cRLDistributionPoints.getId());
        if (extValue == null) {
            return crlUrls; // No CRL distribution points extension present
        }
        // The extension value is a DER OCTET STRING that wraps the CRLDistPoint structure
        byte[] crldpExtOctets;
        try (ASN1InputStream in = new ASN1InputStream(new ByteArrayInputStream(extValue))) {
            crldpExtOctets = ((DEROctetString) in.readObject()).getOctets();
        }
        CRLDistPoint distPoint;
        try (ASN1InputStream in = new ASN1InputStream(new ByteArrayInputStream(crldpExtOctets))) {
            distPoint = CRLDistPoint.getInstance(in.readObject());
        }
        for (DistributionPoint dp : distPoint.getDistributionPoints()) {
            DistributionPointName dpn = dp.getDistributionPoint();
            // Look for URIs in fullName
            if (dpn != null && dpn.getType() == DistributionPointName.FULL_NAME) {
                for (GeneralName genName : GeneralNames.getInstance(dpn.getName()).getNames()) {
                    if (genName.getTagNo() == GeneralName.uniformResourceIdentifier) {
                        crlUrls.add(DERIA5String.getInstance(genName.getName()).getString());
                    }
                }
            }
        }
        return crlUrls;
    }
}
```

P.S.: Some of the code above was inspired by this Stack Overflow post.
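If you’d like to try the expiration check without the `com.dkfqs.tools` dependency, a JDK-only sketch might look like the one below. It is a variant under stated assumptions, not the code above: it connects with the JDK’s default `SSLSocketFactory` (so the chain is also validated against the JVM trust store, and `createSocket` has no explicit connect timeout), it takes the first certificate of the peer chain as the server’s own certificate instead of matching the subject DN, and it uses `java.time` for the 30-day threshold. The class and method names are hypothetical.

```java
import java.security.cert.Certificate;
import java.security.cert.X509Certificate;
import java.time.Duration;
import java.time.Instant;
import javax.net.ssl.SSLSocket;
import javax.net.ssl.SSLSocketFactory;

public class CertExpiryCheck {

    // True if notAfter falls within the next `days` days, or is already in the past
    static boolean expiresWithin(Instant notAfter, Instant now, int days) {
        return !notAfter.isAfter(now.plus(Duration.ofDays(days)));
    }

    // Connects with the JDK's default SSLSocketFactory and returns the server's own certificate
    static X509Certificate fetchLeafCert(String host, int port) throws Exception {
        SSLSocketFactory factory = (SSLSocketFactory) SSLSocketFactory.getDefault();
        try (SSLSocket socket = (SSLSocket) factory.createSocket(host, port)) {
            socket.setSoTimeout(3000); // read timeout, also applies during the handshake
            socket.startHandshake();
            Certificate[] chain = socket.getSession().getPeerCertificates();
            return (X509Certificate) chain[0]; // the peer's own certificate comes first
        }
    }

    public static void main(String[] args) throws Exception {
        X509Certificate leaf = fetchLeafCert("www.realload.com", 443);
        Instant notAfter = leaf.getNotAfter().toInstant();
        System.out.println("Expires " + notAfter + ", alert: " + expiresWithin(notAfter, Instant.now(), 30));
    }
}
```

Keeping the date arithmetic in a pure function (`expiresWithin`) makes the threshold logic testable without any network access.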

New test execution APIs

Version 4.8.23 of the Real Load platform exposes new API methods that allow triggering Load Test scripts programmatically, via REST API calls. This enhancement is quite important, as it allows you to automate performance test execution as part of build processes, etc.

Another use case would be to regularly execute performance tests to maintain the data volume in application databases. One of the applications I work with (Outseer’s Fraud Manager on Premise) has internal housekeeping processes that, over time, remove runtime data from its DB. While this is to be expected, it might be detrimental to environments used for performance testing, where you might want to simulate production-like data volumes, or at least keep the data at a specific size.

In this case, a good way to keep the application data volume at a given size is to simulate, on a daily basis, the same number of transactions that actually occur in the production environment, by generating a similar volume of API calls.

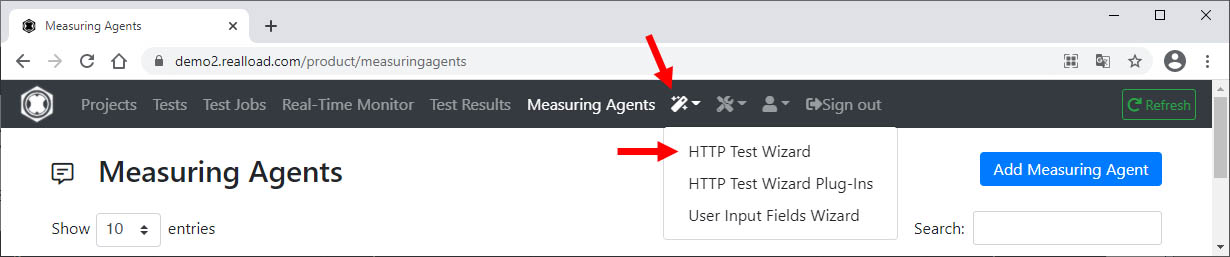

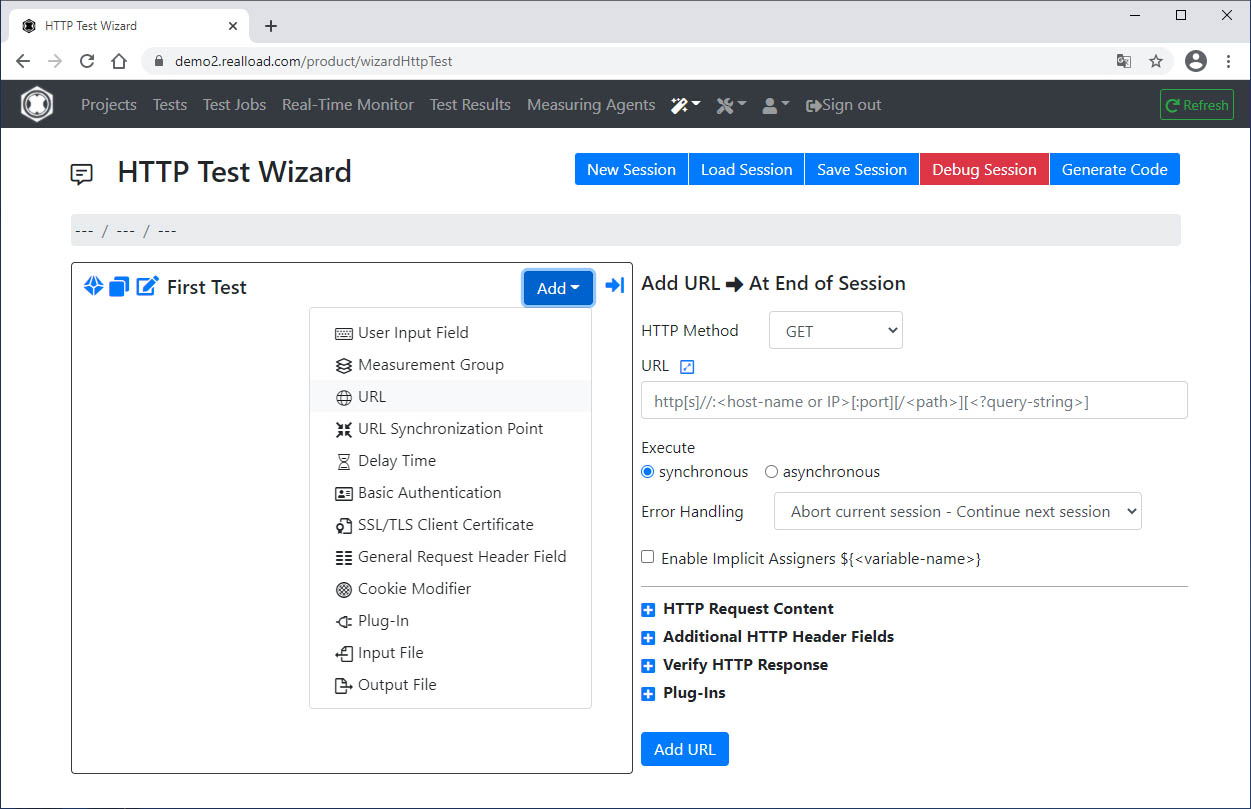

In this blog post I’ll illustrate with a simple PowerShell example how you can automate execution of a Real Load performance test using the newly exposed API methods.

The new API methods

As you can see in the v4.8.23 release notes, Real Load now exposes these new API methods:

- getTestjobTemplates

- defineNewTestjobFromTemplate

- submitTestjob

- makeTestjobReadyToRun

- startTestjob

- getMeasuringAgentTestjobs

- getTestjobOutDirectoryFilesInfo

- getTestjobOutDirectoryFile

- saveTestjobOutDirectoryFileToProjectTree

- deleteTestjob

Pre-requisites

Before getting started with the script, you’ll need to:

-

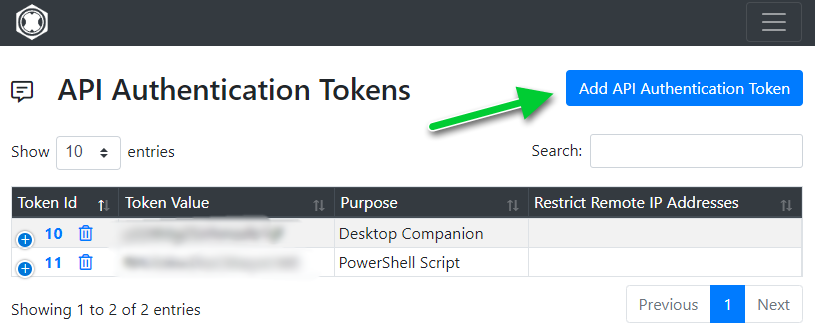

Obtain the API authentication token from the Real Load portal:

-

Configure a Load Testing template and note its ID

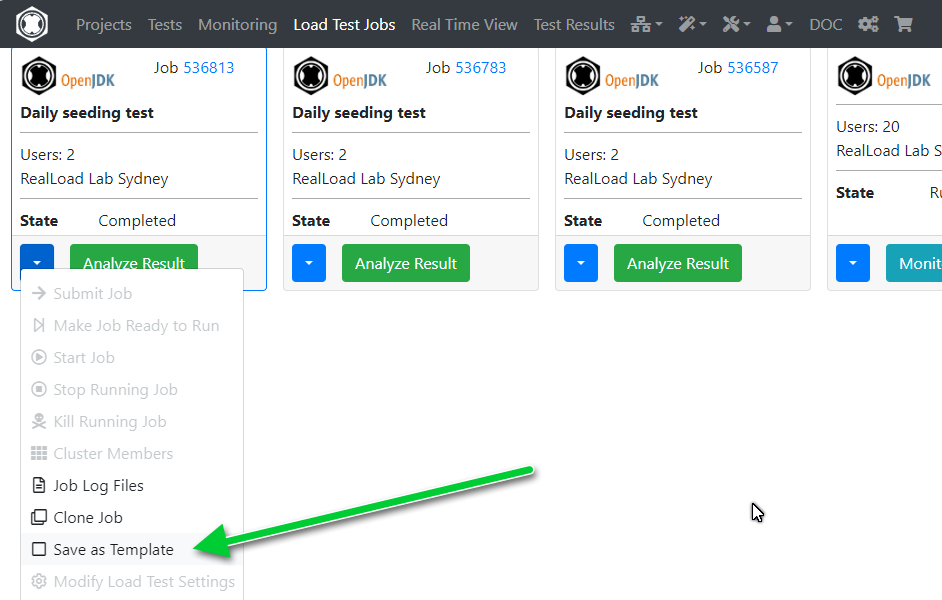

In the Load Test Jobs menu, look for a recently load test job that you’d like to trigger via the new APIs. To create a template from it, select the item pointed out in the screenshot:

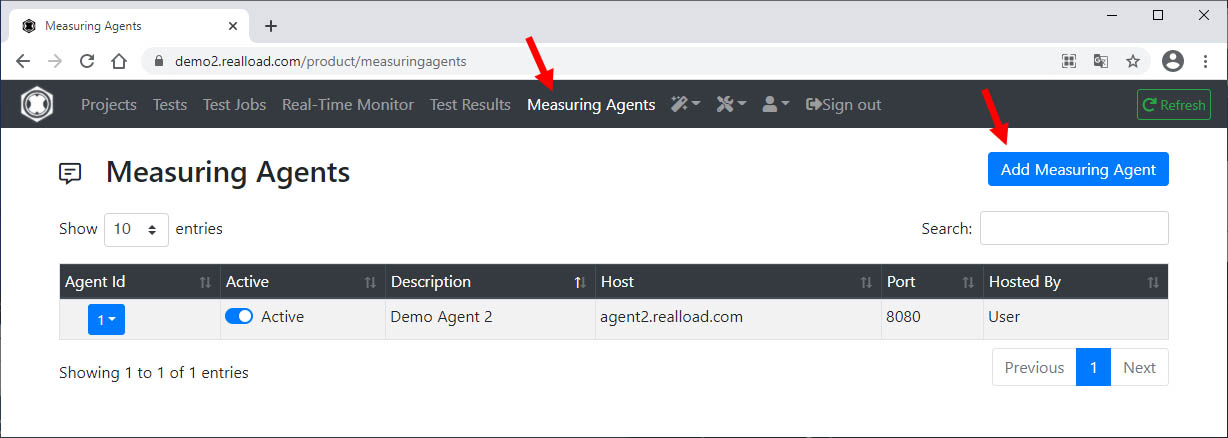

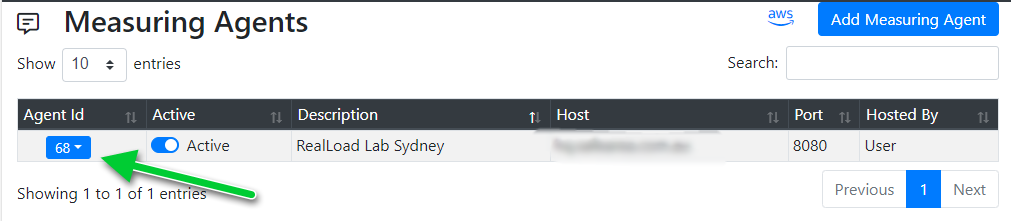

- Get the AgentID from where you want to generate execute the test

… as shown in this screenshot.

Prepare your script

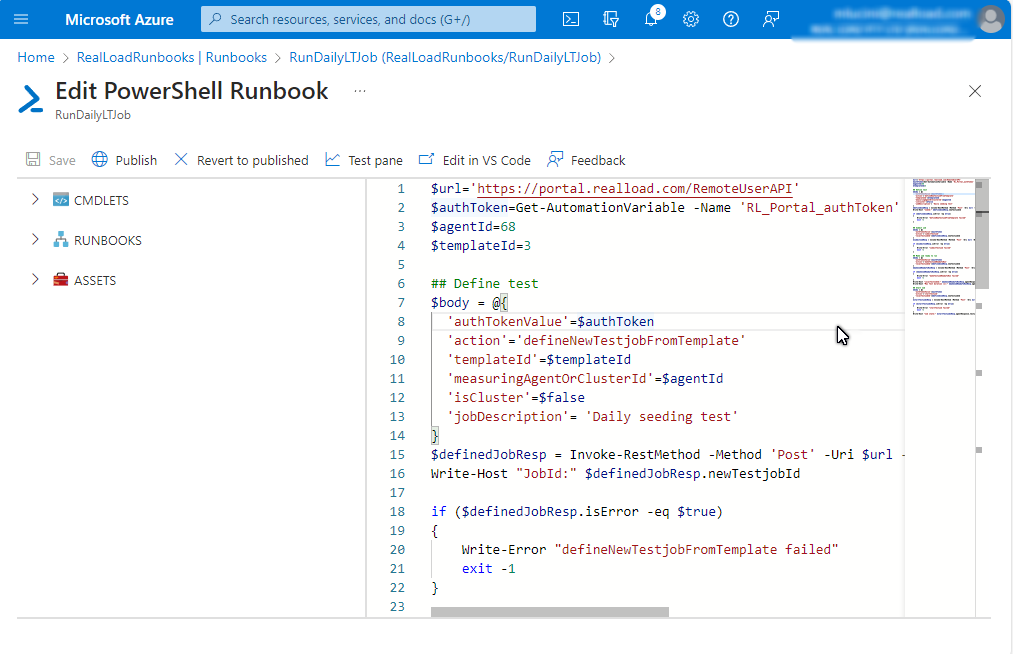

The next step involves preparing a script to invoke the Real Load API methods to trigger the test. I’ve used the following PowerShell script, which I’ll execute regularly as an Azure RunBook.

We’ll use 4 API methods:

- defineNewTestjobFromTemplate

- submitTestjob

- makeTestjobReadyToRun

- startTestjob

You’ll need to change the value of the agentId and templateId variables as needed. If you’re planning to run this script from an on-premises scheduler then you can hardcode the authToken value, instead of invoking Get-AutomationVariable.

```powershell
$url = 'https://portal.realload.com/RemoteUserAPI'
$authToken = Get-AutomationVariable -Name 'RL_Portal_authToken'
$agentId = 78
$templateId = 787676

## Define test
$body = @{
    'authTokenValue'            = $authToken
    'action'                    = 'defineNewTestjobFromTemplate'
    'templateId'                = $templateId
    'measuringAgentOrClusterId' = $agentId
    'isCluster'                 = $false
    'jobDescription'            = 'Daily seeding test'
}
$definedJobResp = Invoke-RestMethod -Method 'Post' -Uri $url -Body ($body | ConvertTo-Json) -ContentType "application/json"
if ($definedJobResp.isError -eq $true)
{
    Write-Error "defineNewTestjobFromTemplate failed"
    exit -1
}
Write-Host "JobId:" $definedJobResp.newTestjobId

## Submit job
$body = @{
    'authTokenValue' = $authToken
    'action'         = 'submitTestjob'
    'localTestjobId' = $definedJobResp.newTestjobId
}
$submitJobResp = Invoke-RestMethod -Method 'Post' -Uri $url -Body ($body | ConvertTo-Json) -ContentType "application/json"
if ($submitJobResp.isError -eq $true)
{
    Write-Error "submitTestjob failed"
    exit -1
}

## Make job ready to run
$body = @{
    'authTokenValue' = $authToken
    'action'         = 'makeTestjobReadyToRun'
    'localTestjobId' = $definedJobResp.newTestjobId
}
$makeJobReadyToRunResp = Invoke-RestMethod -Method 'Post' -Uri $url -Body ($body | ConvertTo-Json) -ContentType "application/json"
if ($makeJobReadyToRunResp.isError -eq $true)
{
    Write-Error "makeTestjobReadyToRun failed"
    exit -1
}
Write-Host "LocalTestJobId:" $makeJobReadyToRunResp.agentResponse.testjobProperties.localTestjobId
Write-Host "Max Test duration (s):" $makeJobReadyToRunResp.agentResponse.testjobProperties.testjobMaxTestDuration

## Start job
$body = @{
    'authTokenValue' = $authToken
    'action'         = 'startTestjob'
    'localTestjobId' = $definedJobResp.newTestjobId
}
$startTestjobResp = Invoke-RestMethod -Method 'Post' -Uri $url -Body ($body | ConvertTo-Json) -ContentType "application/json"
if ($startTestjobResp.isError -eq $true)
{
    Write-Error "startTestjob failed"
    exit -1
}
Write-Host "Job state:" $startTestjobResp.agentResponse.testjobProperties.testjobState
```
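For pipelines that run on the JVM rather than in an Azure RunBook, the same calls could be issued from Java. The sketch below assumes Java 11+ (`java.net.http.HttpClient`) and reuses only the endpoint URL, action names and field names from the PowerShell script; the JSON body is built by hand to avoid a library dependency, response parsing is left out, and the `RL_PORTAL_AUTH_TOKEN` environment variable is a hypothetical name. Treat it as an untested illustration, not an official client.

```java
import java.net.URI;
import java.net.http.HttpClient;
import java.net.http.HttpRequest;
import java.net.http.HttpResponse;
import java.util.LinkedHashMap;
import java.util.Map;

public class RealLoadApiSketch {

    static final String API_URL = "https://portal.realload.com/RemoteUserAPI";

    // Serializes a flat map of String/Number/Boolean values into a JSON object
    static String toJson(Map<String, Object> fields) {
        StringBuilder sb = new StringBuilder("{");
        for (Map.Entry<String, Object> e : fields.entrySet()) {
            if (sb.length() > 1) {
                sb.append(',');
            }
            Object v = e.getValue();
            sb.append(quote(e.getKey())).append(':')
              .append(v instanceof String ? quote((String) v) : String.valueOf(v));
        }
        return sb.append('}').toString();
    }

    // Quotes a string as a JSON literal, escaping backslashes and double quotes
    static String quote(String s) {
        return "\"" + s.replace("\\", "\\\\").replace("\"", "\\\"") + "\"";
    }

    // Same fields as the "Define test" step of the PowerShell script
    static Map<String, Object> defineJobBody(String authToken, long templateId, long agentId) {
        Map<String, Object> body = new LinkedHashMap<>();
        body.put("authTokenValue", authToken);
        body.put("action", "defineNewTestjobFromTemplate");
        body.put("templateId", templateId);
        body.put("measuringAgentOrClusterId", agentId);
        body.put("isCluster", false);
        body.put("jobDescription", "Daily seeding test");
        return body;
    }

    // POSTs a JSON body to the Remote User API and returns the raw JSON response
    static String post(HttpClient client, Map<String, Object> body) throws Exception {
        HttpRequest request = HttpRequest.newBuilder(URI.create(API_URL))
                .header("Content-Type", "application/json")
                .POST(HttpRequest.BodyPublishers.ofString(toJson(body)))
                .build();
        return client.send(request, HttpResponse.BodyHandlers.ofString()).body();
    }

    public static void main(String[] args) throws Exception {
        String authToken = System.getenv("RL_PORTAL_AUTH_TOKEN"); // hypothetical variable name
        HttpClient client = HttpClient.newHttpClient();
        System.out.println(post(client, defineJobBody(authToken, 787676, 78)));
        // Next: submitTestjob, makeTestjobReadyToRun and startTestjob, each with "localTestjobId"
    }
}
```

In a real pipeline you would parse `newTestjobId` and `isError` from each response with a JSON library and stop on the first error, exactly as the PowerShell script does.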

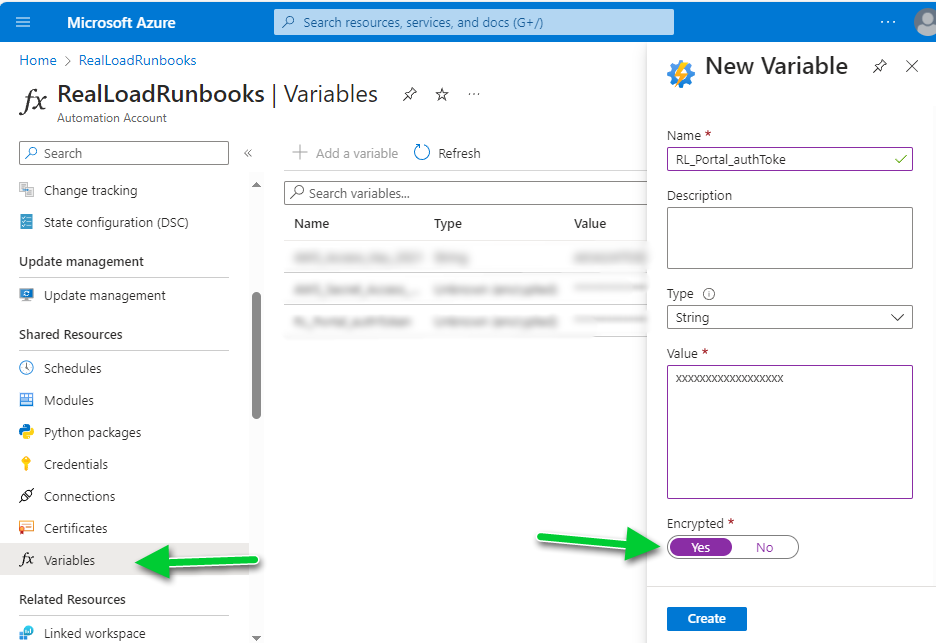

Configure RunBook variable

Configure an Azure RunBook variable with the same name as the one used in the PowerShell script, in this example “RL_Portal_authToken”. Make sure you set the type to String and select the “Encrypted” flag.

Create a new PowerShell RunBook

Now create a new RunBook of type PowerShell and paste the above code into it. Use the “Test pane” to test its execution and, once it runs successfully, save and publish the RunBook.

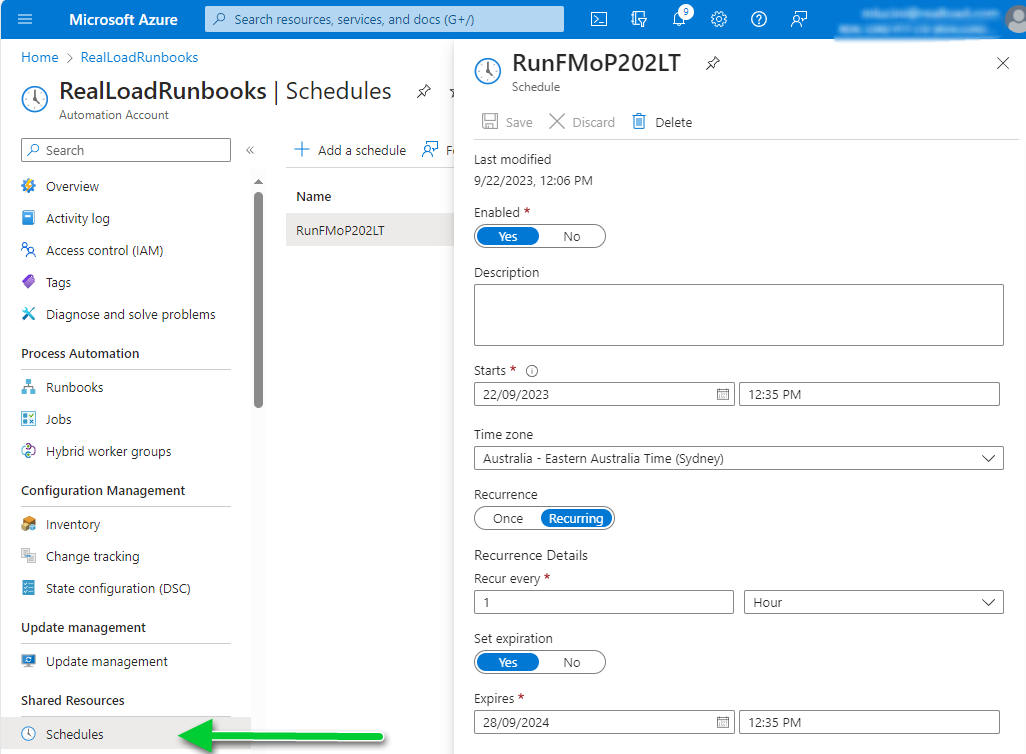

Create a schedule

Create a schedule to fit your needs. In this example, it’s an hourly schedule with an expiration date set (optional).

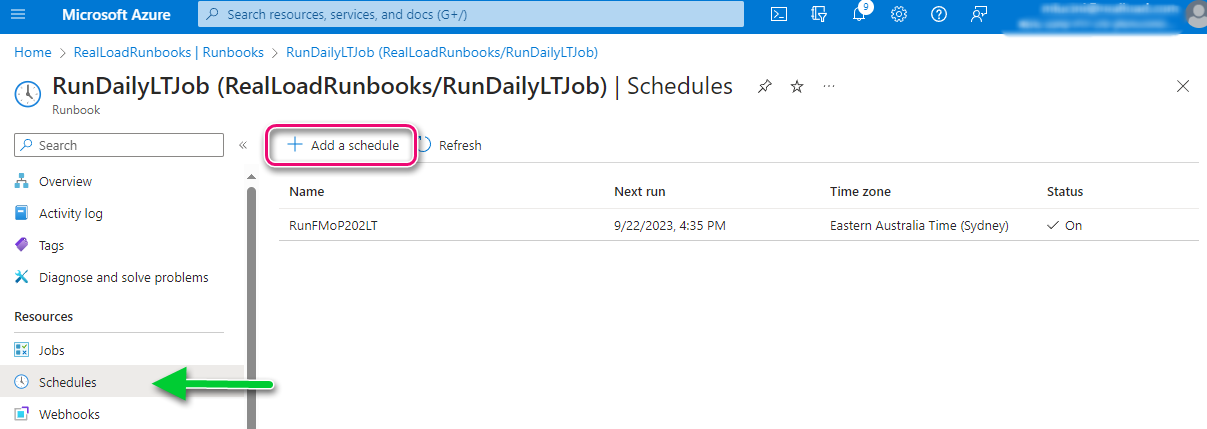

Associate the schedule to the RunBook

The last step is to associate the schedule to the RunBook:

Done. From now on the selected performance test will be executed according to the configured schedule. You’ll be able to see the results of the executed Load Test in the Real Load portal.

Other use cases

The use case illustrated in this blog post is a trivial one. You can use similar code to integrate Real Load into the build pipelines of almost any CI/CD tool you’re using.

A simple use case for JUnit testing

A key feature recently added to the Real Load product is support for JUnit tests. In a nutshell, it is possible to execute JUnit code as part of Synthetic Monitoring or even Performance Test scripts.

A simple example… Some DNS testing (again)

A while ago, I implemented a simple DNS record testing script using an HTTP Web Test Plugin. If I had to implement the same test today, I’d use a JUnit test instead, as it looks more straightforward to me.

Prepare the JUnit code

First you’ll need to prepare your JUnit test code. Since the JUnit tests must be compiled into a .jar archive, I created a new Maven project in my preferred IDE (NetBeans).

The dependencies I’ve used for the DNS tests are:

```xml
<dependencies>
    <dependency>
        <groupId>junit</groupId>
        <artifactId>junit</artifactId>
        <version>4.12</version>
    </dependency>
    <dependency>
        <groupId>dnsjava</groupId>
        <artifactId>dnsjava</artifactId>
        <version>3.5.2</version>
    </dependency>
</dependencies>
```

Below is the code implementing the DNS lookups against 4 specific servers. As you can see, each JUnit test executes the lookup against a different server:

```java
import java.net.UnknownHostException;
import static org.junit.Assert.assertEquals;
import org.junit.Test;
import org.xbill.DNS.*;

public class DNSNameChecker {

    @Test
    public void testGoogle8_8_8_8() {
        String cname = CNAMELookup("8.8.8.8", "kb.realload.com");
        assertEquals("www.realload.com.", cname);
    }

    @Test
    public void testGoogle8_8_4_4() {
        String cname = CNAMELookup("8.8.4.4", "kb.realload.com");
        assertEquals("www.realload.com.", cname);
    }

    @Test
    public void testOpenDNS208_67_222_222() {
        String cname = CNAMELookup("208.67.222.222", "kb.realload.com");
        assertEquals("www.realload.com.", cname);
    }

    @Test
    public void testSOA_ns10_dnsmadeeasy_com() {
        String cname = CNAMELookup("ns10.dnsmadeeasy.com", "kb.realload.com");
        assertEquals("www.realload.com.", cname);
    }

    // Queries the given DNS server for the CNAME record of the given name.
    // Returns the CNAME target (with trailing dot), or null if the lookup failed.
    private String CNAMELookup(String DNSserver, String CNAME) {
        try {
            Resolver dnsResolver = new SimpleResolver(DNSserver);
            Lookup l = new Lookup(Name.fromString(CNAME), Type.CNAME, DClass.IN);
            l.setResolver(dnsResolver);
            // Use a private cache: by default dnsjava shares one cache across all lookups,
            // so later tests could be answered without ever querying their own server
            l.setCache(new Cache(DClass.IN));
            l.run();
            if (l.getResult() == Lookup.SUCCESSFUL) {
                // We only expect one CNAME record, so we only return the first record.
                return l.getAnswers()[0].rdataToString();
            }
        } catch (UnknownHostException | TextParseException ex) {
            return null;
        }
        // Return null in all other cases, which means some sort of error
        // occurred while doing the lookup.
        return null;
    }
}
```

Test your code and then compile it into a .jar file.

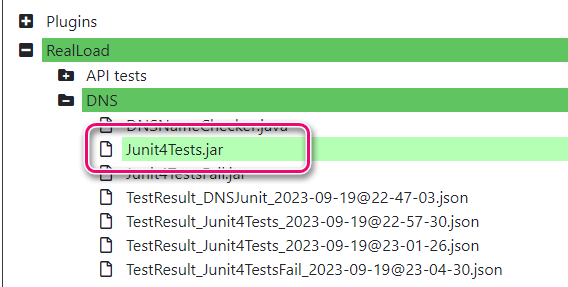

Upload the .jar file to Real Load portal

The next step is to upload the .jar file containing the JUnit tests to the Real Load portal.

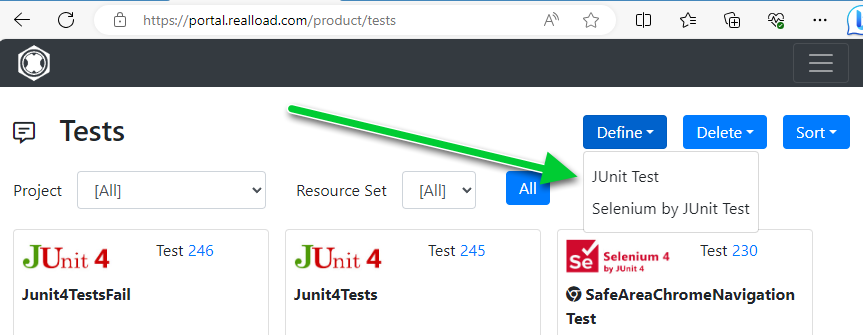

Configure the test

Now go to the Tests tab and define the new JUnit test:

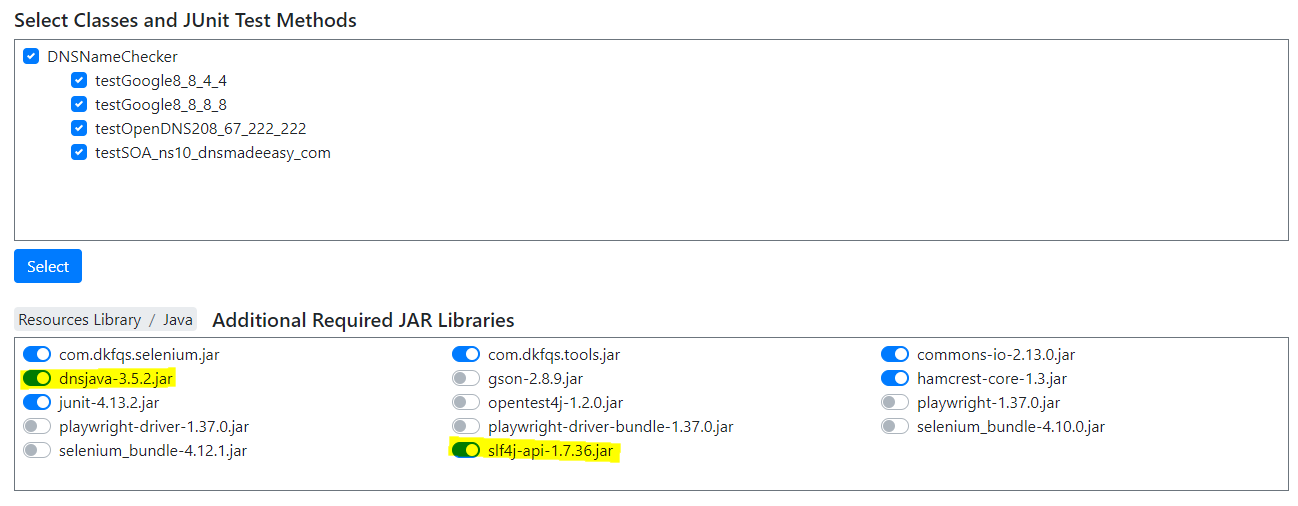

Select the .jar file containing the tests. You’ll then be presented with a page listing all tests found in the .jar file. Also make sure you select any dependencies required by your JUnit code, in this example the dnsjava and the slf4j jar files.

To verify that the test works, you might want to execute it once as a Performance Test (1 VU, 1 cycle).

Configure Synthetic Monitoring job

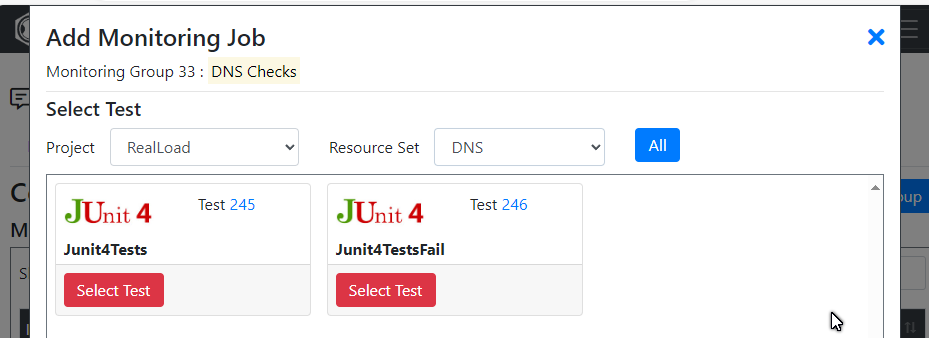

Now that the test is defined, add a Monitoring Job to execute your JUnit test at regular intervals. Assuming you already have a Monitoring Group defined, you’ll be able to add a JUnit test by selecting the test you’ve just configured:

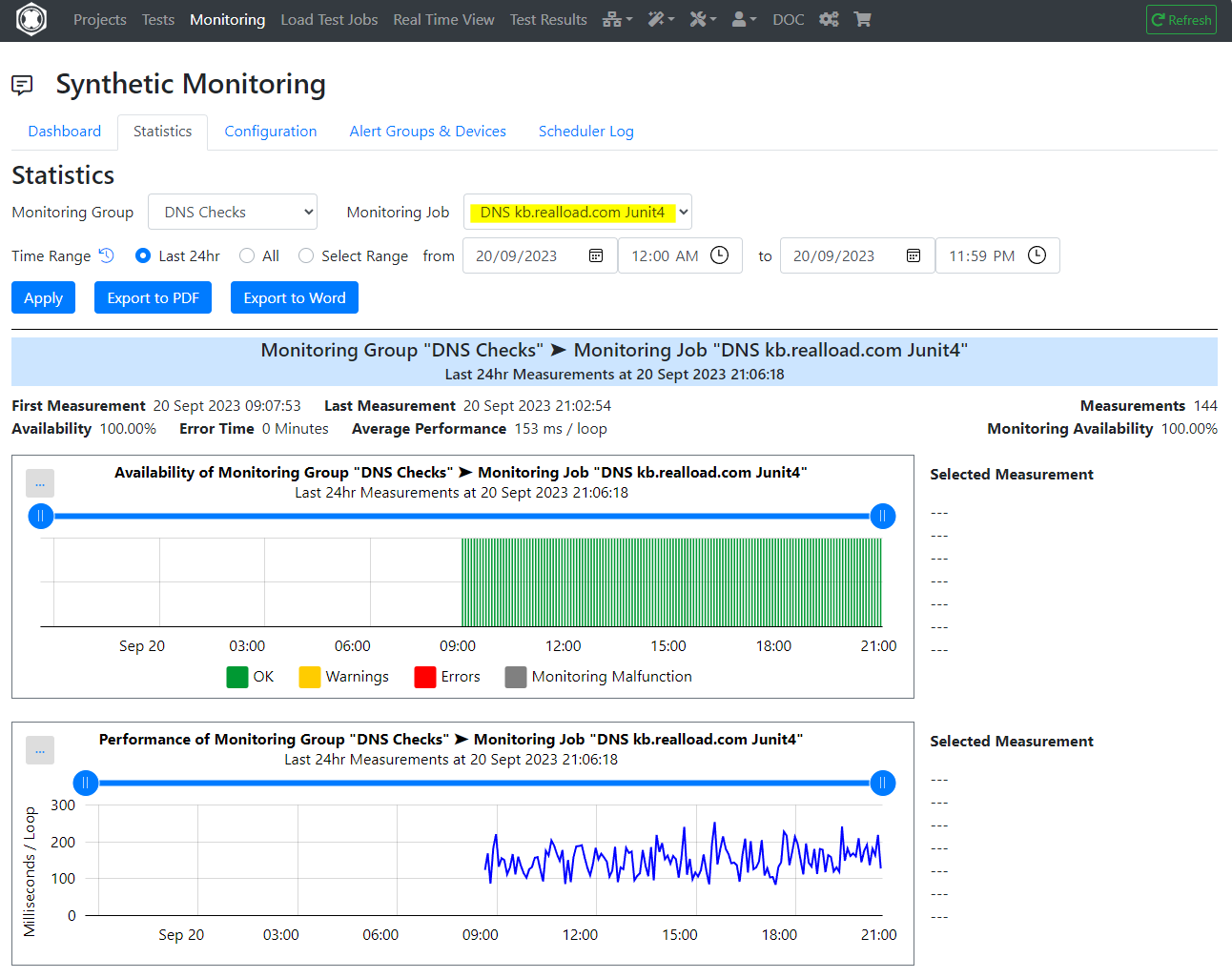

Review results

Done! Your JUnit tests will now execute as per configuration of your Monitoring Group, and you’ll be able to review historic data via the Real Load portal:

Test script involving OTPs? No problems

Say that you have an application that requires customers to authenticate using a Time-based One-Time Password (TOTP), generated by a mobile application. These OTPs are typically generated by implementing the algorithm described in RFC 6238.

Thanks to Real Load’s ability to implement plugins using the HTTP Test Wizard, it’s quite straightforward to generate OTPs in order to performance test your applications or to be used as part of synthetic monitoring.

So… from theory to practice. We had to implement such a plugin in order to performance test a third-party product that requires users to submit a valid TOTP, if challenged. First, we selected a Java TOTP implementation capable of generating OTPs as per the above RFC. There are a few implementations out there; we decided to use Bastiaan Jansen’s implementation. Only a few lines of Java code are required to generate the OTPs, and this implementation relies on only one dependency, so it was the perfect candidate.

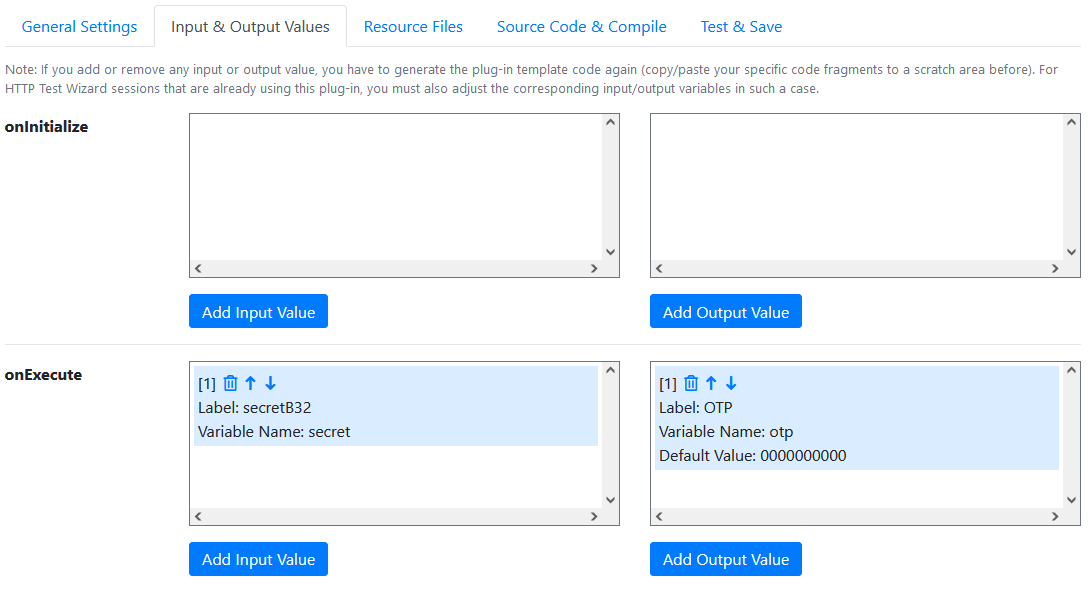

Define input and output parameters

The first thing to do is to define which input and output values the plugin requires. Things are quite straightforward in this case, as most of the TOTP-related parameters are well known (like OTP interval, number of digits and HMAC algorithm), so we’ve hardcoded them in the plugin’s logic. The only variable is the Base32-encoded secret required to generate the TOTP, which is specific to each Virtual User.

The only output value is the generated One Time Password.

Using the Plugin Wizard, we configured the parameters as follows:

Implement the plugin logic

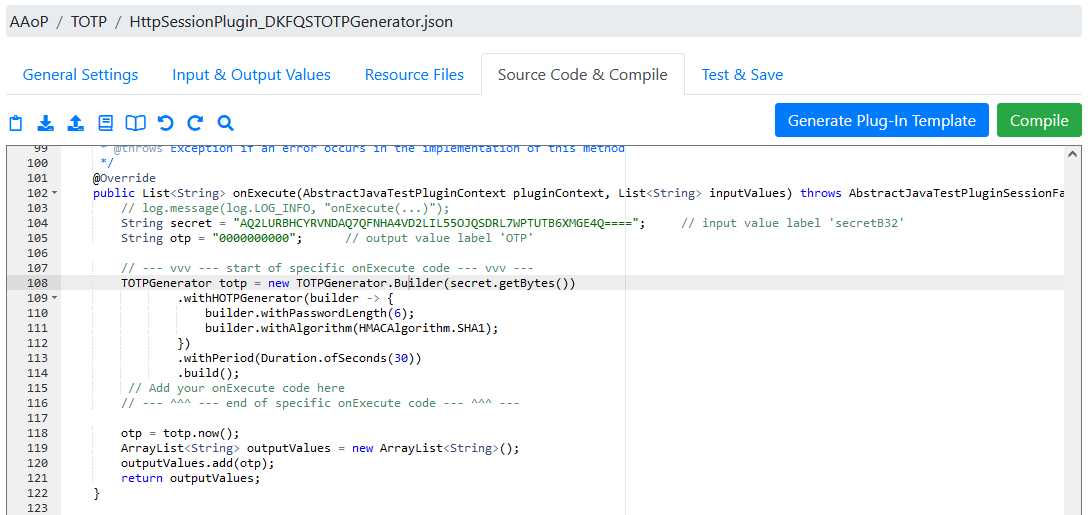

The next step is to implement the logic to generate the TOTP. In theory you can key in your Java code directly in the Plugin Wizard shown in the screenshot below, but I actually prepared the code in a separate IDE and then copied and pasted it into the online editor. Please note that the Wizard produces all the scaffolding code; you just have to add the code shown between lines 108 and 115.
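The plugin itself calls Bastiaan Jansen’s library. To show what RFC 6238 boils down to, here is a library-independent sketch that uses only the JDK (`javax.crypto.Mac`). It is not the code from the Plugin Wizard screenshot, the class and method names are hypothetical, and the example values (HMAC-SHA1, a 30-second time step, 6 digits) are assumed common defaults rather than the parameters hardcoded in our plugin.

```java
import java.io.ByteArrayOutputStream;
import java.nio.ByteBuffer;
import java.security.GeneralSecurityException;
import java.util.Locale;
import javax.crypto.Mac;
import javax.crypto.spec.SecretKeySpec;

public class TotpSketch {

    private static final String BASE32_ALPHABET = "ABCDEFGHIJKLMNOPQRSTUVWXYZ234567";

    // Decodes an RFC 4648 Base32 string; padding and spaces are ignored, case-insensitive
    static byte[] base32Decode(String input) {
        String s = input.replace("=", "").replace(" ", "").toUpperCase(Locale.ROOT);
        ByteArrayOutputStream out = new ByteArrayOutputStream();
        int buffer = 0;
        int bitsLeft = 0;
        for (char ch : s.toCharArray()) {
            int value = BASE32_ALPHABET.indexOf(ch);
            if (value < 0) {
                throw new IllegalArgumentException("Invalid Base32 character: " + ch);
            }
            buffer = ((buffer << 5) | value) & 0xFFF; // the low 12 bits hold all pending bits
            bitsLeft += 5;
            if (bitsLeft >= 8) {
                out.write(buffer >> (bitsLeft - 8)); // write() keeps only the low 8 bits
                bitsLeft -= 8;
            }
        }
        return out.toByteArray();
    }

    // RFC 6238 TOTP: HOTP (RFC 4226) over the counter T = floor(unixSeconds / stepSeconds)
    static String generateTotp(byte[] key, long unixSeconds, int stepSeconds, int digits)
            throws GeneralSecurityException {
        long counter = unixSeconds / stepSeconds;
        byte[] message = ByteBuffer.allocate(8).putLong(counter).array(); // 8-byte big-endian counter
        Mac mac = Mac.getInstance("HmacSHA1");
        mac.init(new SecretKeySpec(key, "HmacSHA1"));
        byte[] hash = mac.doFinal(message);
        // Dynamic truncation (RFC 4226, section 5.3)
        int offset = hash[hash.length - 1] & 0x0F;
        int binary = ((hash[offset] & 0x7F) << 24)
                | ((hash[offset + 1] & 0xFF) << 16)
                | ((hash[offset + 2] & 0xFF) << 8)
                | (hash[offset + 3] & 0xFF);
        int otp = binary % (int) Math.pow(10, digits);
        return String.format("%0" + digits + "d", otp);
    }

    public static void main(String[] args) throws Exception {
        // Hypothetical per-VU secret; in the plugin this is the input parameter
        String secret = "GEZDGNBVGY3TQOJQGEZDGNBVGY3TQOJQ";
        long now = System.currentTimeMillis() / 1000;
        System.out.println(generateTotp(base32Decode(secret), now, 30, 6));
    }
}
```

The secret used in `main` is the Base32 encoding of the RFC 6238 test seed `12345678901234567890`, so with 8 digits the sketch can be checked against the test vectors in the RFC’s appendix.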

Test

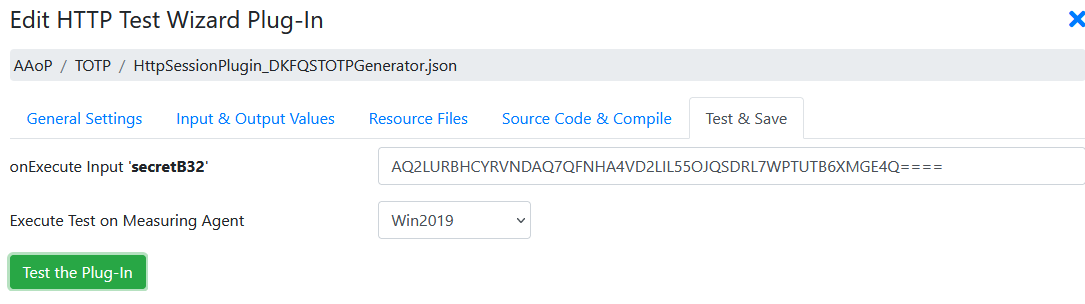

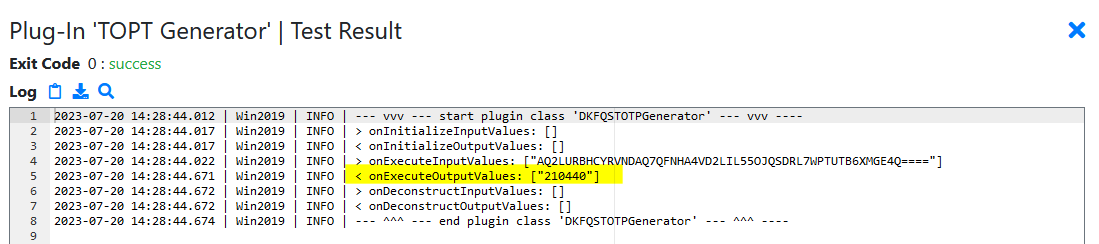

You’re now ready to test the plugin in the Wizard by going to the Test and Save tab. Provide the TOTP secret as a Base32-encoded string in the input parameter field:

… then check that the returned value is correct by comparing it to the value produced by an online TOTP generator (there are a few out there).

Add to your test script

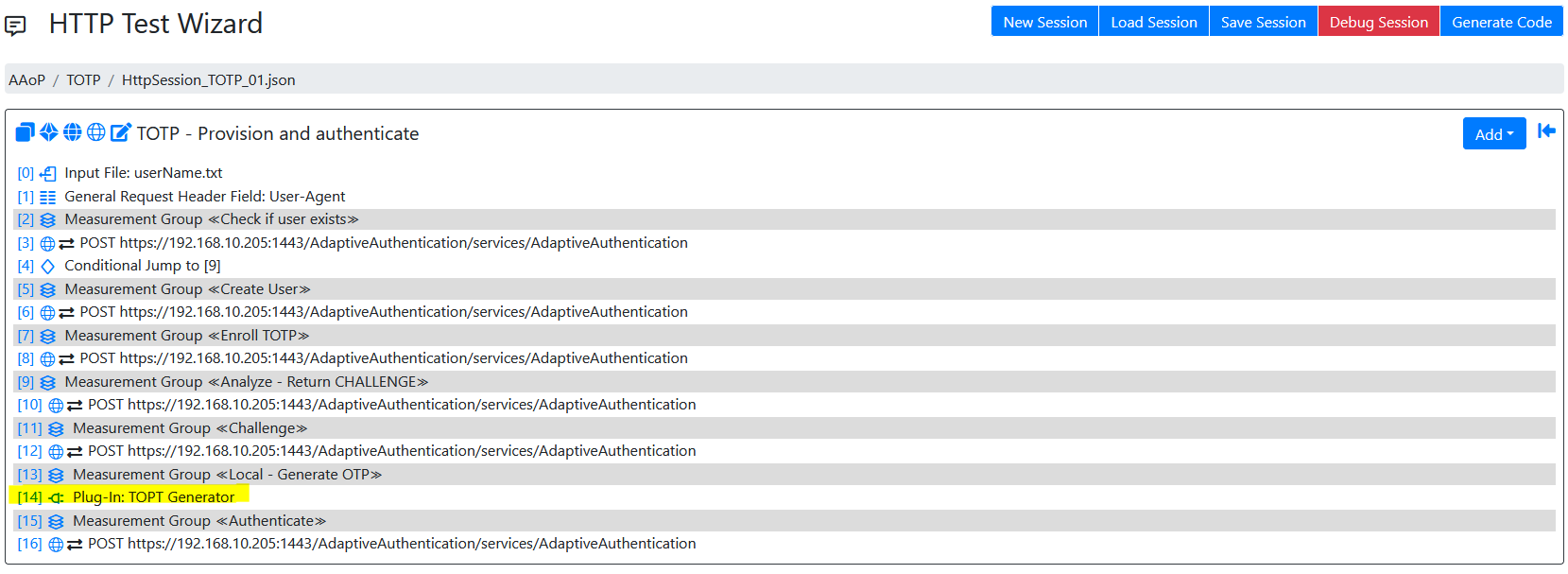

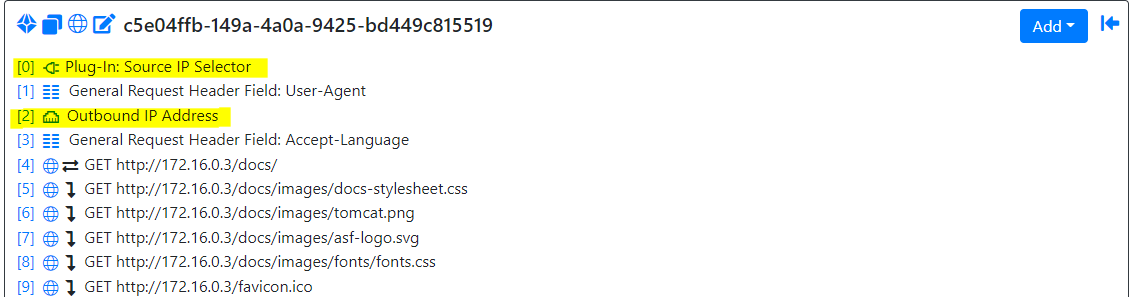

The last step is to add the plugin to your test script and invoke it at the right spot, like shown here:

The plugin’s output value will be assigned to a variable, which in turn will be used in the next test step.

You can now use the script for both synthetic monitoring and performance testing on the Real Load platform, even for scenarios where a user has to provide a valid OTP.

Application High Latency Is Outage!


If an application experiences high latency to the point of becoming unavailable or experiencing an outage, it can have severe consequences for businesses and their reputation.

In such cases, it is crucial to address the issue promptly and effectively.

Below are some steps you can take to avoid high application latency.

- Load Testing and Capacity Planning: Conduct regular load testing to ensure that the application can handle anticipated user loads without significant latency issues. Use the insights gained from load testing to plan for future capacity needs and scale the infrastructure accordingly.

- Monitoring and Alerting: Enhance your monitoring and alerting systems to detect potential latency issues early and notify the right people about them. Implement proactive monitoring for performance metrics, response times, and key indicators of the application’s health. Set up alerts to notify the appropriate teams when thresholds are breached.
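As an illustration of the kind of threshold check behind such alerts, the sketch below computes a nearest-rank percentile of response times and compares it to a limit. The 95th percentile, the 800 ms threshold and the sample values are arbitrary assumptions for the example; this is not how Real Load computes metrics or raises alerts.

```java
import java.util.Arrays;

public class LatencyAlert {

    // Nearest-rank percentile (p in (0, 100]) of response times in milliseconds
    static long percentile(long[] samplesMillis, double p) {
        long[] sorted = samplesMillis.clone();
        Arrays.sort(sorted);
        int rank = (int) Math.ceil(p / 100.0 * sorted.length); // 1-based rank
        return sorted[Math.max(rank, 1) - 1];
    }

    // True if the p-th percentile response time exceeds the alert threshold
    static boolean breached(long[] samplesMillis, double p, long thresholdMillis) {
        return percentile(samplesMillis, p) > thresholdMillis;
    }

    public static void main(String[] args) {
        long[] responseTimes = {120, 135, 110, 980, 140, 125, 130, 2100, 115, 128}; // illustrative only
        System.out.println("p95 = " + percentile(responseTimes, 95) + " ms");
        System.out.println("alert: " + breached(responseTimes, 95, 800));
    }
}
```

Alerting on a high percentile rather than the average is a deliberate choice here: two slow requests out of ten barely move the median, yet they are exactly what users experience as an outage.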

To take the steps above, you need a cost-effective tool that provides a flawless Load Testing and Synthetic (proactive) Monitoring experience.

Welcome to Real Load: The next generation Load Testing & Synthetic Monitoring tool!

Real Load offers a Synthetic Monitoring and Load Testing solution that is flexible enough to cater for testing of a variety of applications such as:

- Web applications (Both legacy and modern single page applications)

- Mobile Applications

- APIs

- Any other network protocol, provided a Java client implementation exists

- Custom scripts, for example written in PowerShell

- Databases

- Message Queues and more…

All Purpose Interface!

Want to create your scripts in any programming language you are comfortable with? Check out Real Load’s All Purpose Interface.

What makes Real Load unique compared to other tools is its All Purpose Interface, which enables customers to write scripts in any programming language. The only requirement is that a script or program complies with the interface in order to be executed by the Real Load Platform.

How it works

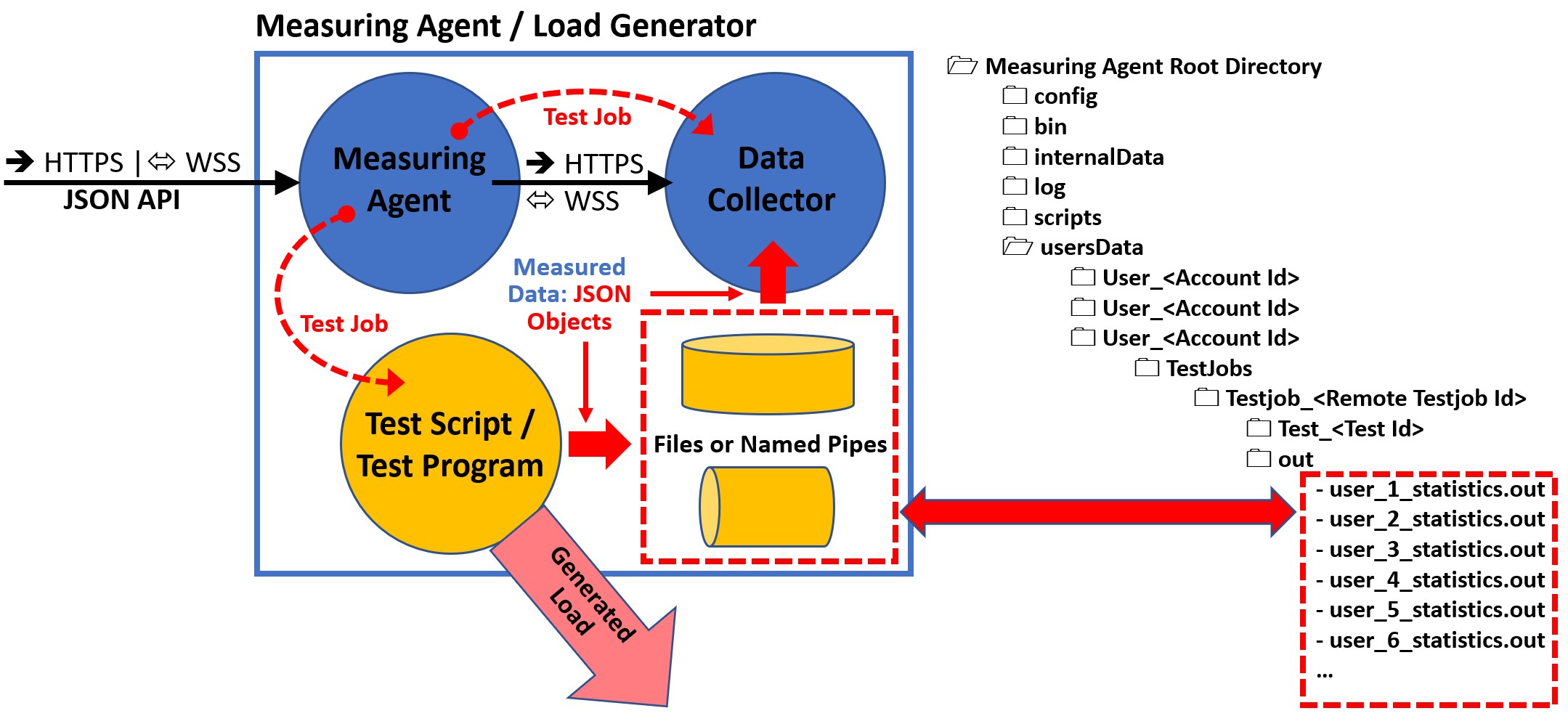

This document explains:

- How to develop a test program from scratch.

- How to add self-programmed measurements to the HTTP Test Wizard (as plug-ins).

The All Purpose Interface is the core of the product.

The architecture and the provided components that support this interface are referred to as the Real Load Platform, which is optimized for performing load and stress tests with thousands of concurrent users.

This interface can be implemented in any programming language and regulates:

- What requirements a script or program must comply with in order to be executed by the Real Load Platform.

- How the runtime behavior of the simulated users and the measured data of a script or program are reported to the Real Load Platform.

The great advantage of using the Real Load Platform is that only the basic functionality of a test has to be implemented. The powerful features of the Real Load Platform take care of everything else, such as executing tests on remote systems and displaying the measured results as diagrams, tables and statistics, both in real time and in the final test result.

The product’s open architecture enables you to develop plug-ins, scripts and programs that measure anything that has numeric value - no matter which protocol is used!

The measured data are evaluated in real time and displayed as diagrams and lists. In addition to successfully measured values, errors such as timeouts or invalid response data can also be collected and displayed in real time.

At least in theory, programs and scripts of any programming language can be executed, as long as such a program or script supports the All Purpose Interface.

In practice there are currently two options for integrating your own measurements into the Real Load Platform:

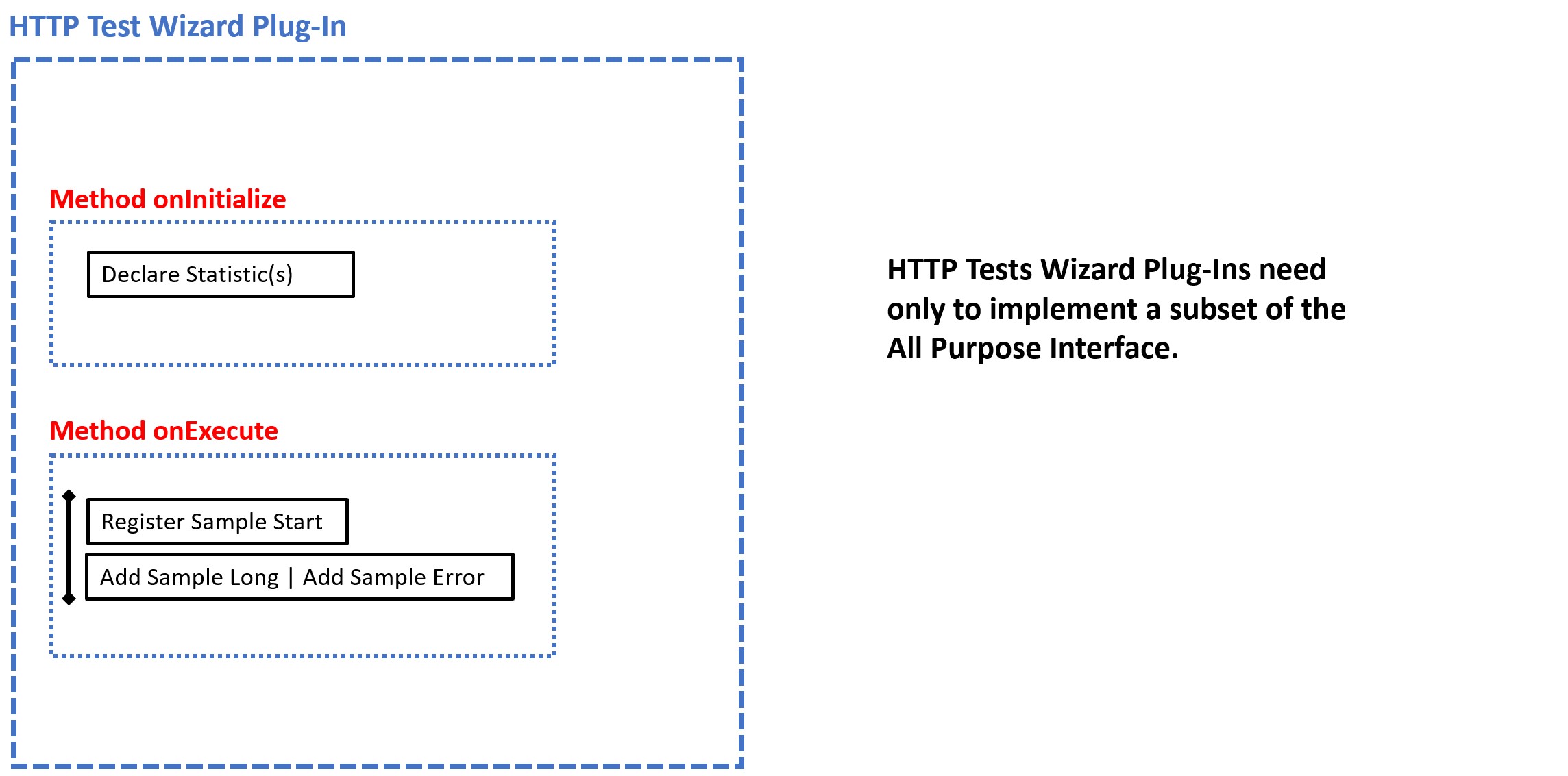

- Write an HTTP Test Wizard Plug-In in Java that performs the measurement. This has the advantage that you only have to implement a subset of the “All Purpose Interface” yourself:

  - Declare Statistic

  - Register Sample Start

  - Add Sample Long

  - Add Sample Error

  - [Optional: Add Counter Long, Add Average Delta And Current Value, Add Efficiency Ratio Delta, Add Throughput Delta, Add Test Result Annotation Exec Event]

  Such plug-ins can be developed quite quickly, as all other functions of the “All Purpose Interface” are already implemented by the HTTP Test Wizard.

  Tip: An HTTP Test Wizard session can also consist only of plug-ins, i.e. you can “misuse” the HTTP Test Wizard to only carry out measurements that you have programmed yourself: Plug-In Example

- Write a test program from scratch. This can currently be programmed in Java or PowerShell (support for additional programming languages will be added in the future). This is more time-consuming, but has the advantage that you have more freedom in program development. In this case you have to implement all functions of the “All Purpose Interface”.

Interface Specification

Basic Requirements for all Programs and Scripts

The All Purpose Interface must be implemented by all programs and scripts which are executed on the Real Load Platform. The interface is independent of any programming language and has only three requirements:

- The executed program or script must be able to be started from a command line, and passing program or script arguments must be supported.

- The executed program or script must be able to read and write files.

- The executed program or script must be able to measure one or more numerical values.

All of this seems a bit trivial, but it has been chosen deliberately, so that the interface can support almost any programming language.

Generic Program and Script Arguments

Each executed program or script must support at least the following arguments:

- Number of Users: The total number of simulated users (integer value > 0).

- Test Duration: The maximum test duration in seconds (integer value > 0).

- Ramp Up Time: The ramp up time in seconds until all simulated users are started (integer value >= 0). Example: If 10 users are started within 5 seconds, the first user is started immediately and the remaining 9 users are started at intervals of (5 seconds / 9 users) ≈ 0.56 seconds.

- Max Session Loops: The maximum number of session loops per simulated user (integer value > 0, or -1 for an unlimited number of session loops).

- Delay Per Session Loop: The delay in milliseconds before a simulated user starts the next session loop iteration (integer value >= 0). The delay is not applied before the first session loop iteration.

- Data Output Directory: The directory to which the measured data have to be written. Other data, such as debug information, can also be written to this directory.

Implementation Note: The test ends when either the Test Duration has elapsed or Max Session Loops has been reached for all simulated users. Session loops that are currently running are not aborted.
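The ramp-up rule from the Ramp Up Time argument can be expressed as a small helper. This is a minimal sketch only: the class `RampUpSchedule` and its method are hypothetical names, not part of the Real Load Platform, and offsets are rounded down to whole milliseconds.

```java
/** Hypothetical helper: computes when each simulated user should start during the ramp-up. */
final class RampUpSchedule {
    private RampUpSchedule() {
    }

    /**
     * Returns the start offset in milliseconds for the given 1-based user number.
     * The first user starts immediately; the remaining users are spread evenly
     * over the ramp-up time, so the last user starts exactly at rampUpMillis.
     */
    static long startOffsetMillis(int userNo, int totalUsers, long rampUpMillis) {
        if (totalUsers < 1 || userNo < 1 || userNo > totalUsers || rampUpMillis < 0) {
            throw new IllegalArgumentException("invalid ramp-up parameters");
        }
        if (totalUsers == 1) {
            return 0L; // a single user always starts immediately
        }
        // (userNo - 1) intervals, each rampUpMillis / (totalUsers - 1) long
        return (userNo - 1) * rampUpMillis / (totalUsers - 1);
    }
}
```

For 10 users and a 5-second ramp-up, user 2 gets an offset of 555 ms and user 10 an offset of 5000 ms, so the last user starts exactly when the ramp-up time has elapsed.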

In addition, the following arguments are optional, but also standardized:

- Description: A brief description of the test

- Debug Execution: Write debug information about the test execution to stdout

- Debug Measuring: Write debug information about the declared statistics and the measured values to stdout

| Argument | Java | PowerShell |
|---|---|---|
| Number of Users | -users number | -totalUsers number |
| Test Duration | -duration seconds | -inputTestDuration seconds |
| Ramp Up Time | -rampupTime seconds | -rampUpTime seconds |
| Max Session Loops | -maxLoops number | -inputMaxLoops number |
| Delay Per Session Loop | -delayPerLoop milliseconds | -inputDelayPerLoopMillis milliseconds |
| Data Output Directory | -dataOutputDir path | -dataOutDirectory path |
| Description | -description text | -description text |
| Debug Execution | -debugExec | -debugExecution |
| Debug Measuring | -debugData | -debugMeasuring |
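A Java program has to parse these arguments itself. The following is a minimal sketch, assuming the Java argument names from the table and a simple `-name value` convention; `GenericArgs` is a hypothetical class, and the Real Load Platform's own test programs may parse their arguments differently. Unrecognized `-name value` pairs are kept in a map because program-specific arguments are allowed.

```java
import java.util.HashMap;
import java.util.Map;

/** Hypothetical holder for the generic arguments, using the Java argument names from the table. */
final class GenericArgs {
    int users;               // -users, > 0
    int duration;            // -duration in seconds, > 0
    int rampupTime;          // -rampupTime in seconds, >= 0
    int maxLoops;            // -maxLoops, > 0 or -1 = unlimited
    long delayPerLoop;       // -delayPerLoop in milliseconds, >= 0
    String dataOutputDir;    // -dataOutputDir
    String description = ""; // -description (optional)
    boolean debugExec;       // -debugExec (optional flag)
    boolean debugData;       // -debugData (optional flag)
    // all "-name value" pairs, including program-specific arguments not validated by the platform
    final Map<String, String> options = new HashMap<>();

    static GenericArgs parse(String[] args) {
        GenericArgs a = new GenericArgs();
        for (int i = 0; i < args.length; i++) {
            String name = args[i];
            if (name.equals("-debugExec")) {
                a.debugExec = true;
            } else if (name.equals("-debugData")) {
                a.debugData = true;
            } else if (name.startsWith("-") && i + 1 < args.length) {
                a.options.put(name, args[++i]); // option followed by its value
            } else {
                throw new IllegalArgumentException("unexpected argument: " + name);
            }
        }
        a.users = requireInt(a.options, "-users", 1);
        a.duration = requireInt(a.options, "-duration", 1);
        a.rampupTime = requireInt(a.options, "-rampupTime", 0);
        a.maxLoops = requireInt(a.options, "-maxLoops", -1);
        if (a.maxLoops == 0) {
            throw new IllegalArgumentException("-maxLoops must be > 0 or -1");
        }
        a.delayPerLoop = requireInt(a.options, "-delayPerLoop", 0);
        a.dataOutputDir = a.options.get("-dataOutputDir");
        if (a.dataOutputDir == null) {
            throw new IllegalArgumentException("missing argument -dataOutputDir");
        }
        a.description = a.options.getOrDefault("-description", "");
        return a;
    }

    private static int requireInt(Map<String, String> options, String name, int min) {
        String value = options.get(name);
        if (value == null) {
            throw new IllegalArgumentException("missing argument " + name);
        }
        int n = Integer.parseInt(value); // NumberFormatException is an IllegalArgumentException
        if (n < min) {
            throw new IllegalArgumentException(name + " must be >= " + min);
        }
        return n;
    }
}
```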

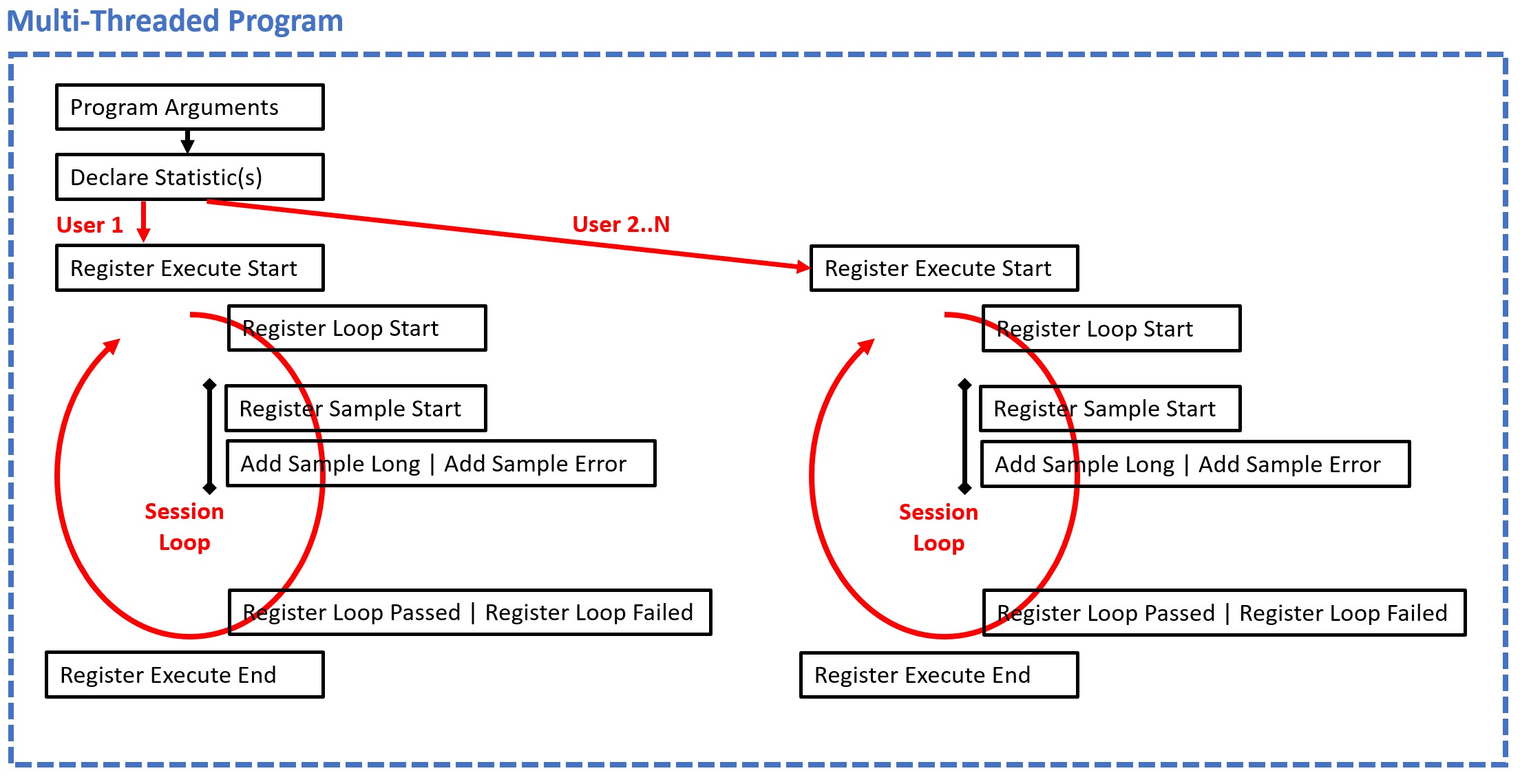

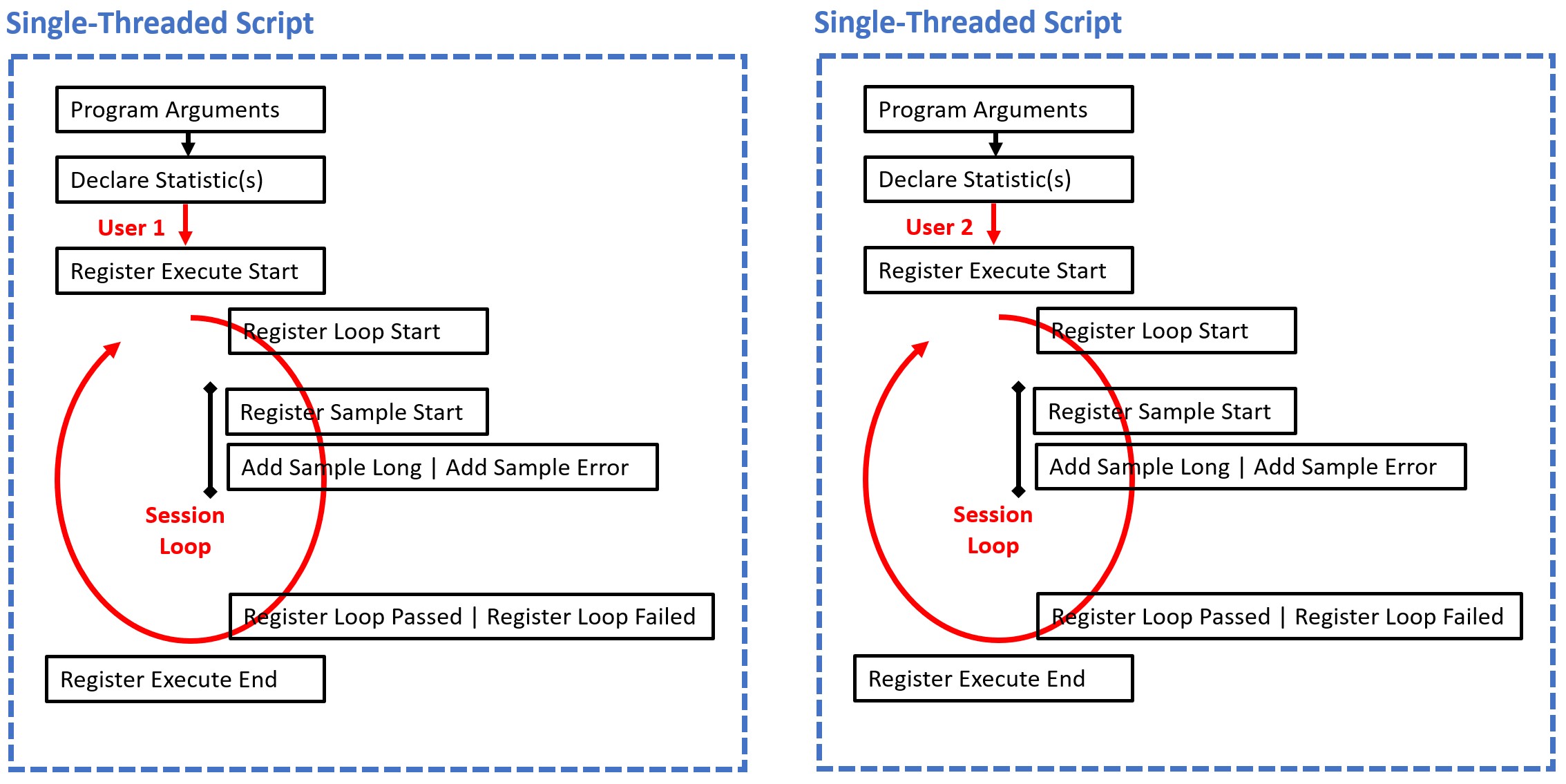

Single-Threaded Scripts vs. Multi-Threaded Programs

For scripts which don’t support multiple threads, the Real Load Platform starts a separate operating system process for each simulated user. For programs which support multiple threads, on the other hand, only one operating system process is started for all simulated users.

Scripts which are not able to run multiple threads must support the following additional generic command line argument:

- Executed User Number: The currently executed user (integer value > 0). Example: If 10 scripts are started, 1 is passed to the first started script, 2 to the second started script, and so on.

| Argument | PowerShell |
|---|---|
| Executed User Number | -inputUserNo number |

Specific Program and Script Arguments

Additional program- and script-specific arguments are supported by the Real Load Platform. However, their values are not validated by the platform.

Job Control Files

During the execution of a test, the Real Load Platform can create and delete additional control files in the Data Output Directory of a test job at runtime. The running script or program must regularly check whether such control files exist, but not so often that it causes CPU and I/O overload. Rule of thumb: Multi-threaded programs should check for these files every 5..10 seconds. Single-threaded scripts should check them before executing a new session loop iteration.

The following control files are created or removed in the Data Output Directory by the Real Load Platform:

- DKFQS_Action_AbortTest.txt: If this file exists, the test execution must be aborted gracefully as soon as possible. Session loops that are currently running are not aborted.

- DKFQS_Action_SuspendTest.txt: If this file exists, the execution of further session loops is suspended until the file is removed by the Real Load Platform. Session loops that are currently running are not interrupted on suspend. When the test is resumed, the Ramp Up Time passed as a generic argument to the script or program must be re-applied. If a suspended test runs out of Test Duration, the test must end.
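The control file checks could look like the sketch below. It assumes a simulated user that checks the files before each new session loop iteration, as recommended for single-threaded scripts; a multi-threaded program would rather check every 5..10 seconds and share the result between its user threads. `JobControl` is a hypothetical name, and re-applying the Ramp Up Time and reporting suspend/resume to the Data File are left out.

```java
import java.nio.file.Files;
import java.nio.file.Path;

/** Hypothetical helper that checks the job control files in the Data Output Directory. */
final class JobControl {
    static final String ABORT_FILE = "DKFQS_Action_AbortTest.txt";
    static final String SUSPEND_FILE = "DKFQS_Action_SuspendTest.txt";

    private final Path dataOutputDir;

    JobControl(Path dataOutputDir) {
        this.dataOutputDir = dataOutputDir;
    }

    boolean abortRequested() {
        return Files.exists(dataOutputDir.resolve(ABORT_FILE));
    }

    boolean suspendRequested() {
        return Files.exists(dataOutputDir.resolve(SUSPEND_FILE));
    }

    /**
     * Called before a new session loop iteration. Waits while the test is suspended and
     * returns false if no further iteration may start (test aborted or Test Duration
     * elapsed, including while suspended).
     */
    boolean mayStartNextLoop(long testEndMillis, long pollMillis) throws InterruptedException {
        while (true) {
            if (abortRequested() || System.currentTimeMillis() >= testEndMillis) {
                return false;
            }
            if (!suspendRequested()) {
                return true;
            }
            Thread.sleep(pollMillis); // still suspended: check again later instead of spinning
        }
    }
}
```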

Test Job Data Files

When a test job is started on a Measuring Agent, the Real Load Platform first creates an empty data file for each simulated user in the Data Output Directory of the test job:

Data File: user_<Executed User Number>_statistics.out

Example: user_1_statistics.out, user_2_statistics.out, user_3_statistics.out, and so on.

After that, the test script(s) or test program is started as an operating system process. The test script or test program must write the current state of each simulated user and the measured data to that user's Data File as JSON objects (append data to the file only; don't create new files).

The Measuring Agent and the corresponding Data Collector components of the Real Load Platform listen to these data files and interpret the measured data in real time, line by line, as JSON objects.

Implementation Note

Because the Real Load Platform creates the (empty) Data Files first and immediately starts listening to them, before the test script or program is started, the test script or program must only append data to the already created Data Files. If the test script or program creates new Data Files, no data will be measured.

Writing JSON Objects to the Data Files

The following JSON Objects can be written to the Data Files:

| JSON Object | Description |
|---|---|
| Declare Statistic | Declares a new statistic |
| Register Execute Start | Registers the start of a user |
| Register Execute Suspend | Registers that the execution of a user is suspended |
| Register Execute Resume | Registers that the execution of a user is resumed |
| Register Execute End | Registers that a user has ended |
| Register Loop Start | Registers that a user has started a session loop iteration |
| Register Loop Passed | Registers that a session loop iteration of a user has passed |
| Register Loop Failed | Registers that a session loop iteration of a user has failed |
| Register Sample Start | Statistic type sample-event-time-chart: Registers the start of measuring a sample |
| Add Sample Long | Statistic type sample-event-time-chart: Registers that a sample has been measured and reports the value |
| Add Sample Error | Statistic type sample-event-time-chart: Registers that the measuring of a sample has failed |
| Add Counter Long | Statistic type cumulative-counter-long: Adds a positive delta value to the counter |
| Add Average Delta And Current Value | Statistic type average-and-current-value: Adds delta values to the average and sets the current value |
| Add Efficiency Ratio Delta | Statistic type efficiency-ratio-percent: Adds efficiency ratio delta values |
| Add Throughput Delta | Statistic type throughput-time-chart: Adds a delta value to a throughput |
| Add Test Result Annotation Exec Event | Adds an annotation event to the test result |

Note that each JSON object must be written as a single line which ends with a \r\n line terminator.
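A writer for the Data Files has to respect both rules: append to the file pre-created by the platform, and terminate every JSON object with \r\n. The sketch below is one way to do this with `java.nio.file`; `DataFileWriter` is a hypothetical name, and UTF-8 encoding is our assumption. Because the file is opened with `APPEND` but without `CREATE`, a missing Data File raises `NoSuchFileException` instead of silently creating a new file that nobody listens to.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.StandardOpenOption;

/** Hypothetical writer that appends single-line JSON objects to a simulated user's Data File. */
final class DataFileWriter {
    private final Path dataFile;

    DataFileWriter(Path dataOutputDir, int executedUserNo) {
        // file name pattern of the Data Files created by the platform
        this.dataFile = dataOutputDir.resolve("user_" + executedUserNo + "_statistics.out");
    }

    Path file() {
        return dataFile;
    }

    /** Appends one JSON object plus the \r\n line terminator. */
    synchronized void writeLine(String jsonObject) throws IOException {
        if (jsonObject.indexOf('\r') >= 0 || jsonObject.indexOf('\n') >= 0) {
            throw new IllegalArgumentException("a JSON object must be written as a single line");
        }
        // WRITE + APPEND without CREATE: fails with NoSuchFileException instead of creating a file
        Files.write(dataFile, (jsonObject + "\r\n").getBytes(StandardCharsets.UTF_8),
                StandardOpenOption.WRITE, StandardOpenOption.APPEND);
    }
}
```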

Why do some statistic types require delta (difference) values to be reported instead of absolute values?

This is because real-time monitoring is supported, and because delta values are required to merge the statistical values of the measuring agents in a cluster into a combined cluster result.

Program Sequence

JSON Object Specification

Declare Statistic Object

Before the measurement of data begins, the corresponding statistics must be declared at runtime. Each declared statistic must have a unique ID. Multiple declarations with the same ID are crossed out by the platform.

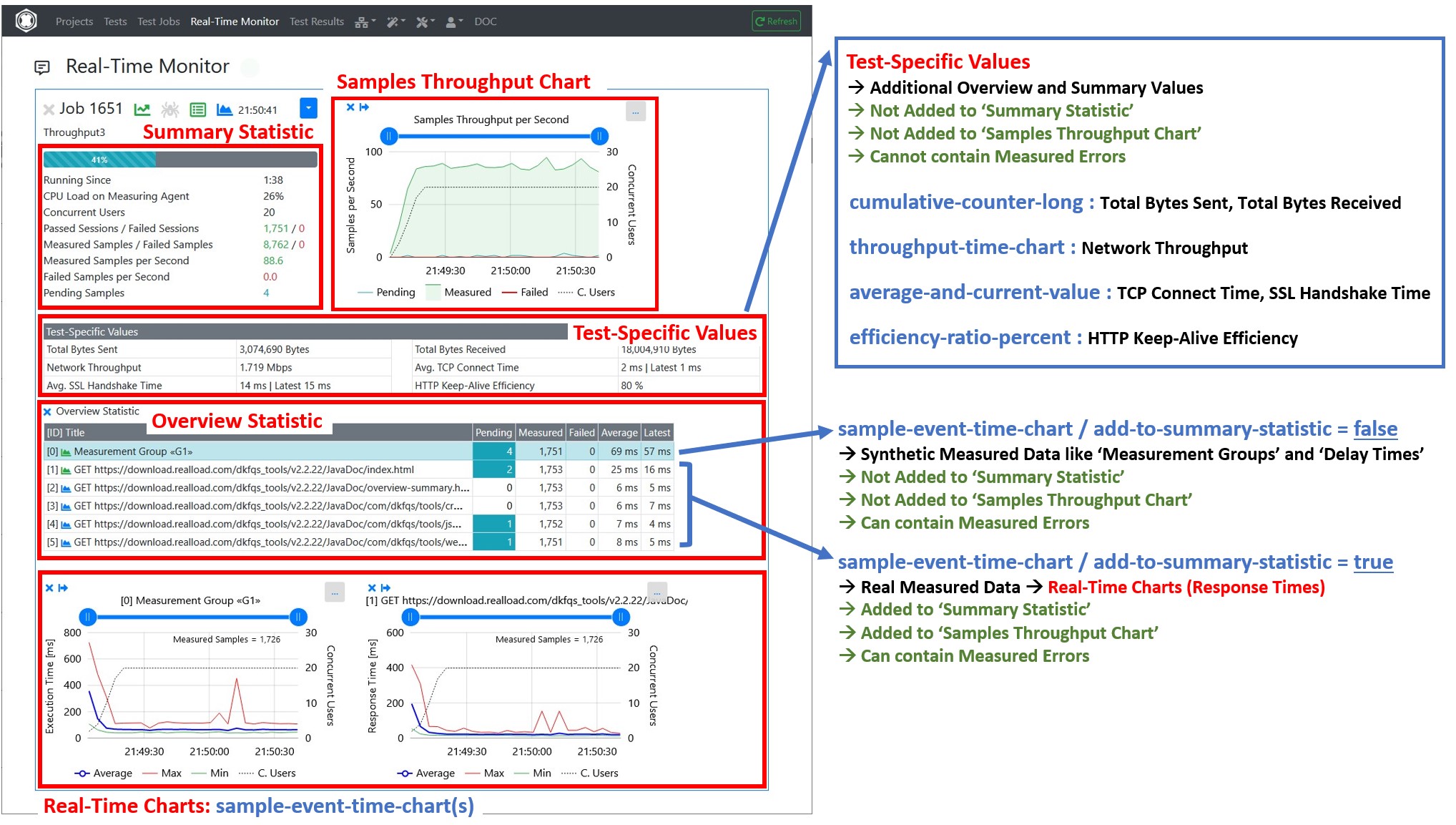

Currently 5 types of statistics are supported:

- sample-event-time-chart : This is the most common statistic type and contains continuously measured response times or any other continuously measured values of any unit. Information about failed measurements can also be added to the statistic. Statistics of this type are added to the ‘Overview Statistic’ area and can also be displayed as a chart (see picture below).

- cumulative-counter-long : This is a single counter whose value is continuously increased during the test. Statistics of this type are added to the ‘Test-Specific Values’ area.

- average-and-current-value : This is a separately measured mean value and the last measured current value. Statistics of this type are added to the ‘Test-Specific Values’ area.

- efficiency-ratio-percent : This is a measured efficiency in percent (0..100%). Statistics of this type are added to the ‘Test-Specific Values’ area.

- throughput-time-chart : This is a measured throughput per second. Statistics of this type are added to the ‘Test-Specific Values’ area.

New statistics can also be declared at any time during test execution, but each statistic must be declared before any measured data are added to it.

```json
{
  "$schema": "http://json-schema.org/draft/2019-09/schema",
  "title": "DeclareStatistic",
  "type": "object",
  "required": ["subject", "statistic-id", "statistic-type", "statistic-title"],
  "properties": {
    "subject": { "type": "string", "description": "Always 'declare-statistic'" },
    "statistic-id": { "type": "integer", "description": "Unique statistic id" },
    "statistic-type": { "type": "string", "description": "'sample-event-time-chart' or 'cumulative-counter-long' or 'average-and-current-value' or 'efficiency-ratio-percent' or 'throughput-time-chart'" },
    "statistic-title": { "type": "string", "description": "Statistic title" },
    "statistic-subtitle": { "type": "string", "description": "Statistic subtitle | only supported by 'sample-event-time-chart'" },
    "y-axis-title": { "type": "string", "description": "Y-Axis title | only supported by 'sample-event-time-chart'. Example: 'Response Time'" },
    "unit-text": { "type": "string", "description": "Text of measured unit. Example: 'ms'" },
    "sort-position": { "type": "integer", "description": "The UI sort position" },
    "add-to-summary-statistic": { "type": "boolean", "description": "If true = add the number of measured and failed samples to the summary statistic | only supported by 'sample-event-time-chart'. Note: Synthetic measured data like Measurement Groups or Delay Times should not be added to the summary statistic" },
    "background-color": { "type": "string", "description": "The background color either as #hex-triplet or as bootstrap css class name, or an empty string = no special background color. Examples: '#cad9fa', 'table-info'" }
  }
}
```

Example:

```json
{
  "subject":"declare-statistic",
  "statistic-id":1,
  "statistic-type":"sample-event-time-chart",
  "statistic-title":"GET http://192.168.0.111/",
  "statistic-subtitle":"",
  "y-axis-title":"Response Time",
  "unit-text":"ms",
  "sort-position":1,
  "add-to-summary-statistic":true,
  "background-color":""
}
```
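To produce such a declaration as a single line, the object can be assembled as shown below. This is a sketch with a hypothetical class name and a minimal hand-written JSON string escaper; in a real program a JSON library may be preferable.

```java
import java.util.Arrays;
import java.util.List;

/** Hypothetical builder for a single-line 'declare-statistic' object; keys follow the schema. */
final class DeclareStatistic {
    static final List<String> TYPES = Arrays.asList("sample-event-time-chart",
            "cumulative-counter-long", "average-and-current-value",
            "efficiency-ratio-percent", "throughput-time-chart");

    static String toJsonLine(int id, String type, String title, String subtitle, String yAxisTitle,
                             String unitText, int sortPosition, boolean addToSummary,
                             String backgroundColor) {
        if (!TYPES.contains(type)) {
            throw new IllegalArgumentException("unsupported statistic-type: " + type);
        }
        return "{\"subject\":\"declare-statistic\""
                + ",\"statistic-id\":" + id
                + ",\"statistic-type\":" + quote(type)
                + ",\"statistic-title\":" + quote(title)
                + ",\"statistic-subtitle\":" + quote(subtitle)
                + ",\"y-axis-title\":" + quote(yAxisTitle)
                + ",\"unit-text\":" + quote(unitText)
                + ",\"sort-position\":" + sortPosition
                + ",\"add-to-summary-statistic\":" + addToSummary
                + ",\"background-color\":" + quote(backgroundColor)
                + "}";
    }

    /** Minimal JSON string quoting: escapes quotes, backslashes and control characters. */
    static String quote(String s) {
        StringBuilder sb = new StringBuilder("\"");
        for (char c : s.toCharArray()) {
            switch (c) {
                case '"': sb.append("\\\""); break;
                case '\\': sb.append("\\\\"); break;
                case '\r': sb.append("\\r"); break;
                case '\n': sb.append("\\n"); break;
                case '\t': sb.append("\\t"); break;
                default:
                    if (c < 0x20) {
                        sb.append(String.format("\\u%04x", (int) c));
                    } else {
                        sb.append(c);
                    }
            }
        }
        return sb.append('"').toString();
    }
}
```

For example, `DeclareStatistic.toJsonLine(1, "sample-event-time-chart", "GET http://192.168.0.111/", "", "Response Time", "ms", 1, true, "")` yields the example above as a single line.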

Tip

Use the same value for the sort position as for the statistic ID.

After the statistics have been declared, the activities of the simulated users can be started. Each simulated user must report the following changes of its user state:

- register-execute-start : Register that the simulated user has started the test.

- register-execute-suspend : Register that the simulated user has suspended the execution of the test.

- register-execute-resume : Register that the simulated user has resumed the execution of the test.

- register-execute-end : Register that the simulated user has ended the test.

Register Execute Start Object

```json
{
  "$schema": "http://json-schema.org/draft/2019-09/schema",
  "title": "RegisterExecuteStart",
  "type": "object",
  "required": ["subject", "timestamp"],
  "properties": {
    "subject": { "type": "string", "description": "Always 'register-execute-start'" },
    "timestamp": { "type": "integer", "description": "Unix-like time stamp" }
  }
}
```

Example:

{"subject":"register-execute-start","timestamp":1596219816129}

Register Execute Suspend Object

```json
{
  "$schema": "http://json-schema.org/draft/2019-09/schema",
  "title": "RegisterExecuteSuspend",
  "type": "object",
  "required": ["subject", "timestamp"],
  "properties": {
    "subject": { "type": "string", "description": "Always 'register-execute-suspend'" },
    "timestamp": { "type": "integer", "description": "Unix-like time stamp" }
  }
}
```

Example:

{"subject":"register-execute-suspend","timestamp":1596219816129}

Register Execute Resume Object

```json
{
  "$schema": "http://json-schema.org/draft/2019-09/schema",
  "title": "RegisterExecuteResume",
  "type": "object",
  "required": ["subject", "timestamp"],
  "properties": {
    "subject": { "type": "string", "description": "Always 'register-execute-resume'" },
    "timestamp": { "type": "integer", "description": "Unix-like time stamp" }
  }
}
```

Example:

{"subject":"register-execute-resume","timestamp":1596219816129}

Register Execute End Object

```json
{
  "$schema": "http://json-schema.org/draft/2019-09/schema",
  "title": "RegisterExecuteEnd",
  "type": "object",
  "required": ["subject", "timestamp"],
  "properties": {
    "subject": { "type": "string", "description": "Always 'register-execute-end'" },
    "timestamp": { "type": "integer", "description": "Unix-like time stamp" }
  }
}
```

Example:

{"subject":"register-execute-end","timestamp":1596219816129}

Once a simulated user has started its activity, it measures the data in so-called ‘session loops’. Each simulated user must report when a session loop iteration starts and ends:

- register-loop-start : Register the start of a session loop iteration.

- register-loop-passed : Register that a session loop iteration has passed (at the end of the iteration).

- register-loop-failed : Register that a session loop iteration has failed (when the iteration is aborted).
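Put together, the user-state and session loop objects of one simulated user follow the sequence sketched below. This is a minimal sketch, assuming the lines are handed to a sink such as a Data File writer; `SessionLoopRunner` is a hypothetical class, `System.currentTimeMillis()` is used for the millisecond timestamps seen in the examples, and the control file checks (abort/suspend) are omitted for brevity. The Test Duration is only checked before an iteration starts, so a running iteration is not aborted.

```java
import java.util.function.BooleanSupplier;
import java.util.function.Consumer;

/** Hypothetical loop of one simulated user that reports user-state and session loop objects. */
final class SessionLoopRunner {
    private final Consumer<String> out; // receives one single-line JSON object per call

    SessionLoopRunner(Consumer<String> out) {
        this.out = out;
    }

    /**
     * Executes up to maxLoops session loop iterations (-1 = unlimited) until testEndMillis.
     * The session returns true if the iteration passed and false if it failed.
     */
    void run(int maxLoops, long delayPerLoopMillis, long testEndMillis, BooleanSupplier session)
            throws InterruptedException {
        out.accept(event("register-execute-start"));
        for (int loop = 0; maxLoops == -1 || loop < maxLoops; loop++) {
            if (loop > 0 && delayPerLoopMillis > 0) {
                Thread.sleep(delayPerLoopMillis); // not applied before the first iteration
            }
            if (System.currentTimeMillis() >= testEndMillis) {
                break; // Test Duration elapsed: do not start another iteration
            }
            long loopStart = System.currentTimeMillis();
            out.accept(event("register-loop-start"));
            if (session.getAsBoolean()) {
                long now = System.currentTimeMillis();
                out.accept("{\"subject\":\"register-loop-passed\",\"loop-time\":" + (now - loopStart)
                        + ",\"timestamp\":" + now + "}");
            } else {
                out.accept(event("register-loop-failed"));
            }
        }
        out.accept(event("register-execute-end"));
    }

    private static String event(String subject) {
        return "{\"subject\":\"" + subject + "\",\"timestamp\":" + System.currentTimeMillis() + "}";
    }
}
```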

Register Loop Start Object

```json
{
  "$schema": "http://json-schema.org/draft/2019-09/schema",
  "title": "RegisterLoopStart",
  "type": "object",
  "required": ["subject", "timestamp"],
  "properties": {
    "subject": { "type": "string", "description": "Always 'register-loop-start'" },
    "timestamp": { "type": "integer", "description": "Unix-like time stamp" }
  }
}
```

Example:

{"subject":"register-loop-start","timestamp":1596219816129}

Register Loop Passed Object

```json
{
  "$schema": "http://json-schema.org/draft/2019-09/schema",
  "title": "RegisterLoopPassed",
  "type": "object",
  "required": ["subject", "loop-time", "timestamp"],
  "properties": {
    "subject": { "type": "string", "description": "Always 'register-loop-passed'" },
    "loop-time": { "type": "integer", "description": "The time it takes to execute the loop in milliseconds" },
    "timestamp": { "type": "integer", "description": "Unix-like time stamp" }
  }
}
```

Example:

{"subject":"register-loop-passed","loop-time":1451, "timestamp":1596219816129}

Register Loop Failed Object

```json
{
  "$schema": "http://json-schema.org/draft/2019-09/schema",
  "title": "RegisterLoopFailed",
  "type": "object",
  "required": ["subject", "timestamp"],
  "properties": {
    "subject": { "type": "string", "description": "Always 'register-loop-failed'" },
    "timestamp": { "type": "integer", "description": "Unix-like time stamp" }
  }
}
```

Example:

{"subject":"register-loop-failed","timestamp":1596219816129}

Within a session loop iteration the samples of the declared statistics are measured. For sample-event-time-chart statistics the simulated user must report when the measuring of a sample starts and ends:

- register-sample-start : Register that the measuring of a sample has started.

- add-sample-long : Add a measured value to a declared statistic.

- add-sample-error : Add an error to a declared statistic.
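For a sample-event-time-chart statistic, the three objects are typically written around the measured action, as in the following sketch. `SampleRecorder` is hypothetical; it reports the elapsed time in milliseconds as the sample value and, on an exception, an `add-sample-error` with severity `error` and the exception class as `error-type`. Those mappings are our choices for illustration, not rules of the interface.

```java
import java.util.concurrent.Callable;
import java.util.function.Consumer;

/** Hypothetical helper that measures one sample of a sample-event-time-chart statistic. */
final class SampleRecorder {
    private final Consumer<String> out; // e.g. appends the line to the user's Data File

    SampleRecorder(Consumer<String> out) {
        this.out = out;
    }

    /** Runs the action, reports its duration in ms or an error, and returns true on success. */
    boolean measure(int statisticId, Callable<?> action) {
        out.accept("{\"subject\":\"register-sample-start\",\"statistic-id\":" + statisticId
                + ",\"timestamp\":" + System.currentTimeMillis() + "}");
        long startNanos = System.nanoTime();
        try {
            action.call();
            long elapsedMillis = (System.nanoTime() - startNanos) / 1_000_000;
            out.accept("{\"subject\":\"add-sample-long\",\"statistic-id\":" + statisticId
                    + ",\"value\":" + elapsedMillis + ",\"timestamp\":" + System.currentTimeMillis() + "}");
            return true;
        } catch (Exception e) {
            // severity 'error': the caller should abort the current session loop iteration
            out.accept("{\"subject\":\"add-sample-error\",\"statistic-id\":" + statisticId
                    + ",\"error-subject\":" + quote(String.valueOf(e.getMessage()))
                    + ",\"error-severity\":\"error\""
                    + ",\"error-type\":" + quote(e.getClass().getName())
                    + ",\"timestamp\":" + System.currentTimeMillis() + "}");
            return false;
        }
    }

    /** Minimal JSON string quoting (quotes, backslashes, control characters). */
    private static String quote(String s) {
        StringBuilder sb = new StringBuilder("\"");
        for (char c : s.toCharArray()) {
            if (c == '"' || c == '\\') {
                sb.append('\\').append(c);
            } else if (c < 0x20) {
                sb.append(String.format("\\u%04x", (int) c));
            } else {
                sb.append(c);
            }
        }
        return sb.append('"').toString();
    }
}
```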

Register Sample Start Object (sample-event-time-chart only)

```json
{
  "$schema": "http://json-schema.org/draft/2019-09/schema",
  "title": "RegisterSampleStart",
  "type": "object",
  "required": ["subject", "statistic-id", "timestamp"],
  "properties": {
    "subject": { "type": "string", "description": "Always 'register-sample-start'" },
    "statistic-id": { "type": "integer", "description": "The unique statistic id" },
    "timestamp": { "type": "integer", "description": "Unix-like time stamp" }
  }
}
```

Example:

{"subject":"register-sample-start","statistic-id":2,"timestamp":1596219816165}

Add Sample Long Object (sample-event-time-chart only)

```json
{
  "$schema": "http://json-schema.org/draft/2019-09/schema",
  "title": "AddSampleLong",
  "type": "object",
  "required": ["subject", "statistic-id", "value", "timestamp"],
  "properties": {
    "subject": { "type": "string", "description": "Always 'add-sample-long'" },
    "statistic-id": { "type": "integer", "description": "The unique statistic id" },
    "value": { "type": "integer", "description": "The measured value" },
    "timestamp": { "type": "integer", "description": "Unix-like time stamp" }
  }
}
```

Example:

{"subject":"add-sample-long","statistic-id":2,"value":105,"timestamp":1596219842468}

Add Sample Error Object (sample-event-time-chart only)

```json
{
  "$schema": "http://json-schema.org/draft/2019-09/schema",
  "title": "AddSampleError",
  "type": "object",
  "required": ["subject", "statistic-id", "error-subject", "error-severity", "timestamp"],
  "properties": {
    "subject": { "type": "string", "description": "Always 'add-sample-error'" },
    "statistic-id": { "type": "integer", "description": "The unique statistic id" },
    "error-subject": { "type": "string", "description": "The subject or title of the error" },
    "error-severity": { "type": "string", "description": "'warning' or 'error' or 'fatal'" },
    "error-type": { "type": "string", "description": "The type of the error. Errors with the same error type can be grouped." },
    "error-log": { "type": "string", "description": "The error log. Multiple lines are supported by adding \r\n line terminators." },
    "error-context": { "type": "string", "description": "Context information about the condition under which the error occurred. Multiple lines are supported by adding \r\n line terminators." },
    "timestamp": { "type": "integer", "description": "Unix-like time stamp" }
  }
}
```

Example:

```json
{
  "subject":"add-sample-error",
  "statistic-id":2,
  "error-subject":"Connection refused (Connection refused)",
  "error-severity":"error",
  "error-type":"java.net.ConnectException",
  "error-log":"2020-08-01 21:24:51.662 | main-HTTPClientProcessing[3] | INFO | GET http://192.168.0.111/\r\n2020-08-01 21:24:51.670 | main-HTTPClientProcessing[3] | ERROR | Failed to open or reuse connection to 192.168.0.111:80 | java.net.ConnectException: Connection refused (Connection refused)\r\n",
  "error-context":"HTTP Request Header\r\nhttp://192.168.0.111/\r\nGET / HTTP/1.1\r\nHost: 192.168.0.111\r\nConnection: keep-alive\r\nAccept: */*\r\nAccept-Encoding: gzip, deflate\r\n",
  "timestamp":1596309891672
}
```

Note about the error-severity :

- warning : After the error has occurred, the simulated user continues with the execution of the current session loop iteration. Error color = yellow.

- error : After the error has occurred, the simulated user aborts the execution of the current session loop iteration and starts the next session loop iteration. Error color = red.

- fatal : After the error has occurred, the simulated user aborts any further execution of the test, which means that the test has ended for this simulated user. Error color = black.

Implementation note: After an error has occurred, the simulated user should wait at least 100 milliseconds before continuing its activities. This prevents several thousand errors from being measured and reported to the UI within a few seconds.
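The severity rules and the recommended pause can be captured in one place, as in this sketch. The class and enum names are hypothetical; the pause is skipped for `fatal` because the simulated user does not continue after such an error.

```java
/** Hypothetical mapping of the error-severity values to the behavior described above. */
final class ErrorPolicy {
    enum NextStep { CONTINUE_CURRENT_LOOP, START_NEXT_LOOP, END_USER }

    static final long MIN_PAUSE_AFTER_ERROR_MILLIS = 100;

    static NextStep nextStep(String errorSeverity) {
        switch (errorSeverity) {
            case "warning": return NextStep.CONTINUE_CURRENT_LOOP; // yellow
            case "error":   return NextStep.START_NEXT_LOOP;       // red
            case "fatal":   return NextStep.END_USER;              // black
            default: throw new IllegalArgumentException("unknown error-severity: " + errorSeverity);
        }
    }

    /** Returns the next step; pauses first unless the user ends, to avoid flooding the UI. */
    static NextStep afterError(String errorSeverity) throws InterruptedException {
        NextStep step = nextStep(errorSeverity);
        if (step != NextStep.END_USER) {
            Thread.sleep(MIN_PAUSE_AFTER_ERROR_MILLIS);
        }
        return step;
    }
}
```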

Add Counter Long Object (cumulative-counter-long only)

For cumulative-counter-long statistics there is no 2-step mechanism as for ‘sample-event-time-chart’ statistics. The value is simply increased by reporting an Add Counter Long object.

```json
{
  "$schema": "http://json-schema.org/draft/2019-09/schema",
  "title": "AddCounterLong",
  "type": "object",
  "required": ["subject", "statistic-id", "value"],
  "properties": {
    "subject": { "type": "string", "description": "Always 'add-counter-long'" },
    "statistic-id": { "type": "integer", "description": "The unique statistic id" },
    "value": { "type": "integer", "description": "The value to increment" }
  }
}
```

Example:

{"subject":"add-counter-long","statistic-id":10,"value":2111}

Add Average Delta And Current Value Object (average-and-current-value only)

To update an average-and-current-value statistic, the delta (difference) of the cumulated sum and the delta (difference) of the cumulated number of values have to be reported. The platform then calculates the average value by dividing the cumulated sum by the cumulated number of values. In addition, the last measured value must also be reported.

```json
{
  "$schema": "http://json-schema.org/draft/2019-09/schema",
  "title": "AddAverageDeltaAndCurrentValue",
  "type": "object",
  "required": ["subject", "statistic-id", "sumValuesDelta", "numValuesDelta", "currentValue", "currentValueTimestamp"],
  "properties": {
    "subject": { "type": "string", "description": "Always 'add-average-delta-and-current-value'" },
    "statistic-id": { "type": "integer", "description": "The unique statistic id" },
    "sumValuesDelta": { "type": "integer", "description": "The sum of delta values to add to the average" },
    "numValuesDelta": { "type": "integer", "description": "The number of delta values to add to the average" },
    "currentValue": { "type": "integer", "description": "The current value, or -1 if no such data is available" },
    "currentValueTimestamp": { "type": "integer", "description": "The Unix-like timestamp of the current value, or -1 if no such data is available" }
  }
}
```

Example:

```json
{
  "subject":"add-average-delta-and-current-value",
  "statistic-id":100005,
  "sumValuesDelta":6302,
  "numValuesDelta":22,
  "currentValue":272,
  "currentValueTimestamp":1634401774374
}
```
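A program typically keeps the running sum and count since the last report and resets them after each report. The sketch below shows this bookkeeping for an average-and-current-value statistic; `AverageDeltaTracker` is a hypothetical name, and when to call `nextJsonLine()` (for example every few seconds) is left to the program.

```java
/** Hypothetical per-statistic tracker for an average-and-current-value statistic. */
final class AverageDeltaTracker {
    private final int statisticId;
    private long sumValuesDelta;             // sum of values added since the last report
    private long numValuesDelta;             // number of values added since the last report
    private long currentValue = -1;          // -1 = no data available yet
    private long currentValueTimestamp = -1; // -1 = no data available yet

    AverageDeltaTracker(int statisticId) {
        this.statisticId = statisticId;
    }

    /** Records one measured value. */
    synchronized void add(long value, long timestamp) {
        sumValuesDelta += value;
        numValuesDelta++;
        currentValue = value;
        currentValueTimestamp = timestamp;
    }

    /** Returns the JSON line with the deltas since the previous call and resets the deltas. */
    synchronized String nextJsonLine() {
        String line = "{\"subject\":\"add-average-delta-and-current-value\""
                + ",\"statistic-id\":" + statisticId
                + ",\"sumValuesDelta\":" + sumValuesDelta
                + ",\"numValuesDelta\":" + numValuesDelta
                + ",\"currentValue\":" + currentValue
                + ",\"currentValueTimestamp\":" + currentValueTimestamp
                + "}";
        sumValuesDelta = 0;
        numValuesDelta = 0;
        return line;
    }
}
```

Because only sums and counts are reported, the platform can add up the deltas of several measuring agents and still divide the total sum by the total count.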

Add Efficiency Ratio Delta Object (efficiency-ratio-percent only)

To update an efficiency-ratio-percent statistic, the delta (difference) of the number of efficiently performed procedures and the delta (difference) of the number of inefficiently performed procedures have to be reported.

```json
{
  "$schema": "http://json-schema.org/draft/2019-09/schema",
  "title": "AddEfficiencyRatioDelta",
  "type": "object",
  "required": ["subject", "statistic-id", "efficiencyDeltaValue", "inefficiencyDeltaValue"],
  "properties": {
    "subject": { "type": "string", "description": "Always 'add-efficiency-ratio-delta'" },
    "statistic-id": { "type": "integer", "description": "The unique statistic id" },
    "efficiencyDeltaValue": { "type": "integer", "description": "The number of efficiently performed procedures to add" },
    "inefficiencyDeltaValue": { "type": "integer", "description": "The number of inefficiently performed procedures to add" }
  }
}
```

Example:

```json
{
  "subject":"add-efficiency-ratio-delta",
  "statistic-id":100006,
  "efficiencyDeltaValue":6,
  "inefficiencyDeltaValue":22
}
```
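The same delta-and-reset pattern applies to efficiency-ratio-percent statistics. In the sketch below, `EfficiencyRatioTracker` is a hypothetical name, and what counts as an "efficient" procedure is decided by the caller, since the interface leaves that to the measuring program.

```java
/** Hypothetical per-statistic tracker for an efficiency-ratio-percent statistic. */
final class EfficiencyRatioTracker {
    private final int statisticId;
    private long efficiencyDelta;   // efficiently performed procedures since the last report
    private long inefficiencyDelta; // inefficiently performed procedures since the last report

    EfficiencyRatioTracker(int statisticId) {
        this.statisticId = statisticId;
    }

    /** Counts one performed procedure as efficient or inefficient. */
    synchronized void record(boolean efficient) {
        if (efficient) {
            efficiencyDelta++;
        } else {
            inefficiencyDelta++;
        }
    }

    /** Returns the JSON line with the counts since the previous call and resets them. */
    synchronized String nextJsonLine() {
        String line = "{\"subject\":\"add-efficiency-ratio-delta\",\"statistic-id\":" + statisticId
                + ",\"efficiencyDeltaValue\":" + efficiencyDelta
                + ",\"inefficiencyDeltaValue\":" + inefficiencyDelta + "}";
        efficiencyDelta = 0;
        inefficiencyDelta = 0;
        return line;
    }
}
```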

Add Throughput Delta Object (throughput-time-chart only)

To update a throughput-time-chart statistic, the delta (difference) between the last reported absolute, cumulated value and the current cumulated value has to be reported, together with the current timestamp, which is included in the calculation.

Although this type of statistic always has the unit throughput per second, a measured delta (difference) value can be reported at any time.

```json
{
  "$schema": "http://json-schema.org/draft/2019-09/schema",
  "title": "AddThroughputDelta",
  "type": "object",
  "required": ["subject", "statistic-id", "delta-value", "timestamp"],
  "properties": {
    "subject": { "type": "string", "description": "Always 'add-throughput-delta'" },
    "statistic-id": { "type": "integer", "description": "The unique statistic id" },
    "delta-value": { "type": "number", "description": "The delta (difference) value" },
    "timestamp": { "type": "integer", "description": "The Unix-like timestamp of the delta (difference) value" }
  }
}
```

Example:

```json
{
  "subject":"add-throughput-delta",
  "statistic-id":100003,
  "delta-value":0.53612,
  "timestamp":1634401774410
}
```
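If the measured quantity is only available as an absolute, cumulative value (for example a total byte count), the delta can be derived as in the following sketch. `ThroughputDeltaTracker` is hypothetical, and the initial baseline passed to the constructor is an assumption the program has to choose (for example the counter value at test start).

```java
/** Hypothetical converter from an absolute, cumulative counter to add-throughput-delta lines. */
final class ThroughputDeltaTracker {
    private final int statisticId;
    private double lastCumulative; // cumulative value at the time of the previous report

    ThroughputDeltaTracker(int statisticId, double initialCumulative) {
        this.statisticId = statisticId;
        this.lastCumulative = initialCumulative;
    }

    /** Reports the difference between the new cumulative value and the previously reported one. */
    synchronized String onCumulativeValue(double cumulative, long timestamp) {
        double delta = cumulative - lastCumulative;
        if (!Double.isFinite(delta)) {
            throw new IllegalArgumentException("delta-value must be a finite number");
        }
        lastCumulative = cumulative;
        return "{\"subject\":\"add-throughput-delta\",\"statistic-id\":" + statisticId
                + ",\"delta-value\":" + delta + ",\"timestamp\":" + timestamp + "}";
    }
}
```

Java prints `double` values with `Double.toString`, which can use exponent notation such as `1.0E7`; that is still a valid JSON number.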

Add Test Result Annotation Exec Event Object

Add an annotation event to the test result.

```json
{
  "$schema": "http://json-schema.org/draft/2019-09/schema",
  "title": "AddTestResultAnnotationExecEvent",
  "type": "object",
  "required": ["subject", "event-id", "event-text", "timestamp"],
  "properties": {
    "subject": { "type": "string", "description": "Always 'add-test-result-annotation-exec-event'" },
    "event-id": { "type": "integer", "description": "The event id, valid range: -1 .. -999999" },
    "event-text": { "type": "string", "description": "The event text" },
    "timestamp": { "type": "integer", "description": "The Unix-like timestamp of the event" }
  }
}
```

Example:

```json
{
  "subject":"add-test-result-annotation-exec-event",
  "event-id":-1,
  "event-text":"Too many errors: Test job stopped by plug-in",
  "timestamp":1634401774410
}
```

Notes:

- The event id must be in the range from -1 (minus one) to -999999.

- Events with the same event id are merged to one event.
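The event id range check and the line format can be combined as follows. This is a sketch with a hypothetical class name and a minimal JSON string escaper.

```java
/** Hypothetical builder for an 'add-test-result-annotation-exec-event' line. */
final class AnnotationEvent {
    static final int MIN_EVENT_ID = -999999;
    static final int MAX_EVENT_ID = -1;

    static String toJsonLine(int eventId, String eventText, long timestamp) {
        if (eventId < MIN_EVENT_ID || eventId > MAX_EVENT_ID) {
            throw new IllegalArgumentException("event-id must be in the range -1 .. -999999");
        }
        return "{\"subject\":\"add-test-result-annotation-exec-event\",\"event-id\":" + eventId
                + ",\"event-text\":" + quote(eventText) + ",\"timestamp\":" + timestamp + "}";
    }

    /** Minimal JSON string quoting (quotes, backslashes, control characters). */
    private static String quote(String s) {
        StringBuilder sb = new StringBuilder("\"");
        for (char c : s.toCharArray()) {
            if (c == '"' || c == '\\') {
                sb.append('\\').append(c);
            } else if (c < 0x20) {
                sb.append(String.format("\\u%04x", (int) c));
            } else {
                sb.append(c);
            }
        }
        return sb.append('"').toString();
    }
}
```

Reusing one event id, for example -1 for every "too many errors" event, merges those events into one, as described in the notes.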

[End of Interface Specification]

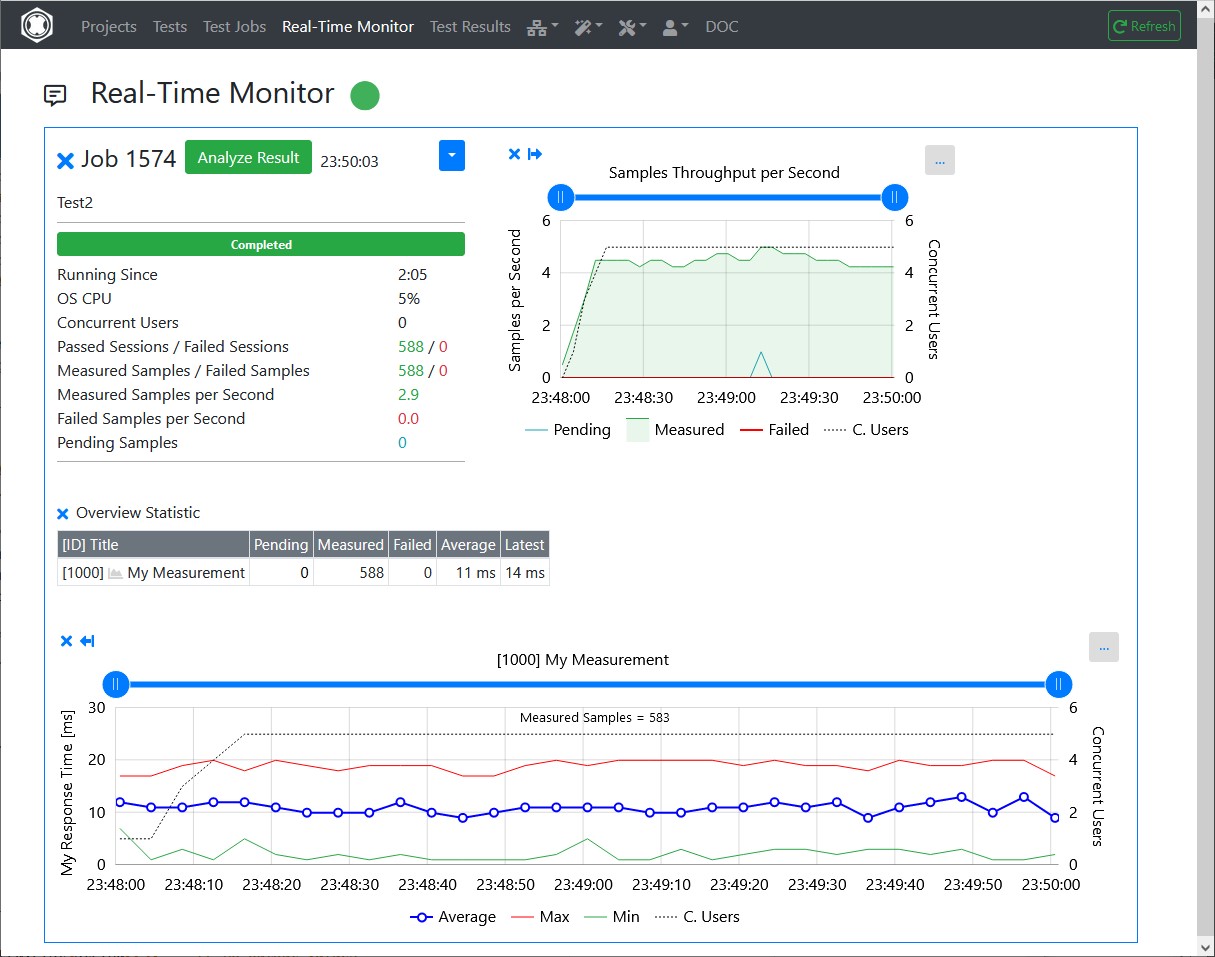

Example

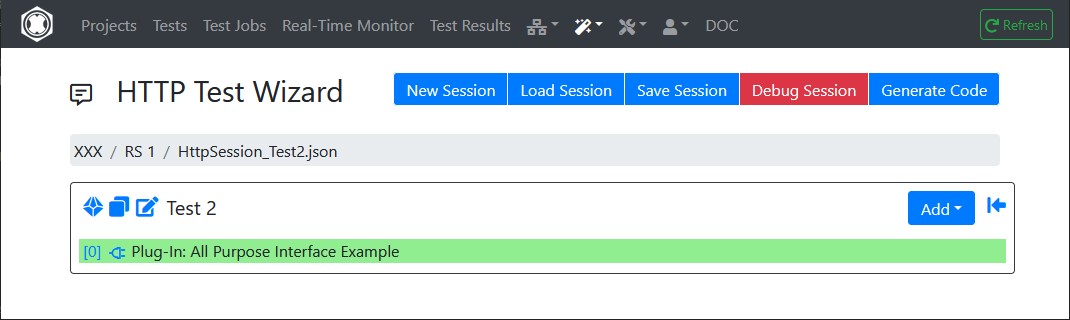

HTTP Test Wizard Plug-In

This plug-in “measures” a random value, and is executed in this example as the only part of an HTTP Test Wizard session.

The All Purpose Interface JSON objects are written using the corresponding methods of the com.dkfqs.tools.javatest.AbstractJavaTest class. This class is located in the JAR file com.dkfqs.tools.jar which is already predefined for all plug-ins.

Java Doc of com.dkfqs.tools

The Java Doc for the packages of com.dkfqs.tools is located at https://download.realload.com/dkfqs_tools/v2.2.25/JavaDoc/index.html

```java
import com.dkfqs.tools.javatest.AbstractJavaTest;
import com.dkfqs.tools.javatest.AbstractJavaTestPluginContext;
import com.dkfqs.tools.javatest.AbstractJavaTestPluginInterface;
import com.dkfqs.tools.javatest.AbstractJavaTestPluginSessionFailedException;
import com.dkfqs.tools.javatest.AbstractJavaTestPluginTestFailedException;
import com.dkfqs.tools.javatest.AbstractJavaTestPluginUserFailedException;
import com.dkfqs.tools.logging.LogAdapterInterface;
import java.util.ArrayList;
import java.util.List;
// add your imports here

/**
 * HTTP Test Wizard Plug-In 'All Purpose Interface Example'.
 * Plug-in Type: Normal Session Element Plug-In.
 * Created by 'DKF' at 24 Sep 2021 22:50:04
 * DKFQS 4.3.22
 */
@AbstractJavaTestPluginInterface.PluginResourceFiles(fileNames={"com.dkfqs.tools.jar"})
public class AllPurposeInterfaceExample implements AbstractJavaTestPluginInterface {
    private LogAdapterInterface log = null;
    private static final int STATISTIC_ID = 1000;
    private AbstractJavaTest javaTest = null; // reference to the generated test program

    /**
     * Called by environment when the instance is created.
     * @param log the log adapter
     */
    @Override
    public void setLog(LogAdapterInterface log) {
        this.log = log;
    }

    /**
     * On plug-in initialize. Called when the plug-in is initialized. <br>
     * Depending on the initialization scope of the plug-in the following specific exceptions can be thrown:<ul>
     * <li>Initialization scope <b>global:</b> AbstractJavaTestPluginTestFailedException</li>
     * <li>Initialization scope <b>user:</b> AbstractJavaTestPluginTestFailedException, AbstractJavaTestPluginUserFailedException</li>
     * <li>Initialization scope <b>session:</b> AbstractJavaTestPluginTestFailedException, AbstractJavaTestPluginUserFailedException, AbstractJavaTestPluginSessionFailedException</li>
     * </ul>
     * @param javaTest the reference to the executed test program, or null if no such information is available (in debugger environment)
     * @param pluginContext the plug-in context
     * @param inputValues the list of input values
     * @return the list of output values
     * @throws AbstractJavaTestPluginSessionFailedException if the plug-in signals that the 'user session' has to be aborted (abort current session - continue next session)
     * @throws AbstractJavaTestPluginUserFailedException if the plug-in signals that the user has to be terminated
     * @throws AbstractJavaTestPluginTestFailedException if the plug-in signals that the test has to be terminated
     * @throws Exception if an error occurs in the implementation of this method
     */
    @Override
    public List<String> onInitialize(AbstractJavaTest javaTest, AbstractJavaTestPluginContext pluginContext, List<String> inputValues) throws AbstractJavaTestPluginSessionFailedException, AbstractJavaTestPluginUserFailedException, AbstractJavaTestPluginTestFailedException, Exception {
        // log.message(log.LOG_INFO, "onInitialize(...)");
        // --- vvv --- start of specific onInitialize code --- vvv ---
        if (javaTest != null) {
            this.javaTest = javaTest;
            // declare the statistic
            javaTest.declareStatistic(STATISTIC_ID,
                    AbstractJavaTest.STATISTIC_TYPE_SAMPLE_EVENT_TIME_CHART,
                    "My Measurement",
                    "",
                    "My Response Time",
                    "ms",
                    STATISTIC_ID,
                    true,
                    "");
        }
        // --- ^^^ --- end of specific onInitialize code --- ^^^ ---
        return new ArrayList<String>(); // no output values
    }

    /**
     * On plug-in execute. Called when the plug-in is executed. <br>
     * Depending on the execution scope of the plug-in the following specific exceptions can be thrown:<ul>
     * <li>Execution scope <b>global:</b> AbstractJavaTestPluginTestFailedException</li>
     * <li>Execution scope <b>user:</b> AbstractJavaTestPluginTestFailedException, AbstractJavaTestPluginUserFailedException</li>
     * <li>Execution scope <b>session:</b> AbstractJavaTestPluginTestFailedException, AbstractJavaTestPluginUserFailedException, AbstractJavaTestPluginSessionFailedException</li>
     * </ul>
     * @param pluginContext the plug-in context
     * @param inputValues the list of input values
     * @return the list of output values
     * @throws AbstractJavaTestPluginSessionFailedException if the plug-in signals that the 'user session' has to be aborted (abort current session - continue next session)
     * @throws AbstractJavaTestPluginUserFailedException if the plug-in signals that the user has to be terminated
     * @throws AbstractJavaTestPluginTestFailedException if the plug-in signals that the test has to be terminated
     * @throws Exception if an error occurs in the implementation of this method
     */
    @Override
    public List<String> onExecute(AbstractJavaTestPluginContext pluginContext, List<String> inputValues) throws AbstractJavaTestPluginSessionFailedException, AbstractJavaTestPluginUserFailedException, AbstractJavaTestPluginTestFailedException, Exception {
        // log.message(log.LOG_INFO, "onExecute(...)");
        // --- vvv --- start of specific onExecute code --- vvv ---
        if (javaTest != null) {
            // register the start of the sample
            javaTest.registerSampleStart(STATISTIC_ID);
            // measure the sample
            final long min = 1L;
            final long max = 20L;
            long responseTime = Math.round(((Math.random() * (max - min)) + min));
            // add the measured sample to the statistic
            javaTest.addSampleLong(STATISTIC_ID, responseTime);
            /*
            // error case
            javaTest.addSampleError(STATISTIC_ID,
                    "My error subject",
                    AbstractJavaTest.ERROR_SEVERITY_WARNING,
                    "My error type",
                    "My error response text or log",
                    "");
            */
        }
        // --- ^^^ --- end of specific onExecute code --- ^^^ ---
        return new ArrayList<String>(); // no output values
    }

    /**
     * On plug-in deconstruct. Called when the plug-in is deconstructed.
     * @param pluginContext the plug-in context
     * @param inputValues the list of input values
     * @return the list of output values
     * @throws Exception if an error occurs in the implementation of this method
     */
    @Override
    public List<String> onDeconstruct(AbstractJavaTestPluginContext pluginContext, List<String> inputValues) throws Exception {
        // log.message(log.LOG_INFO, "onDeconstruct(...)");
        // --- vvv --- start of specific onDeconstruct code --- vvv ---
        // no code here
        // --- ^^^ --- end of specific onDeconstruct code --- ^^^ ---
        return new ArrayList<String>(); // no output values
    }
}
```

Debugging the Interface

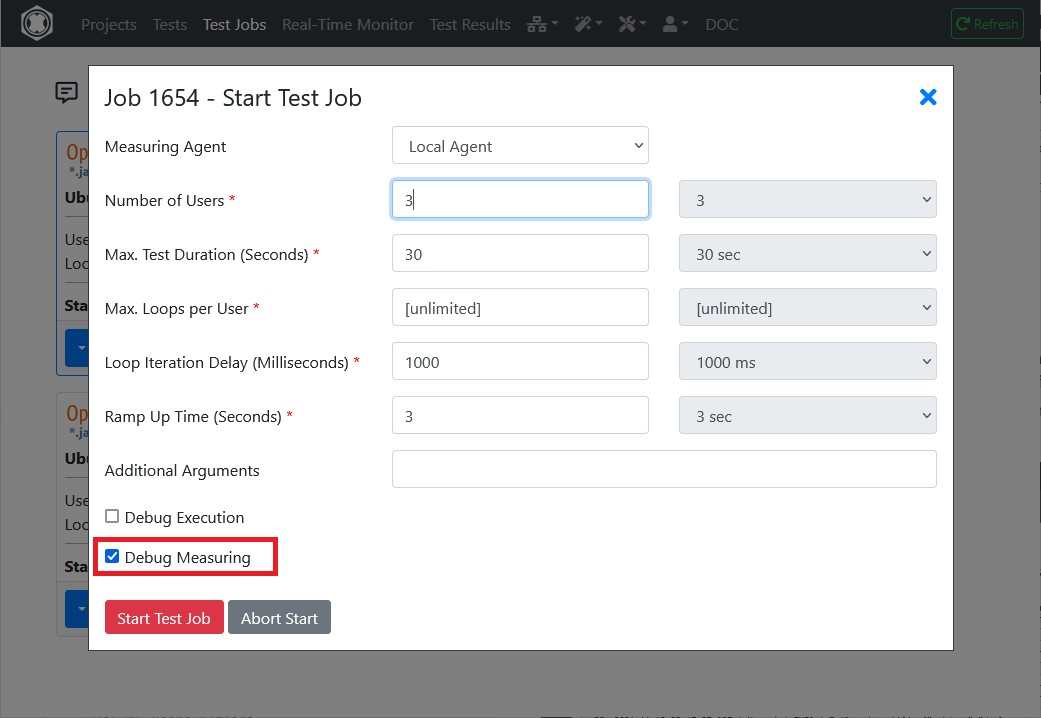

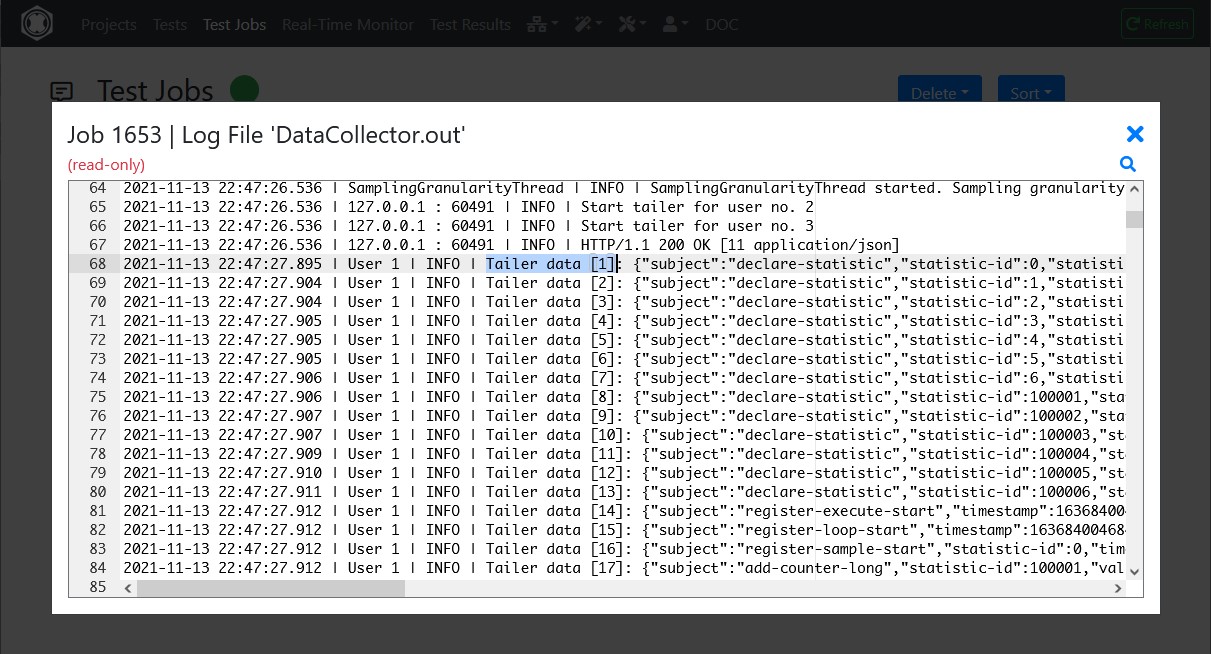

- To debug the processing of the data reported by the interface, activate the “Debug Measuring” checkbox when starting the test job.

- After the test job has completed, open the Test Jobs menu, select the “Job Log Files” option for the corresponding test job, and then select the file “DataCollector.out”.

- Review the “DataCollector.out” file for any errors. Lines that contain “| Tailer data” show the raw reported data.
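If the log is long, a few lines of Java can pull out just the raw reported data. This is a minimal sketch that assumes you have downloaded “DataCollector.out” to your machine; the class name TailerDataFilter is hypothetical, and only the “| Tailer data” marker comes from the log format described above.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.Stream;

// Hypothetical helper: extracts the raw reported data lines from a locally downloaded DataCollector.out.
final class TailerDataFilter {

    // Marker that identifies lines carrying the raw reported data
    static final String MARKER = "| Tailer data";

    static List<String> rawDataLines(Path logFile) throws IOException {
        // Files.lines reads lazily; try-with-resources closes the underlying file handle
        try (Stream<String> lines = Files.lines(logFile)) {
            return lines.filter(line -> line.contains(MARKER))
                        .collect(Collectors.toList());
        }
    }
}
```

For example, `TailerDataFilter.rawDataLines(Path.of("DataCollector.out")).forEach(System.out::println);` prints only the raw data lines, which makes it easier to compare them with what your interface intended to report.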

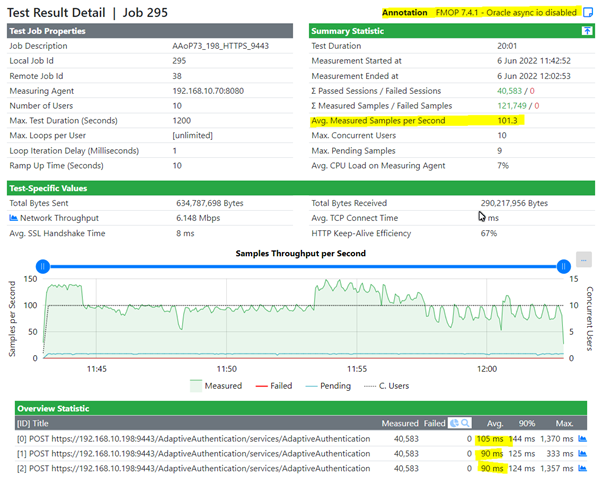

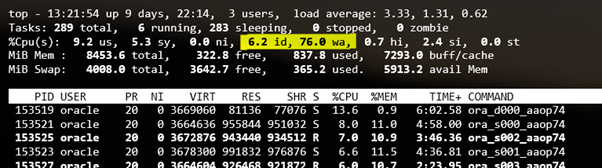

Real Load Synthetic Monitoring Generally Available!

Performance testing as the main course and synthetic monitoring for dessert? Now available at Real Load.

We recently launched a new version of our Real Load portal that adds the ability to monitor your applications periodically. The best part is that you can reuse the load testing scripts you have already developed to do so. It makes sense to reuse the same underlying technology for both tasks, doesn’t it?

And, of course, nobody forces you to have the main course followed by dessert. You can also have the dessert (… synthetic monitoring) first, followed by the main course (… load testing). Or perhaps the dessert is the main course for you: arrange the menu as it best suits your taste.

How it works

As with load testing, you first need to prepare your test script. Let’s assume you’ve already prepared it using our Wizards. Once the script is ready, all that remains is to schedule it for periodic execution.

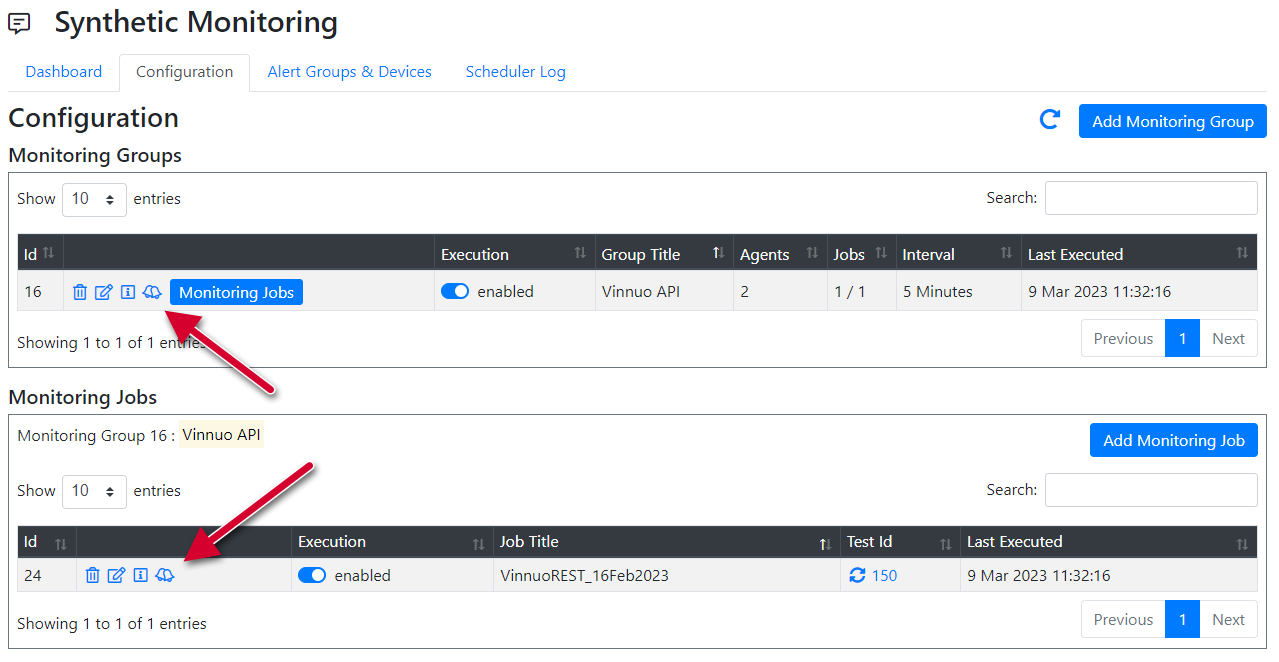

Configuring Monitoring Groups and Jobs

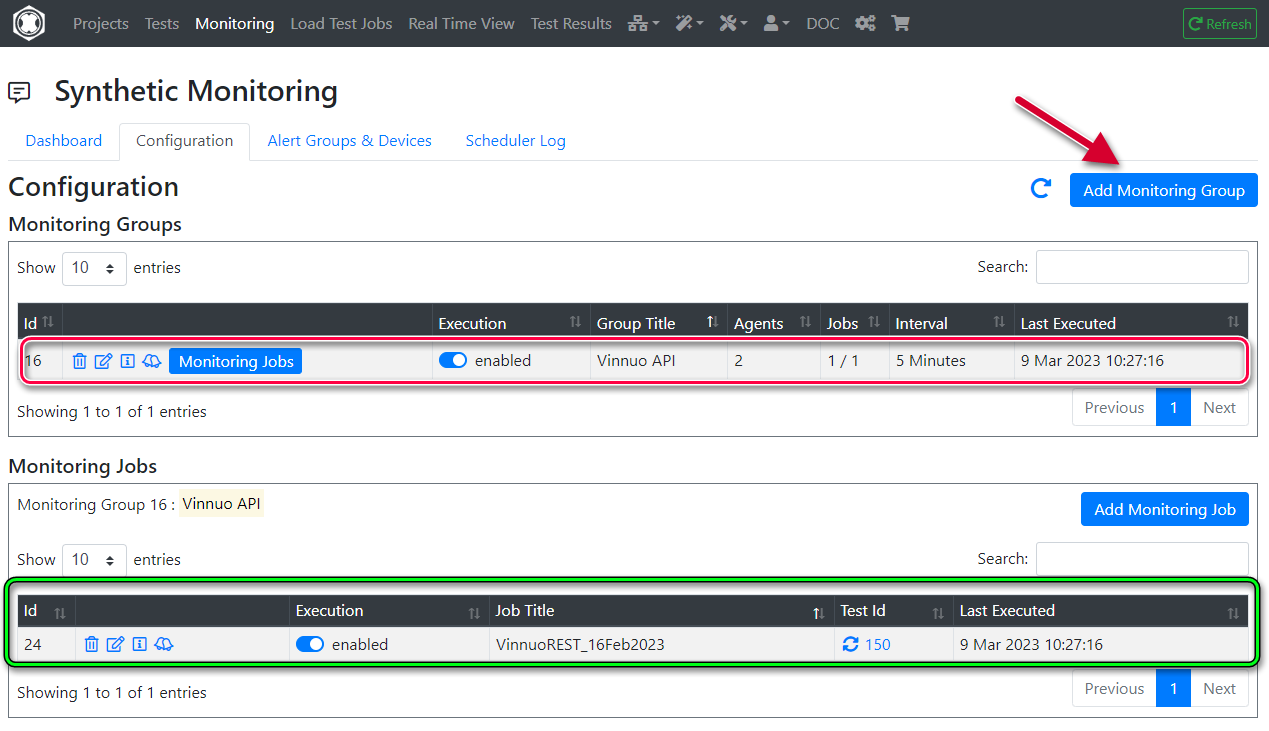

You can set up so-called Monitoring Groups, each made up of one or more Monitoring Jobs. Each Monitoring Job executes one of your prepared test scripts.

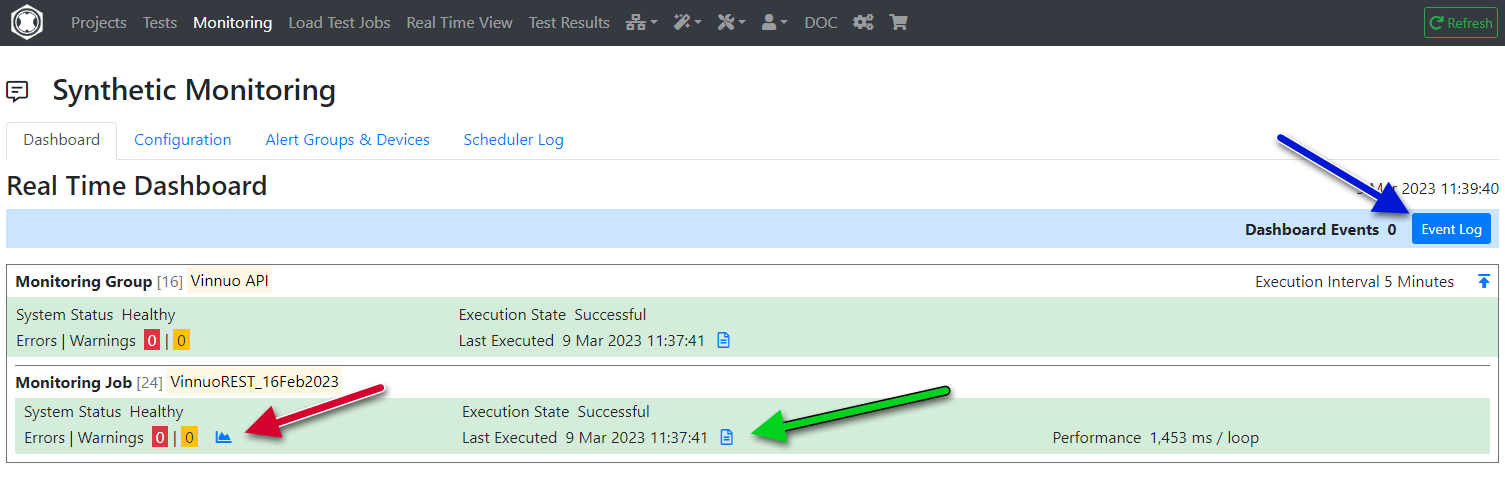

This screenshot shows one Monitoring Group called “Vinnuo APIs” (in the red box), which executes one test script (highlighted in the green box).

Scheduling execution interval and location

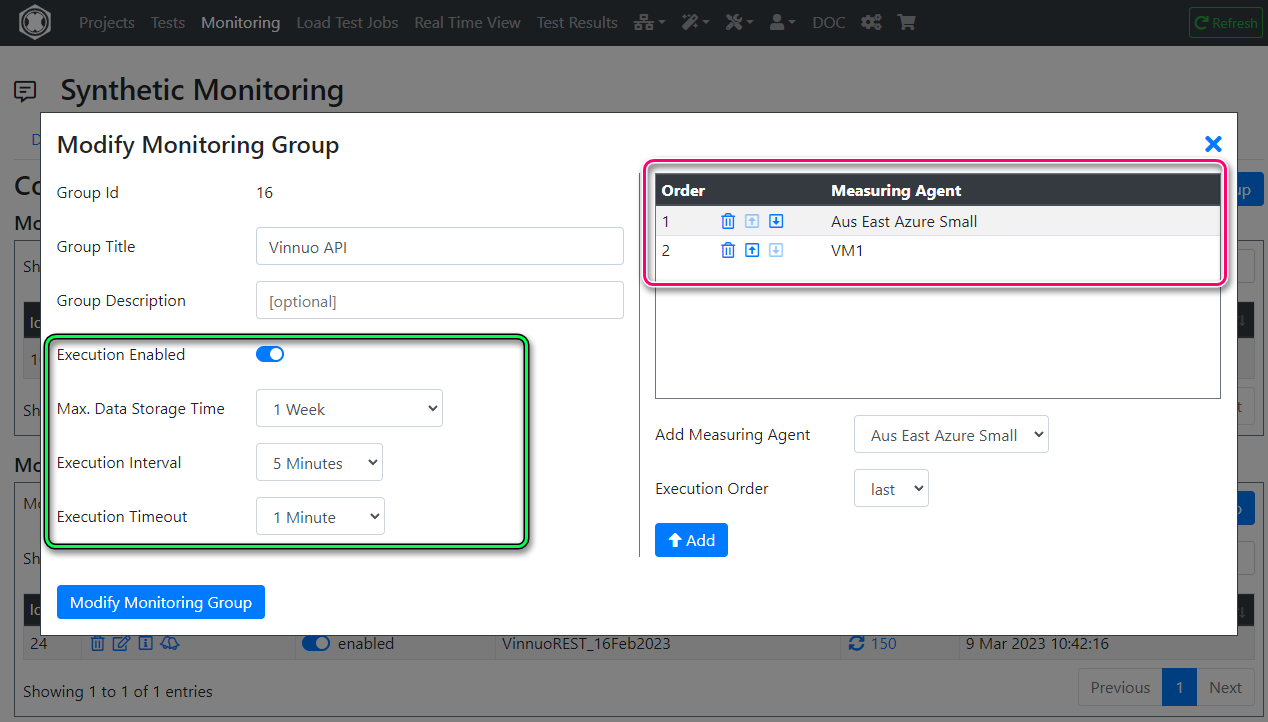

The key properties you can configure on a Monitoring Group are:

- Execution interval: Down to 1 minute, depending on licensing level.

- Execution timeout: How long to wait for the job to complete, before considering it failed.

- Max. Data Storage: How long to retain job execution results. Up to approx. 1 year, depending on licensing level.

- Measuring Agents: The locations (agents) from which to execute the monitoring jobs. We recommend executing the job from at least 2 agents.

Finally, you can enable or disable execution for the group using the Execution Enabled toggle switch.
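To get a feel for how these settings interact, the sketch below works out how many job executions a group produces per day and how many results accumulate over the retention period. It is plain arithmetic, not a Real Load API: the record and its fields are hypothetical, and it assumes that every selected agent runs every job in the group once per interval.

```java
import java.time.Duration;

// Hypothetical model of a Monitoring Group's key settings (not a Real Load API).
record MonitoringGroupSettings(Duration interval, Duration retention, int agents, int jobs) {

    MonitoringGroupSettings {
        // Intervals go down to 1 minute; depending on licensing level the allowed minimum may be higher
        if (interval.compareTo(Duration.ofMinutes(1)) < 0) {
            throw new IllegalArgumentException("interval must be at least 1 minute");
        }
        if (agents < 1 || jobs < 1) {
            throw new IllegalArgumentException("need at least one agent and one job");
        }
    }

    // Assumption: each agent executes each job once per interval
    long executionsPerDay() {
        return Duration.ofDays(1).dividedBy(interval) * agents * jobs;
    }

    // Results held once the Max. Data Storage period is fully used
    long retainedResults() {
        return retention.dividedBy(interval) * agents * jobs;
    }
}
```

Under that assumption, a group with one job, a 1-minute interval, the recommended 2 agents and roughly 1 year of storage yields 2,880 executions per day and about 1.05 million stored results, which is worth keeping in mind when choosing the interval.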

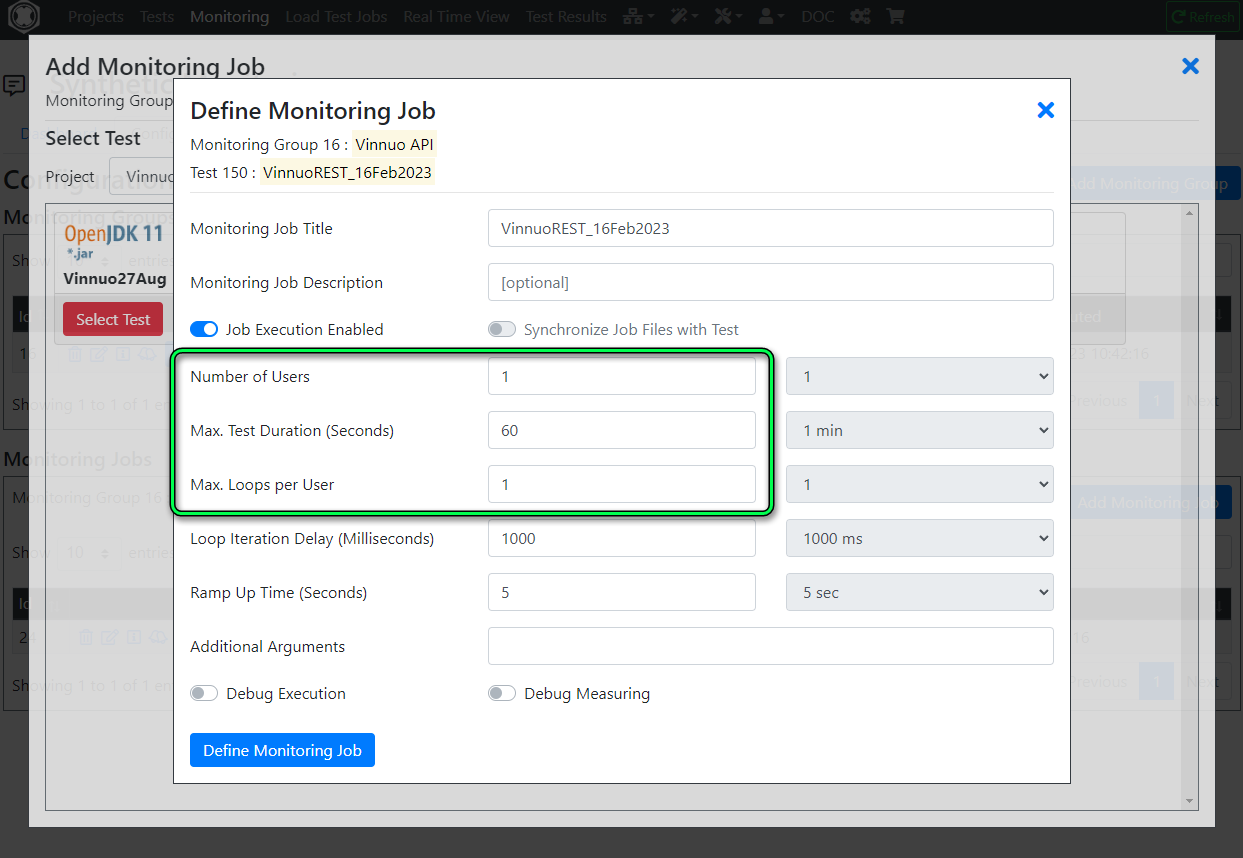

Configuring Monitoring Job

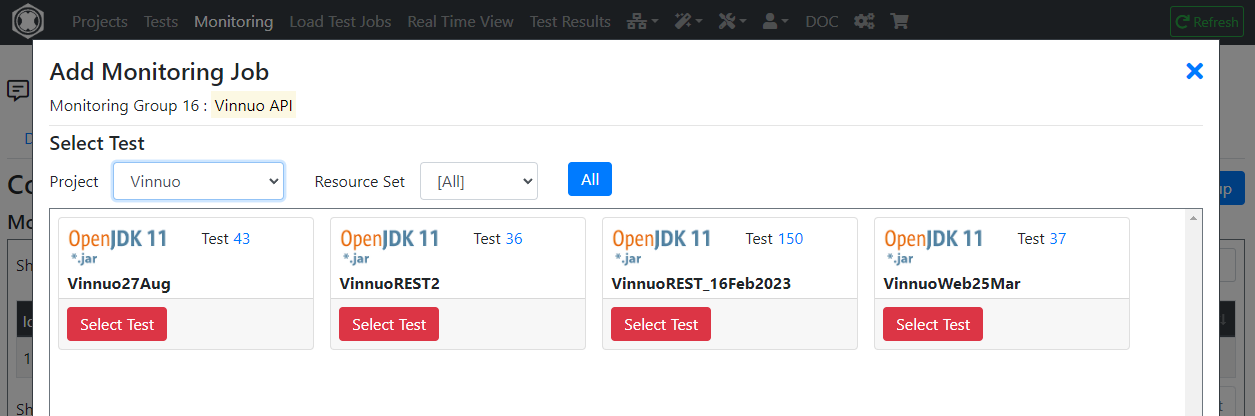

Next, add at least one Monitoring Job to the Monitoring Group. Simply select a previously prepared test from one of your projects.

In this example, I’ve picked one of the tests that generates the relevant REST API call(s).

Next, you’ll need to configure these key parameters for the execution of the test script. If you’re familiar with the load testing features of our product, these parameters will look familiar:

- Number of Users: The number of Virtual Users to simulate. Given this is a monitoring job, this would typically be a low number.

- Max test Duration: This limits the duration of the job. Again, since this is a monitoring job, the max duration should be kept short.

- Max Loops per User: The maximum number of iterations of the test script executed by each Virtual User. One iteration should typically be sufficient for a monitoring job.
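These parameters bound how much work one monitoring execution can do. The hypothetical sketch below (not a Real Load API) computes the maximum number of script iterations per execution and flags two settings worth a second look. Treating a Max Test Duration longer than the group’s Execution Timeout as a misconfiguration is our own rule of thumb, based on the timeout marking a job as failed if it has not completed in time.

```java
import java.time.Duration;
import java.util.ArrayList;
import java.util.List;

// Hypothetical model of a Monitoring Job's execution parameters (not a Real Load API).
record MonitoringJobSettings(int users, Duration maxTestDuration, int maxLoopsPerUser) {

    // Upper bound on script iterations: every Virtual User runs at most maxLoopsPerUser loops
    long maxIterations() {
        return (long) users * maxLoopsPerUser;
    }

    // Rule-of-thumb checks for a monitoring (as opposed to load testing) job
    List<String> warnings(Duration groupExecutionTimeout) {
        List<String> warnings = new ArrayList<>();
        if (maxTestDuration.compareTo(groupExecutionTimeout) > 0) {
            warnings.add("max test duration exceeds the group's execution timeout");
        }
        if (maxLoopsPerUser > 1) {
            warnings.add("one loop per user is usually sufficient for monitoring");
        }
        return warnings;
    }
}
```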

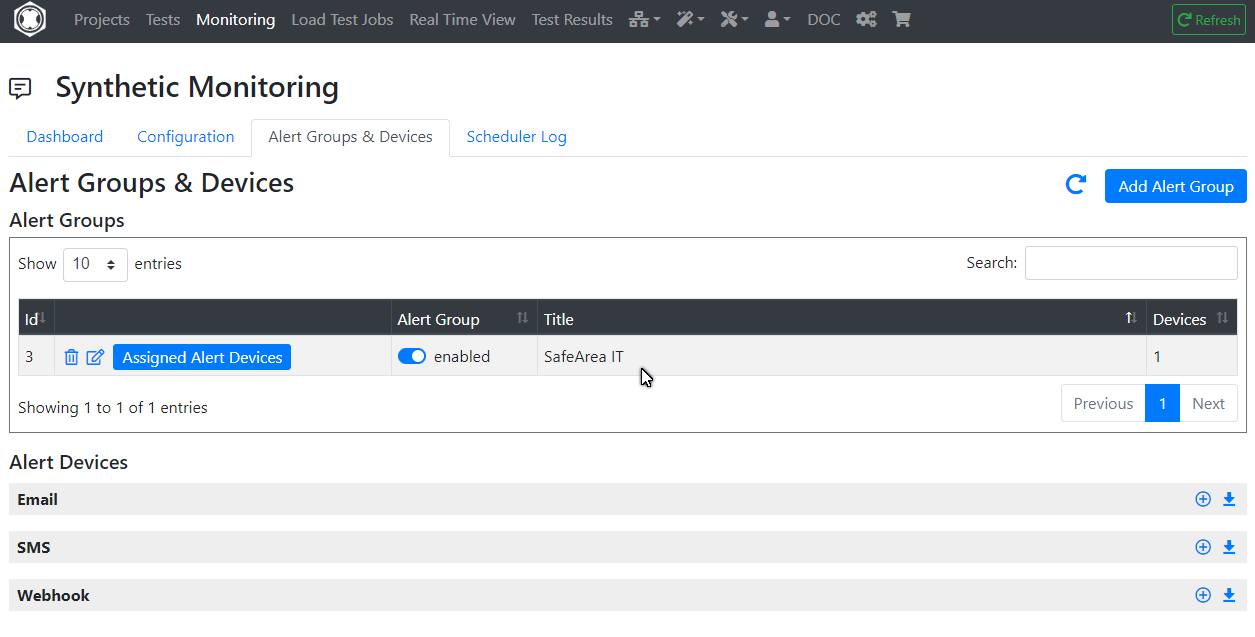

Alerting Groups and Devices

A synthetic monitoring solution wouldn’t be complete without alerting functionality.

You can configure Alerting Groups and assign devices of different types to each of them to be alerted. Supported device types are:

- Email: An email address to deliver the alert to.

- SMS: SMS alerting. This is subject to additional costs (SMS delivery costs).

- Webhook: You can configure a webhook, for example to integrate with an existing alerting system.
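If you want to try the Webhook device type before wiring it into an existing alerting system, a throwaway receiver is enough. The sketch below uses the JDK’s built-in com.sun.net.httpserver package; the /realload-alert path is hypothetical, and since the alert payload format is not described here, it simply records the HTTP method and whatever body arrives.

```java
import com.sun.net.httpserver.HttpServer;
import java.io.IOException;
import java.io.InputStream;
import java.net.InetSocketAddress;
import java.nio.charset.StandardCharsets;
import java.util.List;
import java.util.concurrent.CopyOnWriteArrayList;

// Minimal webhook receiver for testing alert delivery (hypothetical path; payload format not assumed).
final class AlertWebhookReceiver {

    private final HttpServer server;
    // Written by the server's dispatcher thread, read by other threads, hence a thread-safe list
    private final List<String> received = new CopyOnWriteArrayList<>();

    AlertWebhookReceiver(int port) throws IOException {
        server = HttpServer.create(new InetSocketAddress(port), 0);
        server.createContext("/realload-alert", exchange -> {
            try (InputStream body = exchange.getRequestBody()) {
                String payload = new String(body.readAllBytes(), StandardCharsets.UTF_8);
                received.add(exchange.getRequestMethod() + " " + payload);
                System.out.println("Alert received: " + payload);
            }
            // Reply 204 No Content (-1 = no response body) so the sender sees a successful delivery
            exchange.sendResponseHeaders(204, -1);
            exchange.close();
        });
    }

    void start() { server.start(); }

    void stop() { server.stop(0); }

    // Actual listening port (useful when constructed with port 0)
    int port() { return server.getAddress().getPort(); }

    List<String> received() { return received; }
}
```

The receiver has to be reachable from the Real Load portal, so in practice you would run it on a publicly accessible host and enter its URL as the webhook target.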

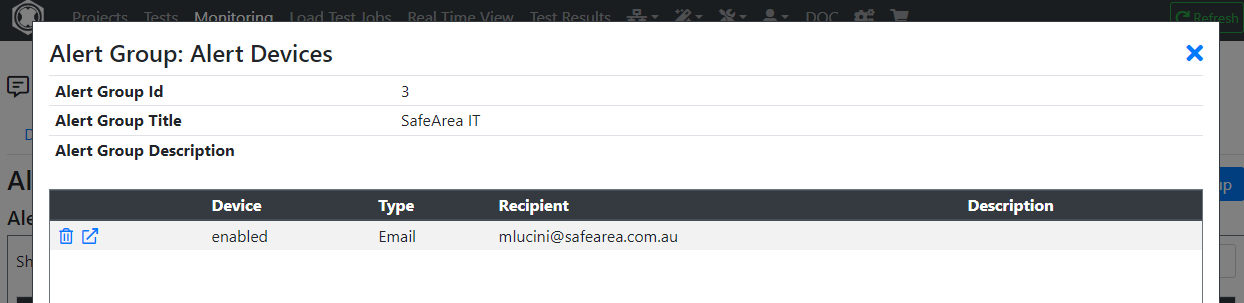

In this example, I’ve created an alerting group called “SafeArea IT”…

… to which I’ve assigned one email alerting device. Needless to say, you can assign the same alerting group to multiple

Now that the Alerting Groups are configured, you can configure alerting at the Monitoring Group or Monitoring Job level, whichever best suits your use case. Simply click on the alert icon and assign the Alerting Groups accordingly:

Monitoring Dashboard

Once you’re done with the configuration, you’ll be able to monitor the health of your applications from the Real Time Dashboard. Please note that this dashboard is still evolving, and we’re adding new features on a regular basis.

For now, you’ll be able to:

- See the overall status of all your synthetic monitoring jobs.

- Look at the results of the last test execution for each job by clicking on the graph symbol pointed at by the red arrow.

- Look at the logs of the last execution by clicking the symbol pointed at by the green arrow.

- Look at the overall scheduling log by clicking the symbol pointed at by the blue arrow.

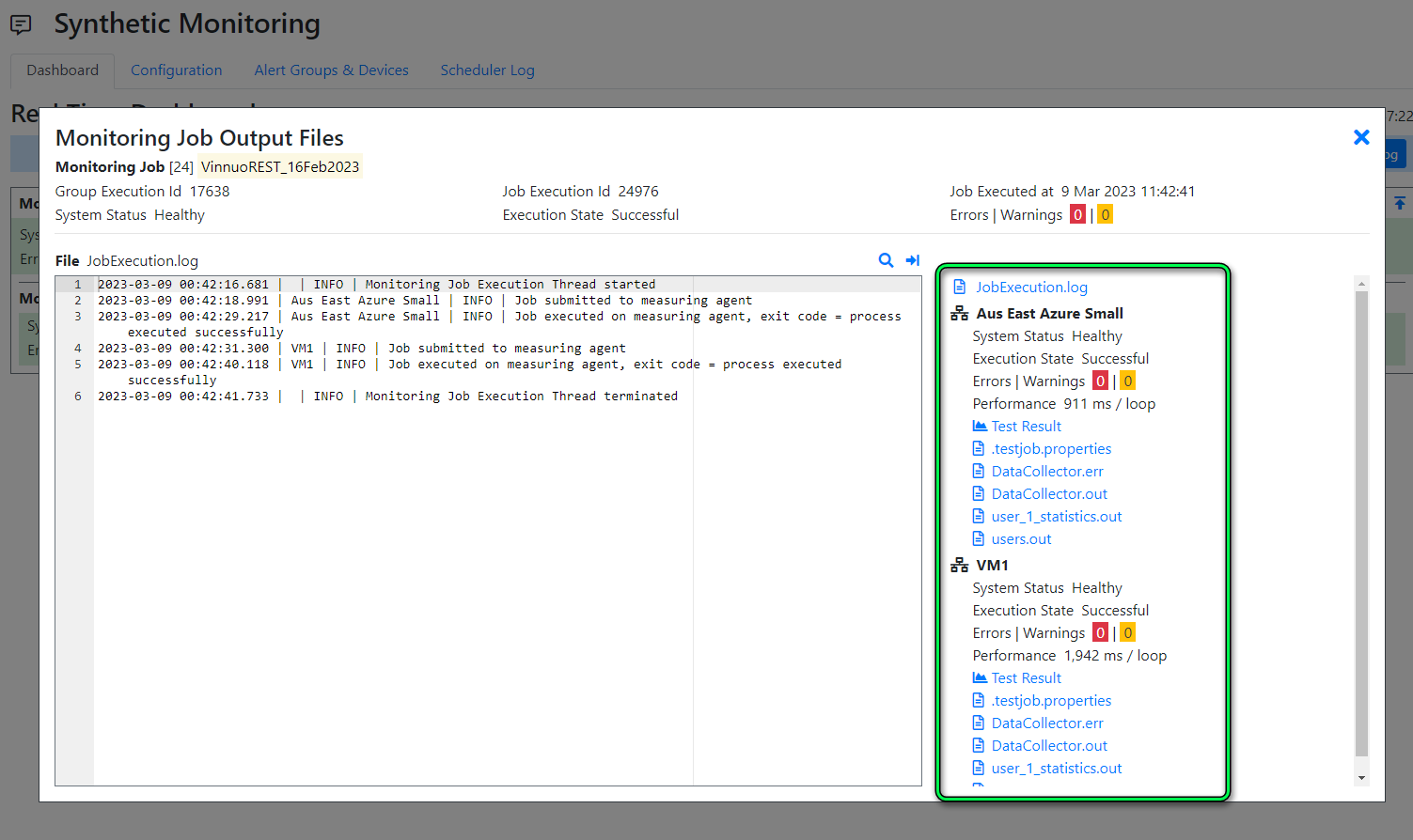

This screenshot shows the logs collected for the last job execution (green arrow). Note the additional log files (highlighted in the green box) that you can look at.

Interested?

Regardless of whether you’re looking for a Synthetic Monitoring or a Performance Testing solution, we can satisfy both needs.

Sign up for a free account by going to our portal at portal.realload.com and clicking “Sign up” (no credit card required). Then reach out to us at support@realload.com so that we can get you started with your first project.

Happy monitoring and load testing!

Support for SSL Cert Client authentication in Proxy Recorder

Do you need to record HTTP requests for your test script against a server that requires SSL client certificate authentication? Tick, we now support this use case…

How does it work…

The Proxy Recorder has been enhanced to support recording against websites or applications that require presenting a valid SSL Client certificate.

At a high level, this is how it works:

- 1 - SSL Client certificates are uploaded to the Real Load Portal. Each certificate is associated with a hostname (or IP address) of the target server, so that the Proxy Recorder knows when to present it.

- 2 - The Real Load Portal then shares the SSL Client certificates with the Proxy Recorder. Currently, Cloud Hosted proxy recorders are supported.

- 3 - The tester then executes the steps to be recorded and included in the test script.

- 4 - When the Proxy Recorder accesses a host that requires SSL Client authentication, it presents the relevant SSL Client certificate.
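Conceptually, steps 1 and 4 come down to a lookup table from target host to client certificate, plus a TLS context that presents that certificate. The sketch below illustrates the idea with standard JSSE classes only; it is not the Proxy Recorder’s actual implementation, and the class and method names are hypothetical. The lookup uses exact string matching to mirror the requirement that the configured host match the host in the HTTP requests.

```java
import java.io.IOException;
import java.io.InputStream;
import java.security.GeneralSecurityException;
import java.security.KeyStore;
import java.util.HashMap;
import java.util.Map;
import java.util.Optional;
import javax.net.ssl.KeyManagerFactory;
import javax.net.ssl.SSLContext;

// Hypothetical sketch: pick a client certificate by target host and build a TLS context presenting it.
final class ClientCertRegistry {

    // Target host (hostname or IP address) -> PKCS#12 keystore holding the client certificate and key
    private final Map<String, KeyStore> keyStoresByHost = new HashMap<>();
    private final Map<String, char[]> passwordsByHost = new HashMap<>();

    void register(String targetHost, InputStream p12, char[] password) throws IOException, GeneralSecurityException {
        KeyStore ks = KeyStore.getInstance("PKCS12");
        ks.load(p12, password);
        keyStoresByHost.put(targetHost, ks);
        passwordsByHost.put(targetHost, password.clone());
    }

    // Exact match only: "api.example.com" matches neither "www.example.com" nor "example.com"
    Optional<SSLContext> contextFor(String requestHost) throws GeneralSecurityException {
        KeyStore ks = keyStoresByHost.get(requestHost);
        if (ks == null) {
            return Optional.empty(); // no certificate configured: connect without client authentication
        }
        KeyManagerFactory kmf = KeyManagerFactory.getInstance(KeyManagerFactory.getDefaultAlgorithm());
        // PKCS#12 files normally protect the private key with the same password as the file
        kmf.init(ks, passwordsByHost.get(requestHost));
        SSLContext ctx = SSLContext.getInstance("TLS");
        // null trust managers and random source: use the JDK defaults
        ctx.init(kmf.getKeyManagers(), null, null);
        return Optional.of(ctx);
    }
}
```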

SSL Client Certificate Configuration

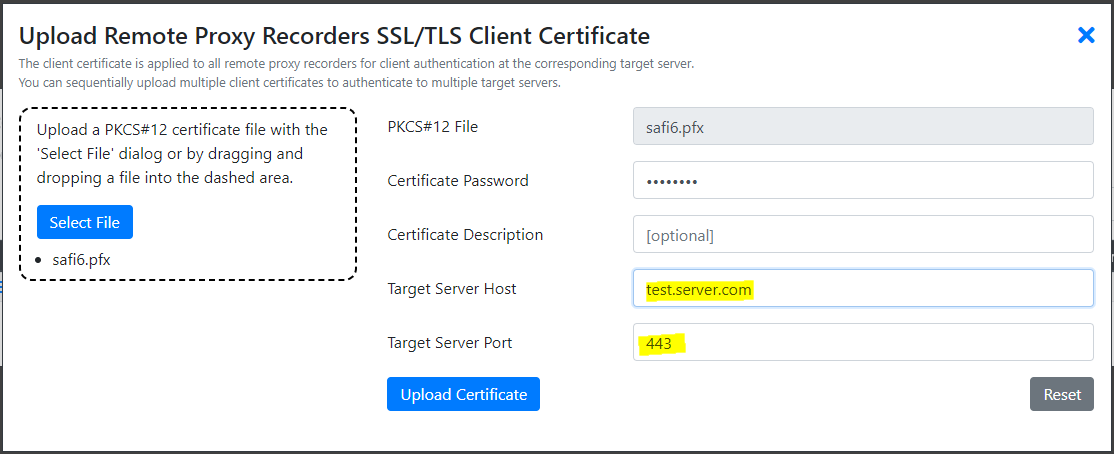

SSL Client certificates in the .pfx/.p12 format need to be uploaded to the Real Load Portal server.
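Before uploading, it can save a round trip to confirm locally that the .pfx/.p12 file opens with the password you plan to enter and actually contains a private key with its certificate. A minimal JDK-only check might look like this; the class name P12Inspector is hypothetical.

```java
import java.io.IOException;
import java.io.InputStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.security.GeneralSecurityException;
import java.security.KeyStore;
import java.security.cert.Certificate;
import java.security.cert.X509Certificate;
import java.util.ArrayList;
import java.util.Collections;
import java.util.List;

// Hypothetical pre-upload check: lists key entries in a PKCS#12 file with subject and expiry date.
final class P12Inspector {

    static List<String> describeKeyEntries(InputStream p12, char[] password) throws IOException, GeneralSecurityException {
        KeyStore ks = KeyStore.getInstance("PKCS12");
        ks.load(p12, password); // throws IOException if the password is wrong or the file is malformed
        List<String> result = new ArrayList<>();
        for (String alias : Collections.list(ks.aliases())) {
            if (!ks.isKeyEntry(alias)) {
                continue; // a client certificate needs a private key, so skip certificate-only entries
            }
            Certificate cert = ks.getCertificate(alias);
            if (cert instanceof X509Certificate x509) {
                result.add(alias + ": " + x509.getSubjectX500Principal().getName()
                        + ", valid until " + x509.getNotAfter());
            }
        }
        return result;
    }

    static List<String> describeKeyEntries(Path file, char[] password) throws IOException, GeneralSecurityException {
        try (InputStream in = Files.newInputStream(file)) {
            return describeKeyEntries(in, password);
        }
    }
}
```

An empty result means the file holds no usable client key, and an expiry date in the past explains a failed handshake before you even start recording.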

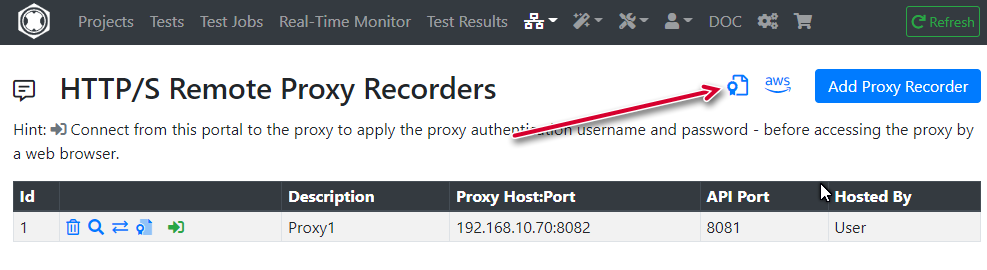

To configure SSL Client certificates in the Real Load Portal, go to the Remote Proxy Recorders menu item and click the certificate symbol:

Then provide details about the certificate you’re uploading. Importantly, the target server host must exactly match the hostname (or IP address) that will appear in the HTTP requests.

Done. Once the certificate is uploaded, use the Proxy Recorder to access a resource that requires SSL Client Cert authentication. You should now be able to access it.

Some SQL performance testing today?

Most performance testing scenarios involve an application or an API exposed over HTTP or HTTPS. However, the Real Load performance testing framework can support essentially any type of network application, as long as there is a way to generate valid client requests.

Real Load test scripts are Java-based applications that are executed by our platform. While our portal offers a wizard to easily create tests for the HTTP protocol, you can write a performance test application for any network protocol by implementing it as such a Java-based application.

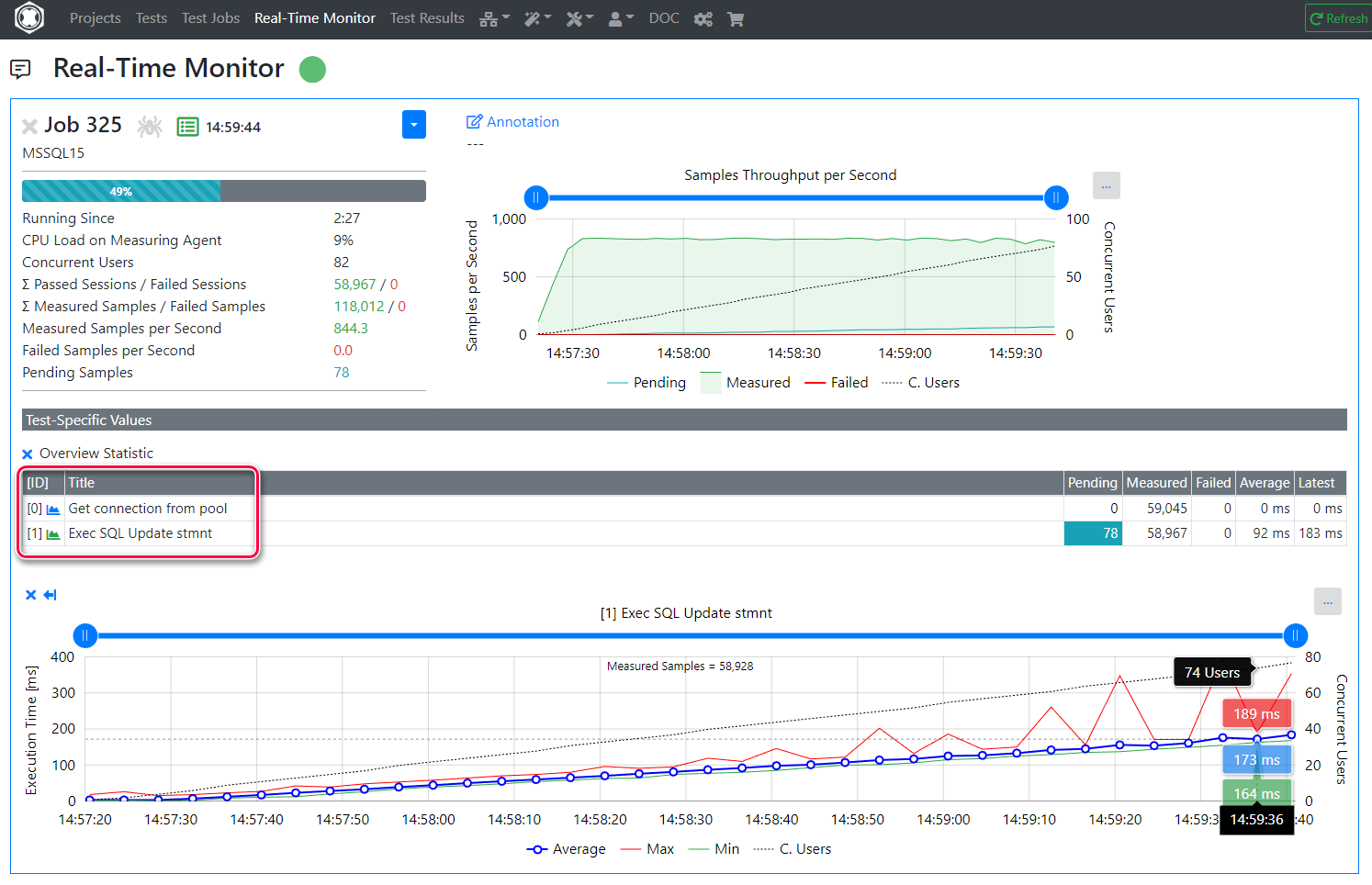

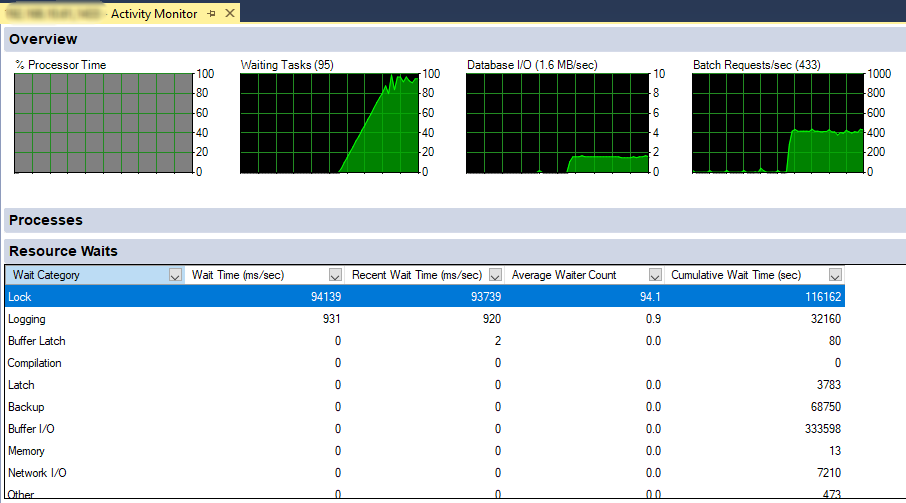

This article illustrates how to prepare a simple load test application for a non-HTTP application. I’ve chosen to performance test our lab MS-SQL server. What I want to find out is how the SQL server performs if multiple threads attempt to update data stored in the same row. While the test sounds academic, this is a scenario I’ve seen leading to performance issues in real life applications…

Requirements

Key requirements to implement such an application are:

- You’ll need Java client libraries (… and related dependencies) implementing the protocol you want to test. In this case I’ll use Microsoft’s JDBC driver and Hikari as the SQL connection pool manager.

- You’ll need to determine what logic your load test application should execute. In this example, I’ll run an update SQL statement.

- You’ll need to determine the metrics you want to measure during test execution. We’ll collect the time to obtain a connection from the pool and the time to execute the SQL operation.

- Make sure the Measuring Agent has network access to the service to be tested (… MS-SQL DB in this case).

- Finally, you’ll need some Java skills to put together the load testing application, or access to somebody who can do that for you.
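The two metrics listed above map onto two timestamps around a pooled JDBC call. This sketch uses only the standard java.sql/javax.sql interfaces, so it works with any DataSource, including a HikariDataSource; the class name, the table and column names in the example SQL, and the returned Timing record are hypothetical. In a real test script you would report the values through the AbstractJavaTest statistics calls (such as addSampleLong, shown in the plug-in example earlier) rather than return them.

```java
import java.sql.Connection;
import java.sql.PreparedStatement;
import java.sql.SQLException;
import javax.sql.DataSource;

// Hypothetical helper: times one UPDATE, split into pool acquisition time and execution time.
final class RowUpdateTimer {

    // Result of one measured iteration; times in milliseconds
    record Timing(long connectMillis, long executeMillis, int rowsUpdated) {}

    private final DataSource dataSource;

    RowUpdateTimer(DataSource dataSource) {
        this.dataSource = dataSource;
    }

    // Expects SQL with two placeholders, e.g. "UPDATE test_row SET val = ? WHERE id = ?"
    Timing updateSameRow(String sql, int rowId, String newValue) throws SQLException {
        long start = System.nanoTime();
        try (Connection conn = dataSource.getConnection()) {
            long connected = System.nanoTime(); // metric 1: time to obtain a connection from the pool
            try (PreparedStatement ps = conn.prepareStatement(sql)) {
                ps.setString(1, newValue);
                ps.setInt(2, rowId);
                int rows = ps.executeUpdate();
                long done = System.nanoTime(); // metric 2: time to execute the SQL operation
                return new Timing((connected - start) / 1_000_000, (done - connected) / 1_000_000, rows);
            }
        }
    }
}
```

Calling updateSameRow with the same rowId from many Virtual Users at once is what creates the row-level contention this article sets out to measure.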

Step 1 - Implement the test script as a Java application

Using your preferred Java development environment, create a project and add the following dependencies to it:

- DKFQSTools.jar - Required for all performance testing applications

- mssql-jdbc.jar (The MS-SQL JDBC driver)

- hikari-cp.jar (JDBC connection pooling)

- slf4j-api.jar (Required by Hikari)

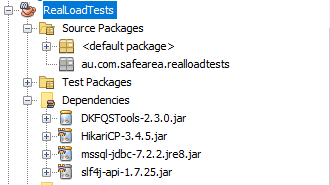

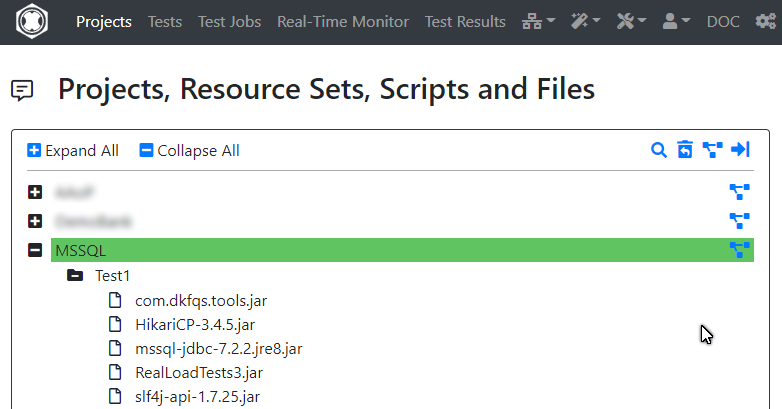

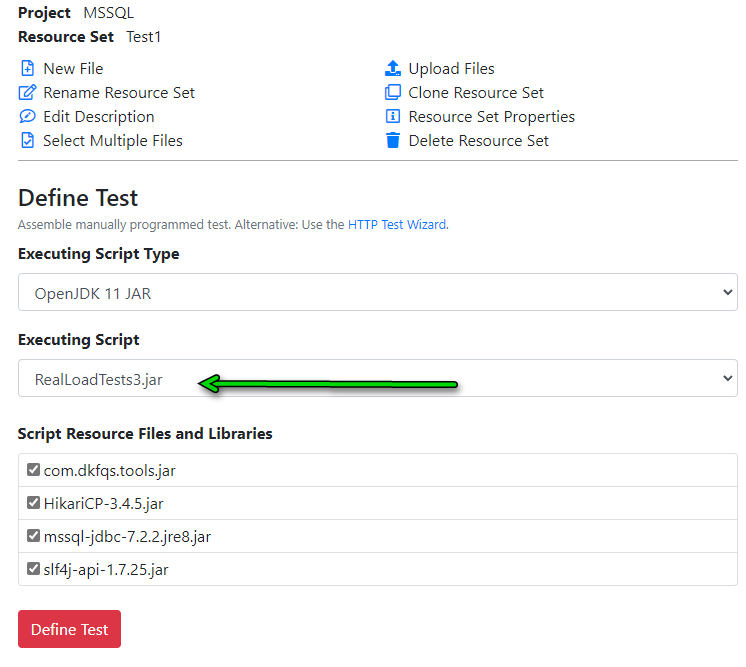

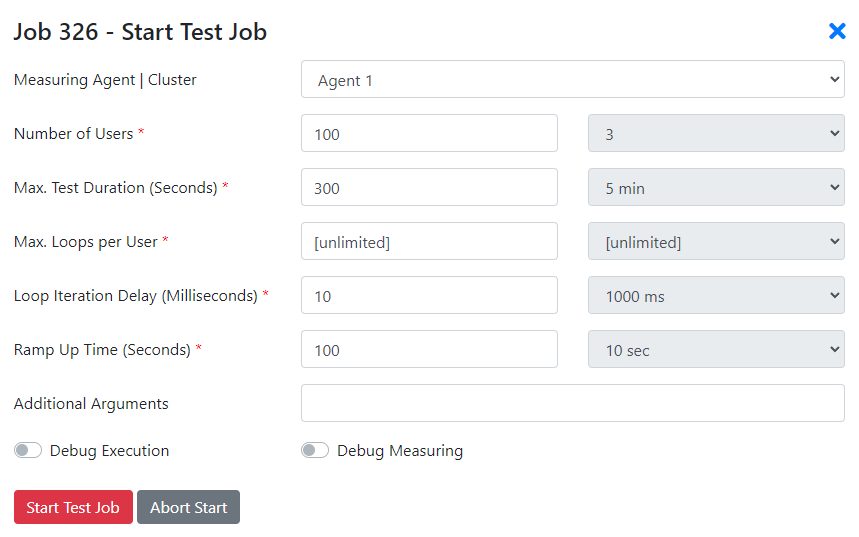

In NetBeans, the dependencies section would look as follows:

Once the dependencies are configured in your project, we’ll implement the test logic (the AbstractJavaTest interface). For this application, we’ll create the class below.